Review Velocity: The Local SEO Ranking Factor Most Businesses Ignore

Discover how review velocity — the rate at which your business acquires new reviews — impacts local search rankings. Includes optimal velocity benchmarks by industry, Google's recency and diversity weighting, data-driven strategies for maintaining consistent velocity, and tools for tracking your review acquisition rate.

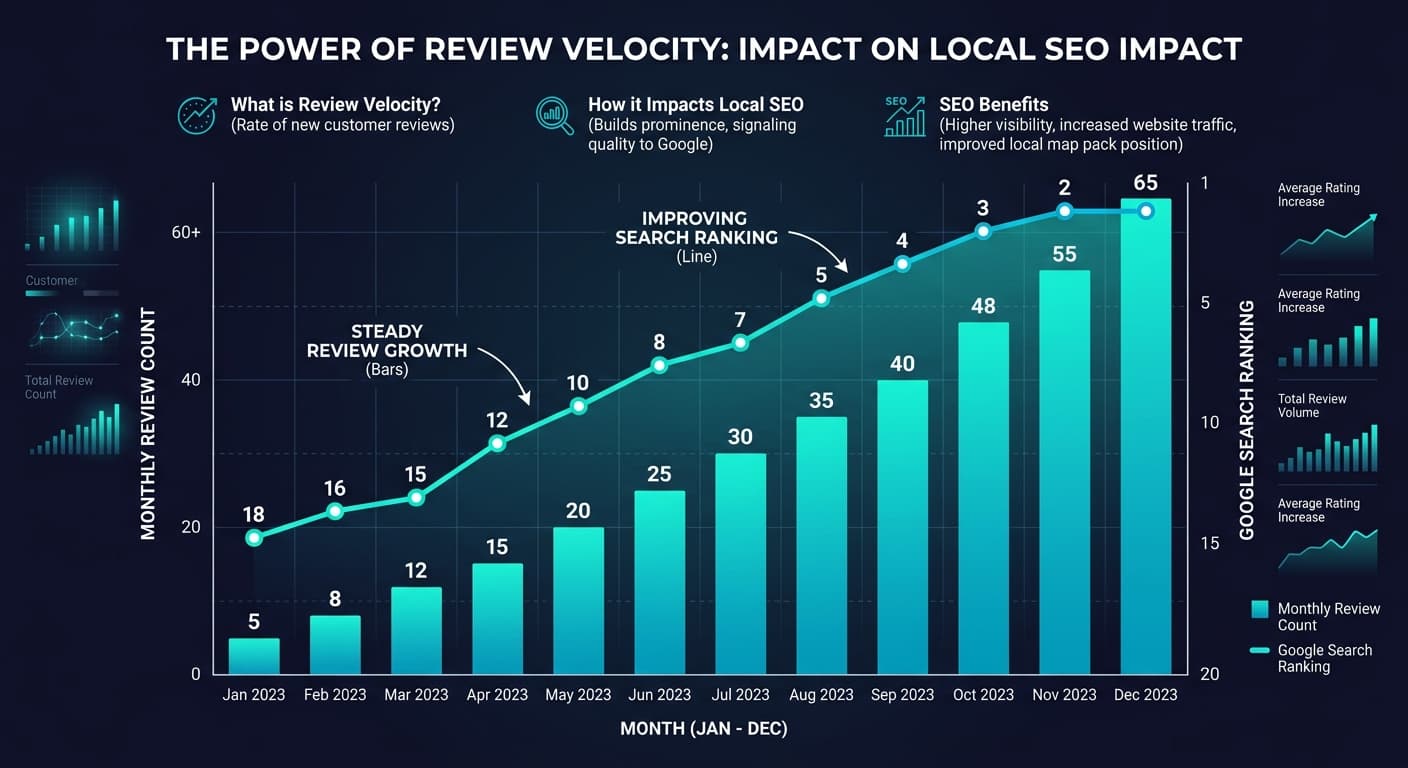

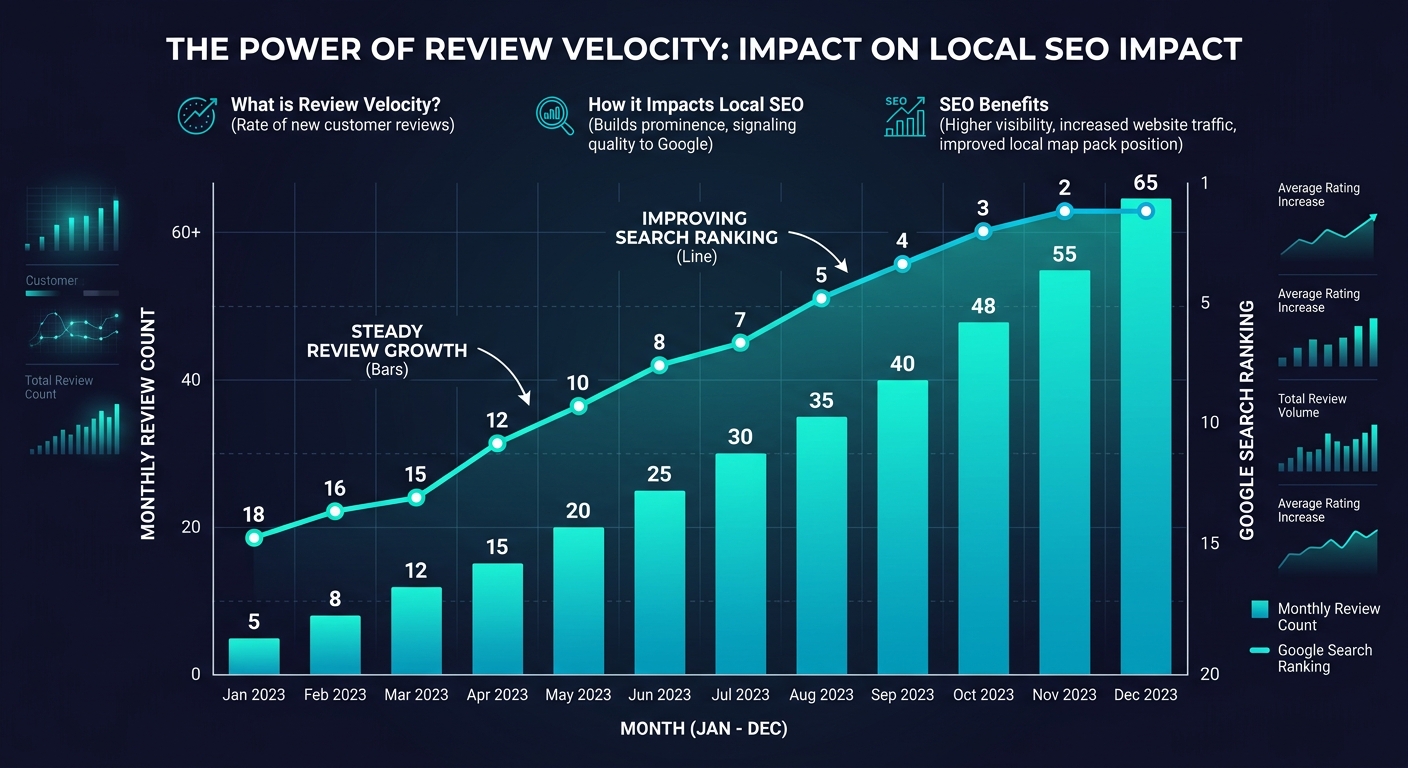

Most local businesses understand that Google reviews matter for search rankings. They know that more stars and more reviews generally correlate with higher visibility in the local pack. What most businesses do not understand — and what gives informed competitors a significant advantage — is that it is not just the total number of reviews that matters. It is the rate at which new reviews arrive.

This rate is called review velocity, and it is one of the most overlooked ranking signals in local SEO.

A business with 500 reviews that stopped receiving new ones six months ago will gradually lose ground to a competitor with 200 reviews that gains 15 new ones every month. Google's algorithm interprets review velocity as a signal of ongoing customer engagement and business relevance. A steady flow of new reviews tells Google that people are actively visiting, purchasing, and evaluating your business — that you are a living, breathing entity worthy of ranking, not a relic with stale social proof.

This article explains what review velocity is, how Google uses it alongside recency and diversity signals, what optimal velocity looks like by industry, and how to build systems that maintain consistent review flow without crossing ethical or platform policy lines.

What Is Review Velocity?

Review velocity is the rate at which a business receives new reviews over a defined time period. It is typically measured as reviews per month or reviews per week, though the exact cadence depends on the industry and business volume.

The formula is straightforward:

Review Velocity = New Reviews / Time Period

A restaurant that received 45 new Google reviews in the past 30 days has a velocity of 45 reviews per month or approximately 1.5 reviews per day. A law firm that received 6 new reviews in the same period has a velocity of 6 per month or roughly 1.4 per week.
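The arithmetic above can be sketched in a few lines of Python. The function name and the 30-day month are illustrative assumptions, not part of any particular tool.

```python
def review_velocity(new_reviews: int, period_days: int) -> dict:
    """Express review velocity per day, per week, and per 30-day month."""
    if period_days <= 0:
        raise ValueError("period_days must be positive")
    per_day = new_reviews / period_days
    return {
        "per_day": round(per_day, 2),
        "per_week": round(per_day * 7, 2),
        "per_month": round(per_day * 30, 2),
    }


# The two examples from the paragraph above.
restaurant = review_velocity(45, 30)
law_firm = review_velocity(6, 30)
print(restaurant)  # {'per_day': 1.5, 'per_week': 10.5, 'per_month': 45.0}
print(law_firm)    # {'per_day': 0.2, 'per_week': 1.4, 'per_month': 6.0}
```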

Raw velocity numbers are meaningless without context. What matters is your velocity relative to your competitors and relative to what Google expects for your business category and market.

Velocity vs. Volume vs. Recency

Volume, velocity, and recency are related but distinct metrics, and each influences local rankings differently. The table below also lists review diversity, discussed later in this article, for comparison:

| Metric | Definition | Ranking Impact | Half-Life |
|---|---|---|---|
| Review Volume | Total number of reviews across all time | Moderate: establishes baseline credibility | None (cumulative) |
| Review Velocity | Rate of new review acquisition | High: signals ongoing relevance | 60-90 days |
| Review Recency | Age of the most recent reviews | High: freshness signal | 30-60 days |
| Review Diversity | Distribution of ratings and reviewer types | Moderate: authenticity signal | None (cumulative) |

Volume is the foundation. You need a minimum number of reviews (typically 10-20 on Google) to enter the ranking conversation at all. But once you cross that threshold, velocity and recency become more influential than raw count.

"A business with 50 reviews gaining 10 per month will outrank a business with 500 reviews gaining zero per month — not immediately, but within three to six months. Google's algorithm rewards momentum, not history."

How Google Uses Review Velocity

Google does not publish its exact ranking algorithm, but years of local SEO research, patent analysis, and controlled experiments have established how review signals contribute to local pack rankings. Here is what we know.

The Freshness Signal

Google weights recent reviews more heavily than old ones. A review posted yesterday contributes more to your ranking than a review posted two years ago. This is the recency signal, and review velocity is the mechanism that keeps your recency score high.

Think of it this way: every review begins depreciating in ranking value from the moment it is posted. The depreciation is not linear — a one-month-old review is nearly as valuable as a one-day-old review, but a twelve-month-old review has lost most of its ranking contribution. Consistent velocity ensures you always have fresh reviews offsetting the depreciation of older ones.

The Engagement Signal

Review velocity also functions as a proxy for business activity. A business that consistently generates reviews is one that consistently serves customers. This engagement signal is particularly important in competitive local markets where multiple businesses have similar star ratings and similar review volumes.

Google's local ranking algorithm considers three primary factors: relevance, distance, and prominence. Review velocity contributes to prominence — the algorithm's assessment of how well-known and well-regarded a business is. A steady stream of reviews is one of the strongest prominence signals available.

The Authenticity Filter

Paradoxically, extremely high velocity spikes can hurt rather than help. If a business that typically receives 5 reviews per month suddenly gets 50 reviews in a single week, Google's review fraud detection systems flag this pattern. It suggests review solicitation campaigns, incentivized reviews, or purchased reviews.

The ideal velocity pattern is consistent and sustainable. A business that goes from 5 reviews per month to 8, then 10, then 12 over several months sends a healthy growth signal. A business that jumps from 5 to 50 and back to 5 sends an alarm.
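To see how your own review history might look to an automated check, you can scan your monthly counts for spikes. The sketch below is only a self-audit aid: the 3-month window and the 3x ratio are assumed thresholds chosen for illustration, and Google's actual detection logic is not public.

```python
from statistics import mean


def flag_spikes(monthly_counts, window=3, ratio=3.0):
    """Return indexes of months whose review count exceeds `ratio` times
    the average of the preceding `window` months (illustrative thresholds)."""
    flagged = []
    for i in range(window, len(monthly_counts)):
        baseline = mean(monthly_counts[i - window:i])
        if baseline > 0 and monthly_counts[i] > ratio * baseline:
            flagged.append(i)
    return flagged


steady_growth = [5, 5, 8, 10, 12, 14]   # gradual increase
campaign_spike = [5, 5, 5, 50, 5, 5]    # jump from 5 to 50 and back

print(flag_spikes(steady_growth))   # []
print(flag_spikes(campaign_spike))  # [3]
```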

Optimal Review Velocity by Industry

Velocity benchmarks vary dramatically by industry because customer volume, review motivation, and competitive density differ. Here are research-backed velocity targets for major categories.

| Industry | New Reviews per Month (Small Market) | New Reviews per Month (Large Market) | Competitive Threshold |
|---|---|---|---|
| Restaurants | 8-15 | 25-60 | Top quartile in category |
| Medical/Dental | 4-8 | 10-20 | 2x nearest competitor |
| Legal Services | 2-5 | 5-12 | Any consistent velocity |
| Home Services (Plumbing, HVAC) | 3-8 | 10-25 | 1.5x nearest competitor |
| Retail (Single Location) | 5-12 | 15-40 | Match category leaders |
| Auto Dealerships | 10-20 | 30-80 | Top 3 in market |
| Hotels/Hospitality | 15-30 | 40-100+ | Match star-tier competitors |
| Real Estate Agents | 2-4 | 4-10 | Any consistent velocity |
| Insurance Agencies | 2-5 | 5-15 | 1.5x nearest competitor |
| SaaS/Software | 3-8 | 10-30 | Match category on G2/Capterra |

These are targets, not requirements. The most important factor is not hitting a specific number but maintaining consistency and exceeding your nearest competitors.

"The business that wins local SEO is not the one with the most reviews or the highest rating — it is the one that most recently demonstrated active customer engagement. Review velocity is the measure of that engagement."

For a deeper analysis of how review count specifically influences ranking position, see our guide on how many reviews you need to rank on Google.

The Recency Weighting Effect

Recency is velocity's sibling signal, and understanding how they interact is essential for strategy.

Google applies a time-decay function to review contributions. While the exact curve is not public, research suggests the following approximate weighting:

| Review Age | Approximate Ranking Weight | Status |
|---|---|---|
| 0-30 days | 100% | Full contribution |
| 31-60 days | 85-90% | Near full |
| 61-90 days | 65-75% | Diminishing |
| 91-180 days | 40-55% | Significant decay |
| 181-365 days | 20-35% | Minimal contribution |
| Over 365 days | 5-15% | Background signal only |

This decay curve means that a business relying on a burst of reviews from a campaign six months ago is operating on fumes. The reviews still exist and still contribute to total count and overall rating, but their ranking contribution has decayed by 50-80%.

The practical implication is clear: you need a system that generates reviews every month, not a campaign that generates reviews once a quarter.
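To make the effect of the decay table concrete, here is a minimal sketch that turns the approximate weights into a "weighted review count". It assumes the midpoint of each range in the table; the band boundaries and the function names are illustrative, and the real curve is not public.

```python
# (upper bound on review age in days, assumed weight = midpoint of the table range)
DECAY_BANDS = [
    (30, 1.00),
    (60, 0.875),
    (90, 0.70),
    (180, 0.475),
    (365, 0.275),
]
OLDEST_WEIGHT = 0.10  # midpoint of the 5-15% band for reviews older than a year


def review_weight(age_days):
    """Look up the assumed ranking weight for a review of the given age."""
    for max_age, weight in DECAY_BANDS:
        if age_days <= max_age:
            return weight
    return OLDEST_WEIGHT


def weighted_review_count(ages_days):
    """Sum the assumed weights across a list of review ages."""
    return round(sum(review_weight(age) for age in ages_days), 2)


# 40 reviews from a single campaign six months ago...
campaign_burst = [180] * 40
# ...versus 40 reviews arriving at 10 per month over the last four months.
steady_flow = [15] * 10 + [45] * 10 + [75] * 10 + [105] * 10

print(weighted_review_count(campaign_burst))  # 19.0
print(weighted_review_count(steady_flow))     # 30.5
```

Under these assumed weights, the same 40 reviews contribute roughly 60% more when they arrive steadily than when they arrive in one burst six months earlier.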

What Happens When Velocity Drops to Zero

When a business stops generating new reviews entirely, the effects compound over time:

Month 1-2: Minimal impact. Recent reviews still carry weight and overall volume sustains ranking.

Month 3-4: Noticeable decline. Competitors with active velocity begin overtaking in the local pack, especially for competitive queries.

Month 5-6: Significant impact. The business may drop out of the local 3-pack for primary keywords. Review freshness score is critically low.

Month 7+: Severe impact. Even strong historical review profiles cannot compensate for zero velocity. The business becomes functionally invisible in local search for competitive queries.

This timeline varies by market competitiveness. In a small town with few competitors, zero velocity might not hurt for months. In a major metro with dozens of active competitors, the decline accelerates.

The Diversity Factor

Review velocity interacts with two additional diversity signals that Google evaluates:

Rating Distribution Diversity

A business where every review is 5 stars looks suspicious. A natural rating distribution includes some 4-star, some 3-star, and occasionally lower ratings. Google's systems recognize that perfect scores at high velocity are a red flag for review manipulation.

The ideal distribution for a well-run business looks approximately like this: 65-75% five-star, 15-20% four-star, 5-10% three-star, and 3-5% one-to-two-star. Businesses that aggressively solicit only satisfied customers may inadvertently create an unnaturally perfect distribution that triggers algorithmic suspicion.
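A quick way to compare your own profile against these approximate ranges is sketched below. The bucket names and the "below / within / above" labels are assumptions made for this example, and the ranges are the rough guidelines from the paragraph above rather than any published Google threshold.

```python
# Approximate healthy share ranges quoted above, as fractions of all reviews.
HEALTHY_RANGES = {
    "5_star": (0.65, 0.75),
    "4_star": (0.15, 0.20),
    "3_star": (0.05, 0.10),
    "1_2_star": (0.03, 0.05),
}


def distribution_report(star_counts):
    """star_counts maps a star rating (1-5) to the number of reviews with it."""
    total = sum(star_counts.values())
    if total == 0:
        raise ValueError("no reviews to analyze")
    shares = {
        "5_star": star_counts.get(5, 0) / total,
        "4_star": star_counts.get(4, 0) / total,
        "3_star": star_counts.get(3, 0) / total,
        "1_2_star": (star_counts.get(1, 0) + star_counts.get(2, 0)) / total,
    }
    report = {}
    for bucket, (low, high) in HEALTHY_RANGES.items():
        share = shares[bucket]
        if share < low:
            status = "below"
        elif share > high:
            status = "above"
        else:
            status = "within"
        report[bucket] = (round(share, 3), status)
    return report


# A profile that is almost entirely five-star.
report = distribution_report({5: 96, 4: 3, 3: 1, 2: 0, 1: 0})
print(report["5_star"])  # (0.96, 'above')
print(report["4_star"])  # (0.03, 'below')
```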

Reviewer Profile Diversity

Google evaluates whether your reviewers look like real, diverse customers or like a coordinated group. Signals that raise flags include: reviewers who all joined Google recently, reviewers who have only reviewed your business (one-review accounts), reviewers who all posted from the same geographic location or IP range, and reviewers who use similar language patterns.

Healthy reviewer diversity means your reviews come from accounts with varied review histories, different geographic signals, and natural language variation. This happens automatically when reviews come from genuine customers — it only becomes a problem when reviews are solicited from a narrow group or purchased from review farms.

Strategies for Maintaining Consistent Velocity

Building sustainable review velocity requires systems, not campaigns. Here are strategies organized by effort and impact.

Automated Post-Transaction Requests

The most effective velocity driver is a systematized ask. After every transaction — sale, service call, appointment, delivery — trigger an automated review request via email or SMS. The timing matters: send the request within 24-48 hours while the experience is fresh.

Conversion benchmarks: Email review requests convert at 5-10%. SMS review requests convert at 12-18%. In-person requests (backed by a follow-up email) convert at 25-40%.
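These conversion ranges let you work backward from a target velocity to the number of requests you need to send. The sketch below assumes the quoted ranges hold for your business; the channel keys and function name are illustrative.

```python
import math

# Conversion ranges quoted above, in whole percent: (low, high).
CONVERSION_PCT = {
    "email": (5, 10),
    "sms": (12, 18),
    "in_person": (25, 40),
}


def requests_needed(target_reviews, channel):
    """Return (best_case, worst_case) request counts for a monthly review target."""
    low_pct, high_pct = CONVERSION_PCT[channel]
    best_case = math.ceil(target_reviews * 100 / high_pct)
    worst_case = math.ceil(target_reviews * 100 / low_pct)
    return best_case, worst_case


# Requests needed to earn 10 new reviews per month on each channel.
print(requests_needed(10, "email"))      # (100, 200)
print(requests_needed(10, "sms"))        # (56, 84)
print(requests_needed(10, "in_person"))  # (25, 40)
```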

For a detailed guide on asking for reviews effectively, see our tutorial on how to ask customers for reviews without being annoying.

The Drip Approach

Instead of emailing your entire customer list at once (which creates a velocity spike), drip review requests over time. Send 10-15 requests per day rather than 200 in a single batch. This creates a natural, consistent velocity pattern that mirrors organic review behavior.
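A drip schedule can be as simple as assigning each customer a send date with a daily cap. The sketch below is a minimal illustration: the customer identifiers, start date, and the cap of 12 per day are made-up values, and actually sending the email or SMS is left to whatever tool you use.

```python
from datetime import date, timedelta


def drip_schedule(customers, start, per_day=12):
    """Map each send date to the customers contacted that day, capped at `per_day`."""
    schedule = {}
    for i, customer in enumerate(customers):
        send_on = start + timedelta(days=i // per_day)
        schedule.setdefault(send_on, []).append(customer)
    return schedule


# 200 hypothetical customers spread out at 12 requests per day.
customers = [f"customer-{n}" for n in range(200)]
plan = drip_schedule(customers, date(2026, 1, 5), per_day=12)

print(len(plan))                            # 17 sending days
print(max(len(v) for v in plan.values()))   # 12
```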

QR Codes at Point of Experience

For brick-and-mortar businesses, place QR codes at the point of maximum satisfaction — on receipts, on service completion forms, on table tents, near the exit. The QR code should link directly to your Google review page, reducing friction to a single scan and tap.

Employee Training

Train every customer-facing employee to make a natural review request at the conclusion of a positive interaction. "We really appreciate your business — if you have a minute, a Google review would mean a lot to us." This verbal prompt, combined with a follow-up email containing the direct review link, is the highest-converting review generation method.

Review Monitoring and Response

Responding to reviews — both positive and negative — has been shown to increase subsequent review velocity by 12-20%. When potential reviewers see that a business reads and responds to reviews, they are more likely to leave one themselves. It signals that their feedback will be heard.

For data on how response rates influence overall ratings and review volume, see our guide on the impact of review response rate on ratings.

Tracking Your Review Velocity

You cannot optimize what you do not measure. Here is how to track velocity systematically.

Manual Tracking

At minimum, record your total Google review count on the first of every month. Calculate the difference to determine your monthly velocity. Track this on a simple spreadsheet alongside your primary competitors.
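If you keep those first-of-month totals in a list, the monthly velocity is just the difference between consecutive entries. The totals below are invented for illustration.

```python
def monthly_velocity(totals):
    """Given first-of-month cumulative review counts, return new reviews per month."""
    return [later - earlier for earlier, later in zip(totals, totals[1:])]


# Hypothetical totals recorded on the first of five consecutive months.
totals = [212, 220, 231, 239, 239]
print(monthly_velocity(totals))  # [8, 11, 8, 0]
```

A negative value would mean the total went down, which can happen if reviews are removed or filtered, so it is worth checking rather than treating as an error.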

Automated Monitoring

Tools like Sentimyne track review velocity automatically across multiple platforms, alerting you when velocity drops below your target or when competitors accelerate their acquisition rate. The free tier supports 2 analyses per month — enough to establish your velocity baseline and competitive benchmarks. The Pro plan at $29/month provides ongoing velocity tracking, and the Team plan at $49/month supports multi-location velocity monitoring for businesses with multiple Google Business Profiles.

Key Velocity KPIs

Track these metrics monthly:

- Absolute velocity: Raw number of new reviews per month

- Relative velocity: Your velocity compared to your top 3 competitors

- Velocity trend: Is your velocity increasing, stable, or declining over the past 3-6 months?

- Velocity by platform: Are you gaining reviews where it matters most (Google) or only on secondary platforms?

- Velocity by rating: Are new reviews maintaining or improving your average star rating?
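Two of these KPIs, relative velocity and velocity trend, are easy to compute from monthly counts. The sketch below uses assumed definitions: relative velocity as your velocity divided by the competitors' average, and trend as the change between the last three months and the three before, with a 10% tolerance band for "stable".

```python
from statistics import mean


def relative_velocity(own, competitors):
    """Own monthly velocity divided by the average competitor velocity."""
    return own / mean(competitors)


def velocity_trend(history, tolerance=0.10):
    """Classify the trend by comparing the last 3 months to the 3 before them."""
    if len(history) < 6:
        raise ValueError("need at least 6 months of history")
    recent = mean(history[-3:])
    prior = mean(history[-6:-3])
    if prior == 0:
        return "increasing" if recent > 0 else "stable"
    change = (recent - prior) / prior
    if change > tolerance:
        return "increasing"
    if change < -tolerance:
        return "declining"
    return "stable"


# 10 reviews per month against three competitors at 20 each.
print(relative_velocity(10, [20, 20, 20]))      # 0.5
print(velocity_trend([8, 9, 8, 10, 12, 13]))    # increasing
print(velocity_trend([12, 12, 11, 8, 7, 6]))    # declining
```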

For a comprehensive approach to building review tracking dashboards, see our guide on how to build a review monitoring dashboard.

Common Velocity Mistakes

Mistake 1: The Campaign Spike

Running a review generation campaign that produces 100 reviews in two weeks, then nothing for three months. This creates an unnatural velocity pattern that may trigger Google's fraud filters and provides no long-term ranking benefit.

Mistake 2: Incentivizing Only Happy Customers

Selectively soliciting reviews from customers you know are satisfied. This inflates your velocity but creates an unnaturally positive rating distribution. It also violates FTC guidelines if the solicitation is contingent on a positive review. For a full breakdown of the regulatory landscape, see our guide to the FTC fake review rules for 2026.

Mistake 3: Ignoring Non-Google Platforms

Focusing all velocity efforts on Google while ignoring Yelp, Facebook, and industry-specific platforms. While Google is the most important platform for local SEO, review diversity across platforms contributes to overall online prominence.

Mistake 4: Not Tracking Competitor Velocity

Knowing your own velocity is only half the picture. If your velocity is 10 per month but your top three competitors each maintain 20, you are losing ground despite consistent effort. Competitive velocity benchmarking is essential for setting realistic targets.

Frequently Asked Questions

What is a good review velocity for a local business?

A good review velocity depends on your industry, market size, and competitive landscape. As a general benchmark, small-market businesses should aim for 5-15 new Google reviews per month, while large-market businesses in competitive categories like restaurants and auto dealerships may need 25-80+ per month to maintain ranking competitiveness. The most important benchmark is relative: your velocity should equal or exceed your nearest direct competitors. Track the top three ranked businesses in your category on Google Maps and aim to match or exceed their monthly review acquisition rate.

Does Google penalize businesses for getting too many reviews too quickly?

Google does not explicitly penalize high review velocity, but its review fraud detection systems flag unnatural velocity patterns. A sudden spike — such as going from 5 reviews per month to 80 in a single week — triggers algorithmic scrutiny that may result in reviews being filtered, held for manual review, or removed. The key is consistency. A gradual, steady increase in velocity (for example, growing from 8 to 12 to 15 per month over a quarter) is interpreted as healthy business growth. A spike followed by a drop is interpreted as a campaign or manipulation.

How does review velocity compare to star rating in local ranking importance?

Both matter, but they serve different ranking functions. Star rating influences click-through rate and conversion (consumers choose higher-rated businesses), while velocity influences ranking position (Google places businesses with active review flow higher in the local pack). In practice, a 4.2-star business with strong velocity will often outrank a 4.8-star business with zero recent reviews. The ideal strategy optimizes both simultaneously — maintaining consistent velocity while ensuring the customer experience generates naturally high ratings. Neither factor works in isolation.

Can responding to reviews improve review velocity?

Yes, research consistently shows that businesses that respond to reviews receive 12-20% more reviews than businesses that do not respond. The mechanism is social proof: when potential reviewers see that a business reads and responds to feedback, they perceive their own review as more likely to be read and valued, which increases their motivation to leave one. Responding also signals to Google that the business is actively managing its online presence, which contributes to the prominence factor in local ranking calculations.

How long does it take for improved review velocity to impact local search rankings?

Most local SEO practitioners observe measurable ranking improvements within 60-90 days of establishing consistent review velocity, assuming the velocity represents a meaningful improvement over the prior baseline and exceeds competitor velocity. Google's local algorithm refreshes more frequently than its organic algorithm, so changes in review signals are typically reflected within weeks. However, moving from no velocity to moderate velocity produces faster results than moving from moderate to high velocity. The first reviews in a drought period have the highest marginal ranking impact.
