Review Analysis for Procurement: Evaluating Vendors Using G2, Capterra & TrustRadius Data

Procurement teams spend weeks evaluating vendors through RFPs and demos — while thousands of unfiltered customer reviews sit on G2, Capterra, and TrustRadius. Learn how to systematically mine review platforms for vendor intelligence that RFP responses will never reveal.

Every vendor looks good in an RFP response. They have dedicated teams whose sole job is making their product sound like the exact solution to your requirements. Demos are rehearsed. Case studies are cherry-picked. References are hand-selected.

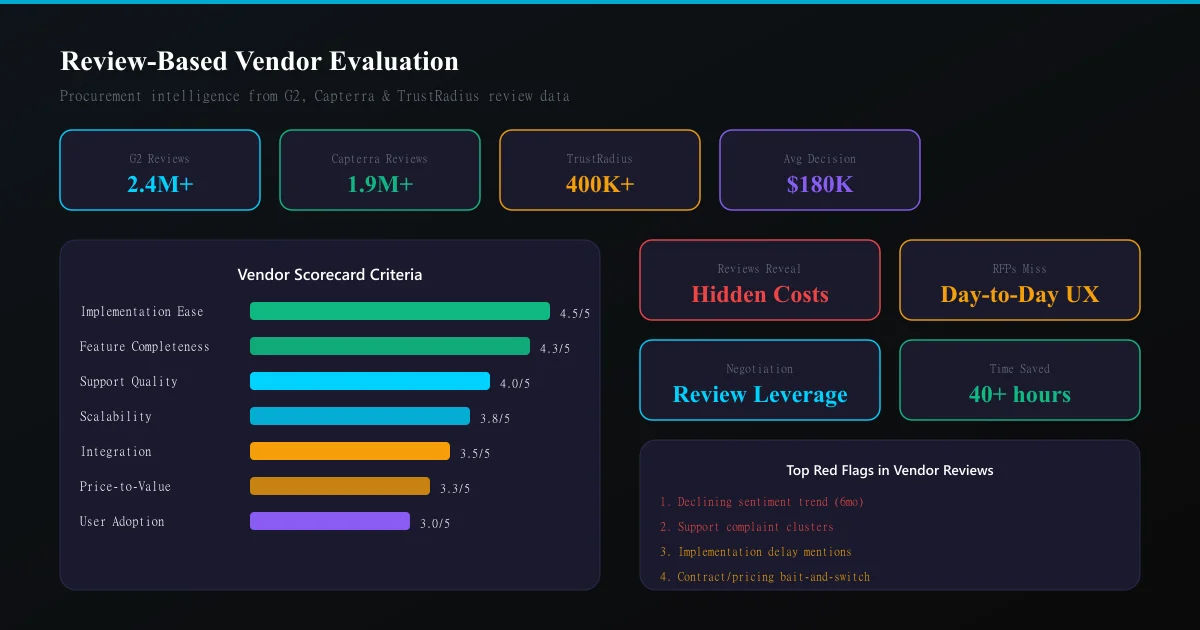

Meanwhile, G2 has 2.4 million verified reviews from real users. Capterra has 1.9 million. TrustRadius has 400K+. These are unfiltered, often brutally honest accounts from organisations that already made the decision you are about to make.

Procurement teams that ignore this data are making six- and seven-figure decisions with needlessly incomplete information.

Why traditional procurement misses what reviews reveal

The RFP process answers: "Can this vendor do what we need?" Reviews answer a fundamentally different question: "What is it actually like to use this product after the sales team disappears?"

Specifically, reviews reveal:

- Implementation reality — timelines, hidden costs, resource requirements that never appeared in the SOW

- Support quality over time — not the white-glove onboarding, but month-12 ticket response times

- Product stability — outage frequency, bug severity, update disruption

- Vendor responsiveness — how they handle feature requests, pricing changes, contract renewals

- Organisational fit — which company sizes and industries genuinely succeed vs. struggle

None of this appears in an RFP response. All of it appears in reviews.

The procurement review analysis framework

Platform selection by vendor type

| Vendor category | Primary platform | Secondary platform | Why |
|---|---|---|---|
| Enterprise SaaS | G2 | TrustRadius | Verified enterprise reviewers, detailed pros/cons |
| SMB software | Capterra | G2 | Higher volume, small-team perspectives |
| IT infrastructure | TrustRadius | Gartner Peer Insights | Technical depth, architecture details |
| Marketing tools | G2 | Capterra | Marketer-heavy reviewer base |
| HR/People tech | G2 | TrustRadius | Enterprise HR buyer presence |

The SWOT extraction method for vendor evaluation

Apply a modified SWOT analysis framework specifically designed for procurement:

Strengths (from positive reviews):

- What do enthusiastic users consistently praise?

- Which features get described as "game-changing" or "couldn't live without"?

- Where does the product clearly outperform alternatives?

Weaknesses (from negative reviews):

- What recurring complaints appear across multiple reviewers?

- Which features are described as "clunky," "broken," or "needs improvement"?

- What limitations do reviewers discover only after purchase?

Opportunities (from feature requests and comparisons):

- What roadmap items are reviewers requesting?

- Where do reviewers say they supplement with other tools?

- Which use cases are "almost" served but not quite?

Threats (from churn indicators):

- Why do reviewers say they are considering switching?

- What competitive products are mentioned as alternatives?

- Which recent changes have generated backlash?
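A first pass at this sorting can be done with simple cue phrases. The sketch below is deliberately naive: it buckets sentences by substring match, using the strength and weakness phrases quoted above. The opportunity and threat cues, and the names `SWOT_CUES` and `bucket_sentences`, are assumptions made for illustration, so treat the output as a reading aid rather than a finished analysis.

```python
# Cue phrases per SWOT bucket. Strength and weakness cues come from the
# questions above; opportunity and threat cues are illustrative assumptions.
SWOT_CUES = {
    "strengths": ("game-changing", "couldn't live without"),
    "weaknesses": ("clunky", "broken", "needs improvement"),
    "opportunities": ("wish it", "would be nice", "we supplement"),
    "threats": ("considering switching", "alternative"),
}


def bucket_sentences(review_text):
    """Sort a review's sentences into SWOT buckets by naive substring match."""
    buckets = {name: [] for name in SWOT_CUES}
    for sentence in review_text.split("."):
        sentence = sentence.strip()
        if not sentence:
            continue
        lowered = sentence.lower()
        for name, cues in SWOT_CUES.items():
            if any(cue in lowered for cue in cues):
                buckets[name].append(sentence)
    return buckets
```

Substring matching will miss paraphrases and misread negations ("not clunky at all"), so a human still needs to read the sentences each bucket collects.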

Building a vendor scorecard from review data

Step 1: Define your evaluation criteria

Before touching review data, establish 6-8 criteria that matter for your specific purchase:

- Implementation ease (time-to-value)

- Feature completeness for your use case

- Support quality and responsiveness

- Scalability and performance

- Integration capabilities

- Price-to-value ratio

- Vendor stability and roadmap

- User adoption and training curve

Step 2: Filter reviews by relevance

Not all reviews are equally relevant to your situation. Filter for:

- Company size match — G2 and TrustRadius let you filter by reviewer company size. A 50-person company's experience is irrelevant if you are a 5,000-person enterprise

- Industry relevance — some products serve certain verticals better than others

- Recency — prioritise reviews from the last 12 months. Software evolves rapidly

- Verified status — stick to verified reviews to avoid fake review contamination

- Review depth — short "great product!" reviews add no procurement value. Filter for 200+ word reviews
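If you have the reviews in a spreadsheet or export, the five filters above translate into a single predicate. This is a minimal sketch: the `Review` record and its field names are hypothetical, and real platform exports will label these fields differently.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Review:
    # Hypothetical record shape; adapt the fields to your actual export.
    text: str
    posted: date
    verified: bool
    company_size: int  # reviewer's employee count
    industry: str


def is_relevant(review, today, size_range, industries,
                max_age_days=365, min_words=200):
    """Apply the five relevance filters to one review."""
    low, high = size_range
    return (
        low <= review.company_size <= high                         # company size match
        and review.industry in industries                          # industry relevance
        and today - review.posted <= timedelta(days=max_age_days)  # recency
        and review.verified                                        # verified status
        and len(review.text.split()) >= min_words                  # review depth
    )


def filter_reviews(reviews, **criteria):
    """Keep only the reviews that pass every filter."""
    return [r for r in reviews if is_relevant(r, **criteria)]
```

For a 5,000-person enterprise in financial services, a call might look like `filter_reviews(reviews, today=date.today(), size_range=(1000, 10000), industries={"finance"})`.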

Step 3: Quantitative scoring

For each evaluation criterion, score the vendor 1-5 based on review evidence:

- 5: Consistently praised, multiple detailed positive accounts

- 4: Mostly positive with minor caveats

- 3: Mixed reviews, significant variation in experience

- 2: More negative than positive, recurring complaints

- 1: Consistently criticised, structural weakness evident

Weight scores by your priority ranking. A vendor scoring 5 on "integration capabilities" matters more if integration is your #1 requirement.
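The weighting itself is simple arithmetic. The sketch below computes a weighted average that stays on the same 1-5 scale; the criterion names, weights, and scores are made-up illustrations, not data from any real vendor.

```python
def weighted_score(scores, weights):
    """Weighted average of 1-5 criterion scores; the result stays on the 1-5 scale."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"no score for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[c] * w for c, w in weights.items()) / total_weight


# Illustrative numbers only: integration is the top priority here.
weights = {"integration": 3, "support": 2, "implementation": 1}
vendor_a = {"integration": 5, "support": 3, "implementation": 2}
vendor_b = {"integration": 2, "support": 4, "implementation": 5}

print(round(weighted_score(vendor_a, weights), 2))
print(round(weighted_score(vendor_b, weights), 2))
```

In this example Vendor B has the higher unweighted average, but Vendor A comes out ahead once integration is weighted three times as heavily as implementation.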

See What Your Reviews Really Say

Paste any product URL and get an AI-powered SWOT analysis in under 60 seconds.

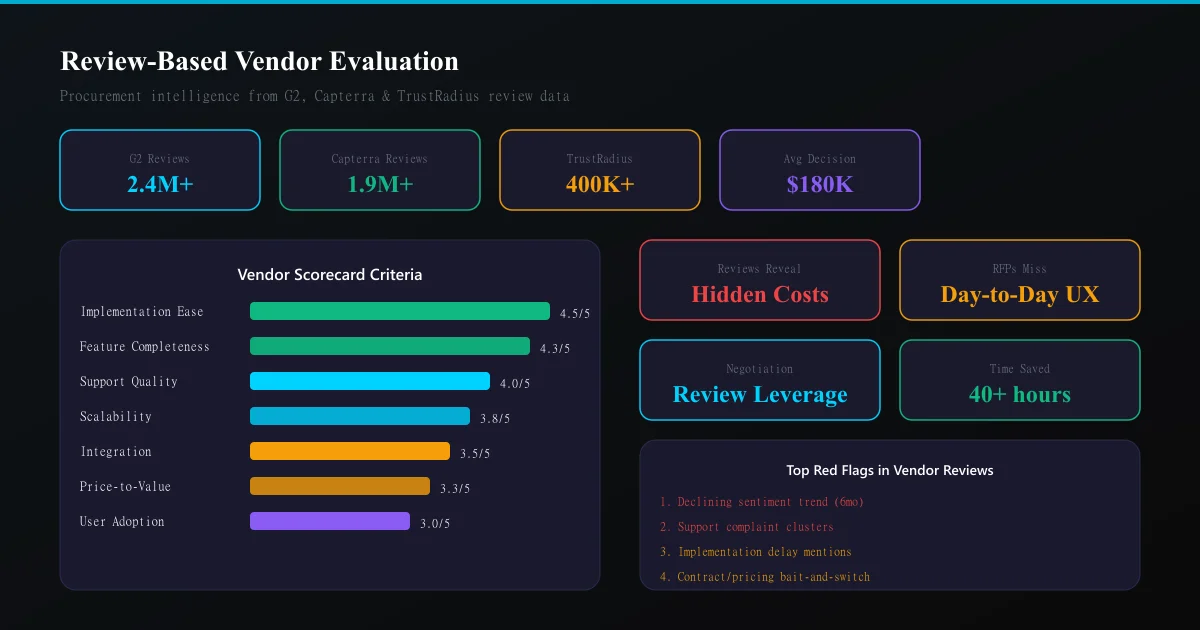

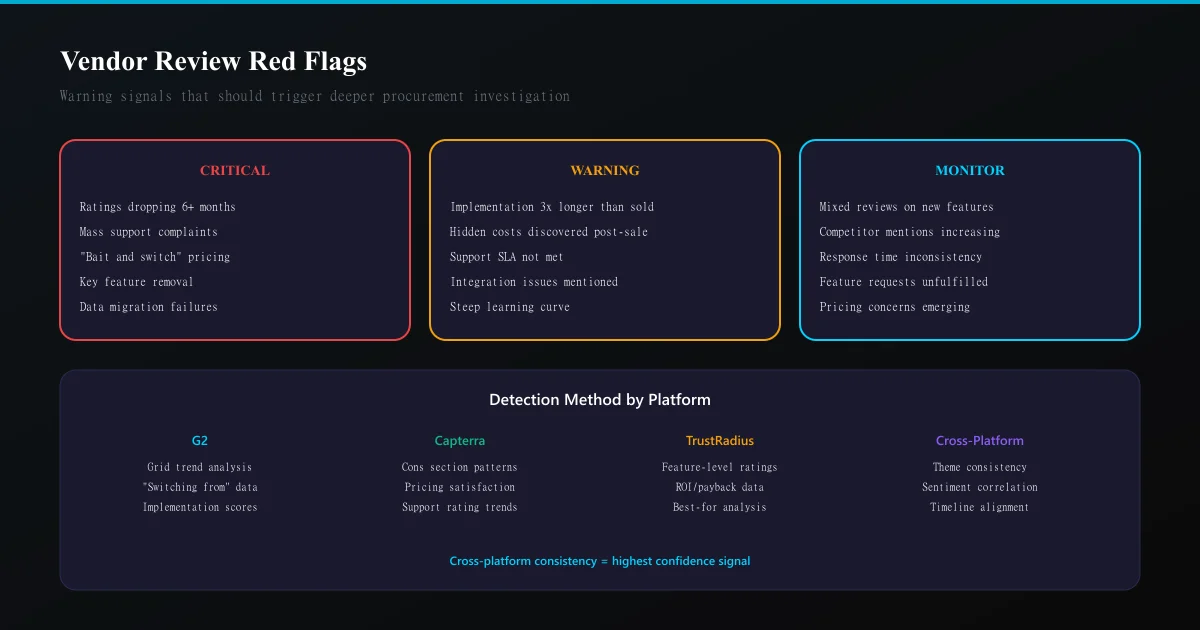

Try It Free →Step 4: Red flag identification

Certain review patterns should trigger immediate deeper investigation:

- Declining sentiment trend — ratings dropping over the last 6 months suggest internal problems

- Support complaint clusters — sudden spikes in support-related reviews indicate team changes or scaling issues

- Implementation horror stories — multiple reviews citing 3-6 month delays on what was sold as a "4-week implementation"

- Contract/pricing complaints — "bait and switch," "surprise price increase," "locked in" language

- Departure of key features — reviews mentioning removed functionality or broken integrations

Any red flag warrants a direct conversation with the vendor. Bring the specific review themes to the negotiation table — it changes the power dynamic entirely.
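Two of these patterns are easy to check mechanically once reviews are in a list. The sketch below assumes each review is a `(posted_date, rating, text)` tuple, which is a hypothetical shape chosen for illustration. It compares the average rating of the last six months with the six months before, and it searches for the pricing phrases quoted above.

```python
from datetime import date, timedelta
from statistics import mean

PRICING_PHRASES = ("bait and switch", "surprise price increase", "locked in")


def rating_trend(reviews, today, window_days=182):
    """Mean rating in the latest window minus the mean in the window before it.

    A negative result means sentiment is declining. Returns None when either
    window contains no reviews.
    """
    recent_start = today - timedelta(days=window_days)
    prior_start = recent_start - timedelta(days=window_days)
    recent = [r for d, r, _ in reviews if recent_start < d <= today]
    prior = [r for d, r, _ in reviews if prior_start < d <= recent_start]
    if not recent or not prior:
        return None
    return mean(recent) - mean(prior)


def pricing_complaints(reviews):
    """Reviews whose text contains any of the pricing red-flag phrases."""
    return [rev for rev in reviews
            if any(phrase in rev[2].lower() for phrase in PRICING_PHRASES)]
```

A handful of reviews per window produces a noisy trend, so read the number alongside the review count rather than on its own.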

Platform-specific analysis techniques

G2 analysis

G2 offers the richest structured data for procurement:

- Grid positioning shows market presence vs. satisfaction

- Comparison pages directly contrast vendors against each other

- Implementation scores predict time-to-value

- "Switching from" data reveals which vendors users are leaving and why

Focus on the "What do you dislike?" section — this is where procurement gold lives.

Capterra analysis

Capterra excels for SMB and mid-market evaluation:

- "Cons" sections tend to be shorter but more specific than G2

- "Alternatives considered" reveals your vendor's true competitive set

- Pricing satisfaction scores help predict renewal negotiation leverage

- Customer support ratings are available as a standalone metric

TrustRadius analysis

TrustRadius provides the deepest technical analysis:

- "trScore" weighting prioritises detailed, verified reviews

- Feature-level ratings show exactly which capabilities work well

- "Best for" / "Not best for" sections directly map to use-case fit

- ROI and payback data helps build the business case

The competitive comparison matrix

When evaluating 3-5 shortlisted vendors, build a comparison matrix:

- Pull 20-30 relevant reviews per vendor (filtered by your criteria)

- Extract key themes per evaluation criterion

- Score each vendor on each criterion

- Calculate weighted total scores

- Identify where the top two vendors differentiate

This process takes 2-3 hours per vendor manually. Automated review analysis tools can compress this to minutes by extracting structured SWOT insights from hundreds of reviews simultaneously.
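Once every vendor has a score per criterion, the last two steps of the matrix can be sketched in a few lines. The vendor names, scores, weights, and the two-point gap threshold below are illustrative assumptions only.

```python
def rank_vendors(matrix, weights):
    """Return (vendor, weighted total) pairs, highest total first."""
    totals = {
        vendor: sum(scores[c] * w for c, w in weights.items())
        for vendor, scores in matrix.items()
    }
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


def differentiators(matrix, first, second, min_gap=2):
    """Criteria where two vendors' scores differ by at least `min_gap` points."""
    gaps = {c: matrix[first][c] - matrix[second][c] for c in matrix[first]}
    return {c: gap for c, gap in gaps.items() if abs(gap) >= min_gap}


# Illustrative matrix: 1-5 scores per criterion for three shortlisted vendors.
weights = {"implementation": 2, "support": 3, "integrations": 1}
matrix = {
    "Vendor A": {"implementation": 4, "support": 2, "integrations": 5},
    "Vendor B": {"implementation": 3, "support": 5, "integrations": 3},
    "Vendor C": {"implementation": 2, "support": 3, "integrations": 4},
}

ranking = rank_vendors(matrix, weights)
top, runner_up = ranking[0][0], ranking[1][0]
print(ranking)
print(differentiators(matrix, top, runner_up))
```

A positive gap favours the top-ranked vendor and a negative gap favours the runner-up, which shows where the second-place vendor still wins and what to probe in final demos.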

Using review analysis in vendor negotiations

Review intelligence gives procurement teams significant negotiation leverage:

On pricing: "Reviews consistently mention that implementation takes 3x longer than quoted. We want that reflected in the SOW timeline and payment schedule."

On SLAs: "TrustRadius reviews from your enterprise customers mention average support response times of 48 hours. We need a contractual SLA of 4 hours for critical issues."

On features: "G2 reviews show your reporting module is the weakest-rated feature. We need a roadmap commitment on improvements before signing."

On risk mitigation: "Multiple recent reviews mention data migration issues. We want a dedicated migration specialist included, not as an add-on."

Vendors respond differently when they know you have done your research. The conversation shifts from sales theatre to honest partnership discussion.

When review analysis should override other signals

Review analysis should carry veto weight in three scenarios:

- The reference paradox — vendor-provided references are glowing, but public reviews tell a different story. Trust the reviews. References are selected specifically to sell.

- The demo disconnect — the product looks beautiful in demos, but reviews consistently mention UX problems at scale. Reviews reflect daily usage reality.

- The pricing trap — the vendor is significantly cheaper than alternatives, but reviews reveal hidden costs (implementation fees, per-seat overages, required add-ons). Competitor analysis from reviews helps identify true total cost.
