Subscription Box Review Analysis: How to Reduce Churn With Customer Feedback Intelligence

Subscription boxes face 40-60% annual churn rates — and the reasons are hiding in plain sight in customer reviews. Learn how to analyse subscriber feedback to predict cancellations, improve personalisation, and extend customer lifetime value.

The subscription box model has a churn problem that most operators know about but few solve systematically. Annual churn rates of 40–60% are common across the industry, meaning a subscription box company must replace roughly half its subscriber base every year just to maintain revenue, let alone grow.

The reasons subscribers cancel are rarely mysterious. They're written in plain language across Trustpilot, Reddit, app store reviews, and cancellation survey responses. The challenge isn't finding the reasons — it's analysing them at scale, detecting patterns before they become trends, and connecting specific feedback themes to specific operational changes that reduce churn.

The Subscription Box Feedback Ecosystem

Where Subscriber Reviews Live

Subscription box reviews are more fragmented than product reviews because they describe an ongoing relationship rather than a single purchase:

- Trustpilot — the primary review platform for subscription services. Reviewers describe their overall subscription experience, often updating reviews over time.

- Reddit — r/subscriptionboxes, r/BeautyBoxes, r/BarkBox, and category-specific subreddits contain detailed subscriber discussions that function as extended reviews.

- App stores — subscription boxes with apps (meal kits, fashion boxes) generate App Store/Google Play reviews about the digital experience.

- Social media — unboxing content on YouTube, TikTok, and Instagram contains sentiment data embedded in video format. Video review analysis captures this signal.

- Cancellation surveys — first-party data collected when subscribers cancel. The most direct churn signal, but the lowest volume (only cancelling subscribers provide it).

- Community forums — some subscription boxes run community platforms where subscribers discuss boxes, preferences, and complaints.

Review Timing Matters More for Subscriptions

A single-purchase product review is a point-in-time snapshot. A subscription review is a longitudinal assessment that changes over time. Understanding when reviews are written reveals different types of feedback:

Month 1–2 reviews — assess the initial experience: onboarding, first box quality, expectations vs reality. These are acquisition quality signals.

Month 4–6 reviews — assess the ongoing experience: variety, personalisation accuracy, perceived value trajectory. These are retention quality signals.

Cancellation-period reviews — written during or immediately after cancellation. These contain the most concentrated churn intelligence but are often the most emotionally negative.

Long-term subscriber reviews (12+ months) — the rarest and most valuable. These subscribers have high lifetime value and their feedback describes what keeps them: the retention formula.

The Five Churn Drivers in Subscription Box Reviews

1. Repetition / Lack of Novelty

Frequency in churn reviews: 35–45%

Typical language: "Getting the same type of thing every month," "No variety," "Felt like I was getting repeats," "Novelty wore off after 3 months"

This is the #1 churn driver across every subscription box category. The entire value proposition of a subscription box is surprise and discovery. When that surprise diminishes, the subscriber's willingness to pay diminishes with it.

Analysis approach: Track "repetition" and "variety" mentions over subscriber tenure. If repetition complaints spike at month 4–5, that's when your curation algorithm starts recycling. Map specific product categories mentioned as repetitive to identify which inventory segments need expansion.
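As a rough illustration of tracking repetition mentions over subscriber tenure, the sketch below counts how often reviews at each tenure month contain repetition language. The keyword list, the `(tenure_month, text)` input shape, and the sample reviews are all assumptions made for the example; a production pipeline would more likely use a trained classifier than substring matching.

```python
from collections import Counter

# Hypothetical keyword list drawn from the typical churn language above.
REPETITION_TERMS = ("repeat", "same type", "no variety", "novelty wore off")


def repetition_rate_by_month(reviews):
    """Return {tenure_month: share of reviews mentioning repetition}.

    reviews: iterable of (tenure_month, review_text) pairs.
    """
    totals, hits = Counter(), Counter()
    for month, text in reviews:
        totals[month] += 1
        lowered = text.lower()
        if any(term in lowered for term in REPETITION_TERMS):
            hits[month] += 1
    return {month: hits[month] / totals[month] for month in sorted(totals)}


# Made-up sample data for illustration only.
sample_reviews = [
    (1, "Loved my first box!"),
    (2, "Great selection this month."),
    (4, "Getting the same type of thing every month."),
    (4, "Still fun, good value."),
    (5, "No variety, felt like I was getting repeats."),
]
rates = repetition_rate_by_month(sample_reviews)
print(rates)
```

A jump in the rate at a particular tenure month is the spike to investigate.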

Operational fix: Implement "no-repeat" rules in curation algorithms. Track which product categories each subscriber has received and ensure minimum category variety across consecutive boxes. Flag subscribers approaching their "repetition threshold" (typically month 4) for manual curation review.
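One possible shape for a "no-repeat" rule is a check that a candidate box keeps enough distinct categories across consecutive boxes. This is a minimal sketch, not a description of any particular curation system: the function name, the three-box window, and the four-category minimum are hypothetical values chosen for the example.

```python
def violates_variety_rule(history, candidate_box, window=3, min_categories=4):
    """Return True if the candidate box leaves too little category variety.

    history: list of past boxes, each a list of product categories (oldest first).
    candidate_box: list of categories proposed for the next box.
    window: number of consecutive boxes to inspect, including the candidate.
    min_categories: minimum distinct categories required across that window.
    """
    # Take the most recent (window - 1) past boxes; none if the window is 1.
    recent = history[-(window - 1):] if window > 1 else []
    categories = set()
    for box in recent + [candidate_box]:
        categories.update(box)
    return len(categories) < min_categories


# Made-up subscriber history for illustration.
history = [["skincare", "haircare"], ["skincare", "makeup"]]
print(violates_variety_rule(history, ["skincare", "makeup"]))   # too repetitive
print(violates_variety_rule(history, ["fragrance", "tools"]))   # enough variety
```

A candidate that fails the check could be re-curated automatically or routed to the manual curation review described above.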

2. Personalisation Failures

Frequency in churn reviews: 25–35%

Typical language: "Didn't match my profile," "I said I was allergic to X and got X," "Wrong size again," "They clearly don't read preferences"

Personalisation is the subscription box industry's core differentiator over retail — yet personalisation failures are the second-largest churn driver. When a subscriber has explicitly stated their preferences and receives products that violate those preferences, the trust damage is severe.

Analysis approach: Use aspect-based sentiment analysis to identify which personalisation dimensions fail most often. Is it size? Dietary restrictions? Style preferences? Skin type? Each requires a different fix.

Operational fix: Audit your preference-matching algorithm against actual box contents. Calculate a "preference compliance rate" — what percentage of items in each box match the subscriber's stated preferences? Target 90%+ compliance. Any box below 80% compliance should trigger a customer service outreach before the subscriber receives it.
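The preference compliance rate can be sketched in a few lines. The example below assumes preferences are stored as a set of attributes the subscriber asked to avoid and that each item carries a set of attributes; both data shapes, and the function names, are assumptions for illustration. Only the 80% outreach threshold comes from the text above.

```python
def preference_compliance_rate(box_items, excluded_attributes):
    """Share of items in a box that carry none of the excluded attributes.

    box_items: list of dicts, each with an "attributes" set.
    excluded_attributes: set of attributes the subscriber asked to avoid.
    """
    if not box_items:
        return 1.0  # an empty box violates nothing
    compliant = sum(
        1 for item in box_items if not (item["attributes"] & excluded_attributes)
    )
    return compliant / len(box_items)


def needs_outreach(rate, threshold=0.80):
    """Flag boxes below the 80% compliance threshold for customer service outreach."""
    return rate < threshold


# Made-up box for a subscriber who excluded nuts and fragrance.
box = [
    {"name": "granola bar", "attributes": {"nuts", "snack"}},
    {"name": "face cream", "attributes": {"skincare"}},
    {"name": "body mist", "attributes": {"fragrance"}},
    {"name": "lip balm", "attributes": {"skincare"}},
    {"name": "tea sampler", "attributes": {"drink"}},
]
rate = preference_compliance_rate(box, {"nuts", "fragrance"})
print(rate, needs_outreach(rate))
```

Running the check before the box ships is what makes the pre-delivery outreach possible.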

3. Value Perception Decline

Frequency in churn reviews: 20–30%

Typical language: "Not worth the price anymore," "Could buy this cheaper myself," "Quality dropped," "Started feeling like a waste of money"

Value perception in subscription boxes follows a predictable curve: high at the start (novelty + perceived savings), declining over months (novelty fades, subscriber starts calculating actual costs). This isn't necessarily a quality problem — it's a perception problem that accelerates as the initial excitement wears off.

Analysis approach: Track value-mention sentiment over subscriber tenure. If value sentiment is positive in month 1–3 reviews but negative in month 6+ reviews, the product hasn't changed — the perception curve has. Separately, track reviews that mention actual quality declines ("products feel cheaper lately," "smaller items than when I started") — these indicate real operational cost-cutting that's visible to subscribers.

Operational fix: For perception-driven value decline, introduce "milestone surprises" — higher-value items at month 3, 6, and 12 that reset the value perception. For real quality declines, reverse the cost-cutting. The math is simple: saving $2 per box by downgrading a product costs you the subscriber's remaining lifetime value when they cancel because "quality dropped."
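To make the cost-cutting trade-off concrete, here is a simplified break-even sketch. It assumes that subscribers who notice the downgrade cancel immediately, that everyone else keeps the same remaining lifetime, and that the $10 contribution margin per box is a made-up figure; only the $2 saving comes from the text. Under those assumptions the remaining lifetime cancels out of the calculation.

```python
def break_even_cancel_share(saving_per_box, margin_per_box):
    """Share of subscribers who can cancel before a downgrade loses money.

    Without the downgrade, each subscriber is worth margin * remaining_boxes.
    With it, each retained subscriber is worth (margin + saving) * remaining_boxes.
    Solving (1 - p) * (margin + saving) = margin gives p = saving / (margin + saving).
    """
    return saving_per_box / (margin_per_box + saving_per_box)


# $2 saving from the text; $10 margin is a hypothetical figure.
share = break_even_cancel_share(saving_per_box=2.0, margin_per_box=10.0)
print(f"Break-even cancellation share: {share:.1%}")
```

With these illustrative numbers, the downgrade destroys value once roughly one in six subscribers cancels because of it.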

4. Delivery Issues

Frequency in churn reviews: 15–20%

Typical language: "Box arrived damaged," "Delivery was late again," "Items melted/spoiled in transit," "Takes too long to ship"

Delivery issues are particularly churn-driving for subscription boxes because they're recurring frustrations. A single late delivery from an online store is forgiven. A subscription box that's late 3 months in a row creates the perception of operational incompetence.

Analysis approach: Track delivery complaint frequency by month and region. If delivery issues cluster geographically, it's a carrier/routing problem. If they cluster temporally (always late in summer = melting/spoilage), it's a packaging/product selection problem.
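A simple way to see whether delivery complaints cluster by region or by month is to measure how concentrated they are along each dimension. The sketch below assumes each complaint has already been tagged with a region and a calendar month; the field names and sample data are hypothetical.

```python
from collections import Counter


def complaint_concentration(complaints, key):
    """Return (most_common_value, share_of_complaints) for the given field.

    complaints: list of dicts with "region" and "month" keys.
    key: which field to measure concentration on.
    """
    if not complaints:
        return None, 0.0
    counts = Counter(c[key] for c in complaints)
    top_value, top_count = counts.most_common(1)[0]
    return top_value, top_count / len(complaints)


# Made-up delivery complaints for illustration.
complaints = [
    {"region": "Southwest", "month": 7},
    {"region": "Southwest", "month": 8},
    {"region": "Southwest", "month": 1},
    {"region": "Southwest", "month": 3},
    {"region": "Northeast", "month": 7},
    {"region": "Midwest", "month": 11},
]
by_region = complaint_concentration(complaints, "region")
by_month = complaint_concentration(complaints, "month")
print(by_region, by_month)
```

In this sample, two thirds of complaints come from one region while no month dominates, which points toward a carrier or routing problem rather than a seasonal one.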

Operational fix: For chronic lateness, tighten ship-date commitments or switch carriers for problem regions. For damage, invest in packaging engineering. For spoilage, adjust product selection for seasonal temperature sensitivity. For each fix, measure whether the delivery theme's frequency declines in subsequent months' reviews.

5. Expectation Mismatch

Frequency in churn reviews: 15–25%

Typical language: "Not what I expected from the marketing," "Thought I'd get X but got Y," "Website showed premium products but box was cheap," "Misleading about what's included"

First-box disappointment is the fastest path to cancellation. When the marketing creates expectations that the first box fails to meet, the subscriber cancels before they've had time to develop a relationship with the service.

Analysis approach: Compare your marketing claims (landing page copy, ad creative, influencer partnerships) against the themes in month-1 negative reviews. If your ads show luxury products but reviews say "drugstore quality," there's a dangerous expectations gap.

Operational fix: Either elevate the first box to match marketing (the "wow the new subscriber" strategy) or moderate marketing claims to set achievable expectations. The first approach has higher acquisition cost but lower churn. The second has lower acquisition cost but may reduce conversion. Test both and measure which maximises LTV.

Building a Churn Prediction Model From Reviews

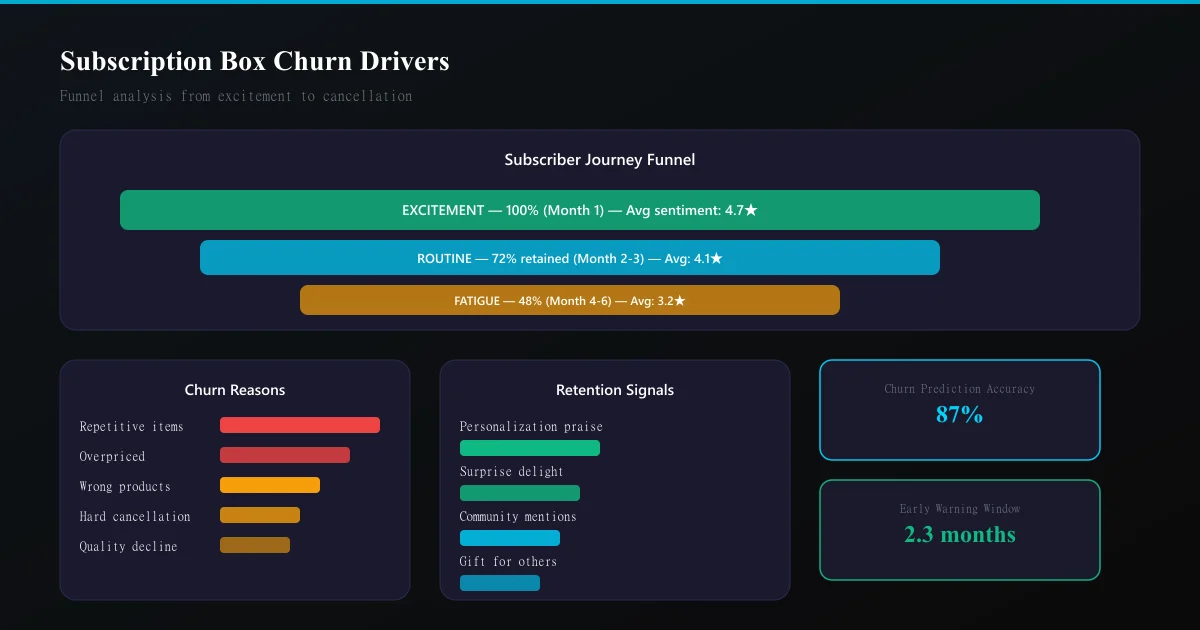

Reviews can signal cancellation 30–60 days before the subscriber actually cancels. Here's how to build early warning signals:

The Sentiment Trajectory

Track each subscriber's review sentiment over time (if they've left multiple reviews or community posts):

- Declining trajectory (positive → neutral → negative over 3+ touchpoints) = high churn risk

- Stable positive = retention likely

- Sudden negative (single very negative review after previous positives) = recoverable if you act fast
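The three trajectories can be expressed as a small classifier. This is a sketch under assumptions: sentiment is taken to be a score in [-1, 1] per touchpoint, the -0.5 cut-off for a "very negative" review is a hypothetical value, and a steady decline is checked before a sudden drop.

```python
def classify_trajectory(scores, drop_threshold=-0.5):
    """Classify a chronological list of sentiment scores in [-1, 1].

    drop_threshold is a hypothetical cut-off for a "very negative" review.
    """
    # Declining: 3+ touchpoints, each lower than the last, ending negative.
    strictly_falling = all(b < a for a, b in zip(scores, scores[1:]))
    if len(scores) >= 3 and strictly_falling and scores[-1] < 0:
        return "declining"
    # Sudden negative: all earlier touchpoints positive, latest very negative.
    if (
        len(scores) >= 2
        and all(s > 0 for s in scores[:-1])
        and scores[-1] <= drop_threshold
    ):
        return "sudden_negative"
    # Stable positive: every touchpoint positive.
    if scores and all(s > 0 for s in scores):
        return "stable_positive"
    return "unclassified"


print(classify_trajectory([0.8, 0.1, -0.6]))
print(classify_trajectory([0.7, 0.8, -0.9]))
print(classify_trajectory([0.6, 0.7, 0.8]))
```

Subscribers with fewer than two touchpoints or mixed patterns fall into `unclassified` and need other signals.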

Theme-Based Risk Scoring

Assign churn-risk scores to specific review themes:

- "Repetitive" + month 4+ = 80% churn probability within 60 days

- "Wrong size/preference" + 2nd occurrence = 70% churn probability

- "Not worth the price" + month 6+ = 65% churn probability

- "Late delivery" + 3rd occurrence = 60% churn probability

When a subscriber's reviews trigger multiple risk themes, the combined probability is higher than any individual theme.
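One way to combine several triggered themes into a single score is a noisy-OR, which treats each theme as an independent cause of churn. That independence is an assumption of this sketch, not something the figures above establish, and the theme keys are hypothetical labels for the four rules listed.

```python
# Per-theme probabilities from the list above; keys are hypothetical labels.
THEME_RISK = {
    "repetitive_month_4_plus": 0.80,
    "wrong_preference_2nd": 0.70,
    "not_worth_price_month_6_plus": 0.65,
    "late_delivery_3rd": 0.60,
}


def combined_churn_risk(themes):
    """Noisy-OR combination: 1 minus the chance that no theme causes churn.

    Assumes themes act independently, which real data may not support.
    """
    stay_probability = 1.0
    for theme in themes:
        stay_probability *= 1.0 - THEME_RISK[theme]
    return 1.0 - stay_probability


single = combined_churn_risk(["repetitive_month_4_plus"])
double = combined_churn_risk(["repetitive_month_4_plus", "late_delivery_3rd"])
print(round(single, 2), round(double, 2))
```

By construction the combined score is never lower than the highest individual theme, which matches the behaviour described above. Calibrating against actual cancellations would be the next step.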

Integrating With Retention Actions

Connect churn predictions to automated retention interventions:

- High-risk subscriber identified → trigger personalised email acknowledging the issue + offer (skip a month, upgrade a box, preference re-survey)

- Repetition complaint → manually curate their next box with guaranteed novelty

- Value complaint → include a premium bonus item in the next box

- Personalisation failure → assign to a human curator for manual review of their profile
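The mapping from detected themes to interventions can be kept as plain data so it is easy to audit and change. The sketch below is illustrative only: the theme keys, the function name, and the 0.6 high-risk threshold are assumptions, and the returned strings stand in for whatever calls a real email or curation system would need.

```python
# Theme-to-action mapping taken from the list above; keys are hypothetical.
RETENTION_ACTIONS = {
    "repetition": "manually curate next box with guaranteed novelty",
    "value": "include a premium bonus item in the next box",
    "personalisation": "assign to a human curator for profile review",
}
HIGH_RISK_ACTION = "send personalised email acknowledging the issue, with an offer"


def plan_interventions(themes, risk, high_risk_threshold=0.6):
    """Return the ordered list of actions for one subscriber.

    themes: complaint themes detected in the subscriber's feedback.
    risk: churn-risk score in [0, 1]; the threshold is a hypothetical value.
    """
    actions = [RETENTION_ACTIONS[t] for t in themes if t in RETENTION_ACTIONS]
    if risk >= high_risk_threshold:
        actions.insert(0, HIGH_RISK_ACTION)  # outreach goes first
    return actions


print(plan_interventions(["repetition"], risk=0.8))
print(plan_interventions(["delivery"], risk=0.2))
```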

Review Analysis for Subscription Box Growth

Acquisition Optimisation

Positive subscriber reviews are your most powerful acquisition asset. Extract and deploy them:

- "Best month ever" reviews → social proof for landing pages

- Unboxing photos from reviews → UGC for ad creative

- Specific product praise → showcase those products in marketing

- Long-term subscriber testimonials → address the "will I get tired of it?" objection

Competitive Intelligence

Analyse competitor subscription box reviews to find your positioning advantage:

- What do competitors' churned subscribers complain about? Position your box as solving those problems.

- What do competitors' loyal subscribers praise? Ensure you match these table-stakes expectations.

- What themes appear in competitor reviews that don't appear in yours? These might be features or categories you should add.

Apply the same competitive review analysis methodology you would use for products to subscription competitors.

Product Selection Intelligence

Reviews tell you exactly which product types generate excitement and which generate disappointment:

- High-excitement products (frequently mentioned positively, photographed in unboxing content) → include more of these categories

- Low-excitement products (rarely mentioned, or mentioned negatively) → reduce or eliminate from rotation

- Controversial products (some subscribers love them, others hate them) → only include for subscribers whose profiles indicate affinity
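The three product buckets can be approximated from mention counts. This is a rough sketch: the 5% minimum mention share and the 35–65% "split opinion" band are hypothetical thresholds, and it assumes positive and negative mentions per product have already been extracted from reviews.

```python
def classify_product(positive, negative, total_reviews,
                     min_mention_share=0.05, split_band=(0.35, 0.65)):
    """Bucket a product by how often and how favourably reviews mention it.

    positive, negative: counts of positive and negative mentions.
    total_reviews: number of reviews in the period analysed.
    Thresholds are hypothetical and should be tuned on real data.
    """
    mentions = positive + negative
    # Rarely mentioned products count as low excitement.
    if total_reviews == 0 or mentions == 0:
        return "low_excitement"
    if mentions / total_reviews < min_mention_share:
        return "low_excitement"
    positive_share = positive / mentions
    # Opinion roughly split between love and hate.
    if split_band[0] <= positive_share <= split_band[1]:
        return "controversial"
    if positive_share > split_band[1]:
        return "high_excitement"
    return "low_excitement"  # mentioned often, but mostly negatively


print(classify_product(90, 10, 500))
print(classify_product(50, 45, 500))
print(classify_product(3, 2, 500))
```

Controversial products are the ones to gate behind profile affinity rather than drop outright.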

Frequently Asked Questions

What's a good review rating for a subscription box?

On Trustpilot, 4.0+ is competitive for subscription boxes. The industry benchmarks show significant variation: food/meal kit boxes average 3.8, beauty boxes average 4.1, and pet boxes average 4.2. Below 3.5 on Trustpilot signals serious operational issues.

How do I get more subscribers to leave reviews?

Prompt at the right moment: after the subscriber has received and opened their box (not before). Include a review request card inside the physical box (it's the only marketing material they'll definitely see). Send a follow-up email 3 days after estimated delivery with a direct review link.

Can review analysis actually predict which subscribers will cancel?

Yes, with limitations. Reviews and community posts from identifiable subscribers provide early warning signals 30–60 days before cancellation. But most subscribers cancel silently without leaving feedback. Combine review-based signals with behavioural data (login frequency, preference updates, support tickets) for a more complete churn prediction model.

Should I respond to negative subscription box reviews publicly?

Yes, but with specific action, not generic apology. "We've noted that your last box didn't match your nut allergy preference. We've flagged your account for manual quality review on your next box and added a bonus item as an apology." This demonstrates competence AND recovers the subscriber.

What's the optimal time to request a review from a subscriber?

After their 3rd box. Before the 3rd box, the subscriber hasn't formed a complete opinion. After the 6th box, response rates drop significantly (survey fatigue). The 3rd box is the sweet spot: enough experience to review meaningfully, early enough that they're still engaged enough to respond.
