Reddit Sentiment Analysis for Product Feedback: Mining Unfiltered Customer Intelligence From Communities

Reddit is where product builders and early adopters gather to speak freely. Learn how to systematically analyse Reddit threads, comments, and community sentiment to identify feature requests, pain points, and competitive threats before your support team even hears about them.

Your product reviews on Trustpilot are managed. Your App Store feedback is filtered through the rating system. Your customer support tickets follow a template. But on Reddit? Users speak in their own voice, uncensored and unfiltered.

Reddit represents 5-10% of online product discussions for most categories. For niche verticals (developer tools, SaaS, gaming, cryptocurrency), it can exceed 20-30% of all accessible feedback. And it is the feedback your competitors are already analysing.

The problem is scale. A single subreddit devoted to your category generates hundreds of discussions weekly. A manual review approach dies after the first 50 comments. You need a systematic method to turn Reddit into a real-time product intelligence feed.

This guide shows you how to build one.

Why Reddit sentiment matters differently than other platforms

Reddit conversations have structural properties that make sentiment analysis uniquely valuable:

- Community reputation economy — users upvote honest criticism, downvote promotional spam. Signal-to-noise ratio is high compared to Twitter/X

- Threaded discussion depth — a single product complaint spawns 20-50 follow-up comments from users with similar experiences, revealing patterns that isolated reviews hide

- Anonymity breeds honesty — users discuss switching away from products, comparing vendors, and criticizing flaws with candour they would never use in verifiable reviews

- Historical permanence — unlike Twitter threads that disappear, Reddit discussions archive and surface in Google results years later, influencing perception of your entire category

- Demographic clustering — specific subreddits concentrate your actual target audience (e.g., r/startups, r/webdev, r/SaaS), not general Twitter followers

A sentiment analysis approach to reviews gives you polarity (positive, negative, neutral). Reddit sentiment analysis gives you causation — you learn not just that users are unhappy, but why, in their own words, with community validation.

The Reddit landscape for product feedback

Tier 1: Dedicated product subreddits

r/[YourProduct], r/[YourProductAlternatives] — These are ground zero. Users actively debate your product, competitors, pricing, and missing features. Category subreddits like r/productivity, r/emailclients, r/projectmanagement also surface relevant discussions.

Tier 2: Industry and use-case communities

r/startups, r/marketing, r/webdev, r/datascience — Your product users congregate here and discuss pain points indirectly. A marketing automation user on r/marketing complaining about "tools that don't integrate with Shopify" is product feedback in disguise.

Tier 3: Complaint and review aggregators

r/crappydesign, r/assholedesign, r/softwaregore — These spaces collect and ridicule poorly designed products. If your product appears frequently, you have a UX problem at scale.

Systematic Reddit sentiment analysis: the framework

Step 1: Identify high-signal subreddits and keywords

Not all Reddit discussions are relevant. Build a monitoring scope:

| Subreddit tier | Signal quality | Example | Update frequency |
|---|---|---|---|
| Brand-specific | Highest | r/[YourProduct], r/[ProductDiscussions] | Daily |
| Category-focused | High | r/SaaS, r/webdev, r/productivity | 3x weekly |
| Problem-focused | Medium | r/startupproblems, r/freelance | Weekly |
| Broad industry | Low | r/business, r/technology | Monthly sampling |

For each subreddit, identify keywords that surface your product and competitors:

- Product names and aliases ("Slack" vs. "Slackbot" vs. "Teams")
- Problem statements ("how to track project progress," "best email tool")
- Comparison threads ("[Product] vs [Competitor]")
- Complaint signals ("anyone else hate X?" "X broke my workflow")
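The keyword groups above can be expressed as regular expressions and run against post titles. The sketch below is illustrative only: the product names and phrases are the examples from the list, and `KEYWORD_PATTERNS` and `match_keyword_groups` are hypothetical names, not part of any library.

```python
import re

# Hypothetical keyword groups; swap in your own product names and phrases.
KEYWORD_PATTERNS = {
    "product": re.compile(r"\b(slack|slackbot|teams)\b", re.IGNORECASE),
    "problem": re.compile(
        r"\b(how to track project progress|best email tool)\b", re.IGNORECASE
    ),
    "comparison": re.compile(r"\b\w+\s+vs\.?\s+\w+\b", re.IGNORECASE),
    "complaint": re.compile(
        r"\b(anyone else hate|broke my workflow)\b", re.IGNORECASE
    ),
}


def match_keyword_groups(title: str) -> list[str]:
    """Return the name of every keyword group that matches the title."""
    return [
        name for name, pattern in KEYWORD_PATTERNS.items() if pattern.search(title)
    ]


print(match_keyword_groups("Slack vs Teams for a small startup?"))
```

A title that matches no group returns an empty list and can be dropped before any further processing.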

Step 2: Collect and filter discussions

Use Reddit's official API (for example through PRAW, a Python wrapper for it) or a third-party archive such as Pushshift to pull:

- Post titles and URLs
- Post scores (upvotes - downvotes)
- Number of comments
- Comment text and scores
- Author reputation (account age, karma)

Filter for signal:

- Post score > 10: community validated as relevant
- Comment count > 5: genuine discussion, not stray feedback
- Recency < 90 days: recent sentiment, not historical complaints
- High-karma authors: established users, less spam
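As a minimal sketch, the filter can be a single predicate over already-collected posts. The `Post` dataclass and `is_high_signal` function are hypothetical, and the `min_karma=100` cut-off is an assumption, since the text only says "high-karma" without giving a number.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Post:
    """Hypothetical container for fields pulled from Reddit."""
    title: str
    score: int
    num_comments: int
    created_utc: float  # Unix timestamp of the post
    author_karma: int


def is_high_signal(post, now, min_score=10, min_comments=5,
                   max_age_days=90, min_karma=100):
    """Apply the four signal filters; min_karma is an assumed threshold."""
    age = now - datetime.fromtimestamp(post.created_utc, tz=timezone.utc)
    return (
        post.score > min_score
        and post.num_comments > min_comments
        and age < timedelta(days=max_age_days)
        and post.author_karma >= min_karma
    )


now = datetime.now(timezone.utc)
example = Post("X broke my workflow", 42, 18,
               (now - timedelta(days=10)).timestamp(), 2500)
print(is_high_signal(example, now))
```

Passing `now` in explicitly keeps the function deterministic, which makes it easier to re-run the same filter over an archived batch.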

Step 3: Sentiment polarity classification

Assign each discussion and comment to a polarity bucket:

Positive sentiment: Users recommend your product, highlight advantages, share success stories

- "This tool saved me 5 hours a week"
- "Best investment we made for the team"
- "Just switched from X, no regrets"

Negative sentiment: Users criticise features, pricing, support, or quality

- "Feature requests gone unanswered for 6 months"
- "Pricing increase made us switch to X"
- "Customer support is nonexistent"

Neutral sentiment: Factual comparisons, feature questions, technical discussions

- "Does this tool integrate with Zapier?"
- "How does X compare to Y in terms of price?"
- "The latest update changed the sidebar layout"

Mixed sentiment: Both praise and criticism in the same discussion

- "Love the core product but the new pricing tier is a dealbreaker"
- "Works well for small teams but doesn't scale"
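Once individual comments carry a label, a discussion-level bucket can be derived from them. The sketch below is one possible rule, not a standard: it calls a discussion mixed when the minority opinion makes up at least a quarter of the opinionated comments. The function name and the `mixed_threshold=0.25` value are assumptions.

```python
from collections import Counter


def discussion_polarity(comment_labels, mixed_threshold=0.25):
    """Roll comment-level labels up into one discussion-level bucket.

    mixed_threshold is an assumed cut-off: the share of opinionated
    comments the minority side needs before the thread counts as mixed.
    """
    counts = Counter(comment_labels)
    pos, neg = counts["positive"], counts["negative"]
    opinionated = pos + neg
    if opinionated == 0:
        return "neutral"
    minority_share = min(pos, neg) / opinionated
    if minority_share >= mixed_threshold:
        return "mixed"
    return "positive" if pos > neg else "negative"


print(discussion_polarity(["positive", "negative", "negative", "neutral"]))
```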

Step 4: Extract high-value discussion patterns

Once you have sentiment polarity, cluster discussions by topic:

- Feature request clusters: Multiple users requesting the same capability signals demand
- Switching discussions: "We used X but switched to Y because..." reveals your competitive weaknesses
- Pricing sentiment: Concentration of negative sentiment around pricing indicates willingness to switch
- Support quality complaints: Response time, helpfulness, ticket resolution speed
- Integrations and workflows: Missing API capabilities, broken third-party connections
- Performance and reliability: Outages, slowness, bugs affecting multiple users

For each cluster, calculate:

- Cluster size: how many comments/discussions address this topic
- Sentiment polarity ratio: percentage positive, negative, neutral
- Trend direction: is sentiment improving or worsening month-over-month
- Urgency indicator: how recently complaints emerged
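The four cluster metrics can be computed from a list of labelled mentions. This is a sketch under stated assumptions: `cluster_metrics` is a hypothetical helper, trend direction is approximated by comparing the negative share of the two most recent months, and urgency is approximated by the latest month that contains a negative mention.

```python
from collections import Counter

POLARITIES = ("positive", "negative", "neutral")


def cluster_metrics(mentions):
    """Summarise one topic cluster.

    mentions: list of (month, polarity) pairs, month formatted "YYYY-MM".
    """
    size = len(mentions)
    counts = Counter(polarity for _, polarity in mentions)
    ratio = {p: counts[p] / size for p in POLARITIES} if size else {}

    # Negative share per month, used for the month-over-month trend.
    totals, negatives = Counter(), Counter()
    for month, polarity in mentions:
        totals[month] += 1
        negatives[month] += polarity == "negative"
    months = sorted(totals)

    trend = "insufficient data"
    if len(months) >= 2:
        previous, latest = months[-2], months[-1]
        prev_share = negatives[previous] / totals[previous]
        last_share = negatives[latest] / totals[latest]
        if last_share > prev_share:
            trend = "worsening"
        elif last_share < prev_share:
            trend = "improving"
        else:
            trend = "flat"

    # Urgency proxy: the most recent month containing a negative mention.
    negative_months = [m for m in months if negatives[m] > 0]
    latest_negative = negative_months[-1] if negative_months else None

    return {
        "size": size,
        "ratio": ratio,
        "trend": trend,
        "latest_negative_month": latest_negative,
    }
```

Comparing shares rather than raw counts keeps a cluster from looking worse simply because the subreddit was busier that month.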

Step 5: Convert sentiment to product decisions

Not all negative sentiment is actionable. Prioritise using this matrix:

| Sentiment | Mention count | Product impact | Action |
|---|---|---|---|
| Negative | High (10+) | Switching risk | Priority feature/fix |
| Negative | Medium (5-9) | Retention risk | Backlog candidate |
| Negative | Low (1-4) | Niche concern | Monitor for growth |
| Positive | High (10+) | Marketing asset | Amplify messaging |
| Mixed | High (10+) | Segmentation signal | Target improvement for segment |
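The matrix translates directly into a lookup table. In this sketch, `mention_band`, `ACTIONS`, and `recommend_action` are hypothetical names; any count below 5 is treated as low, and combinations the matrix does not list return a placeholder string.

```python
def mention_band(count: int) -> str:
    """Bucket a mention count using the matrix thresholds."""
    if count >= 10:
        return "high"
    if count >= 5:
        return "medium"
    return "low"


# One entry per row of the prioritisation matrix.
ACTIONS = {
    ("negative", "high"): "Priority feature/fix",
    ("negative", "medium"): "Backlog candidate",
    ("negative", "low"): "Monitor for growth",
    ("positive", "high"): "Amplify messaging",
    ("mixed", "high"): "Target improvement for segment",
}


def recommend_action(sentiment: str, mention_count: int) -> str:
    """Look up the action for a cluster; unlisted combinations get a default."""
    key = (sentiment, mention_band(mention_count))
    return ACTIONS.get(key, "No action defined")


print(recommend_action("negative", 12))
```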

Reddit sentiment vs. other feedback sources: the comparison

Reddit sentiment: strengths and weaknesses

Strengths:

- Real-world product usage context (not theoretical feedback from surveys)
- Peer validation through voting system (upvotes filter out outlier complaints)
- Competitor comparisons in native context (users debate X vs Y directly)
- Trend visibility (emerging problems surface before support ticket volume rises)

Weaknesses:

- Selection bias toward engaged power users and early adopters
- Over-representation of niche use cases and complaint-driven discussions
- Temporal clustering (new problem triggers Reddit cascade, creating false impression of prevalence)
- Time lag from occurrence to Reddit discussion (2-4 weeks typical)

The combination of Reddit sentiment + in-app review analysis + support ticket analysis gives you coverage across casual users, engaged users, and support-dependent segments.

Tools and implementation

Reddit data sources

Direct API access:

- PRAW (Python Reddit API Wrapper): Widely used, community-maintained Python library for the Reddit API (not an official Reddit product); requires OAuth application credentials but is reliable
- Reddit Search API: Free, queryable up to 6 months back, rate-limited
- Pushshift Archive: Community-maintained subreddit history archive (access has been restricted since 2023; check current status)

Third-party aggregators:

- Webmention monitors: Track mentions across Reddit alongside blogs and news
- Social listening platforms (Brandwatch, Mention, Hootsuite): Aggregated Reddit monitoring alongside Twitter/news
- Product intelligence tools (G2 Crowd, Capterra integrations): Some aggregate Reddit discussions

Sentiment analysis methods

Rule-based:

- Keyword presence ("love," "hate," "switch to," "save time")
- Simple scoring (count of positive vs negative words)
- Fast and interpretable, but misses context ("I don't hate this" = positive, not negative)
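A minimal rule-based scorer might look like the sketch below. The word lists are tiny illustrative samples, not a real lexicon, and the negation handling is an added assumption: it flips a sentiment word only when the token immediately before it is a negator. That fixes "I don't hate this" but still misreads "not the worst", which shows where keyword rules run out.

```python
import re

# Tiny illustrative lexicons; a real deployment would need far larger lists.
POSITIVE = {"love", "great", "best", "recommend"}
NEGATIVE = {"hate", "worst", "broken", "nonexistent"}
NEGATORS = {"not", "no", "never", "don't", "doesn't", "didn't", "isn't"}


def rule_based_score(text: str) -> int:
    """Count positive minus negative words, flipping directly negated ones."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, token in enumerate(tokens):
        polarity = (token in POSITIVE) - (token in NEGATIVE)
        # Flip polarity only when the previous token is a negator.
        if polarity and i > 0 and tokens[i - 1] in NEGATORS:
            polarity = -polarity
        score += polarity
    return score


for sample in ("I don't hate this", "Customer support is nonexistent", "not the worst"):
    print(sample, "->", rule_based_score(sample))
```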

LLM-based:

- Use Claude API to classify sentiment with context
- ~$0.01-0.05 per discussion
- High accuracy, maintains context ("not the worst" = mixed, neither clearly positive nor negative)

Hybrid:

- Rule-based filtering for obvious sentiment, LLM for ambiguous cases
- 30-50% cost reduction compared to LLM-only, maintains accuracy
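The hybrid routing can be sketched as follows. Everything here is illustrative: `keyword_score` is a toy scorer, `margin=2` is an assumed confidence threshold, and `llm_classify` is a hypothetical callable that would wrap whichever LLM API you use. It is passed in as a parameter so that the routing logic can be tested without network access.

```python
import re
from typing import Callable

POSITIVE = {"love", "great", "best", "recommend"}
NEGATIVE = {"hate", "worst", "broken", "nonexistent"}


def keyword_score(text: str) -> int:
    """Toy scorer: positive word count minus negative word count."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)


def hybrid_classify(text: str, llm_classify: Callable[[str], str], margin: int = 2):
    """Return (label, route). Rules decide clear cases; the LLM gets the rest."""
    score = keyword_score(text)
    if score >= margin:
        return "positive", "rules"
    if score <= -margin:
        return "negative", "rules"
    return llm_classify(text), "llm"


def stub_llm(text: str) -> str:
    """Stand-in for a real LLM call; always answers 'mixed'."""
    return "mixed"


print(hybrid_classify("Love it, best tool, great support", stub_llm))
print(hybrid_classify("Works well for small teams but doesn't scale", stub_llm))
```

The cost saving scales with the share of discussions the rules settle on their own: if rules handle 40% of a batch, only the remaining 60% incur LLM charges.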

Turning Reddit sentiment into recurring intelligence

Weekly sentiment dashboard

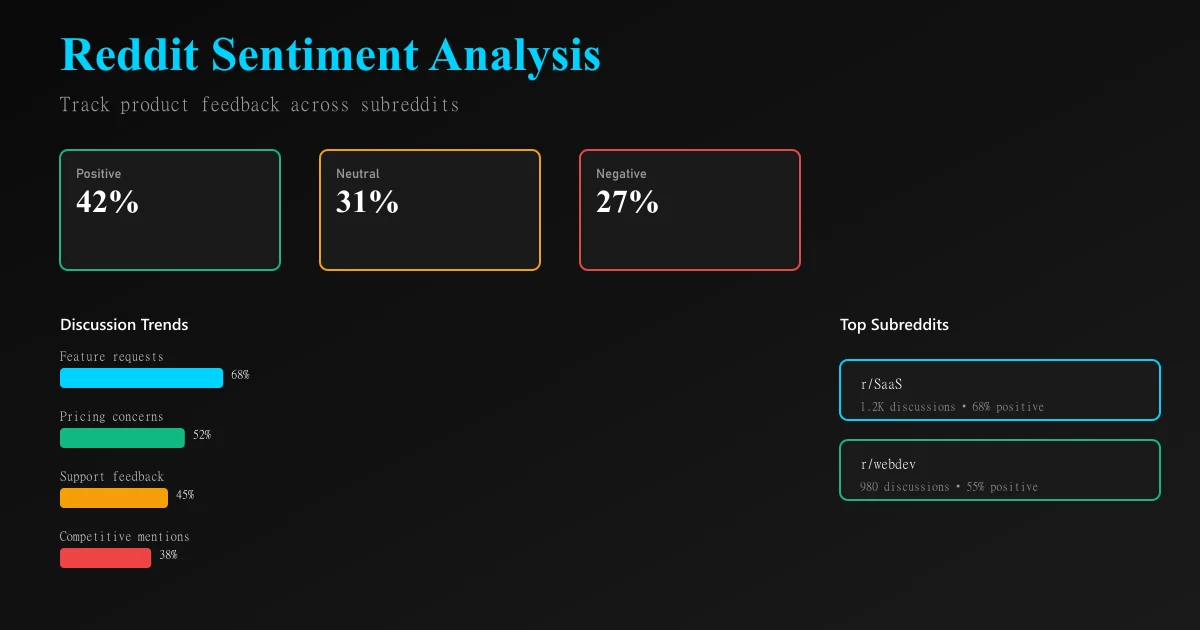

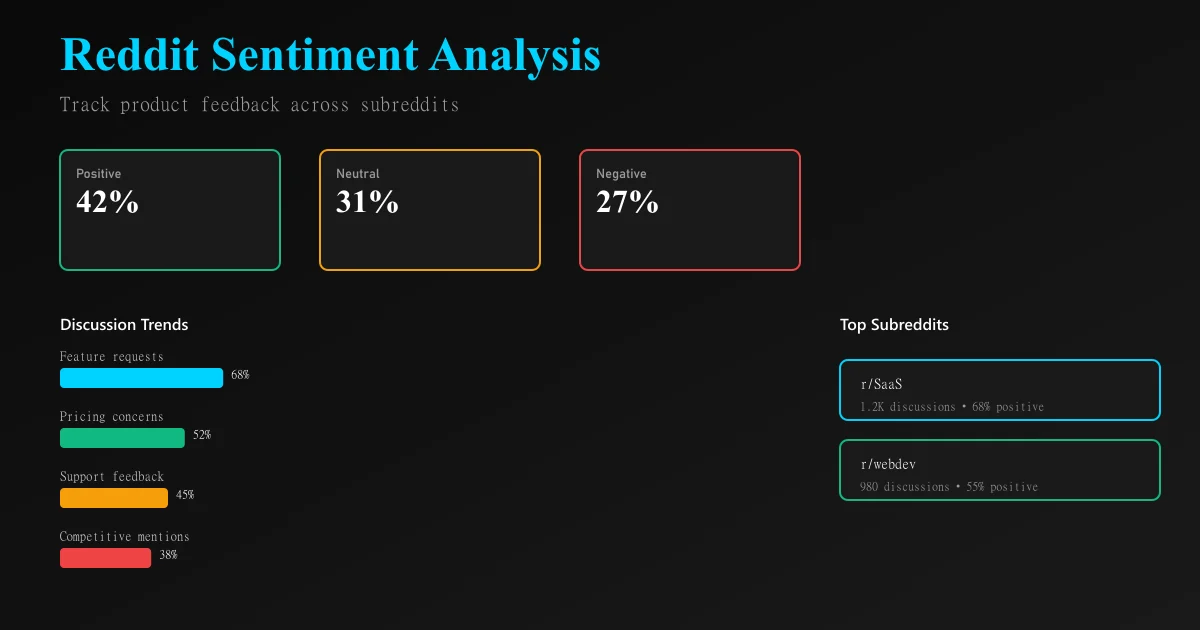

Monitor these metrics:

- Sentiment polarity trend: % positive, negative, neutral this week vs. last week
- Topic velocity: new discussion clusters emerging
- Competitive mentions: which competitors are gaining mindshare
- Feature request acceleration: are specific feature requests growing in mentions
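The first metric, polarity trend, reduces to comparing label shares between two weeks. In this sketch the function names are hypothetical and the inputs are assumed to be flat lists of per-discussion labels; the result is the change in percentage points for each polarity.

```python
from collections import Counter

POLARITIES = ("positive", "negative", "neutral")


def polarity_shares(labels):
    """Share of each polarity in a list of labels (0.0 when the list is empty)."""
    counts = Counter(labels)
    total = len(labels)
    return {p: (counts[p] / total if total else 0.0) for p in POLARITIES}


def week_over_week(this_week, last_week):
    """Percentage-point change in each polarity share, rounded to one decimal."""
    current = polarity_shares(this_week)
    previous = polarity_shares(last_week)
    return {p: round((current[p] - previous[p]) * 100, 1) for p in POLARITIES}


last_week = ["positive"] * 5 + ["negative"] * 3 + ["neutral"] * 2
this_week = ["positive"] * 4 + ["negative"] * 5 + ["neutral"] * 1
print(week_over_week(this_week, last_week))
```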

Monthly product sync

Set aside 90 minutes each month to review high-signal Reddit discussions and cross-reference them against:

- Product roadmap feedback loops
- Support ticket escalations
- Churn analysis from cancellation reviews
- Pricing sentiment trends

Competitive intelligence update

Track competitor sentiment trends: are they losing customers to dissatisfaction, or gaining? Are users switching from them to you or vice versa?

Common Reddit sentiment analysis mistakes

Mistake 1: Treating all negative sentiment as product defects

Not every complaint is a bug. Users complain about pricing, their own workflows, feature absence, competitor superiority, and other non-product issues. Before acting, validate that the issue is widespread and within your control.

Mistake 2: Assuming Reddit users represent your customer base

Early adopters and power users congregate on Reddit. Casual users do not. If your growth depends on mainstream adoption, Reddit sentiment may skew toward advanced use cases and feature requests you should deprioritize.

Mistake 3: Overweighting recent threads

A product issue triggers a Reddit discussion cascade. 50 similar complaints land within days. This creates the false impression that the problem affects 50% of users when it actually affects 0.5% who happened to discover the thread. Wait for temporal decay before escalating.
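One way to make "wait for temporal decay" concrete is to weight each complaint by its age, so that a burst loses influence unless new complaints keep arriving. The sketch below uses exponential decay; the function name and the 14-day half-life are assumptions chosen for illustration, not values from any standard.

```python
def decayed_mention_weight(ages_in_days, half_life_days=14.0):
    """Sum of mention weights, each halving every half_life_days.

    half_life_days=14 is an assumed value; tune it to your release cadence.
    """
    return sum(0.5 ** (age / half_life_days) for age in ages_in_days)


# A cascade of 50 complaints, re-scored four weeks after it happened.
burst_four_weeks_later = [28] * 50
print(decayed_mention_weight(burst_four_weeks_later))
```

With these settings, 50 complaints posted on the same day count as 50 at first and as 12.5 four weeks later. A problem that is still generating fresh complaints keeps a high score, while a one-off cascade fades.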

Mistake 4: Neglecting thread history

A negative sentiment peak three months ago may indicate a fixed issue. A slow decline in mentions may indicate a resolved problem. Always cross-reference sentiment trends against your release history.

Mistake 5: Ignoring positive sentiment

Reddit sentiment analysis often focuses on problems. Users praising your product are providing marketing intelligence and feature differentiation insights. Amplify what Reddit says you do well.
