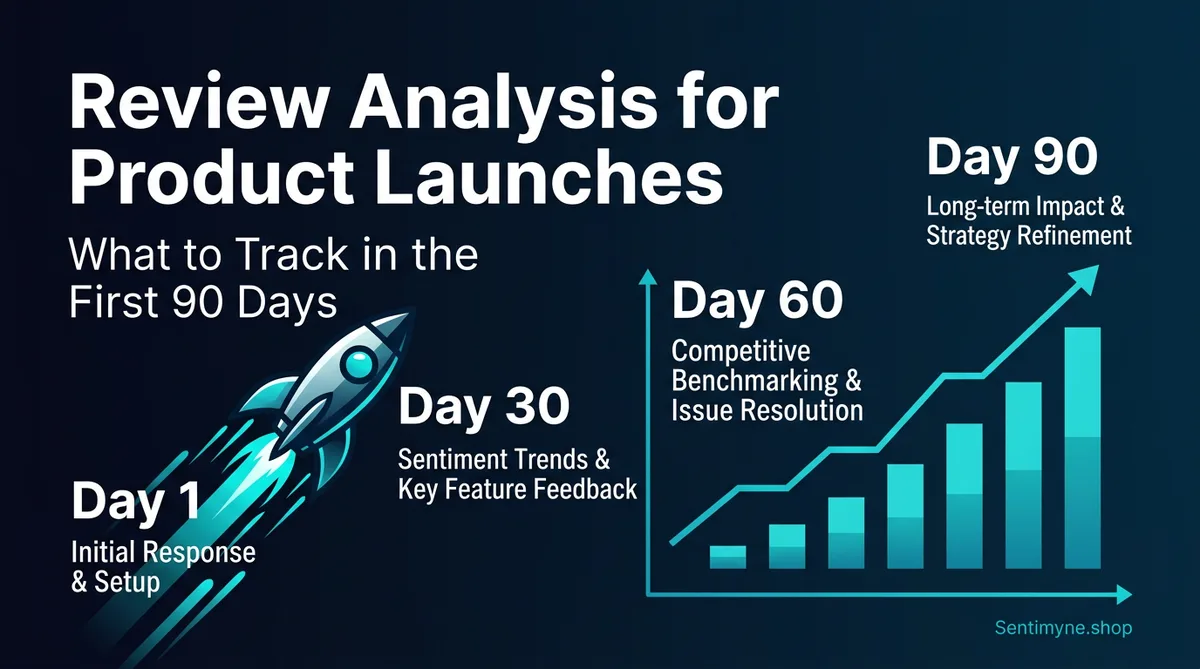

Review Analysis for Product Launches: What to Track in the First 90 Days

A complete 90-day framework for monitoring and analyzing reviews after a product launch. Learn what to track in each phase, when to pivot vs. stay the course, and how AI review analysis accelerates post-launch decisions.

The first reviews after a product launch are some of the most consequential words ever written about your business. They set the trajectory for everything that follows — search rankings, conversion rates, customer expectations, and team morale.

A product that accumulates 15 positive reviews in its first two weeks builds momentum that compounds for months. A product that attracts 5 angry one-star reviews in the same window can enter a downward spiral: low ratings discourage purchases, which reduces review volume, which keeps the rating low.

92% of consumers hesitate to purchase a product with fewer than 4 stars, according to BrightLocal's 2025 consumer survey. For a new product with a small review base, a handful of early negative reviews can tank your average and suppress sales before you've had a chance to build momentum.
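
To make the arithmetic concrete, here is a minimal sketch of how the same two one-star reviews move the average on a small review base versus a large one. The review counts are illustrative assumptions, not data from any platform.

```python
def average_rating(star_counts: dict[int, int]) -> float:
    """Mean star rating from a {stars: number_of_reviews} mapping."""
    total = sum(star_counts.values())
    if total == 0:
        raise ValueError("need at least one review")
    return sum(stars * n for stars, n in star_counts.items()) / total


# Hypothetical new product: 8 five-star reviews plus 2 one-star reviews.
small_base = {5: 8, 1: 2}
# Hypothetical established product: 198 five-star reviews plus the same 2 one-star reviews.
large_base = {5: 198, 1: 2}

print(round(average_rating(small_base), 2))  # 4.2
print(round(average_rating(large_base), 2))  # 4.96
```

Under these assumed numbers, two bad reviews cost the new product 0.8 stars but cost the established product only 0.04.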

The first 90 days after launch aren't just important — they're existential. And the brands that approach post-launch review monitoring with a structured framework dramatically outperform those that wing it.

This guide gives you that framework: week by week, what to watch, what to do, and when to make the hard calls.

Why the First 90 Days Matter

The Algorithmic Impact

On platforms like Amazon, Google, and the App Store, early reviews carry disproportionate weight in ranking algorithms. Amazon's search ranking algorithm, commonly referred to as A9, is widely reported to factor in review velocity (how quickly reviews accumulate), recency, and the trend of ratings over time.

A product that launches with a burst of 4.5+ star reviews signals to the algorithm that it's a quality product deserving of visibility. A product that launches with mixed or negative reviews is pushed down in search results, which makes it harder to generate the sales needed to improve the review base.

The first 30 reviews essentially set your algorithmic floor. Recovering from a poor start requires significantly more effort than maintaining a strong one.

The Social Proof Snowball

Early reviews create a self-reinforcing cycle. Positive early reviews attract more buyers, who leave more reviews, which attracts more buyers. Negative early reviews suppress purchases, which reduces review volume, which locks in a low rating.

Research from the Journal of Marketing shows that the first 10 reviews have up to 10x more influence on a product's long-term rating trajectory than reviews #100-#110. Early reviews shape expectations, influence how subsequent customers perceive the product, and even affect how they write their own reviews.

The Feedback Window

The first 90 days are also when customer feedback is most actionable. Early adopters tend to be more engaged, more articulate, and more invested in the product's success. Their feedback is often specific enough to drive immediate product improvements, if you're paying attention.

After 90 days, the review base becomes large enough that individual reviews have less impact on the overall picture. The themes are set. The narrative is established. Changing course becomes harder.

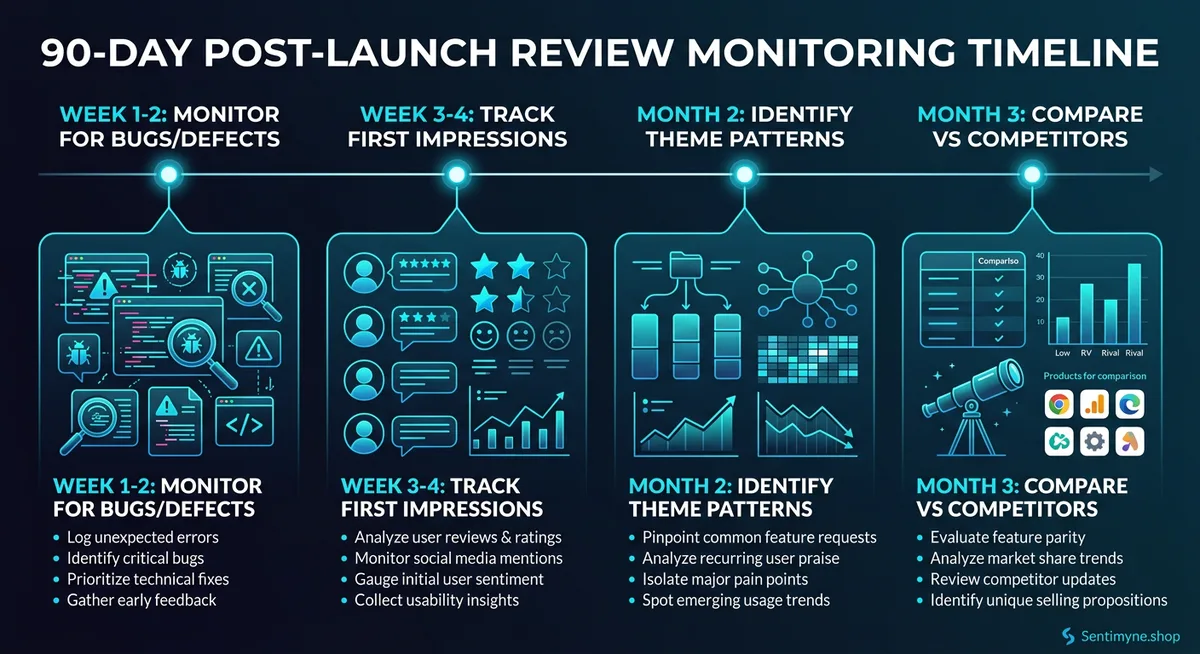

Week 1-2: Defect Detection and Rapid Response

The first two weeks are about survival. Your primary goals: catch any critical issues before they become widespread, and respond to every single review personally.

What to Monitor

Critical defect signals:

- Any mention of the product being broken, defective, or not working as described
- Shipping damage reports (packaging may need improvement)
- Setup or installation problems (documentation may be unclear)
- Compatibility issues (did you miss a use case in testing?)

First impression themes:

- What do customers mention first? The first thing a customer talks about reveals their primary expectation
- Unboxing experience: does reality match the marketing?
- Time-to-value: how long before they experience the core benefit?

What to Do

Respond to every review. Yes, every single one. In the first two weeks, your review base is small enough that this is feasible, and the impact is enormous. Customers who see the brand actively engaging with early reviews are more likely to leave their own review — and more forgiving when issues arise.

For negative reviews:

- Respond within 24 hours
- Acknowledge the specific issue
- Provide a direct resolution (replacement, refund, fix instructions)
- Follow up to confirm the issue was resolved

For positive reviews:

- Thank the reviewer specifically for what they highlighted
- Ask if they'd be willing to share photos or additional detail (builds richer social proof)

Escalation protocol: If more than 2 reviews in the first week mention the same defect, escalate immediately to the product team. A pattern emerging in the first 5-10 reviews likely represents a systemic issue that will affect a significant percentage of customers.

Red Flags

- 3+ reviews mentioning the same defect → potential product recall or urgent fix needed

- Average rating below 3.5 after 10 reviews → activate emergency response plan

- Reviews mentioning "not as advertised" → review your product listing and marketing claims immediately
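
The three red flags above can be checked mechanically once reviews are tagged. The following is a minimal sketch under stated assumptions: the `Review` class and its `defects` field (defect tags assigned by a person or a classifier) are hypothetical, and the thresholds are the ones given in the list.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Review:
    stars: int
    text: str
    # Hypothetical field: defect tags assigned by a human or a classifier.
    defects: list[str] = field(default_factory=list)


def red_flags(reviews: list[Review]) -> list[str]:
    """Return a human-readable list of the red flags that currently apply."""
    flags = []

    # 3+ reviews mentioning the same defect (each defect counted once per review).
    defect_counts = Counter(d for r in reviews for d in set(r.defects))
    for defect, n in sorted(defect_counts.items()):
        if n >= 3:
            flags.append(f"repeated defect: {defect} ({n} reviews)")

    # Average rating below 3.5 once at least 10 reviews are in.
    if len(reviews) >= 10:
        avg = sum(r.stars for r in reviews) / len(reviews)
        if avg < 3.5:
            flags.append(f"low average rating: {avg:.2f}")

    # Any review saying the product is "not as advertised".
    if any("not as advertised" in r.text.lower() for r in reviews):
        flags.append("listing mismatch: 'not as advertised' mentioned")

    return flags
```

Running a check like this daily during the first two weeks keeps the escalation decision from depending on someone noticing the pattern by eye.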

Week 3-4: First Impression Analysis

By week 3, you should have 15-30 reviews (depending on sales volume). This is enough data to start identifying meaningful patterns beyond individual complaints.

What to Monitor

Theme emergence:

- What are the top 3 positive themes? These become your marketing amplification targets
- What are the top 3 negative themes? These become your immediate improvement targets
- Are any themes surprising, either positively or negatively?

Expectation alignment:

- Does customer language match your marketing language? If you're positioning as "easy to use" but reviews say "steep learning curve," there's a dangerous disconnect
- Are customers using the product for the use case you intended? Sometimes customers find unexpected applications, which could be an opportunity or a problem

Comparison mentions:

- Which competitors are reviewers comparing you to? This reveals your true competitive set, which may differ from your assumed one
- How do customers describe the switching experience? What did they use before, and what prompted them to try your product?

What to Do

Run your first structured analysis. With 15-30 reviews, you have enough data for a meaningful Sentimyne SWOT analysis. This gives you a baseline to measure against at 60 and 90 days.

Document your baseline metrics:

- Average rating across all platforms
- Top 5 themes (positive and negative)
- Review volume by platform
- Sentiment distribution (% of 5-star, 4-star, 3-star, 2-star, 1-star)
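
The baseline metrics listed above can be computed in a few lines once reviews are exported. This is a minimal sketch, assuming each review is a dict with hypothetical keys `platform`, `stars`, and `themes` (theme tags you or a tool have already assigned). It is not tied to any specific platform export format.

```python
from collections import Counter


def baseline_metrics(reviews: list[dict]) -> dict:
    """Summarize reviews given as dicts with hypothetical keys:
    'platform' (str), 'stars' (int 1-5), and 'themes' (list of str tags)."""
    n = len(reviews)
    if n == 0:
        raise ValueError("need at least one review")

    star_counts = Counter(r["stars"] for r in reviews)
    theme_counts = Counter(t for r in reviews for t in r.get("themes", []))

    return {
        "average_rating": round(sum(r["stars"] for r in reviews) / n, 2),
        "volume_by_platform": dict(Counter(r["platform"] for r in reviews)),
        # Percentage of reviews at each star level, from 5 stars down to 1.
        "star_distribution_pct": {
            s: round(100 * star_counts[s] / n, 1) for s in range(5, 0, -1)
        },
        "top_themes": theme_counts.most_common(5),
    }
```

Saving the returned dict with a date gives you the snapshot to compare against at 60 and 90 days.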

Adjust your marketing based on review language. If customers consistently describe a benefit you hadn't emphasized, update your product listing, ads, and social content to reflect how real users talk about your product.

Month 2: Pattern Recognition and Iteration

By month 2, you should have 40-100 reviews. This is where pattern analysis becomes statistically meaningful, and where you can start making data-driven product decisions.

What to Monitor

Theme evolution:

- Are the themes from Week 3-4 holding, or have new themes emerged?
- Is the negative theme frequency increasing or decreasing? (If your team has been making fixes, you should see improvement)
- What's the sentiment trend? Is the average rating improving, declining, or stable?

Segment analysis:

- Do different customer segments leave different types of reviews? (Power users vs. beginners, different use cases, different platforms)
- Is there a platform-specific pattern? (e.g., Amazon reviews focus on physical quality while G2 reviews focus on features)

Pre-launch vs. reality:

- Compare the themes in your reviews against your pre-launch assumptions. What did you get right? What surprised you?
- Are customers finding the value proposition you intended, or are they discovering a different one?

What to Do

Run a comparative SWOT analysis. Use Sentimyne to generate a fresh SWOT and compare it to your Week 3-4 baseline. The delta reveals:

- Whether your fixes are working (declining negative themes)
- Whether new issues are emerging (new weakness themes)
- Whether your marketing adjustments are landing (changing strength themes)
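
If you track theme frequency yourself, the delta between the two snapshots is a simple subtraction. The sketch below assumes you have, for each period, a mapping from theme name to the percentage of reviews mentioning it. The function name and input shape are hypothetical and do not reflect how Sentimyne represents its output.

```python
def theme_delta(baseline: dict[str, float], current: dict[str, float]) -> dict[str, float]:
    """Change in the share of reviews mentioning each theme, in percentage points.

    A theme missing from one snapshot is treated as 0% in that snapshot,
    so brand-new themes show up as positive deltas.
    """
    themes = set(baseline) | set(current)
    return {
        t: round(current.get(t, 0.0) - baseline.get(t, 0.0), 1)
        for t in sorted(themes)
    }


# Illustrative numbers only.
week_3_4 = {"battery drain": 30.0, "setup confusion": 20.0}
month_2 = {"battery drain": 12.0, "setup confusion": 22.0, "app crashes": 9.0}
print(theme_delta(week_3_4, month_2))
```

In this made-up example, "battery drain" falls by 18 points (a fix that appears to be working), while "app crashes" appears as a new weakness theme at 9 points.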

Prioritize product improvements. By month 2, you should have enough data to prioritize confidently:

| Priority | Criteria |
|---|---|
| P0 (This week) | Mentioned in 20%+ of negative reviews, causes returns/refunds |
| P1 (This sprint) | Mentioned in 10-20% of negative reviews, impacts satisfaction |
| P2 (Next sprint) | Mentioned in 5-10% of reviews, nice-to-have improvement |
| P3 (Backlog) | Mentioned in <5% of reviews, minor friction |
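
The frequency thresholds in the table translate directly into a lookup. This is a minimal sketch that covers only the percentage criterion. The qualitative criteria (returns, satisfaction impact) still need human judgment, and the choice of denominator (negative reviews or all reviews) is left to the caller. Treating a value of exactly 20% as P0 and exactly 10% as P1 is an assumption about how the overlapping boundaries should resolve.

```python
def priority_for(share_pct: float) -> str:
    """Map the share of reviews mentioning an issue (0-100) to a priority bucket."""
    if not 0 <= share_pct <= 100:
        raise ValueError("share_pct must be between 0 and 100")
    if share_pct >= 20:
        return "P0"  # this week
    if share_pct >= 10:
        return "P1"  # this sprint
    if share_pct >= 5:
        return "P2"  # next sprint
    return "P3"      # backlog


# Illustrative issue shares, not real data.
issues = {"battery drain": 27.0, "setup confusion": 12.5, "box damage": 3.0}
for name, share in issues.items():
    print(name, priority_for(share))
```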

Optimize review generation. If your review volume is below target, implement the strategies from our review generation guide. The mid-launch window is critical — you need enough reviews to establish algorithmic credibility before the initial launch momentum fades.

Month 3: Competitive Benchmarking and Strategic Decisions

By month 3, your review base should be 80-200+ reviews. The initial launch phase is over. Now it's about competitive positioning and long-term strategy.

What to Monitor

Competitive benchmarking:

- How does your SWOT compare to competitors' SWOTs? (Run competitor analyses using Sentimyne)
- Where are you winning? Where are you losing?
- Are customers who switched from competitors satisfied, or are they experiencing a different set of problems?

Trend analysis:

- Plot your average rating over the 90 days. Is it trending up, flat, or declining?
- Are early negative themes resolving (indicating successful product iteration)?
- Are new themes emerging that weren't present in Month 1?
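
One way to answer the "up, flat, or declining" question without eyeballing a chart is to fit a straight line through the weekly average ratings and look at its slope. The sketch below uses an ordinary least-squares slope. The `tolerance` of 0.02 stars per week is an arbitrary assumption you should tune to your own volume.

```python
def rating_trend(weekly_averages: list[float], tolerance: float = 0.02) -> str:
    """Classify the rating trajectory from a list of weekly average ratings.

    Fits a least-squares line (week index vs. average rating) and compares
    the slope, in stars per week, against the tolerance.
    """
    n = len(weekly_averages)
    if n < 2:
        raise ValueError("need at least two weeks of data")

    mean_x = (n - 1) / 2
    mean_y = sum(weekly_averages) / n
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(weekly_averages))
    denominator = sum((x - mean_x) ** 2 for x in range(n))
    slope = numerator / denominator

    if slope > tolerance:
        return "up"
    if slope < -tolerance:
        return "declining"
    return "flat"
```

With few reviews per week the weekly averages are noisy, so a slope near the tolerance is better read as "flat" than as a real change.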

Market positioning signals:

- What language do customers use to describe your product category? (This should inform your SEO and content strategy)
- Where does your product sit in the customer's mental competitive landscape?
- Are customers recommending your product for specific use cases or audiences? (This reveals your natural positioning)

What to Do

Produce a 90-day review intelligence report that synthesizes everything you've learned:

- Executive summary: Overall sentiment, key themes, trajectory

- Strength amplification plan: How to double down on what customers love

- Weakness remediation plan: Status of fixes, remaining priorities

- Competitive positioning: Where you stand vs. competitors based on review data

- Product roadmap inputs: Feature requests and gaps identified through reviews

- Marketing optimization: Language, messaging, and positioning adjustments based on customer feedback

Share the report broadly. Product, marketing, sales, customer success, and leadership should all see this document. Review data is one of the few truly cross-functional intelligence sources — it's relevant to every team.

Setting Up Alerts and Automation

Manual review monitoring doesn't scale. Here's how to automate your post-launch monitoring:

Daily Automated Checks

- New review notifications across all platforms

- Automatic flagging of 1-star and 2-star reviews for immediate response

- Keyword alerts for critical terms (defect, broken, return, refund, not working)
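
The daily checks above amount to a small triage rule applied to each new review. Here is a minimal sketch, assuming reviews arrive as dicts with hypothetical `stars` and `text` keys. How you fetch new reviews from each platform is outside its scope. The term list is the one given above, and matching is by case-insensitive substring, so "defect" also catches "defective" and "return" also catches "returned".

```python
import re

CRITICAL_TERMS = ("defect", "broken", "return", "refund", "not working")
_PATTERN = re.compile("|".join(re.escape(t) for t in CRITICAL_TERMS), re.IGNORECASE)


def triage(review: dict) -> list[str]:
    """Return the reasons a review needs immediate attention (empty list if none)."""
    reasons = []

    # Flag 1-star and 2-star reviews for immediate response.
    if review["stars"] <= 2:
        reasons.append("low rating")

    # Flag any critical keyword, reported once each in alphabetical order.
    hits = sorted({m.group(0).lower() for m in _PATTERN.finditer(review["text"])})
    if hits:
        reasons.append("keywords: " + ", ".join(hits))

    return reasons


print(triage({"stars": 1, "text": "Arrived BROKEN, I want a refund"}))
```

Substring matching will produce some false positives (for example, "I will return to buy another"), which is usually an acceptable trade-off for an alert that a person reviews anyway.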

Weekly Automated Reports

- Review volume by platform

- Average rating trend

- New theme detection

- Competitor review volume comparison

Monthly Deep Analysis

- Full SWOT analysis refresh via Sentimyne

- Comparison against previous months

- Competitive benchmarking update

- Recommendations for product and marketing teams

When to Pivot vs. Stay the Course

The hardest decision in the first 90 days is knowing when negative feedback represents a fixable issue versus a fundamental product-market fit problem. Here's a framework:

Stay the Course When:

- Negative themes are specific and fixable. "The app crashes when I try to export" is a bug. Bugs get fixed. If the core value proposition is landing and the complaints are about execution, you're on the right track.

- The negative-to-positive ratio is improving. Even if you launched with issues, a trend showing fewer complaints over time means your iteration is working.

- Positive reviews describe the exact value proposition you intended. Customers understand what you built and appreciate it — they just want you to polish it.

- Competitors have the same complaints. If every product in your category gets criticized for the same thing, the issue is industry-wide, not product-specific.

Consider Pivoting When:

- Customers describe a different value proposition than you intended. If you built a project management tool but reviews praise it as a "great note-taking app," your market is telling you something. Listen.

- The same fundamental complaint appears in 40%+ of reviews and isn't fixable with a patch. If customers fundamentally disagree with your approach, no amount of iteration fixes a design philosophy mismatch.

- Review volume is extremely low despite adequate marketing. Low review volume means low purchase volume, which may indicate a positioning or market problem deeper than product quality.

- Competitors are solving the same problem better and customers say so explicitly. If reviews consistently say "just use [Competitor] instead," you need to find a different angle.

The 90-day rule of thumb: If your average rating hasn't climbed above 4.0 stars after 90 days of active monitoring, improvement, and response — and you have 50+ reviews — it's time for a serious strategic reassessment.
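
The two quantitative pivot signals in this section (the 40%+ complaint share and the 90-day rating rule) can be written down as an explicit check. This is a minimal sketch: the function and parameter names are hypothetical, and `fixable_with_patch` is a judgment call you supply, not something the code can infer.

```python
def pivot_signals(
    average_rating: float,
    review_count: int,
    days_since_launch: int,
    top_complaint_share_pct: float,
    fixable_with_patch: bool,
) -> list[str]:
    """Return the quantitative pivot signals from this section that currently apply."""
    signals = []

    # Same fundamental complaint in 40%+ of reviews and not fixable with a patch.
    if top_complaint_share_pct >= 40 and not fixable_with_patch:
        signals.append("fundamental complaint in 40%+ of reviews")

    # 90-day rule of thumb: 50+ reviews and the average has not climbed above 4.0.
    if days_since_launch >= 90 and review_count >= 50 and average_rating <= 4.0:
        signals.append("rating not above 4.0 after 90 days")

    return signals
```

An empty list does not mean "stay the course" on its own. The qualitative signals, such as customers describing a different value proposition, still need a human read of the reviews.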

Building Your Post-Launch Review Stack

Here's the toolkit for comprehensive post-launch review monitoring:

- Sentimyne for AI-powered SWOT analysis of your reviews and competitors' reviews — generates structured, actionable intelligence from hundreds of reviews in 60 seconds

- Platform notification settings — Enable email alerts for new reviews on every platform

- Review response templates — Pre-written templates for common positive and negative themes, customized for each response

- Internal Slack/Teams channel — A dedicated channel where new reviews are posted automatically for team visibility

- Monthly reporting template — A standardized format for your 30/60/90-day review intelligence reports

The investment in post-launch review monitoring pays for itself many times over. The brands that treat the first 90 days as a structured intelligence-gathering process build products that get better every month — and review trajectories that compound their success over time.
