Review Analysis Checklist for Product Launches: 15 Things to Monitor

A comprehensive 15-item review analysis checklist organized by launch phase. Covers pre-launch baseline, Week 1 monitoring, Month 1 analysis, and Month 2-3 strategic actions for successful product launches.

You have spent months building the product. The marketing site is polished. The launch email is drafted. Your team is excited. But have you planned what happens when the first reviews start coming in?

Most product teams treat reviews as an afterthought during launches. They check the star rating occasionally, respond to a few negative reviews, and vaguely hope for the best. Meanwhile, the reviews are telling them everything they need to know — which features are landing, which ones are confusing, what messaging is resonating, and what is driving returns or cancellations.

The first 30 days of reviews set the tone for your product's market perception, influence search rankings, and determine whether fence-sitters convert or bounce. This guide provides a 15-item checklist organized into four launch phases.

Why Launch Reviews Deserve Special Attention

They carry disproportionate weight. The first 50-100 reviews establish your baseline rating, which algorithms on Amazon, G2, and the App Store use to determine visibility. A 4.2-star launch has a fundamentally different trajectory than a 3.6-star launch.

They come from your most invested customers. Early adopters and first-day buyers are your most enthusiastic audience. If even they run into issues, mainstream customers, who are usually less patient, will run into them far more often.

They reveal expectation gaps. Your marketing made promises. Your product delivers reality. Launch reviews tell you whether those match.

"The first 30 days of reviews after launch are your real product spec. Everything you thought you built gets rewritten by customer reality."

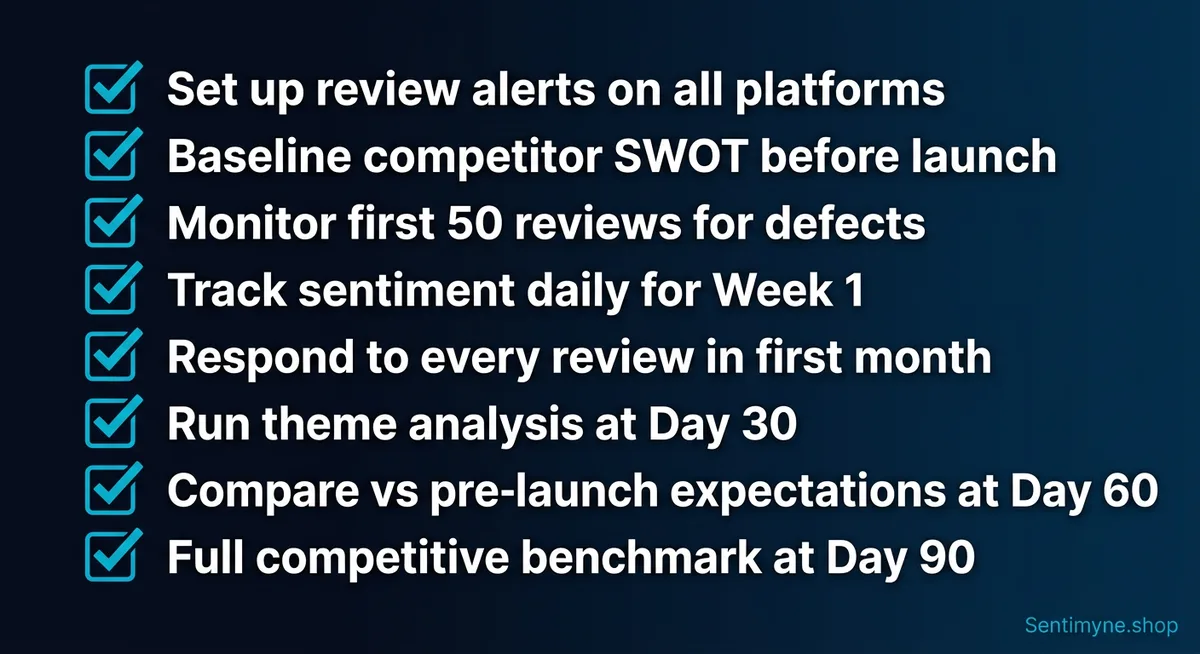

The Complete 15-Item Checklist

Pre-Launch (1-2 Weeks Before)

- [ ] Run baseline competitor SWOT analyses
- [ ] Set up review monitoring and alerts across all platforms
- [ ] Prepare review response templates for common scenarios
- [ ] Identify and prioritize key review platforms

Week 1 (Days 1-7)

- [ ] Monitor every review for product defects and critical bugs
- [ ] Respond to every single review (positive and negative)
- [ ] Track sentiment daily and compare to competitor baselines
- [ ] Flag urgent issues for immediate engineering escalation

Month 1 (Days 8-30)

- [ ] Run comprehensive theme analysis on accumulated reviews
- [ ] Compare actual review themes to pre-launch expectations
- [ ] Adjust marketing messaging based on review language
- [ ] Identify and execute quick wins from customer feedback

Month 2-3 (Days 31-90)

- [ ] Conduct full competitive benchmark using review data
- [ ] Build feature roadmap priorities from review intelligence
- [ ] Share comprehensive launch review insights across the company

---

Pre-Launch: Setting the Foundation

1. Run Baseline Competitor SWOT Analyses

You need to know what "good" looks like in your category before your own reviews arrive. Without a competitive baseline, you cannot tell whether having 20% of your reviews mention "slow onboarding" is normal for your category or a problem specific to your product.

Select 3-5 competitors and run SWOT analyses on their reviews. Using Sentimyne, you can generate a complete SWOT for each competitor in approximately 60 seconds. A 4-competitor baseline takes under 5 minutes.
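To make that comparison concrete, here is a minimal Python sketch that computes the share of reviews mentioning a theme for your product and compares it with the average across competitors. It assumes reviews are available as plain strings and uses naive keyword matching; the function names and sample reviews are hypothetical, and a SWOT tool or NLP model would tag themes far more robustly.

```python
from statistics import mean


def theme_share(reviews: list[str], keyword: str) -> float:
    """Fraction of reviews whose text contains `keyword` (case-insensitive substring match)."""
    if not reviews:
        return 0.0
    hits = sum(1 for text in reviews if keyword.lower() in text.lower())
    return hits / len(reviews)


def compare_to_baseline(own: list[str], competitors: dict[str, list[str]], keyword: str) -> dict[str, float]:
    """Compare your share of reviews mentioning a theme with the average competitor share."""
    own_share = theme_share(own, keyword)
    baseline = mean(theme_share(reviews, keyword) for reviews in competitors.values())
    return {"own": own_share, "baseline": baseline, "delta": own_share - baseline}


# Hypothetical sample data.
own = ["Slow onboarding but great", "Love it", "Onboarding was slow", "Works well", "Fine"]
competitors = {
    "Competitor A": ["Onboarding was easy", "Good", "Okay", "Nice"],
    "Competitor B": ["Bad onboarding", "Great", "Meh", "Solid"],
}
print(compare_to_baseline(own, competitors, "onboarding"))
```

A positive `delta` means the theme comes up more often for you than for the category; whether that is good or bad depends on the sentiment attached to it.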

2. Set Up Review Monitoring and Alerts

Set up monitoring on every relevant platform: your website, app stores, Amazon, G2/Capterra, Trustpilot, and social media. Configure immediate alerts for 1-2 star reviews, daily digests for 3 stars, and weekly summaries for 4-5 stars.
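The alert rules above amount to a mapping from star rating to cadence. A minimal sketch, assuming each incoming review arrives as a dict with a `stars` field; `Cadence`, `route` and `bucket` are hypothetical names, not part of any monitoring product's API:

```python
from collections import defaultdict
from enum import Enum


class Cadence(Enum):
    IMMEDIATE = "immediate alert"
    DAILY = "daily digest"
    WEEKLY = "weekly summary"


def route(stars: int) -> Cadence:
    """Map a star rating to the alert cadence: 1-2 immediate, 3 daily, 4-5 weekly."""
    if not 1 <= stars <= 5:
        raise ValueError(f"star rating must be between 1 and 5, got {stars}")
    if stars <= 2:
        return Cadence.IMMEDIATE
    if stars == 3:
        return Cadence.DAILY
    return Cadence.WEEKLY


def bucket(reviews: list[dict]) -> dict[Cadence, list[dict]]:
    """Group incoming reviews (dicts with a 'stars' key) into one queue per cadence."""
    queues: dict[Cadence, list[dict]] = defaultdict(list)
    for review in reviews:
        queues[route(review["stars"])].append(review)
    return dict(queues)
```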

3. Prepare Review Response Templates

Prepare templates for five scenarios: positive reviews (reference specific praise), mixed reviews (acknowledge criticism), negative product issues (apologize with next steps), expectation mismatches (clarify without condescension), and bug reports (confirm investigation with support channel). Templates are starting points — personalize every response.
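One way to keep templates from going out unpersonalized is to give each one placeholders that can only be filled from the review itself. This sketch uses Python's standard `string.Template`; the template wording and scenario keys are hypothetical examples, not recommended copy:

```python
from string import Template

# Hypothetical starter wording for the five scenarios. Every placeholder must be
# filled with details taken from the specific review being answered.
TEMPLATES = {
    "positive": Template("Thanks, $name! We're glad $detail is working well for you."),
    "mixed": Template("Thanks for the candid review, $name. You're right about $detail, and we're on it."),
    "negative_issue": Template("We're sorry, $name. $detail is not the experience we want. Next step: $next_step"),
    "expectation_mismatch": Template("Thanks, $name. To clarify: $detail"),
    "bug_report": Template("Thanks for reporting $detail, $name. We're investigating. "
                           "Please contact us at $support_channel so we can follow up."),
}


def draft_response(scenario: str, **fields: str) -> str:
    """Fill a scenario template. Template.substitute raises KeyError when a placeholder
    is missing, so a reply cannot be drafted without its review-specific details."""
    return TEMPLATES[scenario].substitute(**fields)


print(draft_response("positive", name="Ana", detail="the CSV import"))
```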

4. Identify and Prioritize Key Review Platforms

A B2B SaaS tool prioritizes G2 and Capterra. A consumer product prioritizes Amazon and Trustpilot. Rank by where competitors have reviews, where customers research, and where reviews appear in Google search. Select your top 2-3 for daily attention during Week 1.
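If you want the platform ranking to be explicit, a weighted score over the three criteria works. The criteria come from the paragraph above; the 0-1 ratings, the weights and the `Platform` fields are hypothetical values you would estimate for your own category:

```python
from dataclasses import dataclass


@dataclass
class Platform:
    name: str
    competitor_presence: float  # 0-1: how heavily competitors are reviewed here
    customer_research: float    # 0-1: how often your buyers research here
    search_visibility: float    # 0-1: how prominently its reviews rank in Google results


# Hypothetical weights for the three criteria; adjust them for your category.
WEIGHTS = (0.3, 0.4, 0.3)


def score(p: Platform) -> float:
    """Weighted sum of the three ranking criteria."""
    w_comp, w_research, w_search = WEIGHTS
    return (w_comp * p.competitor_presence
            + w_research * p.customer_research
            + w_search * p.search_visibility)


def top_platforms(platforms: list[Platform], k: int = 3) -> list[str]:
    """Names of the k highest-scoring platforms, best first."""
    return [p.name for p in sorted(platforms, key=score, reverse=True)[:k]]


# Hypothetical ratings for a B2B SaaS tool.
candidates = [
    Platform("G2", 0.9, 0.9, 0.8),
    Platform("Capterra", 0.7, 0.6, 0.7),
    Platform("Trustpilot", 0.2, 0.3, 0.5),
    Platform("Amazon", 0.1, 0.1, 0.3),
]
print(top_platforms(candidates, k=2))
```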

Week 1: Active Monitoring

5. Monitor Every Review for Product Defects

Read every review as it arrives and create a launch issues tracker. Any issue mentioned in 3+ reviews within 48 hours should be escalated. Distinguish between bugs (broken functionality), UX issues (confusing functionality), and expectation gaps (works but does not match expectations).
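The 3-mentions-in-48-hours rule is easy to automate on top of a launch issues tracker. A minimal sketch, assuming the tracker records one `(issue_key, review_time)` pair per review that mentions a known issue, and reading "within 48 hours" as a rolling window ending now (both assumptions; the function name is hypothetical):

```python
from collections import Counter
from datetime import datetime, timedelta

WINDOW = timedelta(hours=48)  # "within 48 hours", read as a rolling window ending now
THRESHOLD = 3                 # "3+ reviews"


def issues_to_escalate(mentions: list[tuple[str, datetime]], now: datetime) -> list[str]:
    """Return tracker issues mentioned in at least THRESHOLD reviews in the last 48 hours.

    `mentions` holds one (issue_key, review_time) pair per review that mentions an issue.
    """
    recent = Counter(issue for issue, when in mentions if now - WINDOW <= when <= now)
    return sorted(issue for issue, count in recent.items() if count >= THRESHOLD)
```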

6. Respond to Every Single Review

Your responses to early reviews signal to all future reviewers how seriously you take feedback. Target: 1-2 star reviews within 4 hours, 3 stars within 24 hours, 4-5 stars within 48 hours. A thoughtful response posted 6 hours later beats a generic "thanks" posted in 30 minutes.
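To keep the response targets visible, you can compute a deadline for each review and list the ones that have slipped. The targets below come from the checklist; the review dict shape (`id`, `stars`, `posted_at`, `answered`) is a hypothetical assumption:

```python
from datetime import datetime, timedelta

# Response-time targets by star rating, taken from the checklist.
TARGET = {1: timedelta(hours=4), 2: timedelta(hours=4), 3: timedelta(hours=24),
          4: timedelta(hours=48), 5: timedelta(hours=48)}


def respond_by(stars: int, posted_at: datetime) -> datetime:
    """Latest time a response can go out and still meet the target."""
    return posted_at + TARGET[stars]


def overdue(reviews: list[dict], now: datetime) -> list[str]:
    """IDs of unanswered reviews past their target, most overdue first.

    Each review is a dict with 'id', 'stars', 'posted_at' and 'answered' keys.
    """
    late = []
    for r in reviews:
        deadline = respond_by(r["stars"], r["posted_at"])
        if not r["answered"] and now > deadline:
            late.append((now - deadline, r["id"]))
    return [review_id for _, review_id in sorted(late, reverse=True)]
```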

7. Track Sentiment Daily

Track daily: new review count per platform, average rating versus competitor baseline, sentiment distribution, top themes, and any new theme appearing for the first time.
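These daily metrics can be produced from one day's reviews plus the set of themes seen so far. This sketch assumes each review has already been tagged with a platform, star rating, sentiment label and themes (by hand or by a tool); the field names and function name are hypothetical:

```python
from collections import Counter
from statistics import mean


def daily_snapshot(reviews: list[dict], seen_themes: set[str], competitor_avg: float) -> dict:
    """Summarize one day of reviews.

    Each review is a dict with 'platform', 'stars', 'sentiment'
    ('positive', 'neutral' or 'negative') and 'themes' (a list of strings).
    `seen_themes` holds every theme observed on earlier days.
    """
    themes = Counter(theme for r in reviews for theme in r["themes"])
    avg = mean(r["stars"] for r in reviews) if reviews else None
    return {
        "count_by_platform": Counter(r["platform"] for r in reviews),
        "avg_rating": avg,
        "vs_competitor_baseline": None if avg is None else avg - competitor_avg,
        "sentiment_mix": Counter(r["sentiment"] for r in reviews),
        "top_themes": [theme for theme, _ in themes.most_common(3)],
        "new_themes": sorted(set(themes) - seen_themes),
    }
```

Anything in `new_themes` deserves a closer read that day, since a theme's first appearance is often the earliest signal of a new problem.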

8. Flag Urgent Issues for Engineering

Not all issues are equal. Use these escalation criteria:

- Critical (fix within 24 hours): Data loss, security issues, crashes affecting 10%+ of users

- High (fix within 72 hours): Major feature bugs, payment or billing issues, unusable performance

- Medium (fix within 1-2 weeks): UX confusion, minor bugs with workarounds

- Low (add to backlog): Cosmetic issues, edge case bugs, nice-to-have improvements
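The escalation criteria translate directly into a lookup. In this sketch the category names are hypothetical labels a triager might assign, and routing crashes that affect fewer than 10% of users to "high" is an assumption the criteria above do not spell out:

```python
from datetime import timedelta

# Fix-time targets from the escalation criteria; None means "add to backlog".
FIX_WITHIN = {
    "critical": timedelta(hours=24),
    "high": timedelta(hours=72),
    "medium": timedelta(weeks=2),  # upper end of the 1-2 week range
    "low": None,
}

# Hypothetical issue categories a triager might assign in the launch issues tracker.
CRITICAL = {"data_loss", "security"}
HIGH = {"major_feature_bug", "payment", "billing", "unusable_performance"}
MEDIUM = {"ux_confusion", "minor_bug_with_workaround"}


def severity(category: str, affected_share: float = 0.0) -> str:
    """Classify an issue; affected_share is the fraction of users hit (0-1)."""
    if category in CRITICAL:
        return "critical"
    if category == "crash":
        # The criteria make crashes hitting 10%+ of users critical; treating
        # smaller crashes as "high" is an assumption of this sketch.
        return "critical" if affected_share >= 0.10 else "high"
    if category in HIGH:
        return "high"
    if category in MEDIUM:
        return "medium"
    return "low"  # cosmetic issues, edge cases, nice-to-haves
```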

Month 1: Pattern Analysis

9. Run Comprehensive Theme Analysis

After 2-4 weeks, you have enough data to identify statistically meaningful patterns.

| Theme | Mentions | Sentiment score | Action |
|---|---|---|---|
| Ease of setup | 45 | +0.65 | Promote in marketing |
| Core feature quality | 38 | +0.52 | Protect and maintain |
| Mobile experience | 32 | -0.28 | Invest in improvement |
| Documentation gaps | 24 | -0.42 | Fix with content |
| Loading speed | 15 | -0.55 | Fix urgently |
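A table like this is an aggregation over theme-tagged review mentions. A minimal sketch, assuming each mention is already tagged as a `(theme, sentiment_score)` pair on a -1 to +1 scale like the values above; the function name is hypothetical, and deciding the Action column still takes judgment:

```python
from collections import defaultdict
from statistics import mean


def theme_table(mentions: list[tuple[str, float]]) -> list[tuple[str, int, float]]:
    """Aggregate (theme, sentiment_score) pairs into (theme, mentions, avg sentiment) rows.

    Rows are sorted by mention count, highest first. Sentiment scores are assumed
    to come from whatever model or tool tags your reviews, on a -1 to +1 scale.
    """
    scores: dict[str, list[float]] = defaultdict(list)
    for theme, score in mentions:
        scores[theme].append(score)
    rows = [(theme, len(s), round(mean(s), 2)) for theme, s in scores.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)
```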

10. Compare Themes to Pre-Launch Expectations

Month 1 data validates or invalidates your pre-launch assumptions. Look for surprises: a "minor" feature becoming the most praised aspect, a "core" feature barely mentioned, customers using the product for unanticipated use cases, or a "simple" onboarding step described as confusing.
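Some of those surprises can be surfaced mechanically by comparing the themes you expected to dominate with the Month 1 mention counts. The function below is a hypothetical sketch; `top_n` controls how many leading themes are compared, and reading the results still requires context:

```python
def expectation_gaps(expected_rank: list[str], observed_counts: dict[str, int], top_n: int = 3) -> dict[str, list[str]]:
    """Compare the themes you expected to dominate with what reviews actually discuss.

    expected_rank: themes ordered by how central you expected them to be.
    observed_counts: theme -> mention count from the Month 1 analysis.
    """
    by_volume = sorted(observed_counts.items(), key=lambda kv: kv[1], reverse=True)
    observed_top = [theme for theme, _ in by_volume[:top_n]]
    expected_top = expected_rank[:top_n]
    return {
        # Heavily discussed themes you did not expect to matter much.
        "surprise_themes": [t for t in observed_top if t not in expected_top],
        # "Core" themes that reviewers barely mention.
        "quiet_core_themes": [t for t in expected_top if t not in observed_top],
        # Themes you never anticipated at all (e.g. new use cases).
        "unanticipated": sorted(set(observed_counts) - set(expected_rank)),
    }
```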

11. Adjust Marketing Messaging

When 30 reviews describe your product as "the simplest way to do X" and your homepage says "the most powerful platform for X," your messaging is misaligned. Extract the 10 most common positive phrases customers use and adopt their language where it is stronger than your copy.
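Extracting the most common positive phrases can start with simple n-gram counting over highly rated reviews. A rough sketch, assuming reviews are dicts with `stars` and `text`, and treating 4-5 stars as "positive" (an assumption; a sentiment model would be more precise):

```python
import re
from collections import Counter


def top_phrases(reviews: list[dict], n: int = 3, k: int = 10, min_stars: int = 4) -> list[tuple[str, int]]:
    """Return the k most common n-word phrases in reviews rated min_stars or higher."""
    counts: Counter[str] = Counter()
    for review in reviews:
        if review["stars"] < min_stars:
            continue  # only mine language from satisfied customers
        words = re.findall(r"[a-z']+", review["text"].lower())
        counts.update(" ".join(words[i:i + n]) for i in range(len(words) - n + 1))
    return counts.most_common(k)
```

In practice you would also filter out phrases made entirely of stopwords ("it is a") before handing the list to marketing.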

12. Execute Quick Wins

Month 1 reviews usually contain 2-3 improvements that are fast to implement and high-impact. Customers confused by a setting? Add a tooltip and ship it in a day. Cannot find a feature? Add it to the navigation. Asking about a specific use case? Write a help article and publish it today. These quick wins build momentum, show customers you are listening, and improve review sentiment going forward.
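Picking quick wins is essentially filtering candidate fixes by effort and ranking them by how many reviews they would address. The `Improvement` fields, the sample items and the one-day effort cutoff are hypothetical:

```python
from dataclasses import dataclass


@dataclass
class Improvement:
    name: str
    mentions: int        # reviews that mention the underlying problem
    effort_days: float   # rough engineering or content estimate


def quick_wins(items: list[Improvement], max_effort_days: float = 1.0, limit: int = 3) -> list[str]:
    """Low-effort fixes, ordered by how many reviews they would address."""
    cheap = [i for i in items if i.effort_days <= max_effort_days]
    return [i.name for i in sorted(cheap, key=lambda i: i.mentions, reverse=True)[:limit]]


backlog = [
    Improvement("Tooltip on sync setting", 18, 0.5),
    Improvement("Rebuild mobile app", 32, 60),
    Improvement("Add export to navigation", 9, 0.5),
    Improvement("Help article for use case X", 12, 1),
    Improvement("Fix typo on pricing page", 2, 0.1),
]
print(quick_wins(backlog))
```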

Month 2-3: Strategic Actions

13. Conduct Full Competitive Benchmark

With a solid month of review data, benchmark yourself against competitors on equal footing. Re-run SWOT analyses on competitors and your own product. Build the competitive comparison table. Identify where you outperform expectations and where you fall short.

Sentimyne makes this practical — running analyses on your product and four competitors takes under 10 minutes total, with comprehensive data from 12+ platforms for each product.
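If you assemble the comparison table yourself from per-theme sentiment scores, a sketch like the following flags where you lead or trail. The input shape and the ahead/par/behind rule are assumptions for illustration, not a standard benchmarking method:

```python
def benchmark(own: dict[str, float], competitors: dict[str, dict[str, float]]) -> list[dict]:
    """Compare your per-theme sentiment with competitors' scores for the same themes.

    own: theme -> average sentiment for your product.
    competitors: competitor name -> {theme -> average sentiment}.
    Status rule (hypothetical): "ahead" if you beat every competitor, "behind" if
    you trail the competitor average, otherwise "par". Themes no competitor has
    data for are skipped.
    """
    rows = []
    for theme, score in own.items():
        rivals = [scores[theme] for scores in competitors.values() if theme in scores]
        if not rivals:
            continue
        avg = sum(rivals) / len(rivals)
        if score > max(rivals):
            status = "ahead"
        elif score < avg:
            status = "behind"
        else:
            status = "par"
        rows.append({"theme": theme, "own": score, "competitor_avg": round(avg, 2), "status": status})
    return rows
```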

14. Build Feature Roadmap from Review Intelligence

Your pre-launch roadmap was built on assumptions. Now you have real customer data. Validate existing priorities: is the next planned feature actually being requested? Add new priorities from the most frequent feature requests. Reprioritize based on which improvements would address the highest-volume negative themes.

| Priority | Improvement | Review evidence | Impact | Effort |
|---|---|---|---|---|
| P0 | Fix loading speed | 15 mentions, -0.55 sentiment | High | Medium |
| P1 | Improve documentation | 24 mentions, -0.42 sentiment | Moderate | Low |
| P1 | Basic mobile support | 32 mentions, -0.28 sentiment | High | High |
| P2 | Add integration X | 12 mentions, top request | Moderate | Medium |
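To make the reprioritization rule ("address the highest-volume negative themes") repeatable, you can score candidates by review volume and negativity, weighted by impact and effort. The numeric scales, the formula and the sample candidates are hypothetical, and sentiment is assumed to be on a -1 to +1 scale:

```python
from dataclasses import dataclass

# Hypothetical numeric scales for the qualitative Impact and Effort columns.
IMPACT = {"Low": 1, "Moderate": 2, "High": 3}
EFFORT = {"Low": 1, "Medium": 2, "High": 3}


@dataclass
class Candidate:
    name: str
    mentions: int
    sentiment: float  # average sentiment of the related reviews, -1 to +1
    impact: str
    effort: str


def priority_score(c: Candidate) -> float:
    """Review volume x negativity x impact, divided by effort.

    Only negative sentiment counts as "pain", so pure feature requests
    (which are not complaints) score zero here and need a separate measure,
    such as raw request count.
    """
    pain = c.mentions * max(0.0, -c.sentiment)
    return pain * IMPACT[c.impact] / EFFORT[c.effort]


def rank(candidates: list[Candidate]) -> list[str]:
    """Candidate names, highest score first."""
    return [c.name for c in sorted(candidates, key=priority_score, reverse=True)]
```

A formula like this will not always agree with a hand-built list such as the table above, which also weighs urgency, so treat the score as an input to the roadmap discussion rather than a verdict.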

15. Share Insights Company-Wide

Create a Launch Review Report covering:

- Launch health summary: overall sentiment, rating trajectory, and volume vs. expectations
- What customers love: top 5 strengths with supporting quotes
- What needs improvement: top 5 weaknesses with action plans
- Customer language guide for marketing and sales teams
- Competitive position post-launch
- Roadmap implications
- Review response statistics

Distribution plan:

- 15-minute all-hands summary
- 45-minute product and engineering deep-dive
- Marketing messaging brief with customer language
- Sales enablement update with competitive positioning
- Support training on common issues and response templates

How Sentimyne Powers Each Phase

Sentimyne supports every phase of this checklist by generating SWOT analyses from product reviews across 12+ platforms in approximately 60 seconds.

Pre-Launch: Build competitive baselines in minutes instead of weeks. Understand what customers expect in your category before Day 1.

Week 1: Use rapid analysis to check sentiment trends daily. Spot emerging patterns early while they are still fixable.

Month 1: Generate comprehensive theme analyses from accumulated reviews across all platforms. Compare your results against competitor baselines.

Month 2-3: Run quarterly competitive benchmarks sustainably. Update your roadmap with data from review intelligence instead of anecdote and assumption.

The free tier (2 reports/month) supports basic pre-launch competitor analysis. The Pro plan at $29/month gives you the unlimited analyses needed for daily and weekly monitoring during launch. The Team plan at $49/month is ideal for sharing launch insights across product, marketing, sales, and leadership teams.

"We launched our product with this exact checklist. Week 1, we caught a critical UX issue that 7 early reviews mentioned — fixed it before most customers even saw it. Month 1, we discovered customers loved a feature we almost cut from the launch. Month 3, our competitive benchmark showed we had leapfrogged two competitors on customer satisfaction."

Frequently Asked Questions

When should I start this checklist?

Start the pre-launch phase 2-4 weeks before your launch date. The competitor baseline and platform setup work needs to be complete before Day 1 so you can immediately contextualize incoming reviews. If you are still in development, it is never too early to run competitor SWOT analyses — they inform product decisions and set realistic expectations for what launch reviews might look like in your category.

What if we are launching in a new category with no direct competitors?

Benchmark against adjacent products that solve similar problems or serve similar audiences, even if they are not direct competitors. If you are launching a new type of collaboration tool, benchmark against existing collaboration tools, project management software, and communication platforms. The goal is establishing customer expectation baselines for universal themes like onboarding quality, pricing fairness, and support responsiveness.

How do I handle fake or incentivized reviews during launch?

If you offered early access, beta testing, or discounts in exchange for reviews, tag those reviews separately in your analysis. They will skew more positive than organic reviews and should not be mixed into your core sentiment metrics. For competitor fake reviews, look for statistical anomalies — sudden bursts of 5-star reviews with similar language patterns, or reviews from accounts with no other review history. Flag suspicious reviews to the platform when appropriate.
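The burst pattern described above (many 5-star reviews in a short window, with similar wording) can be screened for with a sliding window and a word-overlap score. This is a heuristic sketch: Jaccard similarity on word sets is a deliberately simple stand-in for real text-similarity models, and the window, count and similarity thresholds are hypothetical:

```python
from datetime import datetime, timedelta
from itertools import combinations


def jaccard(a: str, b: str) -> float:
    """Overlap of the word sets of two texts: 0 = no shared words, 1 = same words."""
    wa, wb = set(a.lower().split()), set(b.lower().split())
    union = wa | wb
    return len(wa & wb) / len(union) if union else 0.0


def suspicious_bursts(reviews: list[dict], window: timedelta = timedelta(hours=24),
                      min_count: int = 5, min_similarity: float = 0.6) -> list[tuple[datetime, int]]:
    """Flag windows containing many 5-star reviews with unusually similar wording.

    reviews: dicts with 'posted_at' (datetime), 'stars' and 'text'.
    Returns (window_start, review_count) pairs. Overlapping windows can all be
    flagged for the same burst. All thresholds are hypothetical starting points.
    """
    five_star = sorted((r for r in reviews if r["stars"] == 5), key=lambda r: r["posted_at"])
    flagged = []
    for i, start in enumerate(five_star):
        burst = [r for r in five_star[i:] if r["posted_at"] - start["posted_at"] <= window]
        if len(burst) < max(min_count, 2):
            continue
        pairs = list(combinations(burst, 2))
        similar = sum(1 for a, b in pairs if jaccard(a["text"], b["text"]) >= min_similarity)
        # Flag the window when at least half of all review pairs read alike.
        if similar / len(pairs) >= 0.5:
            flagged.append((start["posted_at"], len(burst)))
    return flagged
```

Flagged windows are leads for manual review, not proof; genuine launch-day enthusiasm can look similar.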

What is the minimum number of reviews needed at each phase?

During Week 1, even 10-15 reviews are worth analyzing qualitatively — each one represents a concentrated data point from your most engaged users. For Month 1 theme analysis, aim for at least 50 reviews for pattern reliability. By Month 2-3, you want 100 or more reviews for statistically meaningful competitive benchmarking. If your review volume is lower than these targets, extend the timeline for each phase rather than skipping phases entirely.

Should I change my product based on the first week of reviews?

Be cautious about making major product changes based on Week 1 data alone. Early reviews come from your most enthusiastic and sometimes most demanding users, who may not represent your broader target market. Limit Week 1 changes to bug fixes and critical issues, and wait for the Month 1 theme analysis before making feature-level decisions based on review patterns. Fundamental flaws such as crashes, data loss, or security vulnerabilities are the clear case for acting immediately, regardless of sample size.
