Indie Hacker Feedback Analysis: Mining Product Hunt and Indie Hackers Comments for SWOT Insight

Indie founders get brutal, valuable feedback from communities like Product Hunt and Indie Hackers. Learn how to systematically extract and organize this feedback into actionable SWOT analysis.

# Indie Hacker Feedback Analysis: Mining Product Hunt and Indie Hackers Comments for SWOT Insight

A Product Hunt launch or an Indie Hackers launch thread can generate anywhere from 100 to 2,000 comments. Much of that is unfiltered, brutally honest feedback. Makers who ignore these comments are ignoring their most valuable focus group.

Yet most indie founders treat launch comments as noise. They count upvotes, celebrate featured status, then move on. They miss the patterns hidden in the comment threads: features users want, pain points competitors miss, gaps in positioning, and confusion about product direction.

This guide shows indie founders how to systematically extract SWOT insight from community feedback in 1-2 hours of analysis per launch.

Why maker community feedback is uniquely valuable

1. Brutal honesty from an informed audience

Product Hunt and Indie Hackers users are makers themselves. They're not marketing-influenced or loyalty-biased. They'll tell you exactly what's broken and why. "This feature is underwhelming" beats any survey.

2. Competitive context included

Commenters naturally mention alternatives: "This does X, but Zapier already does this better." You get free competitive intelligence with no research effort.

3. Feature request clustering is instant

Five different people requesting the same feature = clear signal. One person = noise. Comments make clustering obvious.

4. Sentiment is explicit, not masked

Casual customers soften criticism. Makers say "This is solving the wrong problem" or "Pricing is 3x higher than it should be." Directness lets you take action.

5. Commenter credibility can be checked

If someone critiques your product, you can usually see their other products and comments in their post history. Expert criticism carries different weight than casual dismissal.

The maker feedback landscape

Product Hunt

When: Launch day (48 hours of peak activity), then sustained traffic for 7-30 days

Volume: 200-2,000 comments per launch, depending on category and hype

Audience: Early adopters, makers, tool hunters, startup community

Feedback style: Mix of hype, detailed critique, feature requests, competitive comparisons

Signal quality: HIGH — curated audience, real users, transparency of identity

Bias: Skews toward early-adopter preferences (advanced users, integration-first, power users). Casual users underrepresented.

Indie Hackers

When: Launch thread (7-14 days of active discussion), then longer decay

Volume: 100-500 comments, depending on category

Audience: Indie founders, bootstrapped business builders, side-project makers, solopreneurs

Feedback style: Tactical feedback ("how are you monetizing?"), business model critique, founder-to-founder advice

Signal quality: HIGH — founder peers, business-model-aware critique, sustainable growth mindset

Bias: Focuses on monetization, business model, long-term viability over features.

Twitter/X #buildinpublic

When: Throughout launch day and subsequent weeks

Volume: 50-500 replies, depends on follower count and virality

Audience: Mix of maker community and general audience

Feedback style: Casual, short-form, often supportive but less detailed

Signal quality: MEDIUM — mix of expert and casual feedback, harder to filter signal

Use: Secondary channel; monitor for viral criticism or praise.

Systematic indie hacker feedback analysis framework

Step 1: Define your feedback collection scope

By platform:

- Product Hunt comments (primary: 48-hour launch window + ongoing)
- Indie Hackers launch thread (primary: 7-14 day discussion window)
- Twitter replies to launch tweet (secondary: virality signals)
- Email feedback from direct signups (tertiary: highly motivated users)

By feedback source:

- Direct feature requests ("I'd love if you added...")
- Competitive context ("This is nice, but Zapier already does...")
- Problem validation ("I had this exact problem last month")
- Business model questions ("How do you plan to monetize?")
- Positioning critique ("You're positioning this as [X], but I'd use it for [Y]")
- Technical critique ("Would this work with [integration]?")
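
The feedback categories listed above can be tagged with a rough first-pass keyword filter before you read anything closely. The sketch below is one possible approach, not a definitive classifier: the pattern lists and the `classify_comment` name are assumptions made for illustration, loosely modeled on the example phrasings, and they will need tuning against your own thread.

```python
import re

# Hypothetical keyword patterns, loosely based on the example phrasings above.
# They are illustrative only and far from exhaustive.
FEEDBACK_PATTERNS = {
    "feature_request": [r"i'd love if", r"would love", r"please add"],
    "competitive_context": [r"already does", r"instead of", r"compared? to"],
    "problem_validation": [r"exact problem", r"been looking for"],
    "business_model": [r"monetiz", r"pricing", r"sustainable"],
    "positioning": [r"positioning", r"not sure what"],
    "technical": [r"work with", r"integrat", r"\bapi\b"],
}


def classify_comment(text):
    """Return every feedback type whose patterns appear in the comment."""
    # Lowercase and normalize curly apostrophes so "I’d" matches "i'd".
    lowered = text.lower().replace("\u2019", "'")
    labels = [
        label
        for label, patterns in FEEDBACK_PATTERNS.items()
        if any(re.search(pattern, lowered) for pattern in patterns)
    ]
    return labels or ["unclassified"]


if __name__ == "__main__":
    samples = [
        "I'd love if you added CSV export",
        "This is nice, but Zapier already does this better.",
        "Congrats on the launch!",
    ]
    for comment in samples:
        print(classify_comment(comment), "<-", comment)
```

A comment can carry more than one label, which is intentional: a single reply often mixes problem validation with a technical question. Anything left as `unclassified` still deserves a manual read.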

Step 2: Extract feedback systematically

Manual extraction for smaller launches (< 300 comments):

Open Product Hunt / Indie Hackers thread and go through each comment (sorted by "best" first):

| Comment type | What to record | Example and how to log it |
|---|---|---|
| Positive with detail | Core strength + who found it valuable | "This solves problem X perfectly for [use case]" = one strength, one audience validation |
| Negative with detail | Specific weakness + suggested fix | "Missing [feature] makes it only 50% useful for my workflow" = one weakness, one improvement direction |
| Question | What's unclear about your product | "How does this compare to [alternative]?" = positioning clarity gap |
| Feature request | Who wants it, how often requested | "Need [feature] for [use case]" = prioritize if multiple requests |
| Switching language | Why they'd abandon you | "I'd use this instead of [competitor] if..." = competitive threat, improvement needed |

Faster extraction (< 1 hour, if overwhelmed with volume):

- Sort comments by "best" (algos surface valuable ones)

- Read top 50 comments only (diminishing returns after)

- Note: every feature request, every competitive mention, every "problem validation"

- Skip: pure hype comments, off-topic discussions
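
If the comments are already in a list, the shortcut above can be approximated in a few lines. This is a sketch under stated assumptions: each comment is a dict with hypothetical `text` and `votes` fields, vote count stands in for the platform's "best" ordering, and the hype test (a short comment containing a stock phrase) is a crude heuristic rather than a real sentiment model.

```python
# Assumed stock phrases that mark a comment as hype when nothing else is said.
HYPE_PHRASES = ("congrats", "congratulations", "great launch", "good luck", "love it")


def is_pure_hype(text, max_words=8):
    """Treat a short comment containing a hype phrase as pure hype."""
    lowered = text.lower()
    is_short = len(lowered.split()) <= max_words
    return is_short and any(phrase in lowered for phrase in HYPE_PHRASES)


def triage(comments, limit=50):
    """Keep the top `limit` comments by votes, then drop pure hype."""
    ranked = sorted(comments, key=lambda c: c["votes"], reverse=True)[:limit]
    return [c for c in ranked if not is_pure_hype(c["text"])]


if __name__ == "__main__":
    sample = [
        {"text": "Congrats on the launch!", "votes": 40},
        {"text": "Love it, but no export to CSV makes it hard to use "
                 "for my weekly reporting workflow", "votes": 12},
        {"text": "Setup took 5 minutes, incredibly intuitive", "votes": 3},
    ]
    for comment in triage(sample, limit=2):
        print(comment["votes"], comment["text"])
```

The word-count guard matters: "Love it, but no export to CSV..." opens with a hype phrase yet carries a concrete weakness, so it is kept.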

Tool option for volume management:

Copy the Product Hunt comments into a spreadsheet (PH allows export), then use Claude or ChatGPT to classify sentiment and extract themes. Cost: roughly $0.10-0.30 per launch.

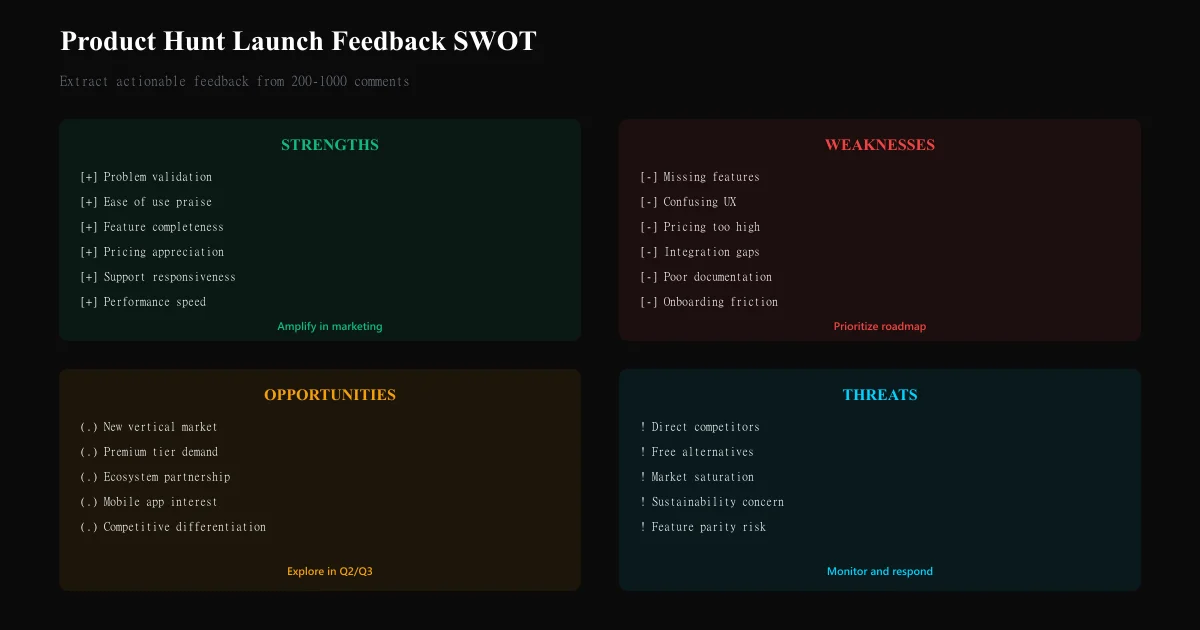

Step 3: Categorize feedback into SWOT quadrants

Group extracted feedback into:

STRENGTHS — What's working

| Strength theme | Example comment | How to amplify |
|---|---|---|
| Problem validation | "I've been looking for exactly this for 2 years" | Feature this use case in marketing |
| Ease of use | "Setup took 5 minutes, incredibly intuitive" | Lead with simplicity in positioning |
| Feature completeness | "Does X, Y, and Z out of the box" | Detail these in product tour |
| Pricing fairness | "This price point is reasonable for the value" | Maintain pricing, avoid creep |
| Speed/performance | "Works instantly, no lag whatsoever" | Benchmark and maintain performance |

WEAKNESSES — What's not working

| Weakness theme | Example comment | How to address |
|---|---|---|
| Missing feature | "Love it, but no export to CSV" | Roadmap priority if multiple requests |
| Friction in workflow | "Takes too long to set up integrations" | UX improvement, pre-built integrations |
| Pricing barrier | "Great tool but $50/mo is 2x what I'd pay" | Pricing tier review or freemium option |
| Integration gap | "Doesn't talk to Zapier" | Technical roadmap priority |
| Positioning confusion | "I'm not sure what problem this solves" | Marketing clarity improvement |

OPPORTUNITIES — What could be next

| Opportunity | Example comment | Growth potential |
|---|---|---|
| New vertical | "This would be amazing for [industry]" | Vertical-specific marketing, feature emphasis |
| Feature gap in category | "Competitors have [feature], you don't" | Competitive roadmap priority |
| Monetization model | "I'd pay more for [advanced tier]" | Premium tier research |
| Platform expansion | "Would love mobile version" | Platform roadmap consideration |
| Ecosystem play | "Would be perfect if integrated with [ecosystem]" | Partnership or native integration |

THREATS — What could kill you

| Threat | Example comment | Risk level | Mitigation |
|---|---|---|---|
| Direct competitor superiority | "Zapier does this better and cheaper" | HIGH | Feature roadmap, pricing audit |
| Alternative solution | "I'll just use [no-code tool] instead" | MEDIUM | Positioning clarity, ease-of-use focus |
| Market saturation | "There are 10 tools like this already" | MEDIUM | Differentiation, specific niche focus |
| Business model skepticism | "How is this sustainable at this price?" | MEDIUM | Transparent about margins, long-term vision |
| Free alternative | "I can build this in [no-code tool] for free" | HIGH | Time-to-value, specialization focus |
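
Once each comment has a theme label, sorting into quadrants is a lookup. The sketch below assumes you have hand-tagged comments as `(theme, text)` pairs; the snake_case theme keys and the `build_swot` helper are hypothetical names that mirror a subset of the rows in the four tables above.

```python
# Theme-to-quadrant lookup mirroring a subset of the tables above.
# The keys are hypothetical labels; extend the dict with your own themes.
THEME_TO_QUADRANT = {
    "problem_validation": "strengths",
    "ease_of_use": "strengths",
    "missing_feature": "weaknesses",
    "pricing_barrier": "weaknesses",
    "new_vertical": "opportunities",
    "platform_expansion": "opportunities",
    "competitor_superiority": "threats",
    "free_alternative": "threats",
}

QUADRANTS = ("strengths", "weaknesses", "opportunities", "threats")


def build_swot(tagged_comments):
    """Group (theme, text) pairs into the four SWOT quadrants."""
    swot = {quadrant: [] for quadrant in QUADRANTS}
    unmapped = []
    for theme, text in tagged_comments:
        quadrant = THEME_TO_QUADRANT.get(theme)
        if quadrant is None:
            unmapped.append((theme, text))  # Keep for manual review.
        else:
            swot[quadrant].append(text)
    return swot, unmapped


if __name__ == "__main__":
    tagged = [
        ("ease_of_use", "Setup took 5 minutes, incredibly intuitive"),
        ("missing_feature", "Love it, but no export to CSV"),
        ("platform_expansion", "Would love mobile version"),
        ("competitor_superiority", "Zapier does this better and cheaper"),
    ]
    swot, unmapped = build_swot(tagged)
    for quadrant in QUADRANTS:
        print(quadrant.upper(), swot[quadrant])
```

Returning the unmapped pairs separately keeps unfamiliar themes visible instead of silently dropping them.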

Step 4: Identify feature request clustering

Track which features are mentioned in multiple comments:

| Feature request | Count of requests | Sentiment intensity | Urgency signal | Action |
|---|---|---|---|---|
| CSV export | 7 | Medium ("would be nice") | LOW | Backlog |
| Zapier integration | 12 | High ("essential for my use case") | MEDIUM | Near-term roadmap |
| Mobile app | 4 | Medium | LOW | Future consideration |
| Custom fields | 6 | High ("blocking for my workflow") | MEDIUM | Prioritize |
| User permissions/teams | 8 | High ("need this for work team") | HIGH | Prioritize |

Decision rule: Feature with 5+ requests = validate demand, add to roadmap. Feature with 3-4 requests = backlog. Feature with 1-2 requests = monitor but don't prioritize yet.
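
The counting itself is easy to automate once each request has been normalized to a feature name. A minimal sketch, assuming one list entry per requesting comment: the 5+ and 1-2 thresholds come from the decision rule, the 3-4 "backlog" bucket is borrowed from Tier 2 in Step 6, and the function names and sample counts are made up for illustration.

```python
from collections import Counter


def decide(count):
    """Map a request count to an action using the thresholds described here."""
    if count >= 5:
        return "validate demand, add to roadmap"
    if count >= 3:
        return "backlog"
    return "monitor"


def cluster_requests(requests):
    """Count normalized feature names and attach an action to each."""
    counts = Counter(name.strip().lower() for name in requests)
    return [(feature, n, decide(n)) for feature, n in counts.most_common()]


if __name__ == "__main__":
    # Made-up sample: one entry per comment that requested the feature.
    requests = (
        ["Zapier integration"] * 12
        + ["CSV export"] * 7
        + ["Mobile app"] * 4
        + ["Custom fields"]
    )
    for feature, n, action in cluster_requests(requests):
        print(f"{feature}: {n} requests -> {action}")
```

Raw counts are only half of the table above. Sentiment intensity ("blocking for my workflow") still has to be judged by reading the comments.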

Step 5: Competitive analysis from comments

Extract competitive mentions:

| Competitor | What they excel at (per comments) | What they're weak at (per comments) | Your advantage |
|---|---|---|---|
| Zapier | Integrations, ecosystem | Price, complexity, learning curve | Simplicity, affordability, specific use case |
| [Competitor A] | Fast setup, UI | Limited integrations, pricing | Your superior integration story |
| [Competitor B] | Feature completeness | Support responsiveness, UX | Your customer service or UX ease |

This is free competitive intelligence. Use it for positioning.
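
Pulling the raw competitive mentions out of a thread can be sketched as a simple name match. The `competitor_mentions` helper below is hypothetical, and it assumes you supply the list of competitor names yourself. It only finds the comments; deciding what each one says about strengths and weaknesses is still a manual read.

```python
import re
from collections import defaultdict


def competitor_mentions(comments, competitors):
    """Return {competitor: [comments mentioning it]}, matched case-insensitively."""
    mentions = defaultdict(list)
    for text in comments:
        for name in competitors:
            # Word boundaries avoid matching a name inside a longer word.
            pattern = rf"\b{re.escape(name)}\b"
            if re.search(pattern, text, flags=re.IGNORECASE):
                mentions[name].append(text)
    return dict(mentions)


if __name__ == "__main__":
    comments = [
        "Zapier does this better and cheaper",
        "This is nice, but zapier already does this",
        "There are 10 tools like this already",
    ]
    # "Competitor A" is a placeholder name, as in the table above.
    found = competitor_mentions(comments, ["Zapier", "Competitor A"])
    for name, quotes in found.items():
        print(name, len(quotes), quotes)
```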

Step 6: Synthesize into 90-day roadmap priorities

Based on feedback analysis:

Tier 1 (Do next, 30 days):

- Feature requests with 5+ mentions OR "blocking" language
- Weakness fixes that multiple commenters cite as deal-breaker
- Competitive response (if someone says "I'd use you instead of Zapier if [feature]")

Tier 2 (Backlog, 60-90 days):

- Feature requests with 3-4 mentions
- Strength amplification (features to highlight in marketing)
- Opportunity exploration (new verticals, pricing tiers)

Tier 3 (Future, 90+ days):

- Single feature requests
- Long-tail nice-to-haves
- Threat monitoring (has competitor threat grown since launch?)
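
For feature requests specifically, the tiering rule can be written down as a small function. This is a sketch rather than a complete prioritization model: it covers only the mention-count and "blocking" language criteria, the `assign_tier` name and the word list are assumptions, and it treats anything below three mentions as Tier 3.

```python
# Assumed markers of "blocking" language; extend with phrases from your thread.
BLOCKING_WORDS = ("blocking", "deal-breaker", "deal breaker")


def assign_tier(mentions, quotes):
    """Return 1, 2, or 3 for a feature request.

    mentions: number of independent comments requesting the feature
    quotes:   sample comment texts for that feature
    """
    has_blocking = any(
        word in quote.lower() for quote in quotes for word in BLOCKING_WORDS
    )
    if mentions >= 5 or has_blocking:
        return 1  # Do next, 30 days
    if mentions >= 3:
        return 2  # Backlog, 60-90 days
    return 3  # Future, 90+ days


if __name__ == "__main__":
    print(assign_tier(6, ["blocking for my workflow"]))  # high count
    print(assign_tier(2, ["This is blocking for me"]))   # blocking language
    print(assign_tier(4, ["would be nice"]))
    print(assign_tier(1, ["nice to have"]))
```

Letting "blocking" language override a low count is a deliberate choice here: it follows the "OR" in Tier 1, so a single blocked user can promote a request. Use a higher bar if that proves too noisy.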

Indie hacker feedback vs. enterprise customer feedback

| Source | Feedback style | Actionability | Bias |
|---|---|---|---|
| Maker community (PH, IH) | Tactical, specific, brutally honest | High (concrete feature requests, specific pain points) | Early adopter preference; over-weight technical users |
| Enterprise customer calls | Strategic, abstract, polite | Medium (need to translate to features) | Enterprise-specific needs; miss consumer use cases |
| Support tickets | Problem-focused, reactive | Medium-High (addresses current pain) | Only problems customers care enough to report |
| User surveys | Broad but shallow | Low (abstract preferences) | Biased toward survey-takers, generic questions |

Best practice for indie makers: Treat maker community feedback as your primary signal. It's real-time, actionable, and from your target audience.

Building your indie feedback dashboard

Post-launch (Week 1)

- Collect all Product Hunt comments (download CSV)

- Extract feedback into spreadsheet or Notion (30-45 mins)

- Categorize into SWOT (30 mins)

- Identify top 3 feature requests and top 1 threat (15 mins)

- Total time: 1.5-2 hours

Post-launch (Week 2)

Collect Indie Hackers comments and repeat extraction.

Monthly review

- Track feature requests over time (is demand consistent across launches/updates?)

- Monitor competitive threat mentions (are competitors growing stronger in user perception?)

- Validate which shipped features addressed community feedback (did feedback correlate with revenue impact?)
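
Tracking demand over time amounts to comparing request counts between two review periods. A minimal sketch, assuming you keep each period's counts as a plain dict of feature name to request count; the `request_trend` helper and the sample numbers are hypothetical.

```python
def request_trend(previous, current):
    """Return the change in request count per feature between two periods."""
    features = sorted(set(previous) | set(current))
    return {
        feature: current.get(feature, 0) - previous.get(feature, 0)
        for feature in features
    }


if __name__ == "__main__":
    # Made-up counts from two consecutive monthly reviews.
    last_month = {"zapier integration": 12, "csv export": 7}
    this_month = {"zapier integration": 15, "csv export": 2, "mobile app": 4}
    for feature, delta in request_trend(last_month, this_month).items():
        print(f"{feature}: {delta:+d}")
```

A feature that keeps climbing across reviews shows consistent demand. One that drops to zero after you ship it suggests the release addressed the feedback.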

Common indie feedback analysis mistakes

Mistake 1: Only reading top-voted comments

The most insightful comments aren't always the most liked. Upvotes correlate with hype, not insight. Read a mix: the "best"-sorted view plus a chronological pass.

Mistake 2: Confusing feature requests with feature necessity

Ten people wanting feature X doesn't mean they'll stay if you build it. Understand why they want it. Are they already solving the problem another way? Will it actually drive retention?

Mistake 3: Ignoring competitive mentions

Every "I'd use you if you had X like [competitor]" is free competitive intelligence. These are switching criteria. Prioritize them.

Mistake 4: Over-investing in single-commenter feedback

One detailed critique is not a signal on its own. Clustering matters. Wait for 2-3 independent mentions before prioritizing.

Mistake 5: Not following up with commenters

Some comments are questions in disguise. DM commenters who asked detailed questions. You'll learn use cases you didn't anticipate.
