SaaS Review Analysis: The Complete Guide to Software Review Intelligence

Master SaaS review analysis across G2, Capterra, TrustRadius, Product Hunt, and more. Learn frameworks for onboarding sentiment, feature satisfaction, pricing perception, competitive positioning, and product roadmap intelligence from software reviews.

Software reviews are the highest-signal feedback any SaaS company can access. Unlike consumer product reviews where someone rates a pair of shoes after wearing them once, SaaS reviews come from people who spend 40 hours a week inside your product. They have opinions about your onboarding flow, your API documentation, your pricing tiers, and how your customer success team handled their last ticket. That level of detail is extraordinary — and most SaaS companies barely scratch the surface of what their reviews are telling them.

The B2B software review ecosystem has exploded. G2 alone hosts over 2.4 million verified reviews across 170,000+ software products. Capterra, TrustRadius, Product Hunt, and a constellation of vertical-specific platforms add millions more. Each review is a miniature case study written by someone who chose your product, implemented it, used it daily, and then took the time to document their experience.

Ignoring this data is like running a SaaS company with your analytics dashboard turned off. This guide covers how to extract real intelligence from software reviews — the kind that feeds product roadmaps, sharpens sales decks, and reveals exactly where competitors are vulnerable.

Why SaaS Reviews Are Uniquely Valuable

Not all reviews carry equal weight. SaaS reviews have specific characteristics that make them dramatically more useful than reviews in most other industries.

High Intent, High Detail

A person reviewing a SaaS product has typically gone through a vendor evaluation, a sales process, an implementation, and weeks or months of daily usage. They are reviewing from deep experience, not a surface-level impression. The average G2 review runs 120-180 words — far longer than the typical Amazon product review — and many include structured assessments of specific features, support quality, and ROI.

This means SaaS reviews contain information you would normally need to conduct formal customer interviews to obtain. The difference is scale: instead of 15 customer interviews per quarter, you have access to hundreds or thousands of detailed accounts.

Comparative by Nature

SaaS buyers are comparison shoppers. Most software reviews explicitly mention competitors, describe migration experiences, and rank products against alternatives. On G2, reviewers are prompted to list which products they evaluated and which they switched from. This makes SaaS reviews a natural source of competitive intelligence that simply does not exist in most industries.

Roughly 47% of G2 reviews mention at least one competing product by name, making every review potentially a win/loss data point.

Decision-Influencing at Scale

The numbers are stark:

| Buying Stage | Percentage Using Reviews | Primary Platform |
|---|---|---|
| Initial research | 92% | G2, Google |
| Shortlist creation | 84% | G2, Capterra |
| Final vendor selection | 67% | G2, TrustRadius |
| Renewal evaluation | 41% | G2, Reddit |
| Expansion decisions | 38% | Internal + G2 |

According to G2's own research, 84% of B2B buyers consult review sites during their purchasing process, and 72% say reviews are more trustworthy than vendor-provided content. For enterprise deals above $50K ARR, TrustRadius reports that reviews influence 62% of purchase decisions.

"In B2B SaaS, your reviews are not just social proof. They are the unfiltered pitch your customers deliver to prospects on your behalf — and you have no control over the script."

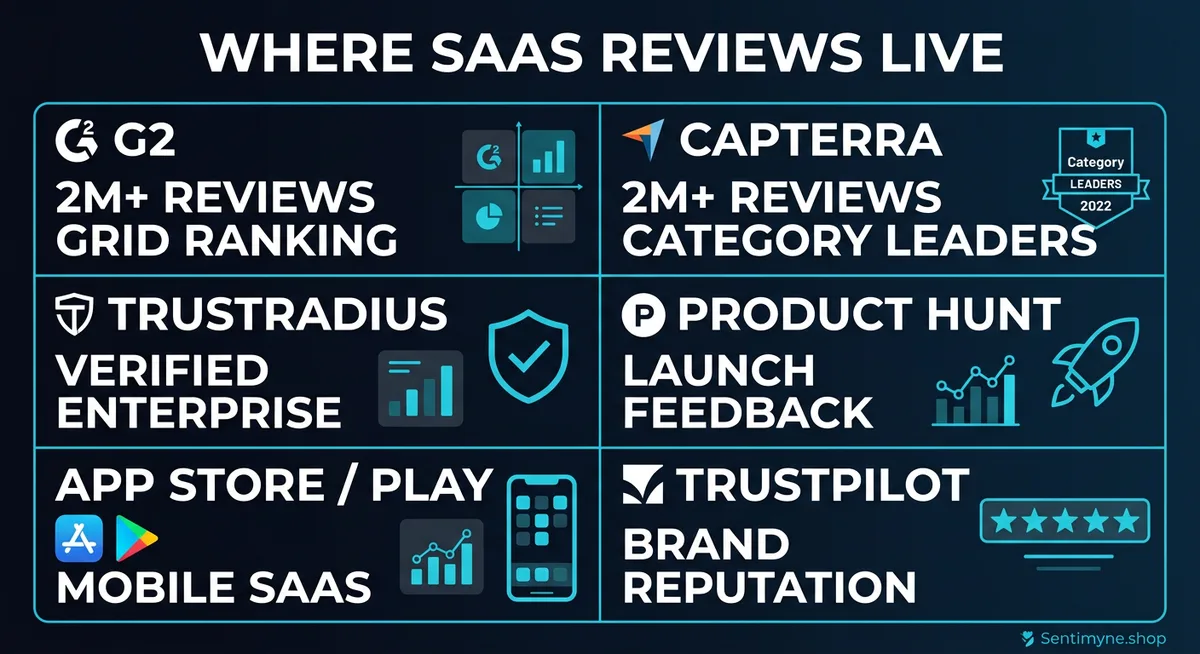

Where SaaS Reviews Live: Platform-by-Platform Analysis

Understanding the ecosystem is the first step toward systematic analysis. Each platform attracts different reviewer demographics and surfaces different types of feedback.

G2 — The Market Leader

G2 is the largest B2B software review platform with over 2.4 million reviews. Its structured review format asks users to describe what they like, what they dislike, and what problems the product solves. Reviews are verified through LinkedIn authentication, making them relatively trustworthy.

What G2 reviews reveal best:

- Feature-level satisfaction and gaps

- Competitive switching patterns

- Segment-specific feedback (by company size, industry, role)

- Implementation difficulty

- Support quality perception

G2's Grid positioning (Leaders, High Performers, Contenders, Niche) is driven in large part by review data, making it a high-stakes platform for competitive positioning.

Capterra — SMB-Focused Intelligence

Capterra skews toward small and mid-market buyers. Its reviews tend to be shorter and more focused on ease of use, pricing, and quick-win features. If your ICP is SMB, Capterra reviews are often more relevant than G2.

What Capterra reviews reveal best:

- Ease-of-use sentiment for non-technical buyers

- Price sensitivity signals

- Quick-start vs. complex setup feedback

- Feature comparison for smaller teams

TrustRadius — Enterprise Depth

TrustRadius focuses on enterprise buyers and requires longer, more detailed reviews. The average TrustRadius review runs 400+ words — nearly triple G2's average. These reviews often discuss procurement processes, enterprise integrations, and long-term ROI.

What TrustRadius reviews reveal best:

- Enterprise buying criteria and procurement friction

- Integration ecosystem requirements

- Long-term product evolution sentiment

- Vendor relationship quality

- ROI and business impact narratives

Product Hunt — Launch and Early Adoption Signals

Product Hunt reviews are different in kind. They capture first impressions from tech-savvy early adopters, often on launch day. While not representative of mature product sentiment, they reveal how your product lands with technically sophisticated audiences and what generates initial excitement or skepticism.

App Store and Google Play — Mobile and Extension Feedback

For SaaS products with mobile apps or browser extensions, app store reviews capture a specific subset of the user experience. These reviews tend to focus on bugs, UX friction, offline capability, and notification preferences — details that rarely surface in G2 reviews.

Trustpilot and Reddit — Unfiltered Opinions

Trustpilot captures customer sentiment from users who may not frequent B2B review sites. Reddit threads in subreddits like r/SaaS, r/startups, and industry-specific communities contain candid comparisons that are less filtered than formal review platforms.

The SaaS Review Analysis Framework

Analyzing SaaS reviews requires a structured approach. Raw reviews are unstructured text — to extract value, you need a consistent framework applied across all platforms.

Dimension 1: Onboarding Sentiment

Onboarding is where SaaS products are most vulnerable. First impressions during setup and initial use disproportionately influence the overall review score. Research from Wyzowl indicates that 86% of users say they would be more likely to stay loyal to a product that invests in onboarding content.

When analyzing onboarding sentiment, look for:

- Time to first value — How quickly do reviewers report getting useful output?

- Setup complexity — Are users complaining about configuration, data migration, or integration setup?

- Documentation quality — Do reviewers mention help docs, tutorials, or onboarding calls?

- Hand-holding vs. self-serve — Are users who got dedicated onboarding support happier than those who self-served?

Analysis approach: Filter reviews that mention keywords like "setup," "onboarding," "getting started," "implementation," "migration," and "first week." Categorize sentiment for each into positive, neutral, or negative. Track over time — deteriorating onboarding sentiment often precedes churn spikes by 2-3 months.
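The filtering step can be sketched in a few lines of Python. This is a minimal illustration, not a full pipeline: the keyword list comes from the paragraph above, while the function name `onboarding_mentions` and the sample reviews are hypothetical. Sentiment classification would be a separate step applied to the filtered reviews.

```python
import re

# Keywords taken from the analysis approach above; extend for your own product.
ONBOARDING_KEYWORDS = [
    "setup", "onboarding", "getting started",
    "implementation", "migration", "first week",
]

# One case-insensitive pattern with word boundaries, so a keyword is not
# matched inside a longer unrelated word.
_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(k) for k in ONBOARDING_KEYWORDS) + r")\b",
    re.IGNORECASE,
)


def onboarding_mentions(reviews):
    """Return (review, matched_keywords) pairs for reviews that mention onboarding."""
    results = []
    for text in reviews:
        matches = sorted({m.lower() for m in _PATTERN.findall(text)})
        if matches:
            results.append((text, matches))
    return results


# Hypothetical sample reviews, for illustration only.
sample_reviews = [
    "Setup took two days, but the onboarding call was great.",
    "Reporting is flexible and the API is well documented.",
    "Data migration was painful during our first week.",
]

for text, keywords in onboarding_mentions(sample_reviews):
    print(keywords, "->", text)
```

Keyword matching is deliberately crude. It is good enough to build the onboarding subset you then score for sentiment and track month over month.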

Dimension 2: Feature Satisfaction

Feature-level sentiment is the most granular intelligence SaaS reviews provide. Reviewers frequently call out specific features they love or hate, giving product teams direct feedback at a level of specificity that NPS surveys never achieve.

How to extract feature sentiment:

- Build a taxonomy of your product's features (reporting, integrations, automation, UI, API, etc.)

- Tag each review mention to the relevant feature category

- Calculate sentiment distribution per feature

- Compare against competitor feature sentiment

The resulting data often looks like this, with net sentiment calculated as (positive - negative) / (positive + negative):

| Feature Area | Positive Mentions | Negative Mentions | Net Sentiment |
|---|---|---|---|
| Reporting | 342 | 89 | +59% |
| Integrations | 287 | 156 | +30% |
| Automation | 198 | 42 | +65% |
| UI/UX | 421 | 203 | +35% |
| API | 95 | 118 | -11% |
| Mobile app | 67 | 189 | -48% |

This kind of table, built from review data, tells a product team more about where to invest than most internal analytics dashboards.
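For teams that want to build such a table themselves, here is a rough sketch. It assumes each review mention has already been tagged with a feature and a polarity, and it uses one common definition of net sentiment, (positive - negative) / (positive + negative). The function names and the tagged data are illustrative assumptions.

```python
from collections import Counter


def net_sentiment(positive, negative):
    """Net sentiment as (positive - negative) / (positive + negative), in [-1, 1]."""
    total = positive + negative
    if total == 0:
        return 0.0
    return (positive - negative) / total


def summarize(tagged_mentions):
    """Aggregate (feature, polarity) pairs into per-feature counts and net sentiment."""
    counts = Counter(tagged_mentions)
    features = sorted({feature for feature, _ in tagged_mentions})
    summary = {}
    for feature in features:
        pos = counts[(feature, "positive")]
        neg = counts[(feature, "negative")]
        summary[feature] = {
            "positive": pos,
            "negative": neg,
            "net": net_sentiment(pos, neg),
        }
    return summary


# Hypothetical tagged mentions; in practice these come from your tagging step.
mentions = (
    [("API", "positive")] * 95 + [("API", "negative")] * 118
    + [("Mobile app", "positive")] * 67 + [("Mobile app", "negative")] * 189
)

for feature, row in summarize(mentions).items():
    print(f"{feature}: {row['net']:+.0%}")
```

With the API and mobile app counts from the table above, this prints -11% and -48%. Neutral mentions are ignored in this sketch. Whether to include them in the denominator is a design choice worth making explicit.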

Dimension 3: Support Quality Perception

Support quality is the second most mentioned topic in SaaS reviews after features. The way your support team handles tickets directly shapes how users perceive the entire product. A mediocre product with excellent support often reviews better than a superior product with slow support.

Key signals to track:

- Response time satisfaction

- Resolution quality (first-contact resolution vs. escalation chains)

- Channel preferences (chat vs. email vs. phone)

- Named agent recognition (specific team members called out by name, a strong positive signal)

- Tier-based support gaps (free users mentioning slow support while paid users praise it)

Dimension 4: Pricing Perception

SaaS pricing reviews are uniquely revealing because they often include specific tier information. When a reviewer writes "the Pro plan at $49/month is worth it for the reporting alone," that is a pricing validation data point. When they write "we had to upgrade to Enterprise just to get SSO, which feels like a cash grab," that is a packaging failure signal.

Pricing signals in reviews:

- Value-for-money sentiment by tier

- Feature gating frustration (specific features locked behind expensive tiers)

- Per-seat vs. flat-rate preference signals

- Free-to-paid conversion sentiment

- Competitor pricing comparisons

Dimension 5: Switching Signals

The most strategically valuable content in SaaS reviews describes switching — both switching to your product and switching away from it. These narratives contain the exact reasons customers chose you over alternatives (or chose alternatives over you).

What to look for:

- "Switched from [competitor]" mentions: why they left and what triggered the evaluation

- "Considering switching to [competitor]" mentions: early churn warning signals

- Migration difficulty descriptions: how hard it was to move data and retrain teams

- Feature gap comparisons: what competitors offer that you do not
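A first pass at spotting these switching mentions can be done with regular expressions. The sketch below is an assumption-heavy starting point: the competitor names are hypothetical placeholders, and the two patterns only cover a handful of phrasings, so expect to miss some mentions.

```python
import re

# Hypothetical competitor names; replace with the products in your category.
COMPETITORS = ["Acme CRM", "Globex", "Initech"]

_CANONICAL = {name.lower(): name for name in COMPETITORS}
_NAMES = "|".join(re.escape(name) for name in COMPETITORS)

# "switched from X", "moved over from X", "migrated from X"
SWITCHED_FROM = re.compile(
    rf"\b(?:switched|moved|migrated)\s+(?:over\s+)?from\s+({_NAMES})\b",
    re.IGNORECASE,
)
# "considering switching to X", "considering a switch to X"
CONSIDERING = re.compile(
    rf"\bconsidering\s+(?:switching|moving|a\s+switch)\s+to\s+({_NAMES})\b",
    re.IGNORECASE,
)


def switching_signals(text):
    """Return (signal_type, competitor) pairs found in one review."""
    signals = []
    for signal_type, pattern in (
        ("switched_from", SWITCHED_FROM),
        ("considering", CONSIDERING),
    ):
        for match in pattern.finditer(text):
            # Normalize casing back to the canonical competitor name.
            signals.append((signal_type, _CANONICAL[match.group(1).lower()]))
    return signals


print(switching_signals("We switched from Globex last year because reporting was too rigid."))
print(switching_signals("Honestly considering switching to initech after the price hike."))
```

Counting these pairs per competitor gives a rough tally of wins and early churn warnings. The review text around each match still needs a human or a model to read for the reason behind the switch.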

"Every SaaS review that mentions a competitor by name is a free win/loss interview. Most companies pay consultants thousands of dollars for insights that are sitting in their G2 profile right now."

Competitive Positioning From Software Reviews

Review data is one of the most underused sources of competitive intelligence in SaaS. While most companies rely on analyst reports and sales team anecdotes, reviews provide direct, unfiltered comparisons from actual users.

Building a Competitive Review Matrix

Create a matrix that maps your product against top competitors across key dimensions:

Step 1: Identify your top 5-7 competitors based on review platform category pages.

Step 2: For each competitor, extract and categorize reviews that mention your product (and vice versa).

Step 3: Map competitive strengths and weaknesses:

| Dimension | Your Product | Competitor A | Competitor B | Competitor C |
|---|---|---|---|---|
| Ease of use | 4.4 | 4.1 | 4.6 | 3.8 |
| Feature depth | 4.2 | 4.5 | 3.9 | 4.3 |
| Support quality | 4.6 | 3.7 | 4.2 | 4.0 |
| Value for money | 4.1 | 3.5 | 4.3 | 3.9 |
| Integration ecosystem | 3.8 | 4.4 | 3.7 | 4.1 |

Step 4: Identify positioning opportunities. In the matrix above, your support score is 0.9 points higher than Competitor A's, while your integration score trails it by 0.6 points. Gaps like these tell you where to differentiate and where to invest.
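To find such gaps systematically rather than by eye, you can compute the difference between your score and each competitor's, plus the difference against the competitor mean. The sketch below uses the example scores from the matrix. The function name `gap_table` and the choice of an unweighted mean are assumptions.

```python
from statistics import mean

# Example scores from the matrix above.
scores = {
    "Ease of use": {"Your Product": 4.4, "Competitor A": 4.1, "Competitor B": 4.6, "Competitor C": 3.8},
    "Feature depth": {"Your Product": 4.2, "Competitor A": 4.5, "Competitor B": 3.9, "Competitor C": 4.3},
    "Support quality": {"Your Product": 4.6, "Competitor A": 3.7, "Competitor B": 4.2, "Competitor C": 4.0},
    "Value for money": {"Your Product": 4.1, "Competitor A": 3.5, "Competitor B": 4.3, "Competitor C": 3.9},
    "Integration ecosystem": {"Your Product": 3.8, "Competitor A": 4.4, "Competitor B": 3.7, "Competitor C": 4.1},
}


def gap_table(scores, own="Your Product"):
    """For each dimension, return own score minus each rival's score and minus the rival mean."""
    table = {}
    for dimension, by_product in scores.items():
        rivals = {k: v for k, v in by_product.items() if k != own}
        row = {name: round(by_product[own] - value, 2) for name, value in rivals.items()}
        row["Competitor mean"] = round(by_product[own] - mean(rivals.values()), 2)
        table[dimension] = row
    return table


for dimension, row in gap_table(scores).items():
    print(dimension, row)
```

Positive numbers are leads and negative numbers are deficits. Sorting dimensions by the "Competitor mean" gap gives a quick shortlist of strengths to market and weaknesses to fix.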

Win/Loss Intelligence From Reviews

Every review that describes a switching decision is a data point in your win/loss analysis. Aggregate these across platforms and you build a picture of:

- Why customers choose you: The top 3-5 reasons cited by switchers-to-you

- Why customers leave: The top 3-5 reasons cited by switchers-from-you

- Competitive blind spots: Features or qualities competitors emphasize that you do not even track

This intelligence feeds directly into sales enablement. When your sales team knows that 34% of customers who switch from Competitor A cite "reporting flexibility" as the primary reason, that becomes a battle card talking point backed by real data.

Using Review Data for Product Roadmap Decisions

Product teams often struggle to prioritize feature requests. Internal data shows what users do, but reviews show what users want and why. The combination is powerful.

The Review-Driven Prioritization Framework

- Extract feature requests from negative reviews and "what could be improved" sections

- Quantify demand by counting how many reviews mention each request

- Assess competitive pressure by checking if competitors already offer the requested feature

- Estimate revenue impact by correlating feature gaps with churn mentions and switching signals

- Prioritize using a weighted score of demand volume, competitive pressure, and revenue impact

A practical example: if 89 reviews mention needing a Slack integration, 23 mention switching to a competitor that has one, and 12 of those are from enterprise accounts, you have a quantified case for prioritizing Slack integration that no PM can argue with.
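The weighted score can be sketched as follows. Everything numeric here is an assumption made for illustration: the weights, the revenue proxy (switching mentions per request mention, with enterprise switches counted twice), the competitor counts, and the "Dark mode" comparison row. Only the Slack integration review counts come from the example above.

```python
from dataclasses import dataclass

# Assumed weights; tune them to your own strategy. They sum to 1.
WEIGHTS = {"demand": 0.4, "competitive": 0.3, "revenue": 0.3}


@dataclass
class FeatureRequest:
    name: str
    mentions: int              # reviews mentioning the request
    switch_mentions: int       # reviews that also mention switching to a competitor
    enterprise_switches: int   # switch mentions from enterprise accounts
    competitors_offering: int  # tracked competitors that already ship the feature
    competitors_total: int     # tracked competitors overall


def priority_score(req, max_mentions):
    """Weighted score in [0, 1] from demand, competitive pressure, and revenue risk."""
    demand = req.mentions / max_mentions if max_mentions else 0.0
    competitive = (
        req.competitors_offering / req.competitors_total
        if req.competitors_total else 0.0
    )
    # Revenue proxy: enterprise switches count twice, capped at 1.
    revenue = (
        min(1.0, (req.switch_mentions + req.enterprise_switches) / req.mentions)
        if req.mentions else 0.0
    )
    return (
        WEIGHTS["demand"] * demand
        + WEIGHTS["competitive"] * competitive
        + WEIGHTS["revenue"] * revenue
    )


def rank(requests):
    """Sort requests from highest to lowest priority score."""
    max_mentions = max((r.mentions for r in requests), default=0)
    return sorted(requests, key=lambda r: priority_score(r, max_mentions), reverse=True)


requests = [
    FeatureRequest("Dark mode", 40, 2, 0, 1, 5),            # hypothetical row
    FeatureRequest("Slack integration", 89, 23, 12, 4, 5),  # competitor counts are hypothetical
]

for req in rank(requests):
    print(req.name)
```

The exact weights matter less than applying the same formula to every request, which keeps prioritization debates focused on the inputs.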

Sales Enablement From Review Intelligence

Review data powers sales conversations in specific ways:

- Objection handling: Know the top 5 objections before the prospect raises them

- Competitive battle cards: Use verbatim reviewer quotes (anonymized) as third-party validation

- Case study leads: Reviewers who write glowing reviews are prime case study candidates

- Pricing defense: When a prospect says "Competitor X is cheaper," you can cite review data showing where that competitor's users report dissatisfaction

Building a Continuous Review Intelligence Program

One-time review analysis is useful. Continuous monitoring is transformative.

Monthly Review Cadence

Establish a monthly review intelligence cycle:

- Week 1: Pull all new reviews across platforms

- Week 2: Categorize and analyze themes, sentiment shifts, and competitive mentions

- Week 3: Distribute insights to product, support, sales, and marketing teams

- Week 4: Track response rates, review generation metrics, and sentiment trends

Alert-Based Monitoring

Set up alerts for:

- Star rating drops below a threshold

- Competitor mentions in your reviews

- Specific keywords related to known issues

- Review velocity changes (sudden increase or decrease)
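Three of these alerts are simple enough to sketch directly. The thresholds below (a 4.0 average rating and a 50% velocity change) and the function names are illustrative assumptions, not recommended values.

```python
from statistics import mean


def rating_alert(recent_ratings, threshold=4.0):
    """True when the average of recent star ratings falls below the threshold."""
    return bool(recent_ratings) and mean(recent_ratings) < threshold


def velocity_alert(weekly_counts, change_ratio=0.5):
    """True when the latest weekly review count deviates from the prior
    average by more than change_ratio, in either direction."""
    if len(weekly_counts) < 2:
        return False
    *history, latest = weekly_counts
    baseline = mean(history)
    if baseline == 0:
        return latest > 0
    return abs(latest - baseline) / baseline > change_ratio


def keyword_alerts(text, watchlist):
    """Return the watchlist terms that appear in a review (case-insensitive)."""
    lowered = text.lower()
    return [term for term in watchlist if term.lower() in lowered]


print(rating_alert([5, 4, 2, 3]))        # average 3.5 is below 4.0
print(velocity_alert([10, 12, 11, 25]))  # latest week is far above the baseline of 11
print(keyword_alerts("The SSO login keeps timing out", ["sso", "billing"]))
```

The same `keyword_alerts` function covers competitor mentions if you pass competitor names as the watchlist.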

Review Response Strategy

Responding to SaaS reviews is not optional. On G2, businesses that respond to reviews see a 12% higher conversion rate from profile views to demo requests. On Capterra, responded reviews increase profile engagement by 18%.

Response priorities:

- All 1-2 star reviews within 48 hours

- Reviews mentioning specific issues within 72 hours

- Positive reviews mentioning features you want to amplify within one week

- Competitive comparison reviews with factual corrections within 48 hours
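These priorities translate into a small deadline calculator. The sketch below assumes each review has already been flagged for issue and competitor mentions. It applies the one-week window to every remaining review, which is an assumption beyond the priorities listed.

```python
from datetime import datetime, timedelta


def response_deadline(posted_at, stars, mentions_issue=False, mentions_competitor=False):
    """Return the latest time a response should be posted for a review."""
    if stars <= 2 or mentions_competitor:
        window = timedelta(hours=48)   # low ratings and competitive comparisons
    elif mentions_issue:
        window = timedelta(hours=72)   # specific issues
    else:
        window = timedelta(days=7)     # assumed default for everything else
    return posted_at + window


posted = datetime(2024, 1, 1, 9, 0)
print(response_deadline(posted, stars=1))
print(response_deadline(posted, stars=4, mentions_issue=True))
print(response_deadline(posted, stars=5))
```

Sorting open reviews by this deadline gives the support or marketing team a simple response queue.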

Multi-Platform SaaS Review Analysis With Sentimyne

Analyzing reviews across G2, Capterra, TrustRadius, Product Hunt, app stores, and Trustpilot manually is not sustainable. Each platform has its own interface, export limitations, and categorization quirks. By the time you have manually read and categorized reviews from six platforms, the data is already stale.

Sentimyne aggregates reviews from 12+ platforms and delivers unified sentiment analysis in 60 seconds. For SaaS companies specifically, this means:

- Cross-platform feature sentiment — See how users on G2 feel about your reporting versus how Capterra reviewers perceive it

- Competitive switching analysis — Automatically extract and categorize competitor mentions across all platforms

- Trend detection — Spot sentiment shifts in onboarding, support, or pricing before they become churn events

- SWOT generation — Automated SWOT analysis built from real reviewer language, not internal assumptions

The free tier gives you 2 analyses per month — enough to benchmark your product and one competitor. The Pro plan at $29/month supports continuous monitoring for teams that want review intelligence as part of their regular operating rhythm.

For SaaS companies managing reviews across multiple platforms, the alternative to automated analysis is spreadsheets, browser tabs, and an intern who quits after two weeks. Sentimyne is the more reliable option.

Frequently Asked Questions

How often should SaaS companies analyze their reviews?

Monthly is the minimum cadence for meaningful analysis. High-growth SaaS companies or those in competitive categories should monitor weekly. The key is consistency — a quarterly review dump misses the trend data that makes review intelligence actionable. Set up automated monitoring to flag significant changes and conduct deep-dive analysis monthly.

Which SaaS review platform matters most for competitive intelligence?

G2 is the most comprehensive for competitive intelligence because of its structured format, verified reviews, and explicit competitor comparison prompts. However, TrustRadius provides deeper enterprise feedback, and Capterra is stronger for SMB sentiment. The most accurate competitive picture comes from analyzing all three together — relying on a single platform creates blind spots.

Can review analysis predict SaaS churn before it happens?

Yes, with caveats. Deteriorating review sentiment typically precedes measurable churn by 2-4 months. Specific signals include increasing mentions of "considering alternatives," declining support satisfaction scores, and negative sentiment around recent product changes. Review analysis is not a replacement for product analytics, but it captures qualitative churn signals that usage data misses — particularly frustration that does not yet show up in login frequency.

How do you separate genuine SaaS reviews from fake or incentivized ones?

Look for specificity. Genuine reviews mention specific features, workflows, and use cases. Incentivized reviews tend to be short, generic, and clustered around the same submission date. G2 and TrustRadius both use verification processes (LinkedIn authentication, work email verification) that reduce fake reviews. When analyzing competitor reviews, be skeptical of sudden rating spikes with vague, similar-sounding positive reviews — that pattern often indicates a review generation campaign.
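The "short, generic, and clustered" pattern can be checked with a rough heuristic: count short, high-rated reviews per submission date and flag dates with unusually many. The word limit, cluster size, and function name below are assumptions. A flagged date is a prompt for manual review, not proof of a campaign.

```python
from collections import Counter
from datetime import date


def suspicious_dates(reviews, max_words=25, min_cluster=3):
    """Flag dates with at least min_cluster short, high-rated reviews.

    reviews: iterable of (submitted_date, stars, text) tuples.
    """
    short_positive = Counter(
        submitted
        for submitted, stars, text in reviews
        if stars >= 4 and len(text.split()) <= max_words
    )
    return sorted(day for day, count in short_positive.items() if count >= min_cluster)


# Hypothetical reviews, for illustration only.
sample = [
    (date(2024, 3, 1), 5, "Great tool, love it."),
    (date(2024, 3, 1), 5, "Amazing product, highly recommend."),
    (date(2024, 3, 1), 5, "Best software ever."),
    (date(2024, 3, 2), 4, "Solid reporting, though setup took a while."),
]

print(suspicious_dates(sample))
```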

What is the ROI of a systematic SaaS review analysis program?

The ROI compounds across teams. Product teams report 23% faster feature prioritization when using review data to validate roadmap decisions. Sales teams using review-derived battle cards see 15-20% higher win rates in competitive deals. Marketing teams using review language in messaging see 12% higher ad click-through rates. The direct cost of review analysis is minimal compared to the customer research, competitive analysis, and market intelligence it replaces.
