Review Data Privacy: GDPR, CCPA & Legal Considerations for Review Analysis

Navigate the legal landscape of review data analysis including GDPR, CCPA, platform terms of service, and review scraping legality. Covers privacy-first best practices for businesses using review intelligence tools.

Review analysis operates in a legal space that most businesses never think about until a lawyer asks an uncomfortable question. You are collecting text written by identifiable individuals about their experiences with your business, aggregating it from third-party platforms, running it through AI systems, and using the resulting insights to make business decisions. Every step in that chain touches data privacy law.

The good news is that review analysis, done correctly, is entirely lawful and ethically sound. The bad news is that "done correctly" involves understanding several overlapping regulatory frameworks, platform terms of service, and evolving legal precedent. Getting it wrong can mean GDPR fines of up to €20 million or 4% of global annual turnover (whichever is higher), CCPA penalties of up to $7,500 per intentional violation, or platform bans that cut off your data access entirely.

This article covers what you need to know — not as legal advice (consult a lawyer for that), but as a practical guide for businesses that want to analyze review data responsibly and in compliance with applicable law.

The Fundamental Question: Are Reviews Public or Private Data?

This is the question that determines most of the legal analysis. The answer is: it depends on the platform, the jurisdiction, and what you do with the data.

The Public Data Argument

Reviews posted on public platforms (Google, Yelp, Trustpilot, Amazon) are visible to anyone with an internet connection. The reviewer chose to publish their opinion publicly. There is no expectation of privacy for content deliberately shared with the world.

This argument has legal support. In hiQ Labs v. LinkedIn, the Ninth Circuit held in 2022 (after the Supreme Court had sent the case back for reconsideration) that accessing publicly available data likely does not constitute access "without authorization" under the Computer Fraud and Abuse Act. While this case specifically addressed LinkedIn profile data, the same reasoning arguably extends to publicly posted reviews.

The Personal Data Counterargument

Under GDPR and similar privacy regulations, even publicly available data can constitute personal data if it relates to an identifiable individual. A review that includes the reviewer's name, location, and specific experience details is personal data regardless of whether the reviewer chose to make it public.

This distinction matters because personal data triggers regulatory obligations — lawful basis for processing, data subject rights, and potential notification requirements — even when the data is publicly accessible.

The Practical Resolution

For most review analysis use cases, the practical position is:

- Reading and analyzing publicly posted reviews is lawful in most jurisdictions, provided you have a legitimate purpose and handle the data appropriately.

- Aggregating review data for business insights (sentiment trends, theme analysis, competitive intelligence) is generally a legitimate interest under GDPR and a permissible business purpose under CCPA.

- Storing and profiling individual reviewer data moves into more regulated territory and requires more careful compliance consideration.

- The key principle is proportionality — analyze what you need, store only what is necessary, and focus on aggregate insights rather than individual tracking.

GDPR Implications for Review Analysis

The General Data Protection Regulation applies to businesses established in the EU/EEA and, under Article 3(2), to businesses elsewhere that offer goods or services to people in the EU/EEA or monitor their behavior. If you sell to European customers and analyze their reviews, assume GDPR applies to you.

Reviews as Personal Data Under GDPR

Under Article 4(1), personal data is "any information relating to an identified or identifiable natural person." A review is personal data when it includes:

- The reviewer's name or username (even if it is a pseudonym that could be combined with other data to identify them)

- Location information

- Specific details about their experience that could identify them ("I visited the store on March 3rd and spoke with the manager about my custom order")

- A profile picture or avatar

Practical implication: Almost all reviews on platforms that display reviewer names constitute personal data under GDPR. This means GDPR obligations apply to how you process that data.

Lawful Basis for Processing Review Data

GDPR Article 6 requires a lawful basis for processing personal data. For review analysis, the most applicable bases are:

Legitimate Interest (Article 6(1)(f))

This is the most commonly used basis for review analysis. Under legitimate interest, you can process personal data when:

- You have a legitimate business purpose (improving products, understanding customer needs)

- The processing is necessary for that purpose (you cannot achieve the same insight without processing the data)

- The individual's rights do not override your interest (the impact on privacy is proportionate to the benefit)

A Legitimate Interest Assessment (LIA) for review analysis typically concludes that:

- Analyzing publicly posted reviews serves a legitimate business purpose

- The impact on reviewers' privacy is minimal since they voluntarily published the content

- The processing is proportionate when focused on aggregate insights rather than individual profiling

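Manifestly Public Data

Article 9(2)(e) GDPR allows processing of special category data that the data subject has manifestly made public, which may matter for reviews that reveal such data (a clinic review mentioning a medical condition, for example). This is not a standalone lawful basis under Article 6, however, and publishing a review is not "consent" in the GDPR sense, which requires a specific, informed indication of agreement to the processing (Article 4(11)). In practice, the public, voluntary nature of a review strengthens the balancing test in your Legitimate Interest Assessment rather than replacing it.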

Data Subject Rights

Even when processing is lawful, GDPR grants rights to data subjects (reviewers) that you must respect if exercised:

| Right | What It Means for Review Analysis |
|---|---|
| Right of Access (Art. 15) | If a reviewer asks, you must tell them what data you hold about them |
| Right to Erasure (Art. 17) | A reviewer can request deletion of their data from your systems |
| Right to Object (Art. 21) | A reviewer can object to processing based on legitimate interest |
| Right to Restriction (Art. 18) | A reviewer can ask you to pause processing while, for example, the accuracy of the data or their objection is being verified |

Practical implications:

- If you store individual review data with reviewer identifiers, you need a process for handling data subject requests.

- If you only process aggregate data (sentiment scores, theme frequencies) without retaining individual-level data, these rights are easier to comply with, since genuinely anonymized data is no longer personal data.

- Response timeframe: You must respond to data subject requests without undue delay and within one month of receipt, extendable by two further months for complex or numerous requests (Article 12(3)).
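Article 12(3) frames the response deadline as one month from receipt, extendable by two further months for complex or numerous requests, which is not always the same as 30 days. The sketch below computes a due date under a simplified assumption: a one-month period ends on the same calendar day of the following month, or on that month's last day if that day does not exist. It ignores weekends and public holidays, which can move the final day, and the helper names are hypothetical.

```python
import calendar
from datetime import date


def add_months(start: date, months: int) -> date:
    """Return the same day `months` later, clamped to the last day of the target month."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def dsr_deadline(received: date, extended: bool = False) -> date:
    """Hypothetical helper: due date for a GDPR data subject request.

    Simplified: one month from receipt, or three months in total if the
    Article 12(3) extension is used. Weekends and holidays are ignored.
    """
    return add_months(received, 3 if extended else 1)


if __name__ == "__main__":
    print(dsr_deadline(date(2024, 1, 31)))                 # 2024-02-29 (leap year)
    print(dsr_deadline(date(2024, 3, 15), extended=True))  # 2024-06-15
```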

Data Minimization and Purpose Limitation

GDPR Articles 5(1)(b), 5(1)(c), and 5(1)(e) (purpose limitation, data minimization, and storage limitation) require that you:

- Only process data for the specified purpose (review analysis for business improvement, not for, say, targeted advertising to individual reviewers)

- Only collect and retain the minimum data necessary

Best practices:

- Process reviews for aggregate insights, not individual profiling

- Do not retain reviewer personal data beyond what is necessary for analysis

- Do not use review data for purposes unrelated to the original analysis intent

- Set data retention policies: delete raw review data after analysis is complete if you only need the insights
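A retention policy only helps if something enforces it. A minimal sketch, assuming raw reviews are kept in an SQLite table named `raw_reviews` with a `collected_at` column holding UTC timestamps in a consistent ISO-8601 format, and that aggregate insights live in separate tables the purge never touches. The table, column, and 90-day window are illustrative assumptions, not a recommended period.

```python
import sqlite3
from datetime import datetime, timedelta, timezone
from typing import Optional

RAW_RETENTION_DAYS = 90  # illustrative window; take the real value from your retention policy


def purge_expired_reviews(conn: sqlite3.Connection, now: Optional[datetime] = None) -> int:
    """Delete raw review rows older than the retention window and return how many were removed.

    Assumes a hypothetical `raw_reviews` table whose `collected_at` column stores
    UTC timestamps written with datetime.isoformat(), so string comparison follows
    chronological order. Tables holding aggregate insights are not touched.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = (now - timedelta(days=RAW_RETENTION_DAYS)).isoformat()
    with conn:  # commits on success, rolls back if the DELETE fails
        cur = conn.execute("DELETE FROM raw_reviews WHERE collected_at < ?", (cutoff,))
    return cur.rowcount


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE raw_reviews (id INTEGER PRIMARY KEY, text TEXT, collected_at TEXT)")
    now = datetime(2024, 6, 1, tzinfo=timezone.utc)
    conn.executemany(
        "INSERT INTO raw_reviews (text, collected_at) VALUES (?, ?)",
        [
            ("collected 120 days ago", (now - timedelta(days=120)).isoformat()),
            ("collected 10 days ago", (now - timedelta(days=10)).isoformat()),
        ],
    )
    print(purge_expired_reviews(conn, now))  # 1
```

Running a job like this on a schedule (a daily cron task, for example) turns a stated retention period into one you can demonstrate.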

CCPA Requirements for Review Analysis

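The California Consumer Privacy Act (and its amendment, the CPRA) applies to for-profit businesses that collect personal information of California residents and meet at least one of three thresholds: annual gross revenue over $25M (adjusted periodically for inflation), buying, selling, or sharing the personal information of 100,000+ consumers or households per year, or deriving 50%+ of annual revenue from selling or sharing personal information.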

CCPA and Publicly Available Information

CCPA has a notable exemption for "publicly available information," defined as information lawfully made available from government records or information that a consumer has made available to the general public. Reviews posted on public platforms arguably fall within this exemption.

However, the exemption has limits:

- It covers the information as published, not necessarily what you derive from it: the CCPA separately lists inferences drawn to create a profile about a consumer as personal information

- Once publicly available data is combined with non-public data, the combined dataset may no longer qualify as publicly available

- The CPRA broadened the definition to include information a consumer has made available to the general public, but content the consumer restricted to a specific audience falls outside it

CCPA Consumer Rights

If CCPA applies to your review analysis activities:

- Right to Know — Consumers can request what personal information you have collected and how it is used

- Right to Delete — Consumers can request deletion of their personal information

- Right to Opt-Out — Consumers can opt out of the "sale" or "sharing" of their personal information (relevant if you share review data with third parties)

- Right to Non-Discrimination — You cannot penalize consumers for exercising their rights

Practical CCPA Compliance for Review Analysis

- Include review data processing in your privacy policy's description of data collection practices

- Ensure your "Do Not Sell or Share My Personal Information" link accounts for any review data sharing

- Do not treat consumers who exercise CCPA rights differently, including in how you respond to their reviews

- Maintain records of review data processing activities

Platform Terms of Service: What Each Major Platform Allows

Legal compliance goes beyond privacy regulations. Each review platform has terms of service that govern how their data can be accessed and used. Violating these terms can result in account suspension, API access revocation, or legal action.

Google Business Profile

Google's Terms of Service generally permit businesses to read and respond to their own reviews. Automated scraping of Google reviews at scale is against Google's ToS, though using the Google Business Profile API for your own business's reviews is permitted within API usage limits.

What is allowed:

- Reading and responding to your own reviews through the Business Profile interface

- Using the Business Profile API to access your reviews programmatically

- Manually reading competitor reviews on Google Maps

What is restricted:

- Mass scraping of Google reviews using bots or automated tools

- Redistributing Google review content on other platforms without attribution

Trustpilot

Trustpilot provides a Business API that allows verified businesses to access their own reviews. Their terms explicitly prohibit automated scraping of the consumer-facing website.

What is allowed:

- Accessing your own reviews through the Trustpilot Business API

- Embedding Trustpilot reviews on your website using their widgets

- Manually reading competitor reviews

What is restricted:

- Scraping reviews from the public Trustpilot website

- Using review content in ways that misrepresent Trustpilot's ratings

Amazon

Amazon's terms are strict. The Marketplace API provides access to reviews for sellers' own products. Scraping Amazon reviews programmatically is prohibited under Amazon's Conditions of Use.

What is allowed:

- Reading reviews on your own product listings

- Using the Amazon Product Advertising API (with limitations)

What is restricted:

- Automated scraping of Amazon review pages

- Reproducing Amazon review content at scale

G2 / Capterra / TrustRadius

B2B review platforms generally offer API access for verified businesses to access their own reviews and, in some cases, limited competitive data through paid plans.

What is allowed:

- Accessing your own reviews through platform APIs or dashboards

- Using competitive intelligence features offered through paid platform subscriptions

What is restricted:

- Scraping reviews programmatically without API authorization

- Republishing competitor review content

Yelp

Yelp's terms prohibit scraping. They offer a Fusion API with limited review data access. Yelp has been notably aggressive in enforcing their terms against data scrapers.

"Understanding platform terms of service is as important as understanding privacy law. A legally compliant analysis using illegally obtained data still creates business risk."

Review Scraping Legality: The Current Landscape

The legality of web scraping — including review scraping — has been evolving through case law, particularly in the United States.

Key Legal Precedents

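hiQ Labs v. LinkedIn (2022)

The Ninth Circuit ruled in 2019 that scraping publicly available data likely does not violate the Computer Fraud and Abuse Act (CFAA). In 2021 the Supreme Court vacated that ruling and sent the case back for reconsideration in light of Van Buren (below), and in 2022 the Ninth Circuit reached the same conclusion. This case is widely cited as supporting the legality of accessing public web data, though it is not a blanket authorization for all scraping: later in 2022, a district court found that hiQ had breached LinkedIn's User Agreement, and the parties settled.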

Key takeaways:

- Accessing publicly available data is generally not "unauthorized access" under CFAA

- However, circumventing technical barriers (like CAPTCHAs or login walls) may constitute unauthorized access

- Platform terms of service violations may create breach-of-contract claims even if not CFAA violations

Van Buren v. United States (2021)

The Supreme Court narrowed the CFAA's scope, ruling that "exceeding authorized access" applies to accessing areas of a computer system that are off-limits, not to misusing data you are authorized to access. This ruling is generally favorable for web scraping of publicly accessible data.

Clearview AI cases (ongoing)

Regulators in multiple jurisdictions have challenged Clearview AI, which scraped publicly available photos from social media to build a facial recognition database. While this involves facial recognition rather than review analysis, the legal principles about scraping public data for AI training and analysis are relevant. Several European data protection authorities have fined Clearview AI under GDPR, suggesting that "publicly available" does not automatically mean "free to process."

The Practical Scraping Position

For review analysis specifically, the legal landscape suggests:

- API access is always safer than scraping. Use official APIs whenever available.

- Scraping your own reviews from platforms is low-risk. You have a clear legitimate interest in analyzing feedback about your own business.

- Scraping competitor reviews is a gray area. It may not violate the CFAA (for public data), but it may violate platform ToS, creating breach-of-contract risk.

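- Scraping at scale with bot activity adds further risk: circumventing access controls may trigger CFAA concerns, and traffic heavy enough to burden a platform's servers has historically supported trespass-to-chattels claims.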

- Combining scraped data with other personal information increases privacy risk significantly.

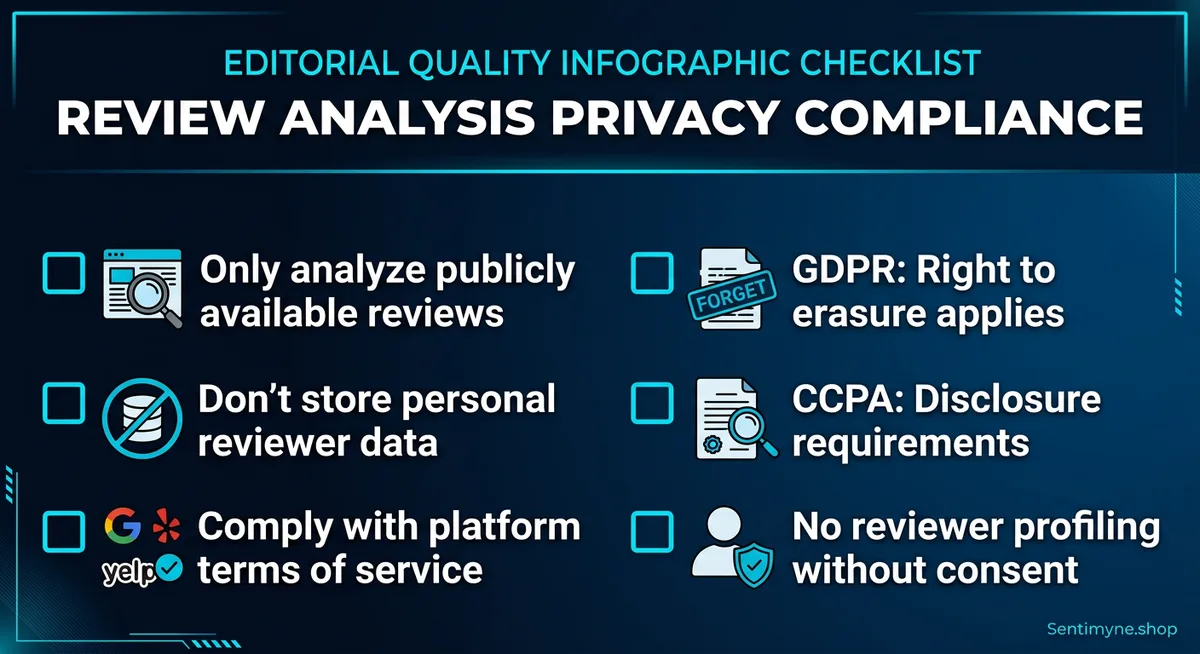

Best Practices for Privacy-Compliant Review Analysis

Regardless of your specific regulatory obligations, these best practices reduce legal risk and demonstrate good faith:

1. Analyze Publicly Available Data Only

Stick to reviews that reviewers voluntarily posted on public platforms. Do not attempt to access private feedback, internal survey data from other companies, or reviews behind authentication walls.

2. Do Not Store Personally Identifiable Information

If your goal is understanding customer sentiment themes, you do not need to store reviewer names, email addresses, or profile URLs. Store the review text and metadata needed for analysis (date, platform, rating) and discard PII.
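One way to enforce this at ingestion is an allowlist: keep only the fields the analysis needs, so any identifying field a platform adds later is dropped by default. The sketch assumes reviews arrive as Python dictionaries with the hypothetical keys shown. It does not scrub identifying details inside the review text itself, which can still make a record personal data.

```python
from typing import Any, Dict

# Hypothetical allowlist: the only fields the analysis needs (date, platform, rating, text).
ANALYSIS_FIELDS = ("date", "platform", "rating", "text")


def minimize_review(raw: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of `raw` containing only allowlisted analysis fields."""
    return {field: raw[field] for field in ANALYSIS_FIELDS if field in raw}


if __name__ == "__main__":
    raw = {
        "reviewer_name": "Jane D.",  # dropped
        "profile_url": "https://example.com/u/123",  # dropped
        "date": "2024-03-03",
        "platform": "trustpilot",
        "rating": 2,
        "text": "Shipping took three weeks.",
    }
    print(minimize_review(raw))
```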

If you must retain some identifying information (for example, to respond to specific reviews), minimize retention to what is strictly necessary and set automatic deletion schedules.
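When you do need to link records to a reviewer (to answer a deletion request or avoid double-counting, for instance), a keyed hash lets you do that without storing names. A minimal sketch using Python's standard `hmac` module, assuming a secret key kept separately from the review data; the function name is hypothetical. Pseudonymized data of this kind is still personal data under GDPR (Recital 26), so retention limits continue to apply.

```python
import hashlib
import hmac


def pseudonymize(platform: str, reviewer_id: str, secret_key: bytes) -> str:
    """Derive a stable pseudonymous key for a reviewer using HMAC-SHA256.

    Unlike a plain hash, the output cannot be recomputed from a guessed
    name or ID without `secret_key`, which should be stored separately
    from the review data (for example, in a secrets manager).
    """
    message = f"{platform}:{reviewer_id}".encode("utf-8")
    return hmac.new(secret_key, message, hashlib.sha256).hexdigest()


if __name__ == "__main__":
    key = b"replace-with-a-random-32-byte-secret"  # placeholder, never hard-code a real key
    print(pseudonymize("google", "user-123", key))
```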

3. Focus on Aggregate Insights, Not Individual Profiling

The difference between "42% of reviews mention slow shipping" and "John Smith in Portland complained about slow shipping on March 3rd" is the difference between legitimate business intelligence and individual surveillance. Focus on the former.
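The aggregate side of that distinction can be as simple as a share-of-reviews figure. The sketch below uses naive keyword matching as a stand-in for real theme detection (an assumption for illustration, not how any particular tool works) and returns only percentages, so its output cannot point back to an individual reviewer.

```python
from typing import Dict, Iterable, List


def theme_shares(texts: Iterable[str], themes: Dict[str, List[str]]) -> Dict[str, float]:
    """Percentage of reviews mentioning each theme, via simple keyword matching.

    `themes` maps a theme label to lowercase keywords; a review counts once
    per theme no matter how many of its keywords it contains.
    """
    lowered = [t.lower() for t in texts]
    if not lowered:
        return {theme: 0.0 for theme in themes}
    return {
        theme: round(100 * sum(any(k in t for k in keywords) for t in lowered) / len(lowered), 1)
        for theme, keywords in themes.items()
    }


if __name__ == "__main__":
    reviews = [
        "Great product but shipping was slow",
        "Arrived late, slow delivery",
        "Love it",
        "Customer support was helpful",
    ]
    print(theme_shares(reviews, {"slow shipping": ["slow", "late"], "support": ["support"]}))
    # {'slow shipping': 50.0, 'support': 25.0}
```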

4. Maintain a Processing Record

GDPR Article 30 requires records of processing activities. Even if GDPR does not apply to you, maintaining a simple record of what review data you process, from which platforms, for what purpose, and how long you retain it demonstrates compliance readiness.

Processing record template:

| Field | Example Entry |
|---|---|
| Data category | Customer reviews (public) |
| Source platforms | Google, Trustpilot, G2, Amazon |
| Purpose | Sentiment analysis and business improvement |
| Legal basis | Legitimate interest |
| Data subjects | Customers who posted public reviews |
| Retention period | Raw data: 90 days. Aggregate insights: indefinite |
| Security measures | Encrypted storage, access controls, audit logging |
| Third-party sharing | Review analysis tool (data processor) |
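Keeping the record next to your code, rather than in a standalone document, is one way to keep it current. The sketch mirrors the template fields above in a Python dataclass and exports the entry as JSON; the class and field names are illustrative. Article 30(1) sets the legally required contents, which include items this template leaves out, such as the controller's contact details and any transfers of personal data to third countries.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class ProcessingRecord:
    """One entry in a record of processing activities (fields mirror the template)."""
    data_category: str
    source_platforms: List[str]
    purpose: str
    legal_basis: str
    data_subjects: str
    retention_period: str
    security_measures: List[str]
    third_party_sharing: List[str]


review_analysis = ProcessingRecord(
    data_category="Customer reviews (public)",
    source_platforms=["Google", "Trustpilot", "G2", "Amazon"],
    purpose="Sentiment analysis and business improvement",
    legal_basis="Legitimate interest",
    data_subjects="Customers who posted public reviews",
    retention_period="Raw data: 90 days. Aggregate insights: indefinite",
    security_measures=["Encrypted storage", "Access controls", "Audit logging"],
    third_party_sharing=["Review analysis tool (data processor)"],
)

if __name__ == "__main__":
    # Export as JSON, e.g. for a compliance register or an audit request.
    print(json.dumps(asdict(review_analysis), indent=2))
```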

5. Choose Tools That Act as Data Processors

Under GDPR, when you use a third-party tool for review analysis, that tool is a "data processor" acting on your behalf. Article 28 requires a written contract, usually called a Data Processing Agreement (DPA), that specifies:

- What data the processor handles

- The purpose and duration of processing

- Security measures in place

- Data deletion upon contract termination

- Sub-processor disclosure

Reputable review analysis tools provide DPAs as standard. If a tool does not offer one, that is a red flag.

6. Be Transparent in Your Privacy Policy

Your privacy policy should disclose that you collect and analyze customer reviews from public platforms. This does not require a lengthy legal paragraph — a simple statement that you monitor and analyze publicly posted reviews to improve your products and services satisfies most transparency requirements.

7. Do Not Manipulate or Misrepresent Reviews

While not strictly a privacy issue, review manipulation (fake reviews, incentivized reviews, selective display) creates legal exposure under consumer protection laws, FTC guidelines, and platform terms. Review analysis should be separate from review generation — analyze what exists, do not manufacture what you wish existed.

8. Respect Data Subject Requests

If a reviewer contacts you and asks you to stop processing their review data or to delete it from your systems, comply. Even if you believe you have a valid legal basis to retain it, the reputational risk of refusing a privacy request almost always outweighs the analytical value of one data point.
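Honoring such a request is much easier when stored records carry a pseudonymous reviewer key rather than a name. A minimal sketch, assuming an in-memory list of records with a hypothetical `reviewer_key` field: it removes the matching raw records and leaves previously computed aggregates alone, which is generally acceptable only if those aggregates no longer identify anyone. A production system would also need to propagate the deletion to processors and backups.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class StoredReview:
    reviewer_key: str  # pseudonymous key, never the reviewer's name
    platform: str
    rating: int
    text: str


def erase_reviewer(records: List[StoredReview], reviewer_key: str) -> Tuple[List[StoredReview], int]:
    """Return (records without the reviewer's entries, number of entries removed)."""
    kept = [r for r in records if r.reviewer_key != reviewer_key]
    return kept, len(records) - len(kept)


if __name__ == "__main__":
    store = [
        StoredReview("a1", "google", 2, "Slow shipping"),
        StoredReview("b2", "google", 5, "Great service"),
        StoredReview("a1", "yelp", 1, "Still waiting on my refund"),
    ]
    store, removed = erase_reviewer(store, "a1")
    print(removed, len(store))  # 2 1
```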

International Considerations

Privacy law varies significantly by jurisdiction. Key frameworks beyond GDPR and CCPA:

| Jurisdiction | Key Law | Notable Requirements |
|---|---|---|
| EU/EEA | GDPR | Strictest framework; extraterritorial reach |
| California | CCPA/CPRA | Publicly available data exemption (with limits) |
| Brazil | LGPD | Similar to GDPR; covers publicly available data |
| Canada | PIPEDA / Quebec Law 25 | Consent-focused; publicly available data provisions |
| UK | UK GDPR + Data Protection Act 2018 | Post-Brexit GDPR equivalent |
| Australia | Privacy Act 1988 | Generally available data less regulated |
| Japan | APPI | Consent required for certain processing |
| South Korea | PIPA | Strict consent requirements |

If you operate internationally, your review analysis practices should comply with the strictest applicable framework, which is usually GDPR. Designing your program for GDPR compliance covers most of what other frameworks require, but check consent-focused regimes such as those in Canada, Japan, and South Korea separately.

Sentimyne's Privacy-First Approach

Sentimyne is designed with data privacy as a foundational principle, not an afterthought:

- Public data only — Sentimyne analyzes reviews from publicly accessible platforms. It does not access private feedback, internal databases, or authenticated-only content.

- No PII storage — Analysis focuses on extracting themes, sentiment, and strategic insights rather than building profiles of individual reviewers.

- Aggregate outputs — The SWOT analysis, sentiment scores, and theme clusters that Sentimyne produces are aggregate business intelligence, not individual-level data.

- Minimal data retention — Review data is processed for analysis and results are delivered. The tool is designed around ephemeral processing rather than long-term data warehousing.

- Processor compliance — As a data processor under GDPR, Sentimyne handles data in accordance with standard data processing obligations.

This privacy-first design means businesses can use Sentimyne for review intelligence — across all 12+ supported platforms — without creating significant privacy compliance burden. The free plan (2 analyses per month) lets you verify the privacy approach before committing, and the Pro plan at $29/month maintains the same privacy standards at unlimited scale.

The Future of Review Data Privacy

Privacy regulation is tightening globally, not loosening. Several trends will affect review analysis in the coming years:

- AI-specific regulation — The EU AI Act and similar frameworks may impose additional requirements on AI systems that process personal data, including review analysis tools.

- Platform data portability — The EU Digital Markets Act requires designated "gatekeeper" platforms to offer data portability and data access, which may simplify legitimate review data access.

- Cookie and tracking convergence — As browser-level tracking declines, businesses will rely more on first-party and publicly available data like reviews, increasing regulatory attention on these data sources.

- Automated decision-making scrutiny — GDPR Article 22 restricts decisions based solely on automated processing that produce legal or similarly significant effects. If review analysis directly drives decisions about individual customers (pricing, service levels), additional safeguards may apply.

Building your review analysis program on privacy-first principles now protects you against regulatory tightening later. The cost of retrofitting compliance is always higher than building it in from the start.

Frequently Asked Questions

Is it legal to analyze reviews that customers post on public platforms?

Generally yes, provided you comply with applicable privacy regulations and platform terms of service. Publicly posted reviews are accessible to anyone, and analyzing them for business improvement constitutes a legitimate interest under GDPR and is typically permissible under CCPA's publicly available information provisions. The key is to focus on aggregate insights rather than individual profiling and to use authorized access methods (APIs rather than scraping where possible).

Do I need consent from reviewers before analyzing their reviews?

In most cases, no. Under GDPR, legitimate interest provides a lawful basis for analyzing publicly posted reviews without individual consent. The reviewer's act of publishing on a public platform is itself an indication that they intend the content to be read and considered. However, if you plan to contact reviewers individually based on analysis results, or to use their data for marketing purposes beyond business improvement, additional consent requirements may apply.

Can I store customer review data in my own database?

Yes, but with caveats. Store only what is necessary for your analysis purpose (data minimization). Set retention limits so raw review data is deleted when no longer needed. Avoid storing personally identifiable reviewer information unless you have a specific legitimate need. If you store EU resident data, ensure your storage infrastructure meets GDPR requirements for security and, if applicable, data transfer restrictions.

What happens if a reviewer asks me to delete their data under GDPR?

You must respond without undue delay and within one month of receiving the request (extendable by two further months for complex or numerous requests). Delete the reviewer's data from your systems and confirm the deletion. If you have shared the data with processors (like a review analysis tool), notify them to delete it as well. If your analysis has already produced aggregate insights that do not identify the individual, those aggregate results can typically be retained since they are no longer personal data.

Is scraping competitor reviews from platforms legal?

This is a gray area. Under US law (post-hiQ v. LinkedIn), accessing publicly available data generally does not violate the CFAA. However, scraping may violate platform terms of service, creating breach-of-contract exposure. Under GDPR, you need a legitimate interest basis for processing the data and must comply with data minimization and purpose limitation principles. The safest approach is to use official API access where available and to focus on your own reviews rather than mass-collecting competitor data.
