Reddit & Social Media Review Mining: Extract Unfiltered Product Intelligence

Learn how to mine Reddit, Twitter/X, Facebook Groups, TikTok, YouTube, and Discord for unfiltered product feedback. Covers techniques for extracting honest reviews from social platforms, handling unstructured data, and combining social intelligence with structured review analysis.

The most honest feedback about your product is not on your Google listing, your Yelp page, or your Amazon reviews. It is buried in a Reddit thread at 2 AM, written by someone with a throwaway account who has no incentive to be polite, no reason to exaggerate, and no relationship with your brand. They are just telling the truth as they experienced it.

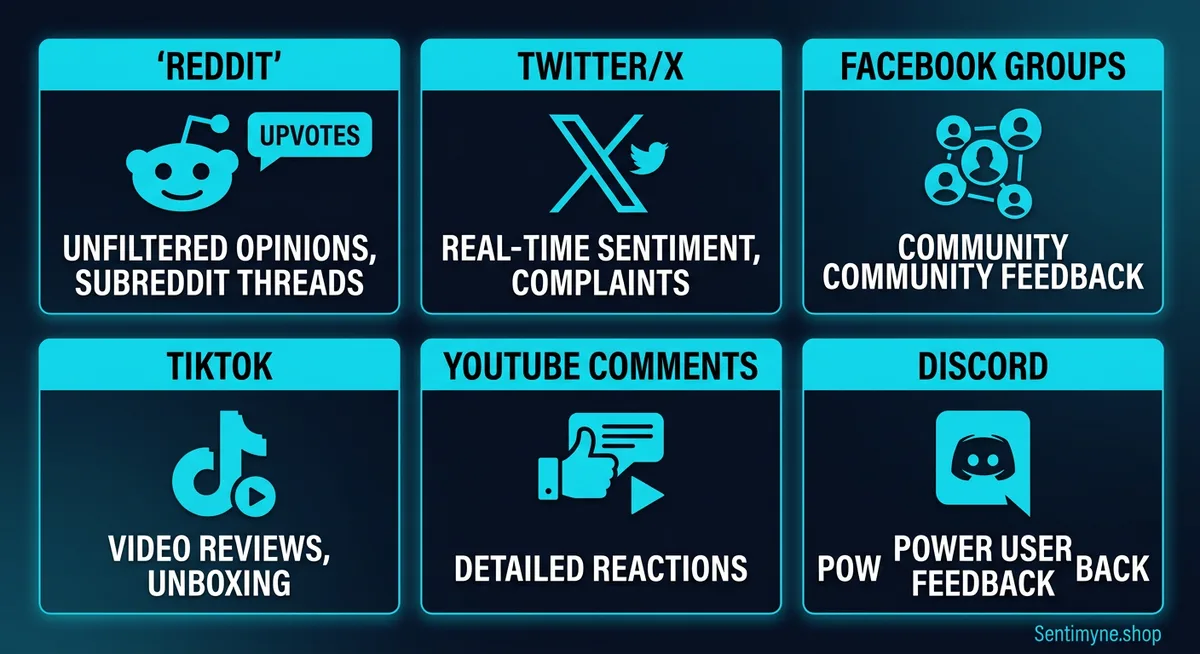

Social media platforms — Reddit, Twitter/X, Facebook Groups, TikTok comment sections, YouTube reviews, and Discord servers — contain a parallel universe of product feedback that operates under fundamentally different rules than structured review platforms. There are no star ratings. There are no character minimums. There is no moderation by the business. The feedback is raw, unfiltered, and often uncomfortably honest.

For product teams, marketers, and business strategists, this unfiltered intelligence is invaluable. A 2025 Sprout Social study found that 76% of consumers say they are more likely to buy from a brand that listens to social feedback, and 65% believe social media comments provide more truthful assessments than review platform ratings. Yet most businesses completely ignore social feedback in their review analysis workflows.

This guide covers how to systematically mine social platforms for product intelligence — where to look, how to extract signal from noise, and how to integrate social feedback with structured review analysis for a complete picture of customer sentiment.

Why Social Feedback Is the Most Honest Feedback

Understanding why social feedback differs from platform reviews is essential before building a mining strategy. The structural differences are not just cosmetic — they produce fundamentally different data.

Anonymity Removes Social Pressure

On Google, Yelp, and Amazon, reviewers typically use real names or identifiable accounts. This introduces social pressure that skews feedback in predictable ways:

- Positive bias — People are less likely to write harsh criticism under their real name

- Reciprocity pressure — Customers who received good service feel social obligation to leave positive reviews

- Retaliation fear — For local businesses especially, reviewers worry about being identified and treated differently on return visits

Reddit eliminates all of these pressures. Throwaway accounts are common. Users have no relationship with the business. There is no mechanism for the business to identify or retaliate against the reviewer. The result is feedback with significantly less positive bias than structured review platforms.

Research from Northwestern University's Kellogg School of Management found that anonymous reviews contain 34% more negative sentiment and 28% more specific criticism than attributed reviews of the same products. The criticism is not mean-spirited — it is simply more honest.

No Incentive Structures

Structured review platforms have incentive structures that distort feedback:

- Amazon Vine provides free products in exchange for reviews

- Yelp Elite status rewards prolific reviewers with social perks and event invitations

- Google Local Guides gamifies reviewing with points and badges

- Businesses directly solicit reviews from satisfied customers, creating selection bias

Social platforms have no such structures. Nobody gets points for posting about a product on Reddit. Nobody receives free merchandise for tweeting about their experience. The feedback is purely voluntary, which makes it a closer representation of actual customer sentiment.

Community Moderation Creates Quality Control

Reddit's subreddit moderation and upvote/downvote system creates a form of peer review that structured review platforms lack. When someone posts a misleading or shill-like review on Reddit, the community identifies and punishes it with downvotes, skeptical comments, and moderator action.

This community moderation is remarkably effective at filtering:

- Astroturfed positive reviews — Shill posts are routinely identified and called out

- Competitor attacks — Fake negative reviews are scrutinized and debunked

- Uninformed opinions — Comments from people who have not actually used the product are challenged by those who have

- Outdated information — Community members correct reviews based on old product versions

Where to Find Reviews on Social Platforms

Product feedback is scattered across social platforms in different formats and locations. Here is a comprehensive map.

Reddit: The Gold Mine

Reddit is the single richest source of unfiltered product feedback on the internet. The key is knowing which subreddits to mine and how to navigate them.

General product review subreddits:

- r/BuyItForLife — Long-term product durability assessments

- r/reviews — General product and service reviews

- r/ProductReviews — Dedicated review discussions

Category-specific subreddits:

- r/headphones, r/audiophile — Audio equipment

- r/SkincareAddiction, r/MakeupAddiction — Beauty and personal care

- r/HomeImprovement — Contractors, tools, home products

- r/SaaS, r/startups — Software and business tools

- r/personalfinance — Financial products and services

- r/Fitness — Equipment, supplements, programs

"Has anyone tried [product]?" threads are the highest-value Reddit content. These are explicit requests for product feedback, and the responses are typically detailed, honest, and experience-based. They can be found by searching any relevant subreddit for "has anyone tried," "experience with," "is [product] worth it," or "thoughts on."

"[Product] review after X months" posts provide long-term usage data that structured review platforms rarely capture. Initial reviews are written within days of purchase; Reddit users post updates months or years later with durability and satisfaction data.

Twitter/X: Real-Time Sentiment

Twitter/X feedback is more fragmented and less detailed than Reddit, but it captures real-time sentiment spikes — especially around product launches, outages, or pricing changes.

Where to find feedback:

- Direct mentions — @brand tweets expressing satisfaction or frustration

- Hashtag searches — #[product] or #[brand] threads

- Complaint threads — "Anyone else having issues with [product]?" posts

- Quote tweets of brand announcements — The most honest reactions to product changes

- Community Notes — Fact-checks on misleading brand claims

Twitter/X feedback characteristics:

- Short-form (the 280-character limit encourages concise, punchy opinions)

- High emotional intensity (people tweet when they are delighted or furious, rarely when merely satisfied)

- Real-time (captures sentiment during events as they happen)

- Viral amplification potential (negative experiences can reach millions)

Facebook Groups: Niche Community Feedback

Facebook Groups are an underutilized source of product intelligence, particularly for consumer products, local services, and B2B tools.

High-value Group types:

- "[City/Region] Recommendations" groups — Local service reviews from community members

- Hobbyist and enthusiast groups — Deep product knowledge and comparison discussions

- Professional groups — B2B software feedback from actual users

- Brand community groups — Both official and unofficial brand communities

Facebook Group feedback tends to be more conversational and less structured than Reddit. Users ask for recommendations, share experiences, and warn others about problems. The social context (real names, mutual connections) means feedback is slightly less raw than Reddit but still more honest than platform reviews because there is no star rating system or business relationship.

TikTok: Visual Product Reviews

TikTok has become a major product review platform, particularly for consumer goods, beauty products, and food service.

Content formats:

- "Honest review" videos — Creators testing and reviewing products on camera

- "Get ready with me" + product mentions — Organic product feedback embedded in lifestyle content

- Comment sections — Where the real opinions live (the video might be positive; the comments often tell the full story)

- Duets and stitches — Reactive content that adds commentary to product reviews

TikTok feedback is heavily visual, which provides intelligence that text reviews cannot: actual product appearance versus marketing photos, real-world performance demonstrations, and unboxing experiences. The algorithmic feed also means TikTok reviews reach audiences that are not actively searching for them — creating awareness effects that traditional reviews do not achieve.

YouTube Comments: Deep-Dive Feedback

YouTube product reviews are some of the most detailed content available, and the comment sections beneath them are rich with corroborating or contradicting feedback from other users.

Highest-value YouTube sources:

- Independent reviewer channels — Detailed, structured reviews with community discussion

- "X months later" follow-up videos — Long-term usage assessments

- Comparison videos — Direct competitive comparisons with user opinions in comments

- Comment sections — Often contain more honest feedback than the video itself (especially when the reviewer received the product for free)

Discord: Real-Time Community Feedback

Discord servers are increasingly important for software products, gaming, and tech hardware. Many SaaS companies run official Discord servers where users provide real-time feedback, report bugs, and discuss features.

What Discord provides:

- Real-time bug reports and complaints — Often surface issues before they appear in formal reviews

- Feature request discussions — Unfiltered user wishlist items

- Community sentiment shifts — Detectable through conversation tone changes over time

- Power user insights — Discord communities tend to attract the most engaged users

How to Mine Reddit Threads for Product Intelligence

Reddit deserves a dedicated methodology because of its depth and volume. Here is a systematic approach to extracting product intelligence from Reddit.

Step 1: Identify Relevant Subreddits and Threads

Start by mapping every subreddit where your product, category, or competitors are discussed. Use Reddit's search function and Google site search (site:reddit.com "[your product]") to find threads.

Build a thread inventory:

| Thread Type | Example Search | Intelligence Value |
|---|---|---|
| Direct product reviews | "[Product name] review" | High — explicit experience reports |
| Comparison threads | "[Product] vs [competitor]" | Very high — relative positioning data |
| Recommendation requests | "best [category] for [use case]" | High — market perception and alternatives |
| Complaint threads | "[Product] issues" or "[Product] problem" | Very high — failure mode identification |
| Praise threads | "[Product] is amazing" or "love my [product]" | Moderate — positive differentiator identification |
| Long-term updates | "[Product] after 1 year" | Very high — durability and satisfaction longevity |
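The inventory searches above can be generated rather than typed by hand. The following is a minimal sketch that turns the table's example searches into Google site-search URLs. The template keys, the function name, and the sample product names are illustrative assumptions, not part of any official tool.

```python
from urllib.parse import quote_plus

# Query templates adapted from the thread inventory table.
# The dictionary keys are hypothetical labels chosen for this sketch.
THREAD_QUERY_TEMPLATES = {
    "direct_review": '"{product} review"',
    "comparison": '"{product} vs {competitor}"',
    "recommendation": '"best {category} for {use_case}"',
    "complaint": '"{product} issues" OR "{product} problem"',
    "praise": '"love my {product}"',
    "long_term": '"{product} after 1 year"',
}


def build_site_searches(product, competitor, category, use_case):
    """Return {thread_type: Google search URL} restricted to reddit.com."""
    searches = {}
    for thread_type, template in THREAD_QUERY_TEMPLATES.items():
        query = "site:reddit.com " + template.format(
            product=product,
            competitor=competitor,
            category=category,
            use_case=use_case,
        )
        searches[thread_type] = (
            "https://www.google.com/search?q=" + quote_plus(query)
        )
    return searches


if __name__ == "__main__":
    # "Acme X1" and "Bolt Z" are made-up product names.
    for kind, url in build_site_searches(
        "Acme X1", "Bolt Z", "headphones", "running"
    ).items():
        print(kind, url)
```

Opening each URL manually and logging the threads you find into a spreadsheet is usually enough at this stage; the point is to run the same set of searches for every product and competitor.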

Step 2: Extract and Categorize Feedback

For each thread, extract individual feedback points and categorize them:

- Feature feedback — What users like or dislike about specific features

- Quality assessment — Perceived build quality, durability, reliability

- Value perception — Worth the price, overpriced, or underpriced

- Use case fit — Which use cases the product excels at and which it fails at

- Competitor comparison — How it stacks up against named alternatives

- Support experience — Customer service quality, warranty claims, resolution speed
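A first pass at this categorization can be automated with simple keyword matching before a human reviews the results. The sketch below assumes a hand-picked keyword list per category; the keywords and the function name are hypothetical and would need tuning for your product and its vocabulary.

```python
import re

# Hypothetical starter keywords for the six categories above.
CATEGORY_KEYWORDS = {
    "feature": ["feature", "battery", "app", "setting"],
    "quality": ["build quality", "durable", "broke", "reliable", "flimsy"],
    "value": ["price", "overpriced", "worth", "expensive", "cheap"],
    "use_case": ["use it for", "great for", "not for", "works for"],
    "competitor": ["compared to", "switched from", "better than", "vs"],
    "support": ["support", "warranty", "customer service", "refund"],
}

# Word boundaries stop "app" from matching inside "happy".
CATEGORY_PATTERNS = {
    category: re.compile(
        r"\b(?:" + "|".join(re.escape(k) for k in keywords) + r")\b"
    )
    for category, keywords in CATEGORY_KEYWORDS.items()
}


def categorize(comment):
    """Return every category whose keywords appear in the comment."""
    text = comment.lower()
    return [
        category
        for category, pattern in CATEGORY_PATTERNS.items()
        if pattern.search(text)
    ]


if __name__ == "__main__":
    print(categorize(
        "Support took two weeks to honor the warranty, and it is overpriced."
    ))
```

A comment can land in several categories at once, which matches how people actually write: a single Reddit reply often covers price, durability, and support in three sentences.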

Step 3: Weight Feedback by Credibility Signals

Not all Reddit comments are equally valuable. Weight them based on:

- Account age and karma — Older accounts with consistent activity are more credible

- Upvote count — Highly upvoted comments represent community-validated opinions

- Specificity level — "The battery lasts 6.5 hours under heavy use" is more valuable than "battery is okay"

- Claimed ownership duration — "After 8 months of daily use" carries more weight than "just got it yesterday"

- Reply engagement — Comments that generate substantive discussion often contain the most important insights
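These signals can be folded into a single weight per comment. The following sketch is one possible scoring scheme, not an established formula: the field names, the caps (one year of account age, 1,000 karma, six months of ownership, five replies), and the relative weights are all assumptions to adjust against your own data. Specificity is approximated crudely by checking whether the comment contains a number.

```python
import math
from dataclasses import dataclass


@dataclass
class RedditComment:
    text: str
    account_age_days: int
    karma: int
    upvotes: int
    ownership_months: float  # claimed by the commenter; 0 if not stated
    reply_count: int


def credibility_weight(c: RedditComment) -> float:
    """Combine the five signals into a weight between 0 and 1.

    Every cap and coefficient below is an illustrative assumption.
    """
    age = min(c.account_age_days / 365, 1.0)
    karma = min(max(c.karma, 0) / 1000, 1.0)
    account = 0.5 * age + 0.5 * karma
    # Log scale so 1,000 upvotes does not count 100x more than 10.
    votes = min(math.log10(max(c.upvotes, 0) + 1) / 2, 1.0)
    # Crude specificity proxy: does the comment cite any number?
    specific = 1.0 if any(ch.isdigit() for ch in c.text) else 0.0
    ownership = min(c.ownership_months / 6, 1.0)
    replies = min(c.reply_count / 5, 1.0)
    score = (
        0.20 * account
        + 0.25 * votes
        + 0.25 * specific
        + 0.20 * ownership
        + 0.10 * replies
    )
    return round(score, 3)


if __name__ == "__main__":
    detailed = RedditComment(
        "The battery lasts 6.5 hours under heavy use", 1200, 5000, 150, 8, 6
    )
    vague = RedditComment("battery is okay", 10, 5, 0, 0, 0)
    print(credibility_weight(detailed), credibility_weight(vague))
```

The weight is meant for ranking comments within your own dataset, so the exact numbers matter less than applying the same scheme consistently.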

Step 4: Identify Recurring Themes

After extracting feedback from 20-50 threads, themes will emerge. Track how often each theme appears and whether the sentiment around it is positive or negative.
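One lightweight way to track this is a running tally per theme. The sketch below assumes each extracted feedback point has already been labeled with a theme and a sentiment of -1, 0, or 1; the function name and the sample themes are hypothetical.

```python
from collections import defaultdict


def summarize_themes(feedback_points):
    """Tally mentions and average sentiment per theme.

    feedback_points: iterable of (theme, sentiment) pairs, where
    sentiment is -1 (negative), 0 (neutral), or 1 (positive).
    Returns (theme, mentions, average_sentiment) tuples, with the
    most-mentioned themes first.
    """
    stats = defaultdict(lambda: {"mentions": 0, "net": 0})
    for theme, sentiment in feedback_points:
        stats[theme]["mentions"] += 1
        stats[theme]["net"] += sentiment
    rows = [
        (theme, s["mentions"], s["net"] / s["mentions"])
        for theme, s in stats.items()
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)


if __name__ == "__main__":
    points = [
        ("battery", -1), ("battery", -1), ("battery", 1), ("price", 1),
    ]
    for theme, mentions, avg in summarize_themes(points):
        print(f"{theme}: {mentions} mentions, avg sentiment {avg:+.2f}")
```

A theme with many mentions and a strongly negative average is a candidate for investigation; a theme with one or two mentions is an anecdote until more threads confirm it.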

"The signal-to-noise ratio on Reddit is low — maybe 1 in 10 comments contains actionable intelligence. But that 1 comment often contains insights you will never find on any structured review platform. The key is having a system to extract it efficiently."

Social Sentiment vs. Platform Review Sentiment: Understanding the Gaps

One of the most valuable analytical exercises is comparing what people say on social platforms versus what they say on review platforms about the same product.

Typical Divergence Patterns

Pattern 1: Social is more negative than reviews

This is the most common divergence. Social platforms skew negative because:

- Frustrated customers seek community validation on social before (or instead of) leaving formal reviews

- Review platforms filter extreme negative reviews (Yelp) or make reviewing difficult (some enterprise platforms)

- Social posting has lower friction than formal reviewing

Pattern 2: Social captures different issues

Formal reviews tend to focus on the transaction experience. Social feedback often captures:

- Long-term quality degradation — "My [product] was great for 3 months, then started [failing]"

- Customer support nightmares — Detailed service recovery stories that do not fit review format

- Pricing frustration — Especially for SaaS products that raise prices or change tiers

- Ethical and values concerns — Labor practices, environmental impact, corporate behavior

Pattern 3: Social sentiment leads review sentiment by 2-6 weeks

Issues appear on Reddit and Twitter before they show up in formal review scores. Social monitoring acts as an early warning system for emerging problems.
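To use social data as an early warning system, you need some way to notice when negative mentions jump above their usual level. A minimal sketch, assuming you already count negative mentions per week: flag any week that exceeds the mean of the preceding weeks by a multiple of their standard deviation. The window size, the threshold, and the floor on the deviation are assumptions to tune, and the function name is hypothetical.

```python
from statistics import mean, pstdev


def flag_spikes(weekly_counts, window=4, threshold=2.0):
    """Return indexes of weeks whose count spikes above the baseline.

    A week is flagged when its count exceeds the mean of the previous
    `window` weeks by more than `threshold` standard deviations. The
    deviation is floored at 1.0 so a flat baseline does not make
    every small bump look like a spike.
    """
    flagged = []
    for i in range(window, len(weekly_counts)):
        baseline = weekly_counts[i - window:i]
        mu = mean(baseline)
        sigma = max(pstdev(baseline), 1.0)
        if weekly_counts[i] > mu + threshold * sigma:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    negative_mentions = [3, 4, 2, 3, 4, 15, 5]  # made-up weekly counts
    print(flag_spikes(negative_mentions))
```

A flagged week is a prompt to read the underlying threads, not a conclusion. Launches and outages produce spikes by themselves, so check what happened that week before escalating.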

Quantifying the Gap

For a product with 4.2 stars on Google and predominantly negative Reddit discussion, the true customer sentiment sits somewhere between. A reasonable framework:

- Platform reviews = Sentiment under social pressure + incentive structures + platform filtering

- Social feedback = Sentiment without filters + negativity bias + self-selection of frustrated users

- True sentiment = Weighted combination, with social feedback serving as a correction to platform optimism
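The weighted combination can be made concrete with a small calculation. The sketch below is an illustration of the framework, not a validated model: it rescales a star rating to a 0-1 score, treats the share of positive social comments as the social score, and blends the two. The 0.3 default weight for social feedback is an arbitrary assumption.

```python
def blended_sentiment(star_rating, social_positive_share, social_weight=0.3):
    """Blend a platform star rating with social sentiment into a 0-1 score.

    star_rating: average rating on a 1-5 scale.
    social_positive_share: fraction of social comments that are positive.
    social_weight: how much the social score counts (assumed, not derived).
    """
    if not 1.0 <= star_rating <= 5.0:
        raise ValueError("star_rating must be between 1 and 5")
    if not 0.0 <= social_positive_share <= 1.0:
        raise ValueError("social_positive_share must be between 0 and 1")
    if not 0.0 <= social_weight <= 1.0:
        raise ValueError("social_weight must be between 0 and 1")
    platform_score = (star_rating - 1.0) / 4.0  # 1 star -> 0, 5 stars -> 1
    return (
        (1.0 - social_weight) * platform_score
        + social_weight * social_positive_share
    )


if __name__ == "__main__":
    # 4.2 stars on Google, but only 25% of Reddit comments are positive.
    print(round(blended_sentiment(4.2, 0.25), 3))
```

With 4.2 stars and a 25% positive share on Reddit, the blended score lands between the two sources, pulled toward the platform rating because it carries more weight. Raising `social_weight` is reasonable when the social sample is large and the comments are specific.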

Challenges of Social Review Mining

Social feedback is powerful but messy. Understanding the challenges helps you build systems that account for them.

Unstructured Data

Platform reviews have ratings, dates, and structured text. Social feedback is freeform — scattered across threads, embedded in conversations, mixed with off-topic content, and formatted inconsistently. Extracting clean intelligence requires either significant manual effort or natural language processing tools capable of handling conversational text.

Noise and Irrelevance

For every useful product comment on Reddit, there are dozens of tangential, joking, or uninformed comments. The signal-to-noise ratio varies by subreddit and thread quality but typically ranges from 5% to 20% actionable content.

Sarcasm and Irony

Social platforms, Reddit especially, are heavy on sarcasm. "Oh sure, the customer support was fantastic — if you enjoy waiting on hold for 3 hours" means the opposite of what naive sentiment analysis would detect. Any automated analysis of social feedback must account for sarcasm, which occurs in approximately 12-18% of product-related Reddit comments according to a 2024 Stanford NLP study.
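Reliable sarcasm detection needs a trained model, but a cheap heuristic can at least route suspicious comments to a human. The sketch below flags comments that contain a contrast phrase, runs of punctuation, or praise words sitting next to complaint cues. Only "oh sure" comes from this article; the other phrases and word lists are hypothetical examples, and the heuristic over-flags on purpose.

```python
import re

# "oh sure" is the example used in this article; the rest are assumptions.
CONTRAST_PHRASES = ("oh sure", "yeah right", "oh great", "as if")
POSITIVE_WORDS = ("fantastic", "great", "amazing", "love", "wonderful")
NEGATIVE_CUES = ("waiting", "on hold", "broke", "never", "refund", "crash")

# Two or more ! or ? in a row, or an ellipsis of three or more dots.
EXCESS_PUNCTUATION = re.compile(r"[!?]{2,}|\.{3,}")


def needs_manual_review(comment):
    """Return True if the comment shows surface markers of sarcasm.

    This does not detect sarcasm. It only decides whether a naive
    sentiment score for this comment should be double-checked.
    """
    text = comment.lower()
    has_contrast = any(phrase in text for phrase in CONTRAST_PHRASES)
    has_excess_punctuation = EXCESS_PUNCTUATION.search(text) is not None
    mixed_polarity = any(w in text for w in POSITIVE_WORDS) and any(
        w in text for w in NEGATIVE_CUES
    )
    return has_contrast or has_excess_punctuation or mixed_polarity


if __name__ == "__main__":
    print(needs_manual_review(
        "Oh sure, the customer support was fantastic, "
        "if you enjoy waiting on hold for 3 hours"
    ))
    print(needs_manual_review("Battery lasts 6.5 hours under heavy use."))
```

Sincere comments that mix praise and complaints will also be flagged, which is acceptable here: the cost of a false flag is a few seconds of human reading, while a missed sarcastic comment gets counted as praise.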

Representativeness Bias

Social media users are not a representative sample of your customer base. Reddit skews younger, more tech-savvy, and more male. Twitter/X users tend to be more politically engaged and urban. TikTok skews younger and more female. Facebook Groups skew older and more suburban.

This means social feedback over-represents certain customer segments and under-represents others. A product that performs well on Reddit might still struggle with demographics that are not well-represented there.

Temporal Bias

Social feedback spikes around events: launches, outages, price changes, viral complaints. Between events, feedback volume drops dramatically. This creates a dataset that over-represents exceptional experiences (good and bad) and under-represents the everyday experience.

How Sentimyne Complements Social Listening

Social review mining provides the unfiltered, qualitative intelligence that structured platforms miss. But it lacks the structure, consistency, and quantitative rigor of platform reviews. The optimal approach combines both.

Sentimyne specializes in structured review analysis across 12+ platforms — Google, Yelp, Amazon, G2, Capterra, Trustpilot, TrustRadius, Product Hunt, and more. By analyzing your structured review data alongside your social listening efforts, you create a complete intelligence picture.

The Combined Workflow

Social monitoring identifies emerging issues first. A cluster of Reddit complaints about your latest update surfaces a problem before formal review scores drop. Social acts as your early warning system.

Sentimyne quantifies the impact across structured platforms. Once you identify an issue socially, Sentimyne's cross-platform analysis reveals whether the issue is appearing in formal reviews, how it is affecting your ratings, and how severe the impact is relative to other themes.

Together, they close the feedback loop:

| Intelligence Layer | Source | What It Provides |
|---|---|---|
| Early detection | Reddit, Twitter/X, Discord | First signals of emerging issues |
| Quantification | Sentimyne (Google, Yelp, G2, etc.) | Severity measurement, trend tracking |
| Competitive context | Sentimyne multi-platform SWOT | How issues compare to competitor performance |
| Qualitative depth | Reddit threads, YouTube comments | Root cause details and customer language |
| Strategic direction | Combined analysis | Prioritized action items with supporting data |

Sentimyne's SWOT analysis generates in approximately 60 seconds from any product URL, providing the structured foundation that makes social intelligence actionable. The free tier offers 2 analyses per month to establish your baseline, with Pro at $29/month for continuous monitoring.

What Social Mining Cannot Replace

Social feedback excels at early detection, qualitative depth, and honesty. But it cannot replace structured review analysis for:

- Quantitative trend tracking — Star ratings over time provide measurable trends that social mentions do not

- Competitive benchmarking — Structured platforms enable apples-to-apples comparison; social comparisons are anecdotal

- SEO and conversion impact — Google and Yelp reviews directly affect search rankings and purchase decisions; Reddit threads do not

- Systematic coverage — Structured platforms capture feedback from a broader customer base; social captures the vocal minority

The businesses that build the strongest customer intelligence programs use both. Social mining for depth and early detection. Structured analysis for measurement and accountability.

Frequently Asked Questions

Is it legal to mine Reddit and social media for product feedback?

Yes, mining publicly available content on Reddit, Twitter/X, and other social platforms for product intelligence is legal. Reddit's public API and publicly visible content are fair game for analysis. However, you should respect platform terms of service regarding automated scraping at scale, never attribute quotes to specific users without their consent in published materials, and avoid accessing private or gated content (closed Facebook Groups, private Discord servers) without appropriate access. If you are using automated tools, ensure they comply with the platform's API rate limits and terms.

How do I handle sarcasm and irony in social media sentiment analysis?

Sarcasm is the biggest challenge in social sentiment analysis. Manual review catches sarcasm effectively but does not scale. For automated analysis, look for tools that use context-aware NLP models trained on social media corpora — these models recognize linguistic markers of sarcasm (excessive punctuation, contrast phrases like "oh sure," and qualifier words). A practical hybrid approach is to use automated tools for initial filtering and then manually review any comments flagged as ambiguous. Expect sarcasm rates of 12-18% in Reddit product discussions, significantly higher than the 2-4% rate on structured review platforms.

How much social feedback do I need before drawing conclusions?

A minimum of 50-75 unique data points (individual comments or posts mentioning your product) across at least 15-20 separate threads provides a reasonable foundation for theme identification. Below that threshold, you risk drawing conclusions from a handful of vocal users. For competitive analysis, aim for similar volumes for each competitor. Remember that social feedback is inherently biased toward extreme experiences, so never use social data alone to make strategic decisions — always validate against structured review data.
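If you log each data point alongside the thread it came from, the threshold check takes one line. A minimal sketch using the lower bounds above as defaults; the function name and input shape are assumptions.

```python
def meets_minimum_sample(thread_ids, min_points=50, min_threads=15):
    """Check whether collected feedback clears the suggested minimums.

    thread_ids: one entry per data point, naming the thread it came
    from (for example, the thread URL). Duplicates are expected.
    """
    unique_threads = set(thread_ids)
    return len(thread_ids) >= min_points and len(unique_threads) >= min_threads


if __name__ == "__main__":
    # 60 comments spread over 20 threads clears both thresholds.
    spread_out = [f"thread-{i % 20}" for i in range(60)]
    # 60 comments from only 3 threads does not.
    concentrated = [f"thread-{i % 3}" for i in range(60)]
    print(meets_minimum_sample(spread_out), meets_minimum_sample(concentrated))
```

The thread count matters as much as the comment count: sixty comments from three threads mostly reflect three conversations, not sixty independent experiences.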

What tools can I use to mine Reddit for product mentions?

Several approaches exist. Reddit's native search is basic but functional for manual research. Google's site:reddit.com search operator provides better results than Reddit's own search. For automated monitoring, tools like Brandwatch, Mention, and Sprout Social track Reddit mentions alongside other social platforms. For API-based custom solutions, Reddit's Data API allows programmatic access to public posts and comments. The key is building a consistent monitoring workflow rather than relying on sporadic manual searches — set up alerts for your brand name, product names, and competitor names across all relevant subreddits.

How do I prioritize social feedback when it contradicts my review platform ratings?

Start by assessing sample sizes — if you have 500 Google reviews averaging 4.3 stars and 15 Reddit comments that are negative, the Google data is more statistically reliable. However, examine what the Reddit comments say specifically. If they identify a concrete, recurring issue (e.g., "the product breaks after 3 months"), investigate that claim directly — even a small number of specific, detailed complaints can identify real problems. Use Sentimyne to check whether the issue appears in structured reviews at lower frequency. If social and structured data consistently diverge, the truth usually sits closer to the structured data for overall satisfaction, but social data is more reliable for identifying specific failure modes.
