How to Build a Voice of Customer Program Powered by Review Intelligence

Learn how to build a Voice of Customer (VoC) program using review data as your primary intelligence source. Covers the complete VoC framework from collection to measurement, with practical templates for distributing insights across product, marketing, CX, and sales teams.

Voice of Customer programs have existed in some form since the first business asked "what do our customers think?" But for most of their history, VoC initiatives have been expensive, slow, and frustratingly limited in scope. A typical enterprise VoC program involves quarterly surveys with 12% response rates, a handful of focus groups with self-selected participants, and an analytics dashboard that three people in the organization actually check. The result is a partial, lagging picture of customer sentiment that arrives too late to drive meaningful decisions.

Reviews changed this equation entirely. Across Google, Trustpilot, G2, Amazon, Yelp, and dozens of other platforms, your customers are volunteering detailed, unstructured feedback about their experience — unprompted, unfiltered, and in their own words. They describe what they love, what frustrates them, what almost made them leave, and what they wish existed. This is Voice of Customer data at a scale and depth that no survey program can match.

The problem has never been a lack of customer voice. It has been the inability to systematically listen. Building a VoC program around review intelligence solves that problem — if you structure it correctly.

What Voice of Customer Actually Means (And What It Doesn't)

Voice of Customer is a research methodology for capturing customer expectations, preferences, and aversions. The term emerged from quality management in the 1980s and was popularized in the early 1990s by Abbie Griffin and John Hauser at MIT. Their original definition focused on structured interviews and surveys designed to translate customer needs into product requirements.

Modern VoC has expanded far beyond that original scope. Today, it encompasses every channel through which customers express opinions about their experience — support tickets, social media posts, survey responses, sales call recordings, community forum threads, and reviews.

The VoC Hierarchy of Signal Quality

Not all VoC inputs are created equal. They differ across three critical dimensions:

| VoC Source | Volume | Authenticity | Actionability |
|---|---|---|---|
| Online reviews | Very high | High (unsolicited) | High |
| Support tickets | High | High (real issues) | Very high |
| NPS/CSAT surveys | Medium | Medium (prompted) | Medium |
| Social media mentions | High | Variable | Low-medium |
| Sales call recordings | Low | High | High |
| Focus groups | Very low | Low (performative) | Medium |
| Customer interviews | Very low | High | Very high |
| Community forums | Medium | High | Medium |

Reviews sit in a unique position — they combine high volume with high authenticity. Unlike surveys, reviews are unsolicited. Unlike support tickets, reviews describe the full experience rather than just problems. Unlike social media, reviews are written with intent to inform other buyers, which means they tend to be more detailed and balanced.

"The best VoC data comes from customers who chose to speak without being asked. Reviews are the largest corpus of unprompted customer opinion that exists for most businesses."

Why Most VoC Programs Fail

Before building a review-powered VoC program, it helps to understand why traditional approaches underperform:

- Survey fatigue produces garbage data. Response rates for customer surveys have declined from roughly 25% in 2015 to under 12% in 2025. The customers who do respond are disproportionately either very satisfied or very angry, creating a bimodal distribution that misrepresents the actual customer base.

- Insights die in dashboards. The most common VoC failure mode is generating insights that never reach decision-makers. Product teams build features without checking VoC data. Marketing writes copy that contradicts what customers actually value. The data exists but sits unused.

- No feedback loop. True VoC is cyclical — collect, analyze, act, measure, repeat. Most programs stop at "collect and analyze" and never close the loop to verify whether actions taken actually improved customer sentiment.

- Siloed ownership. When VoC lives exclusively in the CX or insights team, other departments treat it as someone else's project rather than a shared intelligence resource.

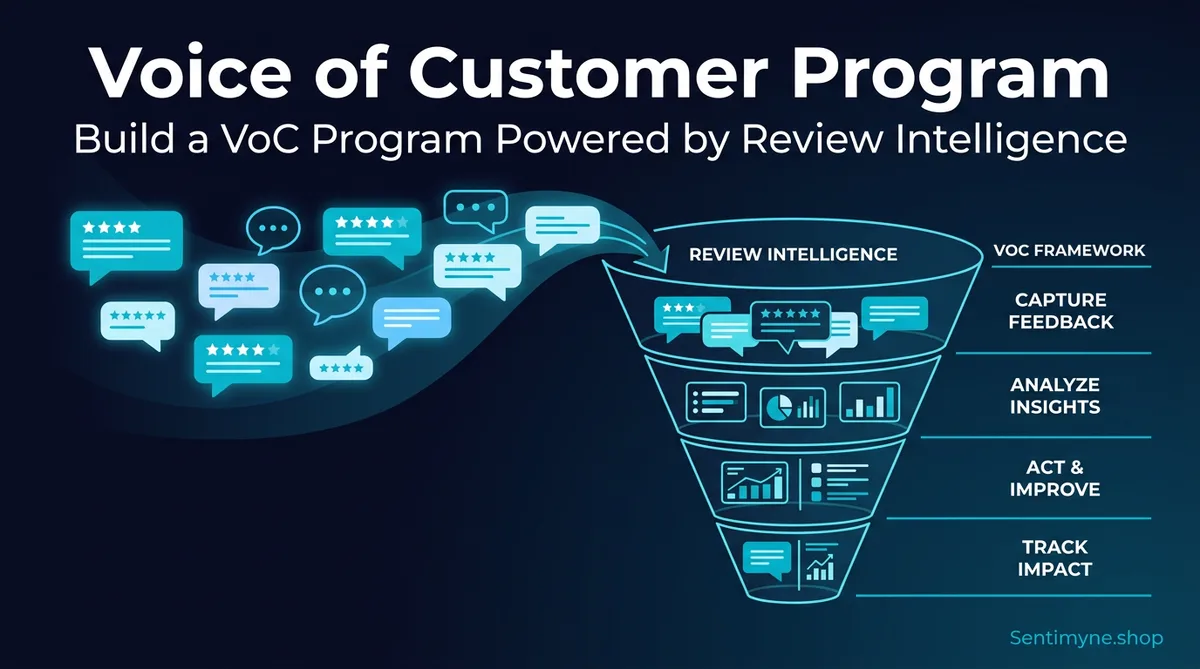

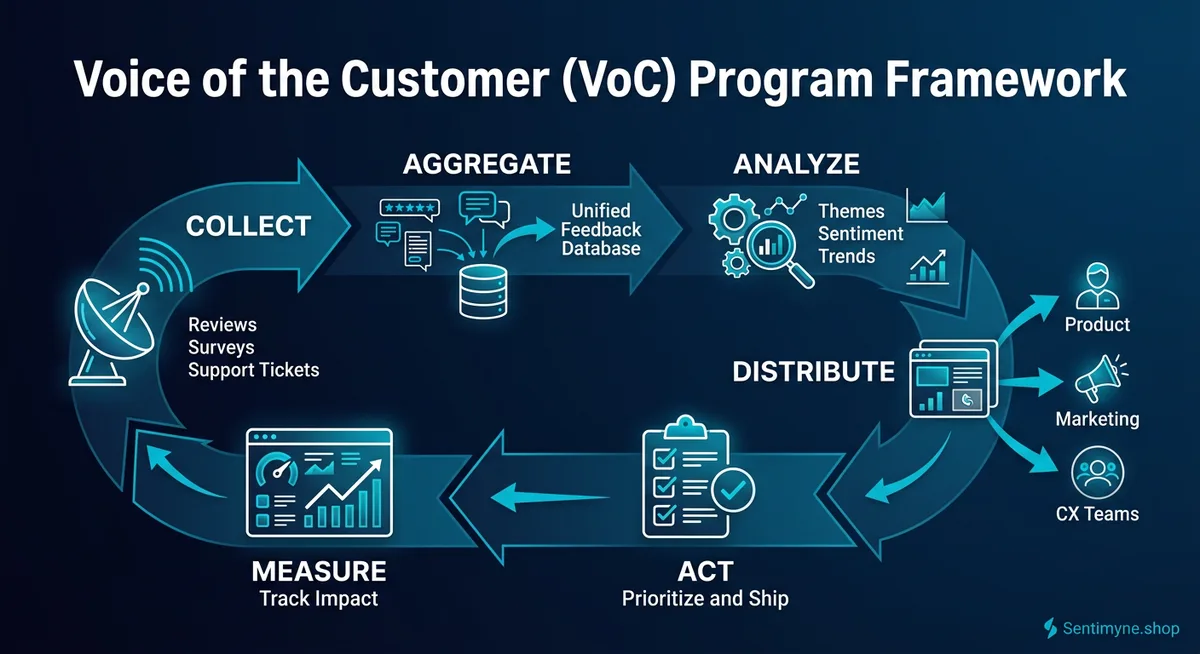

The VoC Framework: Collect, Aggregate, Analyze, Distribute, Act, Measure

A functional VoC program follows six stages. Skip any one and the entire system underperforms.

Stage 1: Collect — Identify and Connect All Review Sources

The first stage maps every platform where customers leave feedback and establishes a reliable collection mechanism.

Primary review sources for most businesses:

- Google Business Profile — The highest-volume source for local and consumer businesses. Google reviews influence 87% of consumers during purchase decisions.

- Trustpilot — Dominant in Europe and growing in North America. Strong for e-commerce and SaaS.

- G2 / Capterra / TrustRadius — Essential for B2B software. Structured review formats produce highly detailed feedback.

- Amazon — Critical for product-based businesses. Amazon reviews contain specific product performance data.

- Yelp — Still influential for restaurants, services, and local businesses despite controversies.

- Industry verticals — TripAdvisor (hospitality), Healthgrades (healthcare), Avvo (legal), Zillow (real estate), and dozens of others.

- App stores — Apple App Store and Google Play for any business with a mobile app.

- Social reviews — Facebook recommendations, Reddit mentions, X/Twitter feedback threads.

Beyond reviews, secondary VoC sources include:

- Support ticket themes and categories

- NPS and CSAT survey free-text responses

- Sales objection logs from CRM

- Churn interview notes

- Community forum threads

The goal in Stage 1 is comprehensive coverage. If customers are talking about you somewhere, you should be listening there.

Stage 2: Aggregate — Centralize Feedback Into One System

Raw reviews scattered across 12 platforms are useless for systematic analysis. Aggregation means pulling all feedback into a single, searchable, analyzable repository.

What good aggregation looks like:

- All reviews from all platforms in one place

- Standardized metadata (date, rating, platform, product/location, reviewer segment)

- Deduplication of cross-posted reviews

- Historical data preserved for trend analysis

- New reviews flowing in automatically, not through manual exports

This is where most manual VoC efforts collapse. A team can manually check five platforms weekly for a few months, but the process is tedious and inevitably gets deprioritized when other work demands attention.
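For teams that assemble the repository themselves, the core of aggregation is mapping every platform's reviews into one schema and dropping cross-posted copies. The Python sketch below assumes reviews have already been exported from each platform; the `Review` fields and the text-fingerprint approach to deduplication are illustrative choices, not any tool's actual data model. It catches exact and trivially reformatted duplicates only, not paraphrases.

```python
import hashlib
import re
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Review:
    """One review in a standardized schema (field names are illustrative)."""
    platform: str
    review_date: date
    rating: float        # assumed already normalized to a 1-5 scale
    text: str
    location: str = ""   # product or location identifier
    segment: str = ""    # reviewer segment, where the platform exposes one


def fingerprint(review: Review) -> str:
    """Hash of the review text after lowercasing and collapsing punctuation
    and whitespace, so exact and trivially reformatted copies match."""
    cleaned = " ".join(re.sub(r"[^a-z0-9]+", " ", review.text.lower()).split())
    return hashlib.sha256(cleaned.encode("utf-8")).hexdigest()


def aggregate(reviews: list[Review]) -> list[Review]:
    """Deduplicate by fingerprint, keeping the earliest copy, sorted by date."""
    earliest: dict[str, Review] = {}
    for review in sorted(reviews, key=lambda r: r.review_date):
        earliest.setdefault(fingerprint(review), review)
    return sorted(earliest.values(), key=lambda r: r.review_date)


reviews = [
    Review("google", date(2025, 3, 1), 5.0, "Great support team!"),
    Review("trustpilot", date(2025, 3, 2), 5.0, "great support team"),
    Review("g2", date(2025, 3, 3), 2.0, "Onboarding was slow."),
]
unique = aggregate(reviews)
print([r.platform for r in unique])  # ['google', 'g2']
```

Keeping the earliest copy preserves the original posting date, which matters for the trend analysis in the next stage.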

Sentimyne solves the aggregation problem by pulling reviews from 12+ platforms into a single analysis dashboard. Instead of logging into a dozen different platforms and copying reviews into spreadsheets, the tool consolidates everything and makes it analysis-ready in under 60 seconds.

Stage 3: Analyze — Extract Themes, Sentiment, and Trends

Analysis transforms raw feedback into structured insights. This is the stage where most VoC programs either succeed or produce noise that nobody trusts.

Effective review analysis answers five questions:

- What are customers talking about? (Theme identification)

- How do they feel about it? (Sentiment scoring)

- Is it getting better or worse? (Trend detection)

- How does it compare to competitors? (Competitive benchmarking)

- What should we do about it? (Prioritized recommendations)
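Trend detection (the third question) can start as simply as comparing each theme's average sentiment in the current period with the previous one. This is a minimal sketch, assuming theme labels and per-mention sentiment scores in [-1, 1] already come from an upstream analysis step; the split date and the minimum-mentions guard are hypothetical parameters.

```python
from collections import defaultdict
from datetime import date
from statistics import mean

# (date of review, theme label, sentiment score in [-1, 1]); labels and
# scores are assumed to come from an upstream analysis step.
Mention = tuple[date, str, float]


def theme_trends(mentions: list[Mention], split: date,
                 min_mentions: int = 3) -> dict[str, tuple[float, float, float]]:
    """Return {theme: (previous_mean, current_mean, delta)}.

    Mentions dated before `split` form the previous period, the rest the
    current one. Themes with fewer than `min_mentions` in either period are
    skipped so that a handful of reviews cannot create a trend."""
    previous: dict[str, list[float]] = defaultdict(list)
    current: dict[str, list[float]] = defaultdict(list)
    for day, theme, score in mentions:
        (current if day >= split else previous)[theme].append(score)
    trends = {}
    for theme in sorted(previous.keys() & current.keys()):
        if min(len(previous[theme]), len(current[theme])) >= min_mentions:
            prev_mean, curr_mean = mean(previous[theme]), mean(current[theme])
            trends[theme] = (round(prev_mean, 2), round(curr_mean, 2),
                             round(curr_mean - prev_mean, 2))
    return trends


mentions = [
    (date(2025, 1, 10), "onboarding", 0.6),
    (date(2025, 1, 12), "onboarding", 0.4),
    (date(2025, 1, 20), "onboarding", 0.5),
    (date(2025, 1, 22), "pricing", -0.3),
    (date(2025, 2, 5), "onboarding", -0.2),
    (date(2025, 2, 9), "onboarding", -0.4),
    (date(2025, 2, 14), "onboarding", 0.0),
]
print(theme_trends(mentions, split=date(2025, 2, 1)))
# onboarding drops by about 0.7; pricing is skipped (no current-period data)
```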

Analysis methods ranked by effectiveness:

- AI-powered theme clustering — Groups reviews by topic automatically, surfaces patterns invisible to manual reading. Modern NLP can process thousands of reviews in minutes and identify themes with nuance that keyword matching misses.

- Sentiment analysis at the aspect level — Not just "positive" or "negative" overall, but sentiment per topic. A product might score 4.5 stars overall but have deeply negative sentiment around one specific feature.

- SWOT extraction — Mapping review themes to Strengths, Weaknesses, Opportunities, and Threats produces outputs that leadership teams already understand and can act on.

- Manual reading — Still valuable for calibration and edge cases, but not scalable as a primary method.
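To make the difference between overall and aspect-level sentiment concrete, here is a deliberately tiny lexicon-based version. It is a stand-in, not a recommendation: keyword matching misses nuance, and a real pipeline would use an NLP model. The aspect and polarity word lists are hypothetical. What the sketch shows is the output shape, one score per aspect rather than one score per review.

```python
import re
from collections import defaultdict

# Hypothetical, deliberately tiny lexicons; a production pipeline would
# replace both with a trained aspect-based sentiment model.
ASPECTS = {
    "battery": {"battery", "charge", "charging"},
    "screen": {"screen", "display"},
    "support": {"support", "service", "agent"},
}
POSITIVE = {"great", "love", "excellent", "fast", "helpful", "bright"}
NEGATIVE = {"terrible", "slow", "dies", "broken", "rude", "dim"}


def aspect_sentiment(reviews: list[str]) -> dict[str, float]:
    """Average sentence-level polarity (-1..1) per aspect across reviews."""
    scores = defaultdict(list)
    for review in reviews:
        for sentence in re.split(r"[.!?]+", review.lower()):
            words = set(re.findall(r"[a-z]+", sentence))
            pos, neg = len(words & POSITIVE), len(words & NEGATIVE)
            if pos + neg == 0:
                continue  # no opinion words: skip rather than guess
            polarity = (pos - neg) / (pos + neg)
            for aspect, keywords in ASPECTS.items():
                if words & keywords:
                    scores[aspect].append(polarity)
    return {a: round(sum(s) / len(s), 2) for a, s in scores.items()}


reviews = [
    "Love the screen, it is bright. Battery dies by noon.",
    "Great display! The battery is terrible.",
    "Support was helpful and fast.",
]
print(aspect_sentiment(reviews))
# {'screen': 1.0, 'battery': -1.0, 'support': 1.0}
```

All three reviews read as broadly positive, yet the battery aspect is uniformly negative, which is exactly the signal an overall score would hide.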

"The shift from reading reviews to analyzing reviews is the difference between anecdote-driven decisions and data-driven decisions. Both use the same raw material. Only one produces reliable conclusions."

Stage 4: Distribute — Get Insights to the Right Teams

This is the most commonly skipped stage and the reason most VoC programs fail to create impact. Analysis that stays in the insights team's inbox is analysis that never influences decisions.

Distribution matrix — who needs what:

| Team | What They Need | Format | Frequency |
|---|---|---|---|
| Product | Feature requests, pain points, competitive gaps | Theme report with verbatims | Weekly |
| Marketing | Customer language, value drivers, objection patterns | Keyword clusters + quotes | Bi-weekly |
| CX/Support | Emerging issues, service complaints, process failures | Alert-based + weekly digest | Real-time + weekly |
| Sales | Competitive intelligence, win/loss themes, objection handling | Battle cards + trend reports | Monthly |
| Leadership | Overall sentiment trends, SWOT, strategic themes | Executive summary + dashboard | Monthly |
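The matrix can also be kept as data so that routing each insight is mechanical rather than a weekly judgment call. In this sketch the team names and cadences come from the table; the insight category names and the category-to-team mapping are hypothetical.

```python
# Team names and cadences mirror the distribution matrix; the insight
# categories and their team mapping are hypothetical.
CADENCE = {
    "Product": "weekly",
    "Marketing": "bi-weekly",
    "CX/Support": "real-time + weekly",
    "Sales": "monthly",
    "Leadership": "monthly",
}
ROUTING = {
    "feature_request": ["Product"],
    "pain_point": ["Product", "CX/Support"],
    "competitive_gap": ["Product", "Sales"],
    "customer_language": ["Marketing"],
    "objection": ["Marketing", "Sales"],
    "service_complaint": ["CX/Support"],
    "strategic_theme": ["Leadership"],
}


def route(insights: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Group (category, summary) pairs into one queue per team.

    Unknown categories land in Leadership's queue so nothing is dropped."""
    queues: dict[str, list[str]] = {team: [] for team in CADENCE}
    for category, summary in insights:
        for team in ROUTING.get(category, ["Leadership"]):
            queues[team].append(summary)
    return queues


queues = route([
    ("feature_request", "Bulk export keeps coming up"),
    ("objection", "Pricing seen as high vs. a named competitor"),
    ("data_residency", "New mentions of where data is stored"),
])
for team, items in queues.items():
    print(f"{team} ({CADENCE[team]}): {items}")
```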

Distribution formats that actually get read:

- Weekly VoC Digest — A one-page email with the top 3 themes, notable quotes, and sentiment trend arrows. Takes 2 minutes to read. Send to all stakeholders.

- Monthly Deep Dive — A 5-10 page analysis covering theme evolution, competitive shifts, and recommended actions. Present in a 30-minute meeting rather than sending as an attachment nobody opens.

- Real-Time Alerts — Automated notifications when negative sentiment spikes, a competitor is mentioned repeatedly, or a new theme emerges. Critical for CX and product teams.

- Quarterly Strategy Brief — A comprehensive review of VoC data mapped to business objectives, presented to leadership as input to strategic planning.
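A real-time alert for a negative sentiment spike needs a baseline to compare against. One simple approach, sketched here under stated assumptions, compares the share of low-rated reviews (rating of 2 or below) in the last week with the share over the preceding eight weeks. The window lengths, the 2x ratio, and the minimum count are illustrative defaults that would need tuning against your own review volume.

```python
from datetime import date, timedelta


def negative_spike(reviews: list[tuple[date, int]], today: date,
                   window_days: int = 7, baseline_days: int = 56,
                   ratio: float = 2.0, min_negatives: int = 3) -> bool:
    """True when the share of reviews rated 2 or below in the last
    `window_days` is at least `ratio` times the share in the preceding
    `baseline_days`, and the recent window has at least `min_negatives` of them."""
    recent_start = today - timedelta(days=window_days)
    baseline_start = recent_start - timedelta(days=baseline_days)
    recent = [r for d, r in reviews if recent_start < d <= today]
    baseline = [r for d, r in reviews if baseline_start < d <= recent_start]
    if not recent or not baseline:
        return False  # not enough history to judge
    recent_neg = sum(1 for r in recent if r <= 2)
    if recent_neg < min_negatives:
        return False
    recent_share = recent_neg / len(recent)
    baseline_share = sum(1 for r in baseline if r <= 2) / len(baseline)
    # With a spotless baseline, any qualifying run of negatives is a spike.
    return baseline_share == 0 or recent_share >= ratio * baseline_share


today = date(2025, 6, 30)
history = [(today - timedelta(days=d), 5) for d in range(8, 60)]  # calm baseline
history.append((today - timedelta(days=20), 1))                   # one old complaint
history += [(today - timedelta(days=d), 1) for d in range(4)]      # four recent 1-stars
history += [(today - timedelta(days=d), 4) for d in range(4)]      # four recent 4-stars
print(negative_spike(history, today))  # True
```

Comparing shares rather than raw counts keeps a busy week with proportionally normal complaints from triggering the alert.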

Stage 5: Act — Turn Insights Into Decisions

Distribution without action is just entertainment. The Act stage connects VoC insights to specific business decisions and tracks whether those decisions were implemented.

The VoC Action Framework:

- Quick wins (implement within 1 week) — Copy changes, FAQ updates, support script adjustments, response templates. These are low-effort changes that review data reveals immediately.

- Sprint items (implement within 1-2 sprints) — Feature tweaks, UI improvements, process changes, pricing page clarifications. Add these to product and marketing backlogs with VoC data as supporting evidence.

- Strategic initiatives (implement within 1-2 quarters) — New features, market repositioning, segment targeting changes, competitive response strategies. These require leadership approval and cross-functional effort.

- Monitoring items (watch, don't act yet) — Themes that appear in low volume or without clear direction. Track over time to see if they grow into actionable patterns.
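The four buckets can be applied consistently with a small triage rule. This sketch assumes each insight carries a mention count and a rough effort estimate in working days, and that a sprint is two weeks; all thresholds are hypothetical. It handles only the low-volume case of monitoring items, not themes that lack a clear direction, which still need human judgment.

```python
def triage(mention_count: int, effort_days: float, min_mentions: int = 5) -> str:
    """Place a VoC insight in one of the four action buckets.

    Hypothetical thresholds: themes below `min_mentions` are monitored;
    otherwise the bucket follows estimated effort in working days, assuming
    two-week (10 working day) sprints."""
    if mention_count < min_mentions:
        return "monitor"
    if effort_days <= 5:
        return "quick win"              # shippable within about a week
    if effort_days <= 20:
        return "sprint item"            # fits in one or two sprints
    return "strategic initiative"       # quarter-scale, needs leadership approval


for mentions, effort in [(40, 2), (12, 15), (25, 60), (3, 1)]:
    print(mentions, effort, triage(mentions, effort))
```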

Making action systematic:

Create a VoC Action Log, a simple spreadsheet or project board that tracks:

- The insight (with supporting review quotes)

- The recommended action

- The assigned owner

- The deadline

- The expected impact

- The actual outcome
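If the log starts life as a spreadsheet, a fixed schema keeps rows comparable from month to month. Here is a minimal Python sketch of one entry holding the six items in the log, exported to CSV; the field names and sample entry are illustrative.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date
from typing import Optional


@dataclass
class ActionLogEntry:
    """One row of the VoC Action Log (illustrative field names)."""
    insight: str
    supporting_quotes: str
    recommended_action: str
    owner: str
    deadline: date
    expected_impact: str
    actual_outcome: Optional[str] = None  # filled in at a later monthly review


def to_csv(entries: list[ActionLogEntry]) -> str:
    """Serialize entries to CSV text that opens in any spreadsheet tool."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(ActionLogEntry)])
    writer.writeheader()
    for entry in entries:
        row = asdict(entry)
        row["deadline"] = entry.deadline.isoformat()
        writer.writerow(row)  # None is written as an empty cell
    return buffer.getvalue()


log = [ActionLogEntry(
    insight="Setup instructions confuse new users",
    supporting_quotes='"Took me an hour to find the API key"',
    recommended_action="Rewrite the quick-start guide",
    owner="Docs team",
    deadline=date(2025, 7, 15),
    expected_impact="Fewer onboarding complaints",
)]
print(to_csv(log))
```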

Review this log in your monthly VoC meeting. The discipline of tracking actions and outcomes is what separates performative VoC programs from ones that actually move metrics.

Stage 6: Measure — Prove the Program's Impact

A VoC program that cannot demonstrate its value will lose budget and executive attention within two quarters. Measurement closes the loop and justifies continued investment.

Primary VoC program metrics:

- Sentiment trend — Is overall sentiment improving quarter over quarter? Track by platform, product, and theme.

- Theme resolution rate — When VoC identifies a problem, how often is it addressed? What percentage of flagged issues result in action?

- Time to insight — How quickly does a new theme go from appearing in reviews to being reported to stakeholders? Aim for under one week.

- Decision influence — How many product, marketing, or CX decisions explicitly cite VoC data as an input? Track this in your action log.

- Rating improvement — The bluntest metric but the most universally understood. A VoC program that works should eventually push average ratings upward.
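Three of these metrics fall straight out of the action log. The sketch below assumes a hypothetical `FlaggedTheme` record that stores when a theme first appeared in reviews, when it was reported, and whether it led to action; the sample themes and decision counts are invented for illustration.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass
class FlaggedTheme:
    """A theme the program surfaced (hypothetical record shape)."""
    name: str
    first_seen_in_reviews: date
    reported_to_stakeholders: date
    action_taken: bool


def theme_resolution_rate(themes: list[FlaggedTheme]) -> float:
    """Share of flagged themes that resulted in an action."""
    return sum(t.action_taken for t in themes) / len(themes) if themes else 0.0


def median_time_to_insight(themes: list[FlaggedTheme]) -> float:
    """Median days from first appearance in reviews to the stakeholder report."""
    return float(median((t.reported_to_stakeholders - t.first_seen_in_reviews).days
                        for t in themes))


def decision_influence(citing_voc: int, total_decisions: int) -> float:
    """Share of logged decisions that cite VoC data as an input."""
    return citing_voc / total_decisions if total_decisions else 0.0


themes = [
    FlaggedTheme("slow checkout", date(2025, 5, 1), date(2025, 5, 5), True),
    FlaggedTheme("confusing pricing", date(2025, 5, 3), date(2025, 5, 12), True),
    FlaggedTheme("missing dark mode", date(2025, 5, 10), date(2025, 5, 13), False),
]
print(round(theme_resolution_rate(themes), 2))  # 0.67
print(median_time_to_insight(themes))           # 4.0, inside the one-week target
print(decision_influence(6, 8))                 # 0.75
```

The median is used for time to insight so that one theme that slipped through the cracks does not mask an otherwise fast pipeline.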

Building Your VoC Cadence

Consistency matters more than perfection. A simple cadence executed reliably beats an ambitious one that gets abandoned.

Recommended VoC Cadence

Weekly (30 minutes):

- Pull new reviews from all platforms

- Run sentiment and theme analysis

- Draft and send the Weekly VoC Digest

- Flag any urgent issues for immediate action

Monthly (2-3 hours):

- Compile the Monthly Deep Dive report

- Review the VoC Action Log and check the status of previous actions

- Present findings to stakeholders in a 30-minute meeting

- Update competitive intelligence based on competitor review trends

Quarterly (half day):

- Produce the Quarterly Strategy Brief

- Map VoC themes to business objectives and OKRs

- Assess program effectiveness: are insights being used? Are actions producing results?

- Adjust collection sources and distribution lists as needed
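The weekly digest step is easy to automate once theme statistics exist. This is a sketch, assuming each theme already has a weekly mention count, last week's and this week's average sentiment, and a representative quote; the `ThemeStat` shape and the 0.05 dead band for a flat arrow are hypothetical.

```python
from typing import NamedTuple


class ThemeStat(NamedTuple):
    name: str
    mentions: int        # mentions this week
    prev_score: float    # last week's average sentiment, -1..1
    curr_score: float    # this week's average sentiment, -1..1
    quote: str           # one representative verbatim


def arrow(prev: float, curr: float, dead_band: float = 0.05) -> str:
    """Trend arrow; moves smaller than `dead_band` count as flat."""
    delta = curr - prev
    if delta > dead_band:
        return "↑"
    if delta < -dead_band:
        return "↓"
    return "→"


def weekly_digest(stats: list[ThemeStat], top_n: int = 3) -> str:
    """Plain-text digest body: top themes by volume, score, arrow, quote."""
    top = sorted(stats, key=lambda s: s.mentions, reverse=True)[:top_n]
    lines = ["Weekly VoC Digest", ""]
    for rank, s in enumerate(top, start=1):
        trend = arrow(s.prev_score, s.curr_score)
        lines.append(f"{rank}. {s.name} ({s.mentions} mentions), "
                     f"sentiment {s.curr_score:+.2f} {trend}")
        lines.append(f'   "{s.quote}"')
    return "\n".join(lines)


stats = [
    ThemeStat("delivery speed", 42, 0.30, 0.10, "Took nine days to arrive."),
    ThemeStat("packaging", 8, 0.20, 0.22, "Arrived well protected."),
    ThemeStat("customer support", 27, -0.10, 0.25, "Support fixed it in one call."),
    ThemeStat("price", 15, 0.05, 0.04, "Fair for the quality."),
]
print(weekly_digest(stats))
```

The dead band keeps week-to-week noise from flipping arrows back and forth, which would train readers to ignore them.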

Staffing a VoC Program

For small teams (1-50 employees): One person owns VoC as 20% of their role. Use tools to automate collection and analysis. Focus the human effort on distribution and action tracking.

For mid-market (50-500 employees): A dedicated VoC analyst or a CX team member with VoC as a primary responsibility. Establish formal distribution channels and a monthly review meeting with product and marketing leads.

For enterprise (500+ employees): A VoC team of 2-4 people, potentially sitting within a Customer Insights or Market Research function. Formal SLAs for insight delivery, integration with product management tools, and executive dashboards.

At every scale, automation is critical. The collection and initial analysis stages should not consume human hours that are better spent on distribution, action, and measurement.

Sentimyne makes the collection-to-analysis pipeline nearly instantaneous. Feed it your review sources, and within 60 seconds you have themed, sentiment-scored, SWOT-organized intelligence ready for distribution. The free plan (2 analyses per month) is enough to pilot a basic VoC cadence. The Pro plan at $29/month supports the weekly cadence that serious VoC programs require.

Measuring VoC Program Impact on Revenue

Leadership does not care about sentiment scores in the abstract. They care about revenue, retention, and cost. Connect your VoC metrics to financial outcomes:

- Rating improvement → Conversion improvement. Research consistently shows that each 0.1-star improvement in average rating correlates with a 1.5-3% increase in conversion rate, depending on the industry and platform.

- Faster issue resolution → Lower churn. When VoC identifies emerging complaints early, CX teams can address them before they become churn drivers. Reducing churn by even 1-2 percentage points can represent significant revenue retention.

- Better product decisions → Higher NRR. Product teams that build what customers actually want (rather than what internal stakeholders assume they want) see higher adoption of new features and stronger net revenue retention.

- Marketing language alignment → Higher CTR. When marketing uses the exact language customers use in reviews, ad and email click-through rates improve measurably because the messaging resonates with actual customer mental models.
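To turn the first two links into numbers leadership can check, the arithmetic is straightforward. This sketch takes the 1.5-3% per 0.1 star range cited above at face value, treats it as a relative lift that scales linearly (a simplification the underlying research does not necessarily support), and uses a first-order churn estimate that ignores compounding and expansion revenue. The revenue figure in the example is hypothetical.

```python
def conversion_lift_range(old_rating: float, new_rating: float,
                          low_pct_per_tenth: float = 1.5,
                          high_pct_per_tenth: float = 3.0) -> tuple[float, float]:
    """Relative conversion lift (%) implied by a rating change, assuming the
    cited 1.5-3% per 0.1 star scales linearly (a simplification)."""
    tenths = round((new_rating - old_rating) * 10, 6)  # guard against float noise
    return tenths * low_pct_per_tenth, tenths * high_pct_per_tenth


def retained_revenue(annual_revenue: float, churn_reduction_pts: float) -> float:
    """First-order estimate of yearly revenue kept when churn falls by
    `churn_reduction_pts` percentage points (ignores compounding and expansion)."""
    return annual_revenue * churn_reduction_pts / 100


low, high = conversion_lift_range(4.2, 4.5)
print(f"Estimated conversion lift: {low:.1f}% to {high:.1f}%")        # 4.5% to 9.0%
print(f"Revenue retained: ${retained_revenue(2_000_000, 1.5):,.0f}")  # $30,000
```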

Common VoC Mistakes to Avoid

- Collecting without analyzing. Aggregating reviews into a spreadsheet and never analyzing them is worse than not collecting at all — it creates the illusion of a VoC program without any of the benefits.

- Analyzing without distributing. Insights trapped in one team's reports are insights that do not influence decisions across the organization.

- Distributing without acting. Sending reports that nobody reads or responds to erodes trust in the program and wastes analyst time.

- Acting without measuring. If you cannot demonstrate that VoC-driven actions produced results, leadership will eventually question the investment.

- Over-engineering the program. Start simple. A weekly email digest and a monthly meeting are enough to begin. Expand complexity only after the basic cadence is established and delivering value.

Frequently Asked Questions

How many reviews do I need to start a VoC program?

You can start with as few as 50-100 reviews if they are recent and representative. The key is having enough volume to identify recurring themes rather than one-off opinions. As your program matures, aim for continuous collection that brings in new reviews weekly.
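One way to operationalize "recurring rather than one-off" is a support threshold: a theme counts only if it appears in a minimum number of distinct reviews and a minimum share of the sample. Both thresholds in this sketch are illustrative assumptions, and the theme tags per review are assumed to come from your analysis step.

```python
from collections import Counter


def recurring_themes(review_themes: list[set[str]], min_share: float = 0.05,
                     min_count: int = 3) -> dict[str, int]:
    """Themes mentioned in enough distinct reviews to count as recurring.

    `review_themes` holds the set of theme labels tagged on each review.
    A theme qualifies if it appears in at least `min_count` reviews AND at
    least `min_share` of all reviews (both thresholds are illustrative)."""
    counts = Counter(theme for themes in review_themes for theme in themes)
    threshold = max(min_count, min_share * len(review_themes))
    return {t: n for t, n in counts.most_common() if n >= threshold}


# 60 hypothetical reviews: most carry no tagged theme.
sample = [{"shipping"}] * 9 + [{"sizing"}] * 4 + [{"gift wrap"}] + [set()] * 46
print(recurring_themes(sample))  # {'shipping': 9, 'sizing': 4}
```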

Should my VoC program include data beyond reviews?

Yes, eventually. Reviews should be the foundation because of their volume and authenticity, but a mature VoC program incorporates support tickets, survey responses, sales call notes, and social mentions. Start with reviews, prove the model works, then expand sources.

How do I get other teams to actually use VoC insights?

Make the insights easy to consume and directly relevant to each team's goals. Product teams respond to feature request rankings with user quotes. Marketing responds to customer language patterns they can use in copy. Sales responds to competitive intelligence they can use in deals. Tailor the format, not just the content.

What tools do I need for a VoC program?

At minimum, you need a review aggregation and analysis tool, a distribution mechanism (email or Slack), and a tracking system for actions (spreadsheet or project board). Sentimyne handles the aggregation and analysis layer — pulling reviews from 12+ platforms and producing themed, sentiment-scored intelligence in 60 seconds.

How long before a VoC program shows measurable results?

Most programs begin surfacing actionable insights within the first month. Measurable business impact — improved ratings, reduced support volume, better conversion — typically appears within 2-3 months of consistent execution. The key is maintaining the cadence and tracking actions to outcomes.
