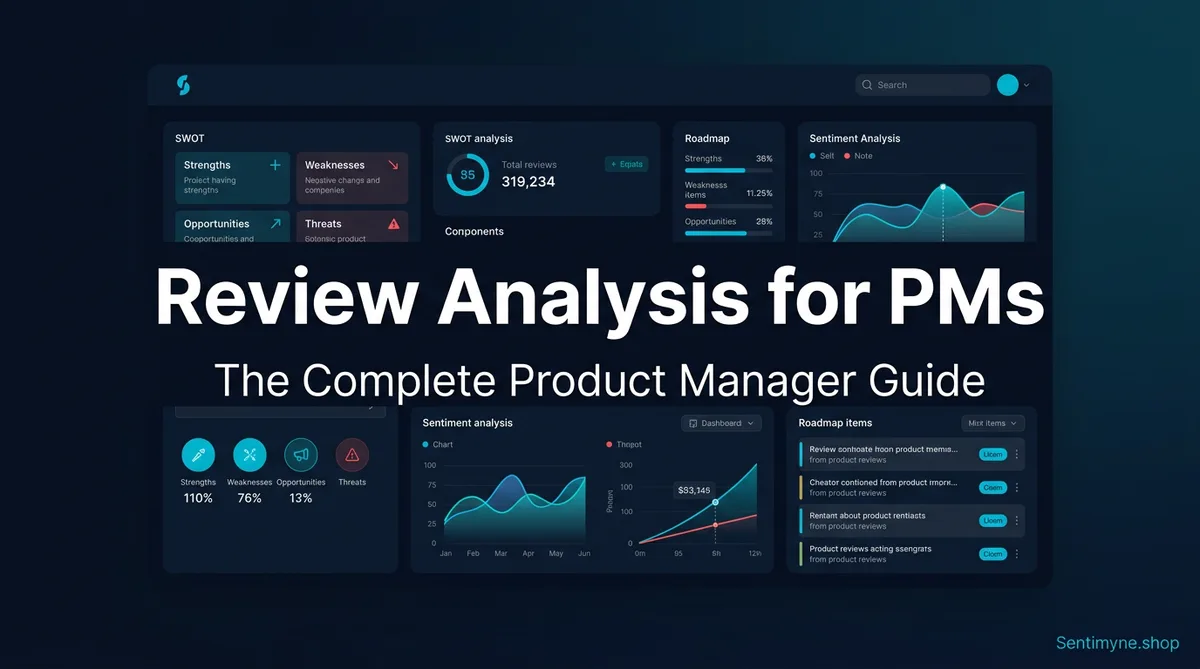

Review Analysis for Product Managers: The Complete Guide

The complete guide to review analysis for product managers. Learn the PM review workflow, how to translate review themes into Jira tickets, prioritize features from sentiment data, build engineering cases from review evidence, and integrate Sentimyne into your weekly workflow.

Customer reviews are the richest source of unstructured product feedback available to any product manager. They are unsolicited, detailed, emotionally honest, and continuously generated. Unlike surveys (which suffer from response bias), usage analytics (which show what but not why), or customer interviews (which are expensive and sample-limited), reviews capture what real users voluntarily chose to write about their experience.

Yet in most product organizations, reviews are treated as a customer success responsibility rather than a product intelligence asset. The CS team reads them, responds to the upset ones, and maybe flags a particularly bad one to the PM in Slack. That is not a strategy — it is damage control.

This guide is for product managers who want to systematically extract intelligence from reviews and integrate it into their existing workflows. It covers the weekly PM review workflow, translating review themes into actionable tickets, building business cases with review evidence, and maintaining the practice without burning out.

Why PMs Should Own Review Analysis

Before getting into the how, let us address the organizational question: why should product management own review analysis rather than leaving it with customer success, marketing, or a dedicated insights team?

Reviews Contain Product-Specific Signal

Customer success teams read reviews to identify support issues and maintain customer relationships. Marketing reads reviews for testimonials and social proof. Neither team is equipped to extract the product-specific signal that reviews contain: feature gaps, UX friction points, competitive comparisons, and unmet use cases.

A review that says "I wish this had a bulk export feature — I'm spending 20 minutes manually exporting each report" is a customer support issue to CS, a potential churn risk to marketing, but to a product manager it is a specific feature request with a quantified pain point (20 minutes per session) and a clear use case (bulk reporting). Only a PM has the context to translate that into a prioritized backlog item with acceptance criteria.

Reviews Are Unfiltered Demand Signals

Customer interviews and surveys are valuable, but they suffer from a fundamental limitation: you choose who to talk to and what to ask about. Reviews flip this dynamic — customers choose to write about what matters most to them, unprompted.

This makes reviews a largely unfiltered demand signal. The features and issues that appear most frequently in reviews are a strong indicator of what the customers who write reviews care about most. Surveys cannot fully replicate this because they introduce researcher bias in question design and respondent bias in selective participation.

The Competitive Dimension

As a PM, you are responsible for competitive positioning. Customer reviews — yours and your competitors' — are the most granular source of competitive intelligence available. A SWOT analysis of a competitor's reviews reveals exactly which features their users love, which ones frustrate them, and what they wish existed. This is intelligence that previously required expensive market research reports.

"I stopped reading competitive analysis decks from our market research vendor. They were 60 pages of obvious observations. I started running Sentimyne SWOT reports on our top 3 competitors' reviews instead. In 10 minutes I had more actionable competitive intelligence than the vendor delivered in a quarter." — VP of Product at a B2B SaaS company

The PM Review Workflow

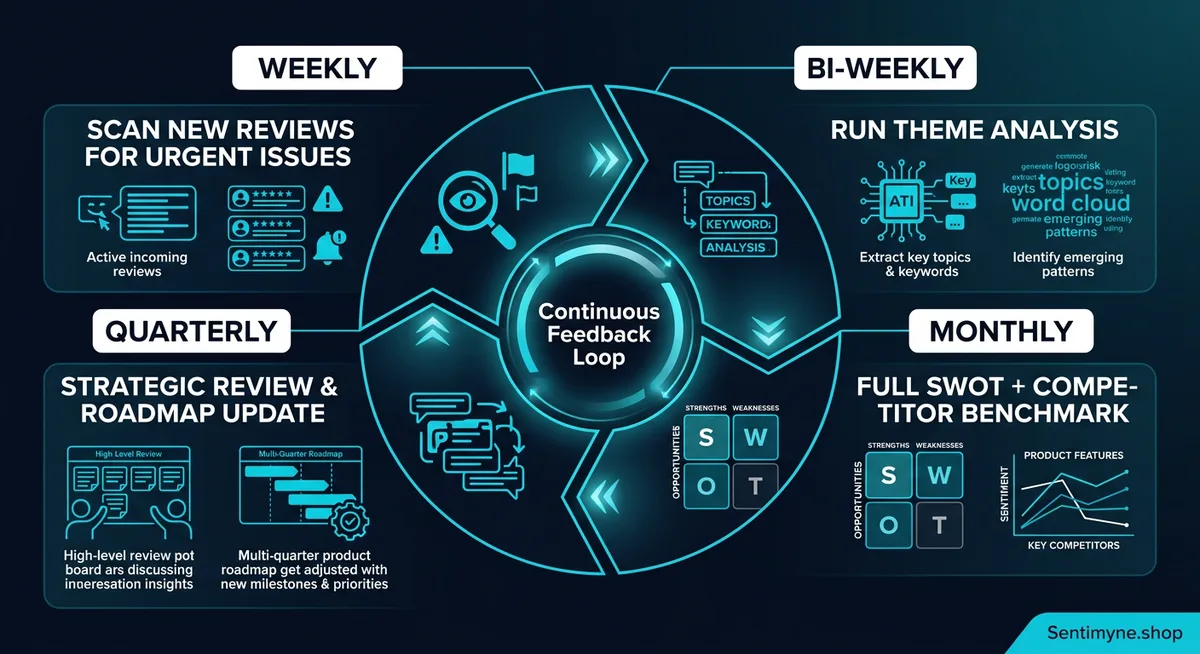

A sustainable review analysis practice operates on four cadences: weekly, bi-weekly, monthly, and quarterly. Each cadence has a specific purpose and output.

Weekly: The 15-Minute Scan

- Time commitment: 15 minutes every Monday morning

- Purpose: Catch emerging issues before they compound

- Output: Quick triage notes in your team Slack channel

The weekly scan is not deep analysis — it is pattern detection. Skim the last 7 days of reviews looking for:

- New complaints that were not present last week (potential regression from a release)

- Spike in volume on any specific topic (something happened)

- Competitive mentions (users comparing your product to alternatives)

- Positive surprise (features getting unexpected praise — potential positioning opportunity)

You are not trying to categorize every review. You are trying to catch signals that need immediate attention. If you spot something concerning, flag it to engineering with specific review links.
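The pattern detection described above can be partly automated once each review has a topic label. The following is a minimal sketch, assuming you already have one topic label per review mention for each week; the function name `weekly_signals` and the thresholds `spike_ratio` and `min_mentions` are hypothetical and chosen only for illustration.

```python
from collections import Counter


def weekly_signals(last_week, this_week, spike_ratio=2.0, min_mentions=3):
    """Flag topics that are new this week or that spiked in volume.

    last_week / this_week: lists of topic labels, one per review mention.
    spike_ratio and min_mentions are illustrative thresholds, not recommendations.
    """
    prev, curr = Counter(last_week), Counter(this_week)
    # Topics absent last week that now have enough mentions to matter.
    new_topics = sorted(
        t for t in curr if t not in prev and curr[t] >= min_mentions
    )
    # Topics present both weeks whose volume grew by at least spike_ratio.
    spikes = sorted(
        t for t in curr
        if t in prev and curr[t] >= min_mentions and curr[t] >= spike_ratio * prev[t]
    )
    return {"new": new_topics, "spikes": spikes}


# Hypothetical topic labels for two consecutive weeks.
last_week = ["export"] * 5 + ["login"] * 2
this_week = ["export"] * 6 + ["login"] * 5 + ["crash"] * 4
print(weekly_signals(last_week, this_week))
```

Anything returned under `new` or `spikes` is a candidate for the triage note; the scan itself still needs a human to read the underlying reviews.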

Pro tip: Set up a saved search or RSS feed for your product's reviews across major platforms. This reduces the time to scan from 15 minutes to 5 minutes because you are not manually navigating to each platform.

Bi-Weekly: Theme Identification

- Time commitment: 30-45 minutes every other Friday

- Purpose: Identify recurring themes and track theme evolution

- Output: Updated theme tracking spreadsheet

Every two weeks, conduct a slightly deeper analysis to identify and track themes. This is where you move from "I see individual complaints" to "I see patterns."

Your theme tracking sheet should include:

| Theme | First Appeared | Current Volume | Sentiment | Trend | Linked Tickets |
|---|---|---|---|---|---|
| Bulk export missing | 2026-01-15 | 23 mentions/month | Negative | Stable | PROD-1847 |
| Mobile app crashes | 2026-02-28 | 41 mentions/month | Negative | Increasing | PROD-2103 |
| API speed improvement | 2026-03-01 | 15 mentions/month | Positive | New | None |
| Onboarding confusion | 2025-11-01 | 18 mentions/month | Negative | Declining | PROD-1592 |

The power of theme tracking is seeing trends over time. A theme that appeared 4 weeks ago and is increasing in volume is a growing problem that demands roadmap attention. A theme that was increasing but is now declining after a fix shipped validates that the fix worked.
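The Trend column in the tracking sheet can be derived from the mention counts rather than judged by eye. This is a rough sketch, assuming you keep a chronological list of monthly mention counts per theme; `classify_trend` and its 15% `tolerance` are hypothetical, and the earlier months' counts in the example are invented to match the trends shown in the sheet.

```python
def classify_trend(monthly_counts, tolerance=0.15):
    """Label a theme's trend from a chronological list of monthly mention counts.

    tolerance is the relative month-over-month change treated as noise
    (an assumed 15%, not a recommended value).
    """
    if len(monthly_counts) < 2:
        return "New"  # only one month of data so far
    previous, current = monthly_counts[-2], monthly_counts[-1]
    if previous == 0:
        return "Increasing" if current > 0 else "Stable"
    change = (current - previous) / previous
    if change > tolerance:
        return "Increasing"
    if change < -tolerance:
        return "Declining"
    return "Stable"


# The latest count per theme matches the sheet above; earlier counts are hypothetical.
history = {
    "Bulk export missing": [22, 23],
    "Mobile app crashes": [28, 41],
    "API speed improvement": [15],
    "Onboarding confusion": [25, 18],
}
for theme, counts in history.items():
    print(theme, classify_trend(counts))
```

Comparing only the last two months keeps the sketch simple; a longer window or a moving average would be less sensitive to one noisy month.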

Monthly: SWOT Analysis

- Time commitment: 1 hour on the first Monday of each month

- Purpose: Generate a comprehensive SWOT report for product planning

- Output: SWOT document shared with product, engineering, and leadership

The monthly SWOT analysis is the centerpiece of review-driven product management. It provides a structured view of your product's position based on the most current customer feedback.

Strengths — Features, capabilities, and experiences that customers consistently praise. These are your competitive moats and should be protected and promoted.

Weaknesses — Recurring complaints, bugs, and UX issues. Each weakness should map to a specific improvement initiative with a clear owner.

Opportunities — Feature requests, unmet needs, and competitive gaps. These are validated demand signals that should inform your roadmap.

Threats — Emerging negative trends, competitive advantages mentioned by reviewers, and systemic issues that could drive churn if left unaddressed.

Running this analysis manually takes 2-3 hours if you have significant review volume. Sentimyne generates it in 60 seconds by analyzing reviews from 12+ platforms simultaneously and categorizing them into a SWOT framework automatically.

Quarterly: Strategic Review

- Time commitment: 2-3 hours at the end of each quarter

- Purpose: Connect review insights to business strategy and OKRs

- Output: Review intelligence brief for leadership and the quarterly roadmap

The quarterly review is where you zoom out. Compare this quarter's SWOT to last quarter's:

- Which weaknesses were addressed? Did sentiment improve?

- Which opportunities were pursued? How did customers respond?

- Are any threats escalating despite mitigation efforts?

- Have new strengths emerged from shipped improvements?

This quarterly comparison is the most powerful artifact a PM can bring to a roadmap planning meeting. It connects customer voice directly to strategic decisions with empirical evidence.

Translating Review Themes Into Jira Tickets

Identifying themes is only half the job. The other half is turning those themes into actionable engineering work. Here is a framework for translating review insights into Jira tickets (or whatever project management tool you use).

The Review-to-Ticket Template

For each review theme that warrants engineering attention, create a ticket with these fields:

Title: [Review Theme] — [Specific Issue] Example: "Bulk Export — Users unable to export more than 10 records at once"

Description:

- User problem: What users are experiencing (in their words, with direct review quotes)

- Frequency: How many reviews mention this theme in the last 30/60/90 days

- Sentiment impact: What this theme contributes to overall negative sentiment

- User demand: What users are asking for (specific feature behavior)

- Business impact: Estimated impact on retention, conversion, or NPS

Review Evidence Section: Include 3-5 representative review quotes with source, date, and star rating:

"I would rate this 5 stars if I could export more than 10 records. Right now I'm spending 20 minutes doing manual exports every week." — G2, March 2026, 3 stars

"The export limit is a dealbreaker for my team. We're evaluating alternatives that don't have this restriction." — Capterra, February 2026, 2 stars

"Love the analytics but the export functionality feels like it was designed for individual use, not teams. We need bulk export badly." — Trustpilot, March 2026, 3 stars

Acceptance Criteria: Based on review analysis, define what "fixed" looks like from the user's perspective.
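If you file these tickets often, the template can be captured as a small data structure that renders a consistent description. The sketch below is one possible shape, not a Jira integration: the class names `ReviewQuote` and `ThemeTicket` and their fields are hypothetical, only a subset of the template fields is shown, and the rendered text would still be pasted or sent to your tracker by whatever means you already use.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewQuote:
    text: str
    source: str
    date: str
    stars: int


@dataclass
class ThemeTicket:
    theme: str
    issue: str
    user_problem: str
    mentions_30d: int
    user_demand: str
    quotes: list = field(default_factory=list)

    def title(self) -> str:
        # "\u2014" is the dash separator used in the title template above.
        return f"{self.theme} \u2014 {self.issue}"

    def render(self) -> str:
        """Return the ticket as plain text, one template field per line."""
        lines = [
            f"Title: {self.title()}",
            "Description:",
            f"- User problem: {self.user_problem}",
            f"- Frequency: {self.mentions_30d} mentions in the last 30 days",
            f"- User demand: {self.user_demand}",
            "Review evidence:",
        ]
        for q in self.quotes:
            lines.append(f'- "{q.text}" ({q.source}, {q.date}, {q.stars} stars)')
        return "\n".join(lines)


ticket = ThemeTicket(
    theme="Bulk Export",
    issue="Users unable to export more than 10 records at once",
    user_problem="Manual exports take about 20 minutes per week",
    mentions_30d=23,
    user_demand="Export more than 10 records in one action",
    quotes=[
        ReviewQuote(
            "The export limit is a dealbreaker for my team.",
            "Capterra", "February 2026", 2,
        )
    ],
)
print(ticket.render())
```

Keeping the quotes as structured records (source, date, star rating) makes it easy to reuse the same evidence in a later business case.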

Prioritization Using Review Data

Use review data to strengthen your prioritization framework. Most PMs use some variation of RICE (Reach, Impact, Confidence, Effort). Review data enhances three of those four dimensions:

- Reach: Theme mention volume directly quantifies how many users are affected

- Impact: Sentiment severity indicates how much the issue affects user satisfaction

- Confidence: The number of independent reviewers mentioning the same theme increases confidence that the issue is real and widespread

- Effort: (Still estimated by engineering — reviews don't help here)

| Theme | Reach (mentions/mo) | Impact (avg sentiment) | Confidence (platforms) | Effort (eng estimate) | RICE Score |
|---|---|---|---|---|---|
| Bulk export | 23 | -0.65 (high negative) | 4 platforms | 2 sprints | 89 |
| Mobile crashes | 41 | -0.82 (critical) | 2 platforms | 3 sprints | 112 |
| Dark mode | 15 | -0.21 (mild negative) | 3 platforms | 1 sprint | 47 |
| API speed | 12 | +0.45 (positive trend) | 2 platforms | 4 sprints | 22 |

The mobile crash issue scores highest because it combines the highest mention volume with the most severe sentiment, which outweighs its three-sprint effort estimate. Without review data, this prioritization would rest on gut feeling or the loudest internal stakeholder.
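The guide does not state how the RICE Score column was calculated, so the sketch below does not try to reproduce those numbers. It applies the standard RICE formula (reach × impact × confidence ÷ effort) to the table's inputs under explicit assumptions: impact is taken as the magnitude of average sentiment, and platform count is mapped to a confidence factor by the hypothetical helper `confidence_from_platforms`. Under these assumptions the ranking comes out in the same order as the table, although the absolute values are on a different scale.

```python
def confidence_from_platforms(platforms):
    """Assumed mapping from platform count to a RICE confidence factor."""
    if platforms >= 3:
        return 1.0
    if platforms == 2:
        return 0.8
    return 0.5


def rice_score(mentions_per_month, avg_sentiment, platforms, effort_sprints):
    """Standard RICE: reach * impact * confidence / effort."""
    impact = abs(avg_sentiment)  # magnitude only; the sign is ignored (a simplification)
    confidence = confidence_from_platforms(platforms)
    return mentions_per_month * impact * confidence / effort_sprints


# (mentions/mo, avg sentiment, platforms, effort in sprints) from the table above.
themes = {
    "Bulk export": (23, -0.65, 4, 2),
    "Mobile crashes": (41, -0.82, 2, 3),
    "Dark mode": (15, -0.21, 3, 1),
    "API speed": (12, 0.45, 2, 4),
}
ranked = sorted(themes, key=lambda name: rice_score(*themes[name]), reverse=True)
for name in ranked:
    print(f"{name}: {rice_score(*themes[name]):.2f}")
```

Whatever mapping you choose, write it down next to the scores so that engineering can see how a review count turned into a priority.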

Building the Case for Engineering Resources

One of the hardest parts of being a PM is convincing engineering leadership to allocate resources to fix "soft" problems — issues that show up in qualitative feedback but not in dashboards full of hard metrics. Review evidence changes this dynamic.

The Evidence Package

When requesting engineering resources for a review-driven initiative, present this package:

1. Quantified Problem Statement: "42 reviews in the last 60 days specifically mention export limitations as a reason for giving us less than 4 stars. These 42 reviews represent an estimated 0.3-point drag on our overall rating."

2. Revenue Impact Estimate: "Based on our conversion data, a 0.3-point rating improvement translates to approximately X% higher trial-to-paid conversion. At current traffic levels, that represents $Y in additional annual revenue."

3. Competitive Context: "3 of our top 5 competitors offer unlimited bulk export. 8 reviews in the last quarter specifically mention competitor X's export feature as a reason they're considering switching."

4. Review Evidence: Include the 5 most compelling review quotes. Choose reviews that are specific, articulate, and from verified users.

5. Proposed Solution and Effort: "Engineering estimates 2 sprints to implement bulk export. Based on review sentiment data, we expect this to reduce export-related complaints by 70-80% within 60 days of launch."

This package is significantly more persuasive than "customers are complaining about exports." It transforms qualitative feedback into a business case with quantified impact and competitive urgency.
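The "rating drag" figure in the problem statement needs a method behind it. One simple approach, sketched below, is to compare the average rating of all reviews with the average after removing the reviews that mention the theme. The function name `rating_drag` and the sample ratings are hypothetical, and this is only one way to arrive at such an estimate; the guide does not say how its 0.3-point figure was derived.

```python
def rating_drag(all_ratings, theme_ratings):
    """Estimate how far a theme's reviews pull down the average rating.

    Returns (mean without the theme's reviews) minus (mean of all reviews).
    Assumes theme_ratings is a strict subset of all_ratings.
    """
    total, count = sum(all_ratings), len(all_ratings)
    remaining = count - len(theme_ratings)
    if remaining <= 0:
        raise ValueError("theme reviews must be a strict subset of all reviews")
    mean_all = total / count
    mean_without = (total - sum(theme_ratings)) / remaining
    return mean_without - mean_all


# Hypothetical data: 100 reviews, 20 of which are 3-star reviews citing the theme.
theme_ratings = [3] * 20
all_ratings = [5] * 40 + [4] * 40 + theme_ratings
print(round(rating_drag(all_ratings, theme_ratings), 2))
```

This treats the theme as the sole cause of those lower ratings, which overstates the effect when reviewers list several complaints, so present the result as an upper-bound estimate.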

Collaborating With Marketing on Review-Derived Messaging

Review analysis is not just a product intelligence tool — it generates marketing intelligence as well. The language customers use in positive reviews is often more compelling than anything your marketing team writes in a brainstorm.

Mining Reviews for Messaging Angles

Positive reviews reveal what customers value most about your product, expressed in their own language. This is messaging gold.

Steps to extract marketing-ready messaging:

- Collect the 50 most recent 4-5 star reviews

- Identify the top 5 most-mentioned positive attributes

- Extract the exact phrases customers use to describe each attribute

- Share these phrases with marketing for use in their messaging

Example extraction:

| What Marketing Says | What Reviewers Say | Which Is More Compelling? |
|---|---|---|
| "Powerful analytics suite" | "I finally understand my data without being a data scientist" | Reviewer language |
| "Enterprise-grade security" | "The only tool my compliance team approved without pushback" | Reviewer language |
| "Easy onboarding" | "Had my whole team using it in under an hour" | Reviewer language |

The reviewer language wins because it is specific, outcome-oriented, and credible. Marketing should not plagiarize reviews — they should adopt the framing and specificity that reviews demonstrate.
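The first two extraction steps above (collecting 4-5 star reviews and counting the most-mentioned positive attributes) could be sketched as follows. This is a deliberately crude keyword count, assuming you supply your own attribute-to-keyword mapping; `top_attributes`, the keyword lists, and the sample reviews are all hypothetical.

```python
from collections import Counter


def top_attributes(reviews, attribute_keywords, min_stars=4, top_n=5):
    """Count how many high-rated reviews mention each attribute.

    reviews: iterable of (stars, text) pairs.
    attribute_keywords: dict mapping an attribute name to substrings to look for.
    """
    counts = Counter()
    for stars, text in reviews:
        if stars < min_stars:
            continue  # only mine positive reviews for messaging
        lowered = text.lower()
        for attribute, keywords in attribute_keywords.items():
            if any(keyword in lowered for keyword in keywords):
                counts[attribute] += 1  # at most one count per review per attribute
    return counts.most_common(top_n)


# Hypothetical keyword mapping and sample reviews.
attribute_keywords = {
    "onboarding": ["onboard", "set up", "setup"],
    "analytics": ["analytics", "data", "dashboard"],
}
reviews = [
    (5, "I finally understand my data without being a data scientist"),
    (5, "Had my whole team using it in under an hour, setup was painless"),
    (4, "The analytics dashboard is clear"),
    (2, "Setup was confusing"),
]
print(top_attributes(reviews, attribute_keywords))
```

Substring matching will produce false positives on larger datasets, so treat the counts as a shortlist and pull the exact customer phrases by reading the matching reviews.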

Review-Derived Social Proof

Beyond messaging, reviews provide direct social proof content. Work with marketing to:

- Create a "What Our Users Say" section using real review quotes (with permission where required)

- Build case studies around detailed positive reviews

- Use review themes to structure comparison pages ("Why teams choose us over X")

- Create content that addresses common negative themes proactively (shows transparency and builds trust)

Tracking Shipped Improvements Against Review Sentiment

The feedback loop is not complete until you verify that shipped improvements actually moved the needle. This is where most PM review practices fail — they identify issues and ship fixes but never close the loop to confirm the fix worked from the customer's perspective.

The Post-Ship Sentiment Check

After shipping an improvement that was driven by review feedback, wait 30-45 days for new reviews to accumulate, then check:

- Theme volume change — Did mentions of the issue decrease?

- Theme sentiment change — Did sentiment around the topic improve?

- Overall rating impact — Did the fix contribute to a measurable rating improvement?

- New related themes — Did the fix introduce any new complaints?

| Metric | Pre-Ship (Jan-Feb) | Post-Ship (Mar-Apr) | Change |
|---|---|---|---|
| "Export" negative mentions | 23/month | 4/month | -83% |
| "Export" sentiment score | -0.65 | +0.31 | +0.96 shift |
| Overall product rating | 4.1 | 4.3 | +0.2 |
| New "export" complaints | N/A | "Export format options limited" (3 mentions) | New minor theme |

This data tells a clear story: the fix worked (83% reduction in complaints, sentiment flipped from negative to positive), it contributed to a measurable rating improvement (+0.2 stars), and there is a minor follow-up issue (export format options) that can be addressed in a future sprint.
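The Change column in the table is simple arithmetic, and scripting it keeps the calculation consistent from one release to the next. A minimal sketch, using the table's own pre-ship and post-ship figures; the function name `post_ship_change` and the dictionary keys are hypothetical.

```python
def post_ship_change(pre, post):
    """Compute the change metrics for a post-ship sentiment check.

    pre / post: dicts with 'mentions' (per month), 'sentiment', and 'rating'.
    Returns (mention change in percent, sentiment shift, rating change).
    """
    if pre["mentions"] == 0:
        raise ValueError("pre-ship mentions must be non-zero to compute a percentage")
    mention_change_pct = round(
        100 * (post["mentions"] - pre["mentions"]) / pre["mentions"]
    )
    sentiment_shift = round(post["sentiment"] - pre["sentiment"], 2)
    rating_change = round(post["rating"] - pre["rating"], 1)
    return mention_change_pct, sentiment_shift, rating_change


# Figures from the table above.
pre = {"mentions": 23, "sentiment": -0.65, "rating": 4.1}
post = {"mentions": 4, "sentiment": 0.31, "rating": 4.3}
print(post_ship_change(pre, post))  # (-83, 0.96, 0.2)
```

The fourth check in the list, new related themes, is qualitative and still needs someone to read the post-ship reviews.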

This kind of evidence is extraordinarily powerful in internal product review meetings and retrospectives. It demonstrates that the team's work had a direct, measurable impact on customer satisfaction.

How Sentimyne Fits Into a PM's Weekly Workflow

A complete manual review analysis for a product with 200+ monthly reviews across multiple platforms takes 4-6 hours per week. That is not sustainable for most PMs who are already stretched across roadmap planning, stakeholder management, and feature specification.

Sentimyne compresses the analysis work into minutes:

Weekly scan (Monday, 5 minutes): Paste your product URL into Sentimyne and generate a fresh SWOT. Compare it to last week's report — any new weaknesses or threats that appeared in the last 7 days need immediate attention.

Bi-weekly themes (alternate Fridays, 10 minutes): Review the Sentimyne SWOT's detailed theme breakdown. Update your theme tracking sheet with any volume or sentiment changes.

Monthly SWOT (first Monday, 15 minutes): Generate a comprehensive Sentimyne analysis and archive it. Compare to the previous month's archived report. Share the comparison with your team and leadership.

Quarterly strategic review (end of quarter, 1 hour): Compare 3 months of archived Sentimyne SWOTs side-by-side. Identify which weaknesses were resolved, which opportunities were captured, and which threats escalated. Use this to inform the next quarter's roadmap.

The free plan (2 reports/month) supports the monthly SWOT cadence. The Pro plan at $29/month supports the full weekly workflow. The Team plan at $49/month adds collaboration features for product teams where multiple PMs need shared access to review intelligence.

"Sentimyne replaced a 6-hour weekly process with a 30-minute weekly process. We ship better product because our review analysis is now consistent rather than something we do when we have time — which was never." — Senior PM at a Series B startup

Common Mistakes PMs Make With Review Analysis

Mistake 1: Only Reading Negative Reviews

Positive reviews contain as much strategic value as negative ones. They tell you what to protect, what to market, and what competitive advantages customers perceive. A PM who only reads negative reviews develops a skewed view of the product.

Mistake 2: Treating All Reviews Equally

A detailed 3-star review from a verified power user contains more signal than a 1-star review that says "doesn't work." Weight reviews by specificity and reviewer credibility, not just star rating.
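One way to make this weighting concrete is a simple per-review score. The sketch below is an assumed heuristic, not a validated model: it uses word count as a crude stand-in for specificity and gives verified reviewers a fixed multiplier. The function name `review_weight` and all of its parameters are hypothetical.

```python
def review_weight(text, verified, min_words=5, full_words=40, verified_bonus=1.5):
    """Weight a review by specificity and reviewer credibility.

    Specificity rises linearly from 0 at min_words to 1 at full_words.
    Verified reviewers get verified_bonus as a multiplier.
    All thresholds are illustrative assumptions.
    """
    words = len(text.split())
    specificity = min(1.0, max(words - min_words, 0) / (full_words - min_words))
    credibility = verified_bonus if verified else 1.0
    return specificity * credibility


short_review = "doesn't work"
detailed_review = " ".join(["word"] * 40)  # placeholder for a 40-word detailed review
print(review_weight(short_review, verified=False))
print(review_weight(detailed_review, verified=True))
```

Summing these weights per theme, instead of counting raw mentions, lets a handful of detailed reviews outweigh a burst of one-line complaints.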

Mistake 3: Reacting to Individual Reviews Instead of Themes

A single review asking for a feature is an anecdote. Twenty reviews asking for the same feature is a theme. PMs who react to individual reviews create chaotic backlogs. PMs who track themes create focused roadmaps.

Mistake 4: Not Closing the Feedback Loop

If you ship a fix based on review data but never check whether the reviews improved afterward, you are flying blind. Always verify that your fix solved the user-facing problem, not just the engineering ticket.

Mistake 5: Keeping Review Insights Siloed

Review intelligence benefits product, engineering, marketing, sales, and customer success. Share your monthly SWOT with all stakeholders. The marketing team will find messaging angles you missed. The sales team will find objection-handling material. CS will find FAQ content.

Frequently Asked Questions

How many reviews does my product need before systematic analysis is worthwhile?

You need at least 100 reviews across all platforms to identify reliable themes. Below that threshold, individual opinions dominate the data and patterns may not be statistically representative. At 200+ reviews, theme identification becomes robust. At 500+, you can segment by user type, plan tier, or time period with confidence. If your product has fewer than 100 reviews, focus on generating more reviews through post-purchase prompts and in-app requests before investing heavily in analysis infrastructure.

Should the PM or customer success team own review responses?

Customer success should own the operational response — acknowledging issues, providing workarounds, and maintaining customer relationships. The PM should own the intelligence extraction — identifying themes, prioritizing issues, and translating feedback into product decisions. Both teams should have visibility into each other's work. A shared Slack channel or weekly sync where CS flags emerging themes and PM shares planned fixes works well.

How do I convince skeptical engineering leads that review data should influence the roadmap?

Start with a single high-impact example. Find a review theme that aligns with a known issue, quantify it (X mentions per month, Y-star impact on rating), estimate the revenue impact, and present it alongside the engineering estimate. When the fix ships and the reviews improve, use that success as precedent. Most engineering leads are not opposed to review-driven development — they are opposed to unstructured, anecdotal feedback. Systematic review analysis addresses that objection directly.

What is the difference between review analysis and NPS analysis?

NPS gives you a single number (how likely to recommend, 0-10) with an optional text field. Review analysis covers the full spectrum of customer feedback across multiple platforms, including detailed descriptions of features, experiences, and comparisons. NPS tells you the score. Review analysis tells you why. They are complementary — NPS provides a trackable metric, review analysis provides the context and specificity needed to act on it.

Can review analysis replace customer interviews and user research?

No — it complements them. Reviews tell you what customers care about and how they feel, but they do not let you ask follow-up questions, probe deeper motivations, or test new concepts. Use review analysis to identify themes and prioritize which topics deserve deeper investigation through interviews and research. This combination is more efficient than either approach alone because your interview time focuses on validated, high-priority topics rather than exploratory guesswork.
