Review Analysis Glossary: 50+ Terms Every Professional Should Know

Master the language of review analysis with this comprehensive glossary of 50+ terms. Covers sentiment analysis terminology, NLP concepts, business metrics, and technical terms used in customer feedback analysis — organized by category with clear, practical definitions.

Review analysis sits at the intersection of natural language processing, business intelligence, and customer experience management. Each of these fields brings its own vocabulary, and the convergence creates a terminology landscape that can feel impenetrable to newcomers — and occasionally confusing even for experienced practitioners.

This glossary defines 50+ terms used in review analysis, organized by category. Each definition explains what the term means, why it matters, and how it applies to practical review analysis work. Whether you are evaluating review analysis tools, reading research papers, or building an internal review intelligence program, these are the terms you will encounter.

Sentiment Analysis Terms

These terms describe the core concepts behind understanding the emotional tone and opinion expressed in review text.

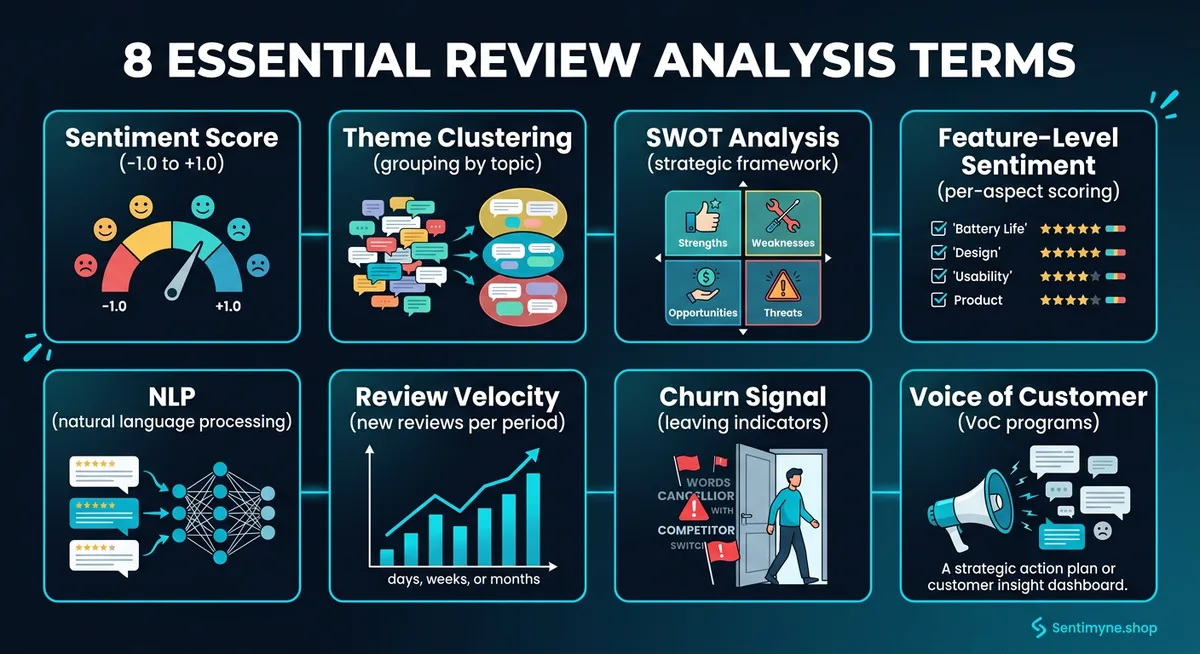

Sentiment Score

A numerical value representing the emotional tone of a piece of text, typically expressed on a scale from -1 (strongly negative) to +1 (strongly positive), or as a percentage from 0% to 100%. Sentiment scores are the most fundamental output of any review analysis system. On the -1 to +1 scale, a product averaging 0.72 across 10,000 reviews is performing well; one averaging 0.31 is only mildly positive and needs attention.
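As a rough illustration of how the two scales relate, the sketch below converts a score on the -1 to +1 scale into a percentage and averages a batch of scores. The function names `to_percent` and `mean_sentiment` are hypothetical, and the linear mapping is an assumption; individual tools may define their percentage scale differently.

```python
def to_percent(score: float) -> float:
    """Linearly map a sentiment score in [-1, 1] onto a 0-100 percentage."""
    if not -1.0 <= score <= 1.0:
        raise ValueError("score must be between -1 and 1")
    return (score + 1.0) * 50.0


def mean_sentiment(scores: list[float]) -> float:
    """Average sentiment across a non-empty list of per-review scores."""
    if not scores:
        raise ValueError("scores must not be empty")
    return sum(scores) / len(scores)
```

Under this mapping, a neutral score of 0 lands at 50%, which is worth remembering when comparing numbers reported on different scales.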

Polarity

The directional quality of sentiment — positive, negative, or neutral. Polarity is the simplest form of sentiment classification. While sentiment scoring provides granularity (how positive or negative), polarity provides the binary or ternary classification that most business reporting requires. "This product is fantastic" has positive polarity. "This product broke after a week" has negative polarity. "I received the product on Tuesday" is neutral.

Subjectivity

A measure of how opinion-based versus fact-based a statement is. In review analysis, subjectivity scoring helps distinguish between factual claims ("the battery lasts 4 hours") and opinions ("the battery life is terrible"). High-subjectivity text is where sentiment analysis is most reliable and relevant. Low-subjectivity text often contains useful product information but is less meaningful for sentiment measurement.

Sentiment Drift

The gradual change in sentiment over time for a product, brand, or feature. Tracking sentiment drift reveals whether customer perception is improving or deteriorating, which is more actionable than a static sentiment score. A product with a 4.2-star average but declining sentiment over the past six months is in a fundamentally different position than one with a 4.0-star average and rising sentiment.
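One simple way to quantify drift, offered here as a sketch rather than a standard method, is to fit a straight line through period averages and read off the slope. The function name `drift_slope` is hypothetical, and the choice of a least-squares line is an assumption.

```python
def drift_slope(period_scores: list[float]) -> float:
    """Least-squares slope of average sentiment across equally spaced periods.

    A positive slope suggests improving sentiment; a negative slope suggests
    deteriorating sentiment. Periods are indexed 0, 1, 2, ...
    """
    n = len(period_scores)
    if n < 2:
        raise ValueError("need at least two periods to measure drift")
    mean_x = (n - 1) / 2
    mean_y = sum(period_scores) / n
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(period_scores))
    denominator = sum((x - mean_x) ** 2 for x in range(n))
    return numerator / denominator
```

Feeding in six monthly averages, for example, returns the average change in sentiment per month over that window.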

Feature-Level Sentiment

Sentiment analysis applied to specific product features or attributes rather than the review as a whole. A single review might express positive sentiment about a product's design, negative sentiment about its price, and neutral sentiment about its packaging. Feature-level sentiment breaks a holistic review into component evaluations. This is also called attribute-level sentiment.

Aspect-Based Sentiment Analysis (ABSA)

A more formal term for feature-level sentiment analysis that describes the complete methodology: identifying aspects (features, attributes, or topics) mentioned in text and determining the sentiment expressed toward each aspect. ABSA is considered the gold standard for review analysis because it produces the most actionable insights. Knowing that "customers feel negatively about your shipping speed but positively about product quality" is far more useful than knowing "overall sentiment is 62% positive."

Emotion Detection

A layer beyond simple positive/negative sentiment that identifies specific emotions expressed in text — anger, frustration, delight, surprise, disappointment, gratitude, and others. Emotion detection is particularly valuable for understanding the intensity and nature of customer reactions. "I'm disappointed" and "I'm furious" are both negative, but they signal very different customer states and require different responses.

Sentiment Intensity

The strength or magnitude of the expressed sentiment. "Good" and "extraordinary" are both positive, but their intensity differs significantly. Sentiment intensity scoring captures this gradient. In review analysis, intensity helps prioritize responses — extremely negative reviews warrant faster attention than mildly negative ones.

Valence Shifters

Words or phrases that modify the sentiment of surrounding text. These include negators ("not good"), intensifiers ("very good"), diminishers ("somewhat good"), and modals ("could be good"). Valence shifters are one of the primary challenges in sentiment analysis because they require understanding context, not just individual word sentiment. "Not bad" is positive despite containing the negative word "bad."
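To make the idea concrete, here is a toy lexicon-based scorer that applies negators, intensifiers, and diminishers to the next sentiment word. The word lists, weights, and the function name `shifted_score` are invented for illustration; the sketch ignores modals and only handles shifters that sit directly before the sentiment word.

```python
# Tiny illustrative lexicons; real systems use far larger resources.
LEXICON = {"good": 1.0, "great": 1.5, "bad": -1.0, "terrible": -1.5}
NEGATORS = {"not", "never", "no"}
INTENSIFIERS = {"very": 1.5, "extremely": 2.0}
DIMINISHERS = {"somewhat": 0.5, "slightly": 0.5}


def shifted_score(text: str) -> float:
    """Sum word sentiment, letting adjacent valence shifters modify each word."""
    total = 0.0
    multiplier = 1.0
    for raw in text.lower().split():
        word = raw.strip(".,!?\"'")
        if word in NEGATORS:
            multiplier *= -1.0  # flip the sign of the next sentiment word
        elif word in INTENSIFIERS:
            multiplier *= INTENSIFIERS[word]
        elif word in DIMINISHERS:
            multiplier *= DIMINISHERS[word]
        elif word in LEXICON:
            total += LEXICON[word] * multiplier
            multiplier = 1.0
        else:
            multiplier = 1.0  # any other word cancels pending shifters
    return total
```

With these toy weights, "not bad" scores as positive even though "bad" alone is negative, which is the behavior a word-by-word lookup would miss.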

Mixed Sentiment

A review or statement that contains both positive and negative sentiment. Most real-world reviews exhibit mixed sentiment — a customer might love a product's features but dislike its price, or praise customer service while criticizing delivery speed. Analysis systems that force reviews into a single positive/negative category lose this nuance.

Analysis Methods

These terms describe the techniques and approaches used to extract insights from review data.

Theme Clustering

The process of grouping related mentions across many reviews into coherent themes. If 500 reviews mention "delivery," "shipping," "arrived late," and "slow arrival," theme clustering groups these into a single "Shipping Speed" theme. This transforms thousands of individual comments into a manageable number of actionable categories. Also called topic clustering.

Natural Language Processing (NLP)

The branch of artificial intelligence focused on enabling computers to understand, interpret, and generate human language. NLP is the foundational technology behind all automated review analysis. It encompasses tokenization, parsing, sentiment analysis, entity recognition, and dozens of other sub-tasks required to extract meaning from text.

Tokenization

The process of breaking text into individual units (tokens), typically words or subwords. "The product works great" becomes ["The", "product", "works", "great"]. Tokenization is the first step in nearly every NLP pipeline. Different tokenization approaches (word-level, subword, character-level) affect downstream analysis quality.
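A minimal word-level tokenizer can be sketched with a regular expression. The pattern below is an assumption chosen for simple English text (it keeps contractions such as "doesn't" together and drops punctuation); subword and character-level tokenizers work quite differently.

```python
import re

# Letters/digits, optionally followed by an apostrophe suffix ("doesn't").
WORD_PATTERN = re.compile(r"[A-Za-z0-9]+(?:'[A-Za-z]+)?")


def tokenize(text: str) -> list[str]:
    """Split text into word-level tokens, discarding punctuation."""
    return WORD_PATTERN.findall(text)
```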

Entity Extraction

Identifying and classifying named entities in text — product names, brand names, competitor names, feature names, locations, and other proper nouns. In review analysis, entity extraction identifies what the reviewer is talking about. "The Samsung Galaxy S26 camera is better than my old iPhone 15" contains three entities: a product (Samsung Galaxy S26), a feature (camera), and a competitor product (iPhone 15). Also called Named Entity Recognition (NER).

Text Classification

Assigning predefined labels or categories to text. In review analysis, this might mean classifying reviews by topic (product quality, customer service, pricing), intent (purchase inquiry, complaint, praise), or urgency (requires immediate response, routine feedback). Text classification can use rule-based systems, machine learning models, or large language models.

SWOT Analysis

A strategic framework that organizes findings into Strengths, Weaknesses, Opportunities, and Threats. When applied to review data, SWOT analysis transforms raw customer feedback into a strategic assessment: strengths are consistently praised attributes, weaknesses are recurring complaints, opportunities are unmet customer needs, and threats are areas where competitors are praised. Sentimyne generates automated SWOT analyses from review data across 12+ platforms, delivering this strategic framework in 60 seconds.

Comparative Analysis

Analyzing reviews of your product or service alongside reviews of competitors to identify relative strengths and weaknesses. Comparative analysis answers questions like "how does our customer service sentiment compare to Competitor X?" and "what features do competitors get praised for that we get criticized for?"

Trend Analysis

Tracking changes in review themes, sentiment, and volume over time to identify emerging patterns. Trend analysis might reveal that complaints about a specific feature have increased 40% over the past quarter, or that a new competitor is being mentioned with increasing frequency. This temporal dimension transforms review analysis from a snapshot into a monitoring system.

Review Summarization

Condensing large volumes of reviews into concise summaries that capture the key themes, sentiments, and insights. Manual summarization does not scale beyond a few dozen reviews. Automated summarization uses NLP to produce readable summaries from thousands of reviews, preserving the most important and representative points.

Anomaly Detection

Identifying unusual patterns in review data that deviate from established baselines — sudden spikes in negative sentiment, unusual review volume, coordinated review campaigns, or abrupt changes in mentioned topics. Anomaly detection helps catch emerging issues before they escalate and can identify potentially fraudulent review activity.
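A common starting point, sketched here under simplifying assumptions, is a z-score check: compare a new value (say, this week's count of negative reviews) with the mean and standard deviation of a baseline period. The function name `is_anomalous` and the default threshold of 3 standard deviations are illustrative choices, not a recommendation.

```python
import statistics


def is_anomalous(baseline: list[float], value: float, threshold: float = 3.0) -> bool:
    """Flag a value that lies more than `threshold` standard deviations from the baseline mean."""
    if len(baseline) < 2:
        raise ValueError("baseline needs at least two observations")
    mean = statistics.mean(baseline)
    stdev = statistics.stdev(baseline)
    if stdev == 0:
        # A perfectly flat baseline: any change at all counts as a deviation.
        return value != mean
    return abs(value - mean) / stdev > threshold
```

This simple check assumes a reasonably stable baseline; seasonal products or fast-growing review volumes would need a more careful model.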

Metrics and Measurements

These terms describe the quantitative measures used to track and benchmark review performance.

Review Velocity

The rate at which new reviews are posted, typically measured as reviews per day, week, or month. Review velocity indicates engagement level and can signal product launches, marketing campaigns, or emerging issues. A sudden increase in review velocity often precedes a shift in sentiment — for better or worse.

Rating Distribution

The breakdown of reviews across rating levels (1-star through 5-star). A healthy rating distribution is typically left-skewed: most ratings cluster at the high end, with a thinner tail of low ratings. Unusual distributions, such as a "J-curve" with many 5-star and 1-star reviews but few in between, may indicate polarized customer experiences or review manipulation.
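The sketch below computes a rating distribution and shows two made-up products that share a 3.5-star average while having very different shapes. The function name `rating_distribution` and both sample lists are hypothetical.

```python
from collections import Counter


def rating_distribution(ratings: list[int]) -> dict[int, float]:
    """Return the share of reviews at each star level from 1 to 5."""
    if not ratings:
        raise ValueError("ratings must not be empty")
    counts = Counter(ratings)
    return {star: counts[star] / len(ratings) for star in range(1, 6)}


# Two invented products with the same 3.5-star average.
mediocre = [3, 4, 3, 4, 3, 4, 3, 4]
polarized = [5, 5, 5, 5, 5, 1, 1, 1]
```

Calling `rating_distribution` on each list shows the first product concentrated at 3 and 4 stars and the second split between 5 and 1 stars, a difference the average alone hides.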

"Rating distributions tell you more than averages. A product with a 3.5-star average could have universally mediocre reviews or a passionate split between lovers and haters. The distribution reveals which reality you are dealing with."

Mention Volume

The number of times a specific theme, feature, or topic is mentioned across a body of reviews. Mention volume indicates what customers care about most. If "battery life" appears in 2,300 of 10,000 reviews but "screen quality" appears in only 400, customers clearly prioritize battery performance in their evaluation.

Mention Share

The percentage of reviews that mention a specific topic, calculated as (reviews mentioning topic / total reviews) × 100. Mention share normalizes volume across different products or time periods, enabling fair comparisons.
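The formula translates directly into code. In this sketch the helper names are hypothetical, and `count_mentions` uses naive substring matching as a stand-in for real theme detection.

```python
def count_mentions(reviews: list[str], phrase: str) -> int:
    """Count reviews containing the phrase (case-insensitive substring match)."""
    needle = phrase.lower()
    return sum(1 for review in reviews if needle in review.lower())


def mention_share(reviews_mentioning: int, total_reviews: int) -> float:
    """Mention share as a percentage: (reviews mentioning topic / total reviews) x 100."""
    if total_reviews <= 0:
        raise ValueError("total_reviews must be positive")
    return 100 * reviews_mentioning / total_reviews
```

For a topic mentioned in 2,300 of 10,000 reviews, `mention_share(2300, 10000)` gives 23.0, while 400 of 10,000 gives 4.0.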

Trend Direction

Whether a metric is increasing, decreasing, or stable over a defined time period. Trend direction applies to sentiment scores, mention volumes, review velocity, and rating distributions. A single sentiment score is a data point; trend direction is intelligence.

Net Sentiment Score

A metric analogous to Net Promoter Score, calculated as the percentage of positive reviews minus the percentage of negative reviews. A product with 70% positive reviews, 10% neutral, and 20% negative has a Net Sentiment Score of +50. This single number provides a quick read on overall customer perception.
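As a small sketch of the calculation, the function below takes counts of positive, neutral, and negative reviews; the name `net_sentiment_score` is simply a label for this example.

```python
def net_sentiment_score(positive: int, neutral: int, negative: int) -> float:
    """Percentage of positive reviews minus percentage of negative reviews."""
    total = positive + neutral + negative
    if total <= 0:
        raise ValueError("at least one review is required")
    return 100 * (positive - negative) / total
```

With 70 positive, 10 neutral, and 20 negative reviews, the result is 50.0, matching the example above. Neutral reviews affect the score only through the total.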

Sentiment Variance

The degree of spread in sentiment scores across reviews. Low variance means most customers feel similarly (consistent experience). High variance means customer experiences differ significantly. High variance is a red flag even when average sentiment is positive — it suggests inconsistent quality or service.
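The sketch below uses Python's `statistics` module to compare two invented sets of per-review scores that share the same mean but differ in spread. Population variance is used here as one reasonable choice; the sample lists are made up.

```python
import statistics

# Two invented products with the same average sentiment (0.6).
consistent = [0.6, 0.6, 0.6, 0.6]
inconsistent = [1.0, 0.2, 1.0, 0.2]


def sentiment_variance(scores: list[float]) -> float:
    """Population variance of per-review sentiment scores."""
    return statistics.pvariance(scores)
```

The first list has zero variance and the second roughly 0.16, even though both average 0.6.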

Response Rate

The percentage of reviews that receive a response from the business. Response rate is both a metric and a driver of customer perception. Research consistently shows that businesses responding to reviews (including negative ones) experience improved customer trust and higher future ratings.

Time to Response

The average time between a review being posted and the business responding. Faster response times correlate with better customer perception, particularly for negative reviews. Industry benchmarks suggest responding within 24-48 hours for negative reviews and within a week for positive ones.

Review Authenticity Score

A metric estimating the likelihood that a review is genuine rather than fake, incentivized, or automated. Authenticity scoring considers factors like reviewer history, review timing patterns, language naturalness, and similarity to other reviews. Platforms like Amazon and Yelp use proprietary authenticity algorithms; third-party tools provide independent assessments.

Business and Strategy Terms

These terms connect review analysis to business outcomes and strategic decision-making.

Voice of Customer (VoC)

A research methodology for capturing customer expectations, preferences, and experiences. Reviews are one input to VoC programs, alongside surveys, support tickets, social media, and direct customer conversations. VoC programs systematically analyze these inputs to inform product development, marketing strategy, and customer experience improvements.

Review Intelligence

The practice of systematically analyzing review data to generate actionable business insights. Review intelligence goes beyond simply monitoring reviews — it involves structured analysis, competitive benchmarking, trend tracking, and strategic recommendations. It treats reviews as a data source to be mined rather than individual comments to be read.

Competitive Intelligence (from Reviews)

Using competitor review analysis to understand their strengths, weaknesses, and market positioning. Competitive review intelligence reveals what competitors' customers value, what frustrates them, and where unmet needs exist — information that is difficult to obtain through any other channel.

Brand Sentiment

The aggregate sentiment toward a brand across all review platforms, social media, and other public sources. Brand sentiment is broader than product-level sentiment — it encompasses perception of the company, its values, its customer service, and its market position.

Review Mining

The process of extracting structured data and insights from unstructured review text. Review mining applies NLP, machine learning, and statistical analysis to transform free-text reviews into organized, analyzable data. It is essentially the technical process that enables review intelligence.

Churn Signal

An indicator in review data that suggests a customer is likely to leave or has already left. Churn signals include language like "switching to," "last purchase," "canceling," or "looking for alternatives." Detecting churn signals in reviews allows businesses to intervene with at-risk customer segments before they leave.
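A first-pass detector can be as simple as phrase matching. The sketch below uses the example phrases from this definition; the function name `find_churn_signals` is hypothetical, and a production system would likely need a broader phrase list or a trained classifier.

```python
# Phrases taken from the definition above; extend as needed.
CHURN_PHRASES = ("switching to", "last purchase", "canceling", "looking for alternatives")


def find_churn_signals(review: str) -> list[str]:
    """Return the churn phrases that appear in a review (case-insensitive)."""
    text = review.lower()
    return [phrase for phrase in CHURN_PHRASES if phrase in text]
```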

Customer Pain Point

A specific, recurring source of frustration or dissatisfaction identified in customer reviews. Pain points differ from general complaints in that they represent systemic issues rather than isolated incidents. When 15% of reviews mention the same frustration, that is a pain point requiring structural attention.

Win Theme

The opposite of a pain point — a consistently praised attribute that drives positive customer perception. Win themes are the features, qualities, or experiences that customers specifically highlight as reasons they chose or recommend the product. Understanding win themes is essential for marketing messaging and product positioning.

Feature Request Signal

Language in reviews that indicates unmet customer needs or desired product improvements. Phrases like "I wish it had," "it would be great if," "the only thing missing," and "hopefully they add" signal feature requests. Aggregating these signals across thousands of reviews provides a customer-driven product roadmap.
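To show how such signals might be aggregated, the sketch below captures the text that follows a request phrase, up to the end of the sentence, and tallies it across reviews. The pattern, the function names, and the added "is" after "the only thing missing" are assumptions made for this example.

```python
import re
from collections import Counter

# Request phrase, then capture everything up to the next sentence-ending mark.
REQUEST_PATTERN = re.compile(
    r"(?:i wish it had|it would be great if|the only thing missing is|hopefully they add)"
    r"\s+([^.!?]+)",
    re.IGNORECASE,
)


def extract_requests(review: str) -> list[str]:
    """Return the requested items found in a single review."""
    return [match.strip() for match in REQUEST_PATTERN.findall(review)]


def count_requests(reviews: list[str]) -> Counter:
    """Tally requested items (lowercased) across many reviews."""
    return Counter(
        request.lower() for review in reviews for request in extract_requests(review)
    )
```

Exact-string tallies like this will split near-duplicates ("a timer" versus "a built-in timer"), so grouping similar requests would be a natural next step.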

Technical and Infrastructure Terms

These terms describe the underlying technology and systems used in review analysis.

NLP Pipeline

The sequence of processing steps that transforms raw text into analyzed output. A typical NLP pipeline for review analysis includes: text cleaning, tokenization, part-of-speech tagging, entity extraction, sentiment analysis, theme classification, and output formatting. Each stage feeds into the next, and errors at early stages cascade through the pipeline.

Large Language Model (LLM)

A neural network trained on vast amounts of text data that can understand and generate human language. Modern review analysis increasingly relies on LLMs (like GPT-4, Claude, Gemini) for sentiment analysis, summarization, and insight extraction. LLM-based analysis has largely surpassed older rule-based and traditional ML approaches in accuracy and nuance.

Classification Model

A machine learning model trained to assign categories or labels to input data. In review analysis, classification models might categorize reviews by topic, sentiment, intent, or urgency. These models are typically trained on labeled examples and then applied to new, unlabeled reviews.

Embedding

A numerical vector representation of text that captures semantic meaning. Embeddings allow review analysis systems to understand that "terrible customer service" and "support team was awful" convey similar meanings despite using different words. Modern embedding models map text into high-dimensional vector spaces where semantically similar texts are positioned near each other.
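Closeness between embeddings is often measured with cosine similarity. The sketch below uses tiny hand-made three-dimensional vectors purely for illustration; real embedding models produce vectors with hundreds or thousands of dimensions, and the numbers here do not come from any model.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two non-zero vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("vectors must be non-zero")
    return dot / (norm_a * norm_b)


# Invented toy vectors standing in for real embeddings.
terrible_service = [0.9, 0.1, 0.8]  # "terrible customer service"
awful_support = [0.8, 0.2, 0.9]     # "support team was awful"
fast_shipping = [0.1, 0.9, 0.1]     # "fast shipping"
```

With these toy values, the two complaints about support score much closer to each other than either does to the shipping comment.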

Corpus

The complete collection of text data used for analysis. In review analysis, your corpus might be "all 50,000 reviews of Product X across Google, Amazon, and Trustpilot" or "all competitor reviews in our market segment." Corpus quality and composition directly affect analysis quality.

Text Preprocessing

The cleaning and normalization steps applied to raw review text before analysis. Preprocessing typically includes removing HTML tags, handling special characters, normalizing whitespace, correcting common misspellings, expanding contractions, and removing or handling emoji. Good preprocessing improves downstream analysis accuracy.

Stop Words

Common words (the, is, at, which, on) that carry little semantic meaning and are often removed during text preprocessing. In review analysis, stop word removal must be careful — "not" is technically a common word but is critical for sentiment analysis ("not good" vs "good").
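One way to handle this, sketched below with a deliberately tiny word list, is to subtract negators from a generic stop word list before filtering. The set contents and the function name `remove_stop_words` are illustrative assumptions.

```python
# A generic list might include negators; the contents here are illustrative.
GENERIC_STOP_WORDS = {"the", "is", "at", "which", "on", "a", "this", "not", "no"}
NEGATORS = {"not", "no", "never"}

# Keep negators in the text so sentiment analysis can still see them.
SENTIMENT_SAFE_STOP_WORDS = GENERIC_STOP_WORDS - NEGATORS


def remove_stop_words(tokens: list[str]) -> list[str]:
    """Drop stop words while preserving negators such as 'not'."""
    return [t for t in tokens if t.lower() not in SENTIMENT_SAFE_STOP_WORDS]
```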

Precision and Recall

Two complementary metrics for evaluating classification quality. Precision is the share of items assigned to a category that actually belong to it, so high precision means few false positives. Recall is the share of items that actually belong to a category that the model correctly identified, so high recall means few false negatives. In review analysis, high precision means the labels you assign are trustworthy; high recall means you are catching nearly all relevant mentions.

F1 Score

The harmonic mean of precision and recall, providing a single metric that balances both. An F1 score of 1.0 is perfect; 0.5 is poor. When evaluating review analysis tools, ask about F1 scores for sentiment classification — scores above 0.85 indicate strong performance.
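Precision, recall, and F1 can all be computed from three counts: true positives, false positives, and false negatives. The function name and the sample counts in the note below are invented for illustration.

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Compute precision, recall, and F1 from raw classification counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)  # harmonic mean
    return precision, recall, f1
```

For example, 90 true positives, 10 false positives, and 30 false negatives give a precision of 0.90, a recall of 0.75, and an F1 of about 0.82. Because F1 is a harmonic mean, it sits closer to the weaker of the two metrics.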

Confidence Score

A value (typically 0-1 or 0-100%) indicating how certain the model is about its classification. A review classified as "negative" with 0.95 confidence is more reliably negative than one classified with 0.55 confidence. Confidence scores allow you to flag uncertain classifications for human review.
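Routing uncertain classifications to a person can be sketched as a simple threshold split. The 0.7 cutoff and the function name `split_by_confidence` are assumptions; a sensible threshold depends on the model and on how costly a wrong label is.

```python
Prediction = tuple[str, float]  # (label, confidence between 0 and 1)


def split_by_confidence(
    predictions: list[Prediction], threshold: float = 0.7
) -> tuple[list[Prediction], list[Prediction]]:
    """Separate predictions into auto-accepted and flagged-for-human-review lists."""
    accepted: list[Prediction] = []
    flagged: list[Prediction] = []
    for label, confidence in predictions:
        if confidence >= threshold:
            accepted.append((label, confidence))
        else:
            flagged.append((label, confidence))
    return accepted, flagged
```

With this cutoff, a "negative" label at 0.95 confidence is accepted automatically, while one at 0.55 is flagged for review.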

Data Normalization

The process of standardizing review data from different platforms into a consistent format. Google reviews use a 1-5 star scale; NPS uses 0-10; some platforms use thumbs up/down. Normalization converts these into comparable metrics so you can aggregate and compare across platforms. Tools like Sentimyne handle cross-platform normalization automatically, pulling data from 12+ platforms into a unified analysis.
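A minimal sketch of one normalization approach is min-max scaling, which maps each platform's scale onto a common 0-to-1 range. The helper names are hypothetical, and treating every scale as linear is a simplifying assumption; this is not a description of how any particular tool does it.

```python
def min_max(value: float, low: float, high: float) -> float:
    """Linearly rescale a value from [low, high] to [0, 1]."""
    if high <= low:
        raise ValueError("high must be greater than low")
    if not low <= value <= high:
        raise ValueError("value is outside the expected range")
    return (value - low) / (high - low)


def normalize_stars(stars: float) -> float:
    """Normalize a 1-5 star rating."""
    return min_max(stars, 1, 5)


def normalize_0_to_10(score: float) -> float:
    """Normalize a 0-10 rating."""
    return min_max(score, 0, 10)


def normalize_thumbs(thumbs_up: bool) -> float:
    """Normalize a thumbs up/down vote."""
    return 1.0 if thumbs_up else 0.0
```

Linear rescaling makes the numbers comparable but not necessarily equivalent in meaning: a 3-star review and a 5 out of 10 both map to 0.5, yet customers may not intend them the same way.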

Batch Processing

Analyzing a large collection of reviews at once rather than processing them individually in real time. Batch processing is efficient for periodic analysis (weekly reports, monthly trend reviews) where real-time results are not required. Most review analysis tools operate in batch mode.

API (Application Programming Interface)

A standardized interface for programmatic access to a service's data and functionality. Review platforms offer APIs for accessing review data, and review analysis tools offer APIs for integrating analysis results into other systems. API access is the legally cleanest way to collect review data.

Putting It All Together

These terms do not exist in isolation — they form an interconnected system. A practical review analysis workflow connects many of these concepts:

- Collect review data from your corpus across multiple platforms via APIs

- Run the text through an NLP pipeline that handles text preprocessing, tokenization, and stop word removal

- Apply aspect-based sentiment analysis to determine feature-level sentiment and polarity

- Use entity extraction to identify products, competitors, and features

- Apply theme clustering to group related mentions

- Calculate metrics — sentiment scores, mention volume, review velocity, rating distribution

- Run trend analysis to identify sentiment drift and anomalies

- Generate a SWOT analysis synthesizing win themes, pain points, and feature request signals

- Deliver review intelligence to inform competitive intelligence and VoC programs

"You do not need to understand every technical term to benefit from review analysis. But understanding the vocabulary helps you ask better questions, evaluate tools more critically, and communicate findings more effectively."

This entire pipeline — from collection through SWOT output — is what Sentimyne automates. Rather than requiring you to build each component, Sentimyne processes reviews from 12+ platforms and delivers the strategic output in 60 seconds. The free tier provides 2 analyses per month, which is enough to evaluate whether automated review intelligence fits your workflow before committing to the Pro ($29/month) or Team ($49/month) plans.

Frequently Asked Questions

What is the difference between sentiment analysis and opinion mining?

The terms are often used interchangeably, but technically, opinion mining is the broader discipline that includes identifying opinions, extracting the aspects being discussed, determining sentiment, and attributing opinions to specific entities. Sentiment analysis is a sub-task within opinion mining focused specifically on determining the emotional tone (positive, negative, neutral) of text. In practice, most review analysis tools that claim to do "sentiment analysis" are actually performing aspects of opinion mining.

What does aspect-based sentiment analysis mean in plain language?

Aspect-based sentiment analysis (ABSA) means breaking a review into the specific things it talks about and figuring out the opinion on each one separately. If someone writes "great camera but terrible battery life," ABSA identifies two aspects (camera and battery life) and assigns sentiment to each (positive and negative, respectively). This is more useful than a single overall sentiment score because it tells you exactly what to fix and what to preserve.

How accurate is AI-powered sentiment analysis in 2026?

Modern LLM-based sentiment analysis achieves accuracy rates of 85-95% for straightforward positive/negative classification, depending on the domain and the specific model used. Accuracy drops for nuanced cases involving sarcasm, mixed sentiment, cultural references, or domain-specific jargon. For comparison, human annotators typically agree with each other about 80-85% of the time on sentiment classification, meaning top AI systems are approaching or exceeding human-level consistency.

What is the difference between review intelligence and reputation management?

Reputation management focuses on maintaining and improving how a brand is perceived publicly — responding to reviews, addressing negative feedback, and encouraging positive reviews. Review intelligence is the analytical layer: mining review data for strategic insights, competitive advantages, and product development direction. Reputation management asks "how do we look?" Review intelligence asks "what can we learn?" The best programs combine both.

Do I need to understand NLP to use review analysis tools?

No. Modern review analysis tools abstract away the technical complexity entirely. You do not need to understand tokenization, embeddings, or classification models to use a tool like Sentimyne — you provide the product or business to analyze, and the tool delivers a SWOT analysis with actionable insights. Understanding the terminology helps you evaluate tools and interpret results more critically, but it is not a prerequisite for getting value from automated review analysis.
