How to Find Fake Reviews Using AI: A Detection Guide

Learn how to detect fake reviews using AI-powered analysis. Discover the 5 warning signs of inauthentic reviews, how platforms police fakes, and how AI tools identify outlier patterns that human readers miss.

An estimated 30-40% of online reviews are fake. Let that number sink in. As many as four out of every ten reviews you read on Amazon, Google, Trustpilot, or any other major platform may have been written by someone who never used the product: someone paid to inflate ratings, someone hired to destroy a competitor, or a bot generating human-sounding text at industrial scale.

This isn't a fringe problem. The Federal Trade Commission reported that fake reviews influence approximately $152 billion in global e-commerce spending annually. Consumers make purchasing decisions based on fabricated experiences. Businesses lose sales to competitors with artificially inflated ratings. And legitimate companies find their authentic review profiles diluted by inauthentic noise.

The good news: AI is getting significantly better at detecting fake reviews than humans are. Pattern recognition algorithms can identify linguistic anomalies, behavioral patterns, and statistical outliers that no human reader would catch by scanning reviews individually.

This guide covers how to spot fake reviews manually, how AI detection works under the hood, what each major platform does about fakes, and how legitimate review analysis can protect your brand.

The Scale of the Fake Review Problem

The fake review industry has grown into a sophisticated, global operation. Understanding its scale helps contextualize why detection matters.

The Numbers

- 30-40% of online reviews are estimated to be fake, according to research from the University of Chicago and multiple industry analyses

- $152 billion in consumer spending is influenced by fake reviews annually (FTC, 2025)

- Amazon removed over 200 million suspected fake reviews in 2024 alone

- Fake review services charge $1-10 per review for basic text reviews and $15-50 for reviews with photos

- Review manipulation Facebook groups have been found with over 40,000 members coordinating fake review campaigns

Who Creates Fake Reviews — and Why

Fake reviews come from several sources, each with different motivations:

Sellers boosting their own products: The most common type. Sellers pay for positive reviews to improve their ratings and search rankings on platforms like Amazon, where a product's star rating directly impacts visibility and sales.

Competitors attacking rivals: Negative fake reviews aimed at lowering a competitor's ratings. This is particularly common in highly competitive Amazon categories where a 0.3-star rating difference can mean thousands of dollars in lost daily sales.

Review farms: Organized operations — often based overseas — that employ hundreds of people to write reviews at scale. These operations have become sophisticated enough to create realistic reviewer profiles with histories, photos, and diverse review patterns.

AI-generated reviews: The newest and fastest-growing threat. Large language models can generate convincing, unique review text in seconds. A single operator with an AI tool can produce thousands of reviews that are linguistically diverse and harder to detect than human-written fakes. Detection is an arms race playing out across every AI modality in parallel. Music distributors, for example, now screen uploaded tracks for signatures of Suno and Udio generation, and services like undetectr.com strip those signatures before upload; AI-text detection is on the same escalation curve. The lesson for review moderation is that any single detection technique will have a useful life measured in months, not years.

Incentivized reviews: A gray area where companies offer free products, discounts, or payments in exchange for reviews. While platforms require disclosure of incentivized reviews, most go undisclosed — making them effectively fake in terms of their bias.

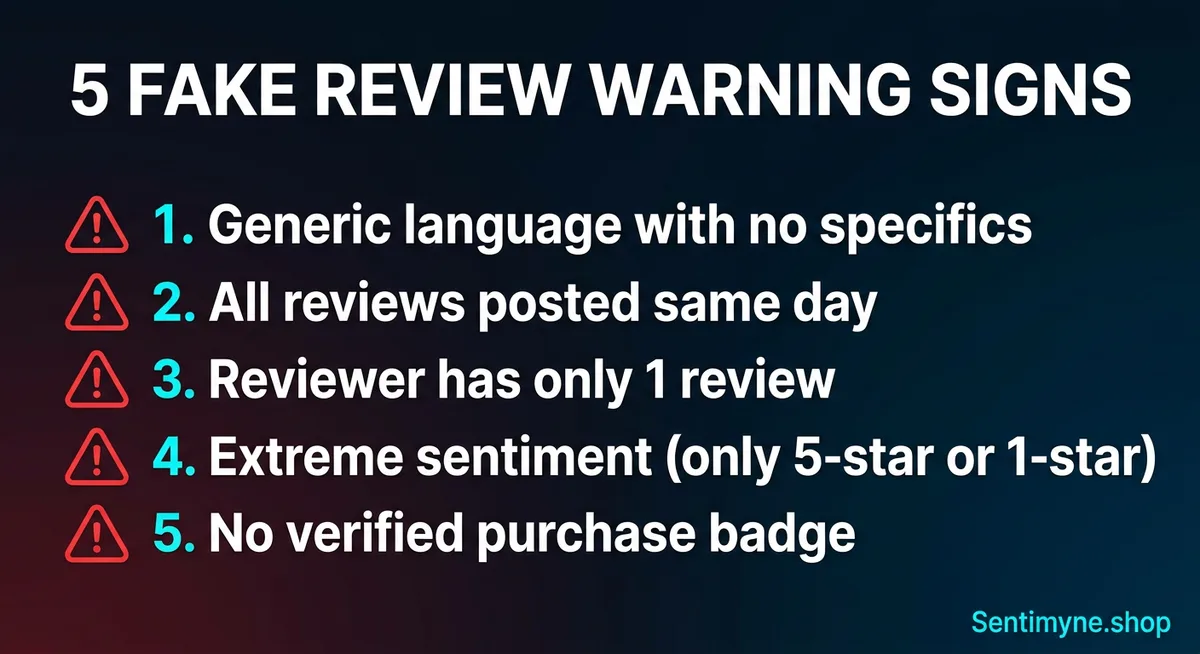

Five Warning Signs of Fake Reviews

Before diving into AI detection, here's what to look for when evaluating reviews manually. These patterns don't guarantee a review is fake — but multiple signals appearing together significantly increase the probability.

1. Generic, Non-Specific Language

Authentic reviews mention specific features, experiences, and use cases. Fake reviews tend to be vague and could apply to almost any product in the category.

Red flag examples:

- "Great product, works as described. Very happy with my purchase. Would recommend to anyone."

- "Amazing quality! Exactly what I was looking for. Fast shipping too."

- "Love it! Best one I've ever tried. Five stars all the way."

Authentic review comparison:

- "The noise cancellation is noticeably better than my old Bose QC35s, especially on the subway. Battery lasted about 38 hours on my trip to London. Only complaint is the ear cups get warm after 3+ hours."

The difference is obvious when compared side by side. Authentic reviews contain specifics — product names, use contexts, time frames, measurements, and comparisons. Fake reviews use superlatives without substance.

2. Suspicious Posting Patterns

Fake review campaigns often leave detectable timing signatures. Look for:

- Review clusters — 10+ reviews posted within 24-48 hours, especially if preceded by weeks of silence

- Date alignment — Multiple reviews posted on the exact same date with similar language patterns

- Rating spikes — Sudden jumps from 3.5 stars to 4.7 stars over a short period

- Response to negative reviews — Suspicious positive reviews appearing within days of a legitimate negative review (compensatory posting)

Authentic review patterns are organic — reviews trickle in steadily over time, with natural variation in timing, sentiment, and length.
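The cluster signal in the list above can be checked mechanically once review timestamps are available. The following is a minimal sketch, not any platform's actual rule: the function name `find_bursts` is hypothetical, and the default thresholds (10 reviews inside 48 hours) are simply taken from the first bullet.

```python
from datetime import datetime, timedelta

def find_bursts(timestamps, window=timedelta(hours=48), min_reviews=10):
    """Return (first, last, count) for each run of reviews in which at least
    `min_reviews` fall within a sliding `window`; overlapping runs are merged."""
    ts = sorted(timestamps)
    runs = []   # [first_index, last_index] pairs into ts
    left = 0
    for right in range(len(ts)):
        # Advance the left edge until the span fits inside the window.
        while ts[right] - ts[left] > window:
            left += 1
        if right - left + 1 >= min_reviews:
            if runs and left <= runs[-1][1]:
                runs[-1][1] = right          # overlaps the previous run: extend it
            else:
                runs.append([left, right])
    return [(ts[a], ts[b], b - a + 1) for a, b in runs]

# Illustrative data: three reviews a week apart, then twelve within one day.
quiet = [datetime(2025, 1, 1) + timedelta(days=7 * i) for i in range(3)]
spike = [datetime(2025, 2, 1) + timedelta(hours=2 * i) for i in range(12)]
for first, last, count in find_bursts(quiet + spike):
    print(f"{count} reviews between {first} and {last}")
```

A burst on its own is not proof of manipulation (a promotion or a viral mention can cause one too), so a flagged window is a prompt to read those reviews closely rather than a verdict.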

3. Single-Review Accounts

On platforms where reviewer profiles are visible (Amazon, Yelp, Google), check the reviewer's history. A disproportionate number of fake reviews come from accounts that:

- Have posted only 1-2 reviews total

- Were created recently (within the last 30 days)

- Have no profile photo or bio

- Have reviewed only products from the same brand or seller

- Post reviews in categories that don't logically connect (reviewing both industrial welding equipment and baby shampoo)
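Most of the account checks above reduce to simple rules over profile metadata. Here is a rough sketch under stated assumptions: the `ReviewerProfile` fields are hypothetical (no platform exposes exactly this record), the cutoffs mirror the bullets, and the category-coherence check is left out because it would need a product taxonomy.

```python
from dataclasses import dataclass, field

@dataclass
class ReviewerProfile:
    # Hypothetical fields; real platforms expose different metadata.
    review_count: int
    account_age_days: int
    has_photo: bool
    has_bio: bool
    brands_reviewed: set = field(default_factory=set)

def thin_account_signals(p: ReviewerProfile) -> list[str]:
    """List which of the thin-account warning signs apply to a profile."""
    signals = []
    if p.review_count <= 2:
        signals.append("few_reviews")
    if p.account_age_days <= 30:
        signals.append("new_account")
    if not p.has_photo and not p.has_bio:
        signals.append("empty_profile")
    # Only meaningful with at least two reviews to compare.
    if p.review_count >= 2 and len(p.brands_reviewed) == 1:
        signals.append("single_brand")
    return signals

suspect = ReviewerProfile(1, 5, False, False, {"Acme"})
regular = ReviewerProfile(40, 900, True, True, {"Acme", "Bolt", "Crest"})
print(thin_account_signals(suspect))
print(thin_account_signals(regular))
```

Counting signals rather than returning a single yes/no keeps the point made earlier: one signal alone means little, several together are worth a closer look.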

4. Extreme-Only Sentiment

Authentic review distributions follow a predictable pattern. Most products show a J-shaped curve: a large share of 5-star reviews, fewer 4-star and 3-star reviews, very few 2-star reviews, and a small uptick at 1 star. Products that have been targeted by fake reviews often show unnatural distributions.

Suspicious patterns:

- Nearly all reviews are either 5-star or 1-star with almost nothing in between

- A product with 4.8 stars has a disproportionately high number of 1-star reviews that all appeared in the same week (attack campaign)

- Every 5-star review uses similar phrasing or highlights the same feature in the same way
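The first of these patterns, ratings piled at both extremes with almost nothing in between, is easy to test for. This is a small sketch; the function names and the 5% cutoff for the middle ratings are assumptions chosen for illustration, not an established threshold.

```python
from collections import Counter

def star_histogram(ratings):
    """Share of reviews at each star level, 1 through 5."""
    if not ratings:
        raise ValueError("ratings must not be empty")
    counts = Counter(ratings)
    return {star: counts.get(star, 0) / len(ratings) for star in range(1, 6)}

def is_polarized(ratings, max_middle_share=0.05):
    """True when both extremes are present and 2-4 star reviews are nearly absent."""
    hist = star_histogram(ratings)
    middle = hist[2] + hist[3] + hist[4]
    return hist[1] > 0 and hist[5] > 0 and middle <= max_middle_share

organic = [5] * 60 + [4] * 25 + [3] * 8 + [2] * 3 + [1] * 4
suspicious = [5] * 60 + [1] * 38 + [3] * 2
print(is_polarized(organic), is_polarized(suspicious))
```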

5. No Verified Purchase Indicator

Most platforms now distinguish between verified purchase reviews and unverified reviews. While unverified reviews aren't automatically fake — some customers buy through other channels — a high proportion of unverified reviews is a warning sign.

On Amazon specifically, the "Verified Purchase" badge means Amazon's system confirmed the reviewer bought the product through the platform. Reviews without this badge are statistically more likely to be inauthentic.

How AI Detects Fake Reviews

Human reviewers can spot obvious fakes by applying the warning signs above. But sophisticated fake reviews — especially AI-generated ones — require AI detection. Here's how the technology works.

Linguistic Pattern Analysis

AI detection models analyze language patterns at a level that humans can't replicate:

- Vocabulary diversity — Fake reviews tend to use a narrower vocabulary range. Authentic reviewers use idiosyncratic language; fake reviewers (even good ones) fall into repetitive patterns across multiple reviews.

- Sentence structure — Authentic reviews vary in sentence length and complexity within a single review. Fake reviews often maintain unnaturally consistent sentence structures.

- Emotional authenticity — Genuine reviews contain natural emotional progression (setup, experience, evaluation). Fake reviews often start with the conclusion ("Amazing product!") and fill backward with vague supporting details.

- Specificity scoring — AI can measure how specific a review is by counting named entities, measurements, time references, and comparison points. Low specificity correlates strongly with inauthenticity.
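Specificity scoring can be approximated with nothing more than pattern matching. The sketch below is deliberately crude and is not how production detectors work: it counts tokens containing digits (measurements, durations, model numbers), a short hypothetical list of comparison words, and capitalized words that are not sentence-initial as a stand-in for named entities. A real system would use a trained named-entity recognizer.

```python
import re

COMPARISON_WORDS = {"than", "versus", "vs", "compared", "unlike"}  # illustrative list
FIRST_PERSON = {"i", "i've", "i'm", "i'd", "i'll"}

def specificity_score(text):
    """Fraction of words that look like concrete details (0.0 to 1.0)."""
    # Naive sentence split; decimals such as "4.5" will be split too.
    sentences = [s for s in re.split(r"[.!?]+\s*", text) if s.strip()]
    total_words = 0
    hits = 0
    for sentence in sentences:
        words = re.findall(r"[A-Za-z0-9']+", sentence)
        total_words += len(words)
        for i, word in enumerate(words):
            if any(ch.isdigit() for ch in word):
                hits += 1        # measurement, duration, or model number
            elif word.lower() in COMPARISON_WORDS:
                hits += 1        # explicit comparison
            elif i > 0 and word[0].isupper() and word.lower() not in FIRST_PERSON:
                hits += 1        # mid-sentence capital: proper-noun proxy
    return hits / total_words if total_words else 0.0

generic = "Great product, works as described. Very happy with my purchase."
detailed = ("The noise cancellation is noticeably better than my old Bose QC35s. "
            "Battery lasted about 38 hours on my trip to London.")
print(specificity_score(generic), specificity_score(detailed))
```

Run on the two example reviews from the warning-signs section, the generic text scores zero while the detailed one scores well above it, which is the gap this signal is meant to expose.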

Reviewer Behavior Analysis

Beyond the text itself, AI examines reviewer behavior patterns:

- Review velocity — How quickly a reviewer posts after account creation or after purchase

- Cross-product coherence — Whether a reviewer's product choices make logical sense (a reviewer who posts about fitness equipment, skincare, and home gym accessories is coherent; one who reviews car parts, children's toys, and enterprise software is suspicious)

- Rating distribution — Authentic reviewers have natural rating distributions. Fake reviewer accounts tend to post almost exclusively 5-star reviews (or exclusively 1-star reviews if used for attacks)

- Geographic and temporal patterns — Clusters of reviews from the same region, posted at the same time of day, suggest coordinated activity

Statistical Outlier Detection

AI detection also works at the aggregate level, analyzing entire review populations rather than individual reviews:

- Rating distribution anomalies — Does this product's review distribution match similar products in the category? Significant deviations suggest manipulation.

- Sentiment-rating misalignment — Does the text sentiment match the star rating? Fake positive reviews sometimes contain neutral or slightly negative language because the reviewer doesn't genuinely feel enthusiastic.

- Review velocity anomalies — Is this product receiving reviews at an abnormal rate compared to its sales volume? More reviews than expected suggests external manipulation.

- Language model detection — Newer AI systems can detect whether review text was generated by another AI model by analyzing statistical properties of the text (perplexity scores, token distributions, and syntactic patterns characteristic of machine generation).
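Of the checks above, sentiment-rating misalignment is the simplest to illustrate. The sketch below uses a toy word list as a stand-in for a real sentiment model; the lexicons, function names, and the rule of flagging only clear contradictions are all assumptions made for the example.

```python
import re

# Toy lexicons for illustration only; a real system would use a trained model.
POSITIVE = {"great", "love", "excellent", "amazing", "perfect", "happy"}
NEGATIVE = {"broke", "poor", "disappointed", "terrible", "refund", "flimsy"}

def lexicon_sentiment(text):
    """Score in [-1, 1]: +1 if only positive words appear, -1 if only negative."""
    words = re.findall(r"[a-z']+", text.lower())
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    if pos + neg == 0:
        return 0.0
    return (pos - neg) / (pos + neg)

def is_misaligned(text, stars):
    """True when the text's sentiment contradicts the star rating."""
    score = lexicon_sentiment(text)
    if stars >= 4:
        return score < 0
    if stars <= 2:
        return score > 0
    return False

print(is_misaligned("It broke after a week and the hinge feels flimsy.", 5))
print(is_misaligned("Love it, great sound.", 5))
```

This version flags only outright contradictions. Catching the subtler case of lukewarm text under a 5-star rating would need a sentiment model with finer resolution than a word list can offer.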

Platform-Specific Fake Review Policies

Each major review platform handles fake reviews differently. Understanding these policies helps you work within each platform's ecosystem.

Amazon

Amazon's approach is among the most aggressive of the major platforms:

- Machine learning detection removes suspected fakes before they're published

- Legal action against fake review services (Amazon has sued multiple operations)

- Vine program provides free products to trusted reviewers with mandatory disclosure

- Verified Purchase badges distinguish confirmed buyers

- Report mechanism allows brands to flag suspicious reviews

Despite these efforts, Amazon's scale makes complete prevention impossible. The marketplace removes hundreds of millions of fake reviews annually, but millions more slip through.

Trustpilot

Trustpilot uses a combination of AI and human moderation:

- Automated fraud detection flags suspicious patterns before publication

- Verification tools allow businesses to connect to their CRM and verify reviewer identity

- Consumer reporting enables users to flag suspicious reviews

- Transparency reports published annually detailing fake review removal statistics

- Legal team that pursues businesses caught buying fake reviews

Google (Google Maps / Business Reviews)

Google's review moderation relies heavily on AI:

- Automated filters block spam and fake reviews before publication

- User flagging allows anyone to report a review for policy violations

- Business response allows owners to reply but not remove reviews

- Account verification ties reviews to Google accounts (but account creation is easy)

Google's weakness is response time — flagged reviews can take weeks or months to be investigated, during which the fake review influences consumer behavior.

Yelp

Yelp has the most aggressive filtering approach:

- Recommendation algorithm — Yelp doesn't display all reviews. Its algorithm determines which reviews are "recommended" based on reviewer account quality, history, and behavior patterns. Unrecommended reviews are hidden (but accessible).

- Consumer alerts — Yelp places warnings on business pages caught soliciting reviews

- Sting operations — Yelp has posed as fake review buyers to identify and penalize businesses purchasing reviews

Yelp's filtering is controversial because it sometimes filters legitimate reviews. But it also catches a higher percentage of fakes than most platforms.

How Legitimate Review Analysis Helps Detect Fakes

Here's where review analysis tools provide an unexpected benefit. When AI analyzes all reviews for a product and builds theme patterns, outliers become visible.

Theme Pattern Analysis

Authentic reviews cluster around genuine product themes. If a wireless headphone has 500 reviews, the themes will naturally include sound quality, comfort, battery life, build quality, and connectivity. These themes emerge organically because real customers care about real product attributes.

Fake reviews often miss these natural theme patterns. They either:

- Focus on the wrong things: praising attributes that real customers rarely mention

- Miss key themes entirely: not mentioning a product attribute that 60% of authentic reviewers discuss

- Use inconsistent terminology: calling a feature by its marketing name rather than the colloquial term real users adopt

When a review analysis tool like Sentimyne processes all reviews and generates a SWOT analysis, fake reviews show up as noise that doesn't fit the established theme patterns. The AI identifies what genuine customers care about, and reviews that don't match those patterns are effectively flagged as statistical outliers.
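The general idea of theme-based outlier flagging can be sketched in a few lines. This is not Sentimyne's method, which the article does not describe: the keyword-based `THEMES` map is a hypothetical stand-in for whatever theme extraction a real tool performs, and the 30% prevalence cutoff is arbitrary.

```python
import re
from collections import Counter

# Hypothetical keyword map for a wireless headphone; real tools derive themes from data.
THEMES = {
    "sound": {"sound", "bass", "audio", "treble"},
    "comfort": {"comfort", "comfortable", "fit"},
    "battery": {"battery", "charge", "charging"},
    "connectivity": {"bluetooth", "pairing", "connection"},
}

def themes_in(text):
    """Names of the themes whose keywords appear in the review text."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return {name for name, keywords in THEMES.items() if words & keywords}

def off_theme_reviews(reviews, min_prevalence=0.3):
    """Reviews that touch none of the themes most reviewers discuss."""
    tagged = [themes_in(r) for r in reviews]
    counts = Counter(theme for tags in tagged for theme in tags)
    common = {t for t, c in counts.items() if c / len(reviews) >= min_prevalence}
    return [r for r, tags in zip(reviews, tagged) if not (tags & common)]

reviews = [
    "Bass is punchy and the battery lasts two days",
    "Comfortable fit, battery easily gets me through a flight",
    "Bluetooth pairing was instant and sound is clear",
    "Great product, would recommend to anyone",
    "Battery is fine but the sound is a bit flat",
]
print(off_theme_reviews(reviews))
```

In this toy set only the generic "Great product" review is returned, because it mentions none of the themes that the rest of the population converges on.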

Sentiment Coherence

Authentic review populations have internally coherent sentiment patterns. If most customers praise battery life but complain about the microphone, that's a coherent pattern — different attributes, different sentiment, consistent across reviewers.

Fake review injections disrupt this coherence. When 50 fake 5-star reviews suddenly appear, all praising the product generically, the sentiment profile shifts in ways that don't align with the established theme-specific patterns. AI analysis detects these shifts.

Protecting Your Brand From Fake Competitor Reviews

Fake negative reviews targeting your products or business are a real threat. Here's how to defend against them.

Monitor Review Velocity

Set up alerts for unusual review activity. If your product normally receives 5-10 reviews per week and suddenly gets 30 in two days — most of them negative — that's a potential attack pattern. The sooner you detect it, the sooner you can report it to the platform.
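An alert like this can be as simple as comparing the latest count against the product's own history. The following sketch assumes you already collect per-week review counts; the function name, the three-sigma rule, and the `min_sigma` floor are illustrative choices rather than a recommended standard.

```python
from statistics import mean, pstdev

def is_velocity_spike(history, current, k=3.0, min_sigma=1.0):
    """True when `current` exceeds the historical mean by more than k standard deviations.

    `min_sigma` keeps a very steady history from triggering on tiny changes.
    """
    mu = mean(history)
    sigma = max(pstdev(history), min_sigma)
    return current > mu + k * sigma

weekly_counts = [5, 7, 10, 6, 8, 9, 5, 7]    # a normal 5-10 reviews per week
print(is_velocity_spike(weekly_counts, 30))  # the sudden jump described above
print(is_velocity_spike(weekly_counts, 10))  # a busy but ordinary week
```

Pairing the spike flag with the share of negative ratings in the same period helps separate an attack from a successful promotion, since both raise volume.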

Document and Report

When you identify suspected fake reviews:

1. Screenshot everything: reviews, reviewer profiles, posting dates, and patterns

2. Report to the platform using their official mechanism (don't just flag individual reviews; provide the pattern evidence)

3. Follow up: platform responses can be slow. Escalate through business support channels if initial reports aren't acted on

4. Consider legal options: for severe cases, consult with an attorney about defamation or unfair business practice claims

Build a Strong Authentic Review Base

The best defense against fake reviews is a strong foundation of authentic ones. A product with 500 genuine reviews is barely affected by 20 fake negative ones — the fakes are drowned out statistically and visually. A product with only 15 genuine reviews is much more vulnerable.
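The statistical half of that claim is easy to verify with arithmetic. In the sketch below, the 4.5-star genuine average and the assumption that every fake is a 1-star review are illustrative inputs, not figures from any dataset.

```python
def rating_after_attack(genuine_count, genuine_mean, fake_count, fake_stars=1):
    """Average star rating once `fake_count` fake reviews are mixed in."""
    total_stars = genuine_count * genuine_mean + fake_count * fake_stars
    return total_stars / (genuine_count + fake_count)

# Same attack (20 one-star fakes), two different review bases.
large_base = rating_after_attack(500, 4.5, 20)   # (2250 + 20) / 520
small_base = rating_after_attack(15, 4.5, 20)    # (67.5 + 20) / 35
print(round(large_base, 2), round(small_base, 2))  # 4.37 2.5
```

Under these assumptions the product with 500 genuine reviews drops from 4.5 to roughly 4.37 stars, while the product with 15 genuine reviews falls to 2.5.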

Invest in review collection programs that encourage authentic customers to share their experiences. Post-purchase email sequences, review incentives (compliant with platform policies), and exceptional customer experiences all build the review volume that makes your brand resilient to attacks.

Use Regular Review Analysis as Early Warning

Running periodic review analysis on your own products serves as an early warning system. If your SWOT analysis shows a sudden new "weakness" that doesn't match customer service tickets, support calls, or return data — investigate whether those negative reviews are legitimate.

Sentimyne makes this practical. Run a monthly analysis on your key products. Compare each month's SWOT to the previous one. Unexpected theme changes that don't correlate with actual product or service changes may indicate fake review activity.
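The month-over-month comparison can be scripted if each report is reduced to theme shares by hand or by export. The input format here is an assumption made for the example: a plain dict mapping a theme name to the fraction of reviews mentioning it, which is not a documented Sentimyne output. The function name and the 10-point jump threshold are likewise hypothetical.

```python
def theme_shifts(previous, current, min_jump=0.10):
    """Themes whose share of mentions moved by at least `min_jump` between two periods."""
    shifts = {}
    for theme in set(previous) | set(current):
        delta = current.get(theme, 0.0) - previous.get(theme, 0.0)
        if abs(delta) >= min_jump:
            shifts[theme] = round(delta, 3)
    return shifts

last_month = {"battery": 0.30, "microphone": 0.20}
this_month = {"battery": 0.28, "microphone": 0.22, "overheating": 0.18}
print(theme_shifts(last_month, this_month))
```

A theme that appears from nowhere, like "overheating" in this made-up data, is the kind of change worth cross-checking against support tickets and return data before treating it as a genuine product issue.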

The free tier (2 reports/month) supports monitoring your most important product and one competitor. The Pro plan ($29/month) enables monitoring across your full product catalog, while the Team plan ($49/month) is designed for agencies and brands managing multiple product lines.

The Future of Fake Review Detection

The arms race between fake review creators and detection systems is accelerating. AI-generated reviews are becoming harder to distinguish from authentic ones, but detection technology is advancing in parallel.

Emerging detection approaches include:

- Blockchain-verified purchase reviews — Cryptographic proof that a reviewer actually purchased the product

- Biometric verification — Some platforms are exploring facial recognition or fingerprint verification for reviewers

- Cross-platform identity verification — Linking reviewer identity across multiple platforms to build trust scores

- Real-time AI detection — Processing reviews through detection models before they're published, rather than retroactively

The platforms that invest most heavily in detection will earn the highest consumer trust — and that trust translates directly into business value for brands with legitimate reviews.

Frequently Asked Questions

How accurate is AI at detecting fake reviews?

Current AI detection models achieve approximately 85-92% accuracy in controlled research settings, depending on the sophistication of the fake reviews. AI-generated fakes are harder to detect than human-written fakes, but detection models are improving rapidly. No detection system is perfect — some sophisticated fakes will evade detection, and some legitimate reviews may be flagged as suspicious. The combination of AI analysis and human judgment provides the best results.

Can I get fake reviews removed from my product pages?

Yes, but the process varies by platform and requires patience. On Amazon, use Brand Registry's "Report a Violation" tool with evidence of the fake review pattern. On Google, flag individual reviews and submit a "redressal" form for coordinated attacks. On Trustpilot, report through their business portal with documentation. Response times range from 48 hours to several weeks. Providing pattern evidence (multiple suspicious reviews, posting timing, reviewer account analysis) significantly improves removal rates compared to flagging individual reviews.

How can I tell if my competitor is buying fake reviews?

Look for the warning signs at scale: sudden rating spikes, clusters of reviews posted within short timeframes, multiple reviewers with thin account histories, and generic language that doesn't mention specific product attributes. Run a review analysis on their product. If the AI-generated SWOT shows "strengths" that seem vague or disconnected from what authentic reviewers typically discuss in the category, their review profile may be inflated. You can also check ReviewMeta or Fakespot for Amazon-specific fake review scores.

Are incentivized reviews considered fake?

Legally, incentivized reviews aren't inherently fake — but they must be disclosed, and most aren't. The FTC requires clear disclosure when a reviewer received anything of value (free product, discount, payment) in exchange for a review. Undisclosed incentivized reviews violate FTC guidelines and most platform policies. They also skew heavily positive (92% of incentivized reviews are 5 stars, compared to 72% of organic reviews), making them misleading even when the reviewer genuinely used the product.

Should I use a fake review detection tool alongside review analysis?

Yes. They serve complementary purposes. Fake review detection tools (like Fakespot and ReviewMeta) assess the authenticity of individual reviews and provide overall trust scores for products. Review analysis tools like Sentimyne analyze the content and themes of reviews to generate strategic insights. Using both together gives you a cleaner dataset for analysis: you can identify and discount suspected fakes before drawing conclusions from the remaining authentic reviews, ensuring your SWOT analysis and strategic decisions are based on genuine customer sentiment.
