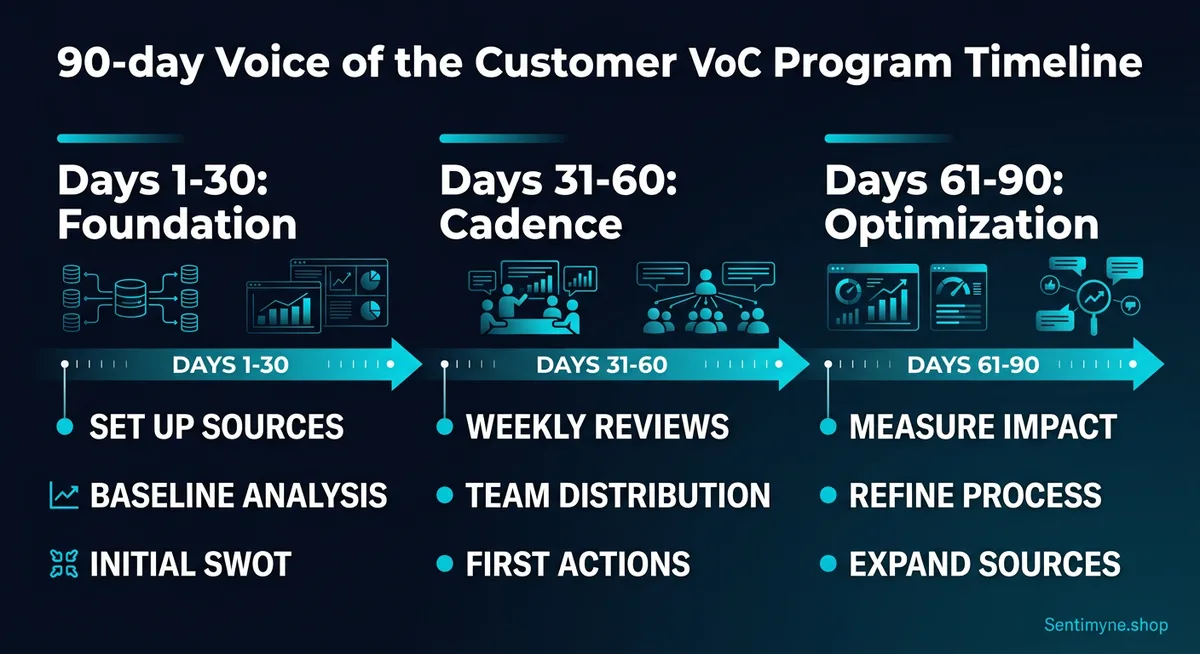

How to Build a Voice of Customer Program: From Zero to Customer-Centric in 90 Days

A complete 90-day implementation plan for building a Voice of Customer program from scratch. Covers foundation (Days 1-30), cadence (Days 31-60), and optimization (Days 61-90) with practical frameworks for collecting, analyzing, and distributing customer insights across your organization.

Building a Voice of Customer program feels like assembling furniture without instructions. You know the outcome you want — an organization that systematically listens to customers, extracts insights, and acts on them. But the path from "we should really listen to our customers more" to a functioning VoC program with regular cadence, clear ownership, and measurable impact is surprisingly unclear.

Most VoC initiatives fail within their first six months. They start with enthusiasm, produce a few interesting reports, and then gradually fade as competing priorities take over and nobody can point to specific business outcomes the program generated. The insight reports pile up unread. The dashboards get checked less frequently. The weekly review meeting becomes biweekly, then monthly, then theoretical.

The 90-day framework in this guide is designed to prevent that decay. It moves fast enough to generate visible wins before organizational patience expires, but systematically enough to build lasting infrastructure. By Day 90, you will have a VoC program that runs on its own rhythm — not on the enthusiasm of whoever championed it.

Before You Start: The VoC Readiness Checklist

Before diving into the 90-day plan, verify that the prerequisites are in place:

- [ ] Executive sponsor identified. Someone with budget authority and cross-functional influence must champion the program. Without this, the program dies at the first resource conflict.

- [ ] Review volume exists. You need at least 50 reviews across your platforms to have enough raw material. If you have fewer, spend the first 30 days generating review volume before starting the VoC program.

- [ ] One person owns it. VoC needs a single owner who is accountable for execution, even if insights are distributed across teams. This can be part-time — a dedicated hour per week is enough to start.

- [ ] Willingness to act. The most common VoC failure is generating insights that nobody acts on. Before starting, confirm that leadership is prepared to make changes based on what customers say, even when it contradicts internal assumptions.

"A VoC program without executive sponsorship and willingness to act is just an expensive listening exercise. Confirm both before you invest a single hour."

Phase 1: Foundation (Days 1-30)

The first 30 days are about infrastructure — identifying sources, setting up collection, establishing a baseline, and defining the metrics that will prove the program's value.

Week 1: Source Identification and Mapping

Day 1-2: Audit all existing feedback sources.

Create a complete inventory of every place customers currently provide feedback:

| Source Type | Specific Platform | Current Volume | Currently Monitored? | Structured? |
|---|---|---|---|---|
| Review platforms | Google, Yelp, Trustpilot, G2, etc. | Count per platform | Yes/No | Semi-structured |
| Support tickets | Zendesk, Intercom, email, etc. | Monthly volume | Yes (for resolution) | Semi-structured |
| Survey responses | NPS, CSAT, post-purchase | Response rate | Partially | Structured |
| Social media | Twitter/X, Instagram, LinkedIn, Facebook | Monthly mentions | Sporadically | Unstructured |
| Sales call notes | CRM records, Gong recordings | Monthly volume | Rarely | Unstructured |
| Community forums | Reddit, own community, Discord | Monthly posts | Sometimes | Unstructured |
| App store reviews | Apple, Google Play | Monthly volume | Sometimes | Semi-structured |
| Direct feedback | Emails, phone calls, in-person | Monthly volume | Never aggregated | Unstructured |

Most companies discover they have far more feedback sources than they realized; the data just sits in silos. A support team handling 500 tickets per month is sitting on more customer intelligence than most companies' annual survey programs collect, yet those tickets have typically never been analyzed for strategic insights.

Day 3-5: Prioritize sources by signal quality.

Not all sources deserve equal attention. Rank by three criteria:

- Volume — Higher volume means more statistically reliable insights

- Authenticity — Unsolicited feedback (reviews, support tickets) is more honest than prompted feedback (surveys)

- Actionability — Some sources produce clearer action items than others

For most businesses, the top three sources will be: online reviews, support tickets, and NPS/CSAT survey verbatim comments. These three alone provide sufficient signal for a robust VoC program.
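
The three-criteria ranking can be sketched as a simple scoring exercise. The 1-to-5 scales, the equal weighting, and the example scores below are illustrative assumptions, not values prescribed by this guide; substitute your own judgments from the source inventory.

```python
from dataclasses import dataclass


@dataclass
class FeedbackSource:
    name: str
    volume: int         # 1 (low) to 5 (high), judged from monthly volume
    authenticity: int   # 1 (heavily prompted) to 5 (fully unsolicited)
    actionability: int  # 1 (vague) to 5 (clear action items)


def priority_score(source: FeedbackSource) -> int:
    """Sum the three criteria. Equal weighting is an assumption."""
    return source.volume + source.authenticity + source.actionability


def rank_sources(sources):
    """Return sources ordered from highest to lowest priority score."""
    return sorted(sources, key=priority_score, reverse=True)


# Hypothetical scores for illustration only.
sources = [
    FeedbackSource("Online reviews", 4, 5, 5),
    FeedbackSource("Support tickets", 5, 5, 3),
    FeedbackSource("Survey verbatims", 3, 3, 4),
    FeedbackSource("Social media", 3, 4, 2),
    FeedbackSource("Sales call notes", 2, 3, 3),
]

for source in rank_sources(sources)[:3]:
    print(f"{source.name}: {priority_score(source)}")
```

If one criterion matters more in your business (for example, actionability for a small team), multiplying that field by a weight before summing is a natural extension.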

Week 2: Collection Infrastructure

Day 6-10: Set up systematic collection.

The goal is a single location where all feedback is accessible. This does not require enterprise software: a well-structured Google Sheet or Notion database works for companies with fewer than 500 reviews per month.

Minimum viable VoC collection system:

- Review aggregation — Use Sentimyne to pull reviews from 12+ platforms into structured SWOT analyses. This eliminates the most time-consuming part of review collection — manually checking each platform.

- Support ticket tagging — Add a "VoC Theme" field to your ticketing system. Train support agents to tag each ticket with a primary theme (e.g., "pricing confusion," "feature request," "bug report," "onboarding issue"). This takes agents five seconds per ticket and produces a categorized dataset over time.

- Survey verbatim collection — Export the open-text responses from your NPS and CSAT surveys into your central VoC repository. The scores are useful but the verbatim comments are where the insights live.

- Social listening — Set up Google Alerts for your brand name, product names, and key competitor names. This catches mentions across the web without requiring social media monitoring software.
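
Once agents tag tickets with a theme, even a basic export can be summarized in a few lines. The sketch below assumes a hypothetical export where each ticket is a dictionary with a `voc_theme` field; the field name and the sample tickets are made up for illustration and are not tied to any particular ticketing system.

```python
from collections import Counter

# Hypothetical export of tagged tickets; "voc_theme" is an assumed field name.
tickets = [
    {"id": 101, "voc_theme": "pricing confusion"},
    {"id": 102, "voc_theme": "onboarding issue"},
    {"id": 103, "voc_theme": "bug report"},
    {"id": 104, "voc_theme": "onboarding issue"},
    {"id": 105, "voc_theme": None},  # agent did not tag this ticket
    {"id": 106, "voc_theme": "onboarding issue"},
    {"id": 107, "voc_theme": "feature request"},
]


def theme_counts(tickets):
    """Count tickets per theme, skipping untagged tickets."""
    return Counter(t["voc_theme"] for t in tickets if t.get("voc_theme"))


def untagged_share(tickets):
    """Fraction of tickets with no theme, a quick check on tagging discipline."""
    untagged = sum(1 for t in tickets if not t.get("voc_theme"))
    return untagged / len(tickets)


counts = theme_counts(tickets)
for theme, n in counts.most_common():
    print(f"{theme}: {n}")
print(f"Untagged: {untagged_share(tickets):.0%}")
```

Watching the untagged share alongside the theme counts gives an early signal if the five-second tagging habit is slipping.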

Week 3: Baseline SWOT Analysis

Day 11-17: Establish your starting point.

Before you can measure improvement, you need to know where you stand. This baseline becomes the reference point for every future analysis.

Run your baseline SWOT:

Using Sentimyne, run a comprehensive SWOT analysis across all available review platforms. Document the results in detail:

Strengths Baseline:

- List every strength identified, with the approximate percentage of reviews that mention it

- Rank strengths by frequency and intensity

- Note which strengths align with your brand positioning (these are assets to protect)

Weaknesses Baseline:

- List every weakness, again with frequency estimates

- Rank by impact: which weaknesses correlate with the lowest star ratings?

- Identify which weaknesses are operational (fixable) vs. structural (require major investment)

Opportunities Baseline:

- Catalog customer suggestions and wishes

- Cross-reference with your product roadmap: are you already building what customers want?

- Identify opportunities that competitors are also not addressing (blue ocean)

Threats Baseline:

- Note competitive mentions and the context (are customers switching away or comparing?)

- Identify market trends mentioned in reviews (changing expectations, new alternatives)

- Flag any emerging negative themes that were not present six months ago

This baseline SWOT becomes your Day 0 snapshot. Print it out, share it with your team, and refer back to it at Day 30, Day 60, and Day 90.
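
If you also keep a themed list of reviews in your own spreadsheet, the frequency and star-rating figures in the baseline can be computed directly. This is a minimal sketch under the assumption that each review has a star rating and a list of hand-assigned themes; the sample data and theme names are hypothetical, and this is not how any specific tool computes its SWOT.

```python
from collections import defaultdict

# Hypothetical hand-tagged reviews for illustration.
reviews = [
    {"stars": 5, "themes": ["fast delivery"]},
    {"stars": 2, "themes": ["app crashes", "fast delivery"]},
    {"stars": 1, "themes": ["app crashes"]},
    {"stars": 4, "themes": ["fast delivery", "helpful support"]},
    {"stars": 3, "themes": ["confusing navigation"]},
]


def theme_baseline(reviews):
    """Per theme: share of reviews mentioning it and average star rating."""
    stars_by_theme = defaultdict(list)
    for review in reviews:
        for theme in set(review["themes"]):  # count each theme once per review
            stars_by_theme[theme].append(review["stars"])
    total = len(reviews)
    return {
        theme: {
            "mention_pct": round(100 * len(stars) / total, 1),
            "avg_stars": round(sum(stars) / len(stars), 2),
        }
        for theme, stars in stars_by_theme.items()
    }


def lowest_rated(baseline, n):
    """Themes associated with the lowest average star ratings."""
    return sorted(baseline, key=lambda t: baseline[t]["avg_stars"])[:n]


baseline = theme_baseline(reviews)
print(baseline["fast delivery"])
print(lowest_rated(baseline, 2))
```

Themes with a high mention percentage and a low average rating are the weaknesses most worth ranking first.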

Week 4: Define Success Metrics

Day 18-25: Establish VoC KPIs.

A VoC program without defined metrics will be killed during the next budget review. Establish metrics across three layers:

Program Health Metrics (Is the VoC program itself running well?)

| Metric | Baseline | Day 30 Target | Day 60 Target | Day 90 Target |
|---|---|---|---|---|
| Review sources monitored | Current count | +2 sources | All priority sources | All sources |
| Weekly VoC routine completion rate | 0% | 75% | 90% | 100% |
| Insight reports distributed | 0 | 2 per month | 4 per month | 4 per month |
| Action items generated | 0 | 4 per month | 6 per month | 8 per month |
| Action items completed | 0 | 1 per month | 3 per month | 5 per month |

Customer Sentiment Metrics (Are customers happier?)

| Metric | Baseline | Day 90 Target |
|---|---|---|
| Average star rating (primary platform) | Current | +0.1 to +0.2 stars |
| Negative review percentage | Current % | -5 to -10 percentage points |
| Top weakness mention frequency | Current % | -20% frequency |
| Review response rate | Current % | 100% |
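
The first two sentiment metrics are straightforward to compute from a list of star ratings. The sketch below treats 1-star and 2-star reviews as "negative", which is an assumption you may want to change, and the rating lists are invented sample data.

```python
def sentiment_metrics(star_ratings, negative_max=2):
    """Average rating and share of negative reviews.

    Reviews at or below `negative_max` stars count as negative;
    that threshold is an assumption of this sketch.
    """
    total = len(star_ratings)
    if total == 0:
        raise ValueError("no reviews to summarize")
    negatives = sum(1 for stars in star_ratings if stars <= negative_max)
    return {
        "average_stars": round(sum(star_ratings) / total, 2),
        "negative_pct": round(100 * negatives / total, 1),
    }


# Hypothetical ratings at baseline and at Day 90.
day0 = sentiment_metrics([5, 4, 2, 1, 5, 3, 4, 2, 5, 4])
day90 = sentiment_metrics([5, 4, 2, 3, 5, 3, 4, 2, 5, 4])

star_change = round(day90["average_stars"] - day0["average_stars"], 2)
point_change = day90["negative_pct"] - day0["negative_pct"]
print(f"Average rating change: {star_change:+.1f} stars")
print(f"Negative review change: {point_change:+.1f} percentage points")
```

Reporting the negative-review change in percentage points, as the table does, avoids confusion with relative percentage changes.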

Business Impact Metrics (Is VoC driving business results?)

| Metric | Baseline | Day 90 Target |
|---|---|---|
| Product changes driven by VoC data | 0 | 3+ |
| Marketing messages updated from VoC language | 0 | 5+ |
| Support process improvements from VoC | 0 | 2+ |
| Revenue impact (estimated) | $0 | Positive trend |

Day 26-30: Present the foundation to leadership.

Create a brief presentation covering: sources identified, collection system established, baseline SWOT results, defined metrics, and the plan for Phase 2. This is not just a report — it is a recommitment checkpoint. Getting leadership to affirm the program at Day 30 ensures continued support through the harder work of Phases 2 and 3.

Phase 2: Cadence (Days 31-60)

Phase 1 built the infrastructure. Phase 2 establishes the rhythm — the recurring activities that transform VoC from a project into a program.

Establishing the Weekly Review Routine

The VoC Weekly (30-45 minutes, same day each week):

- Collect (10 minutes): Pull the week's new reviews, support ticket themes, and any social mentions into your central repository. If using Sentimyne, run a fresh analysis on new review data.

- Analyze (10 minutes): Identify this week's primary themes. What is new? What is recurring? What is getting better or worse? Compare to your baseline SWOT.

- Distribute (5 minutes): Send a brief VoC summary to relevant teams. Keep it concise, with three to five bullet points at most. Example format:

> This Week's VoC Summary:
> - 12 new reviews across Google and Trustpilot (avg 4.2 stars)
> - Top positive theme: Fast delivery (mentioned 5 times)
> - Top negative theme: Mobile app crashes (mentioned 3 times, up from 0 last month)
> - Competitor mention: 2 reviews compared us favorably to [Competitor] on pricing
> - Action needed: Mobile app stability, escalate to engineering

- Act (10 minutes): Review last week's action item. Is it complete? What is this week's action item based on the analysis? Assign an owner and a deadline.
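
The Distribute step can be partly automated once the week's reviews sit in one place. The following is a rough sketch that assumes each review has already been given a sentiment label and a theme (by hand or by a tool); the dictionary keys and sample reviews are hypothetical.

```python
from collections import Counter


def weekly_summary(reviews, action):
    """Build a short text summary from one week's reviews.

    Each review is a dict with 'stars', 'sentiment' and 'theme' keys,
    an assumed structure for this sketch.
    """
    if not reviews:
        return "This Week's VoC Summary:\n- No new reviews this week"
    avg = sum(r["stars"] for r in reviews) / len(reviews)
    lines = [
        "This Week's VoC Summary:",
        f"- {len(reviews)} new reviews (avg {avg:.1f} stars)",
    ]
    for label in ("positive", "negative"):
        themes = Counter(r["theme"] for r in reviews if r["sentiment"] == label)
        if themes:
            theme, count = themes.most_common(1)[0]
            lines.append(f"- Top {label} theme: {theme} (mentions: {count})")
    lines.append(f"- Action needed: {action}")
    return "\n".join(lines)


# Hypothetical reviews collected this week.
reviews = [
    {"stars": 5, "sentiment": "positive", "theme": "fast delivery"},
    {"stars": 5, "sentiment": "positive", "theme": "fast delivery"},
    {"stars": 4, "sentiment": "positive", "theme": "helpful support"},
    {"stars": 2, "sentiment": "negative", "theme": "mobile app crashes"},
    {"stars": 1, "sentiment": "negative", "theme": "mobile app crashes"},
]

summary = weekly_summary(reviews, "Escalate mobile app stability to engineering")
print(summary)
```

The output is plain text on purpose, so it can be pasted into an email or chat message without further formatting.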

Distributing Insights to Teams

A frequent VoC failure is insight hoarding: the VoC owner generates great analysis that never reaches the people who can act on it. Establish distribution channels:

Product team: Monthly VoC digest focusing on feature requests, usability complaints, and competitive feature comparisons. Include direct customer quotes. Product managers respond to quantified pain points — "37% of negative reviews mention confusing navigation" is actionable in a way that "some customers find it hard to use" is not.

Marketing team: Quarterly positioning update focusing on customer language, brand attributes, and competitive perception. Provide the exact words customers use to describe your strengths — these become your most authentic marketing copy.

Support/CX team: Weekly theme update focusing on emerging issues, resolution satisfaction, and response time feedback. Support teams should see the review feedback loop — when they resolve an issue well, it shows up in positive reviews.

Sales team: Monthly competitive intelligence brief drawn from competitor review analysis. Include competitor weaknesses that sales can address in conversations and customer-validated value propositions.

Leadership: Monthly executive summary with sentiment trends, key metrics, and business impact of VoC-driven changes. Keep it to one page. Include a "decisions needed" section if any insights require executive action.

Taking First Actions and Tracking Results

By Day 45, your VoC program should have generated at least four specific action items. Track them using a simple format:

| Action Item | Source | Owner | Deadline | Status | Impact Measurement |
|---|---|---|---|---|---|
| Fix mobile app crash on checkout | Reviews (3 mentions) | Engineering | Day 50 | In progress | Track crash-related review mentions |
| Add sizing guide to product pages | Support tickets (15/month) | Product | Day 55 | Completed | Track sizing-related returns and complaints |
| Update homepage copy with customer language | VoC language analysis | Marketing | Day 40 | Completed | A/B test conversion rate |
| Respond to all Google reviews within 24 hours | Baseline: 40% response rate | CX | Ongoing | In progress | Track response rate weekly |
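
If the tracker outgrows a spreadsheet, the same columns map cleanly onto a small data structure. This sketch mirrors the table above; the class name, the use of program-day numbers for deadlines, and the helper functions are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ActionItem:
    description: str
    source: str
    owner: str
    deadline_day: Optional[int]  # program day; None means ongoing
    status: str                  # "In progress" or "Completed"
    impact_measurement: str


items = [
    ActionItem("Fix mobile app crash on checkout", "Reviews (3 mentions)",
               "Engineering", 50, "In progress",
               "Track crash-related review mentions"),
    ActionItem("Add sizing guide to product pages", "Support tickets (15/month)",
               "Product", 55, "Completed",
               "Track sizing-related returns and complaints"),
    ActionItem("Update homepage copy with customer language",
               "VoC language analysis", "Marketing", 40, "Completed",
               "A/B test conversion rate"),
    ActionItem("Respond to all Google reviews within 24 hours",
               "Baseline: 40% response rate", "CX", None, "In progress",
               "Track response rate weekly"),
]


def overdue(items, today):
    """Open items whose deadline day has passed. Ongoing items are skipped."""
    return [
        item for item in items
        if item.status != "Completed"
        and item.deadline_day is not None
        and item.deadline_day < today
    ]


def completed_count(items):
    """Number of items marked as completed."""
    return sum(1 for item in items if item.status == "Completed")


for item in overdue(items, today=52):
    print(f"Overdue: {item.description} (owner: {item.owner})")
print(f"Completed: {completed_count(items)} of {len(items)}")
```

Reviewing the overdue list during the Act step of the weekly routine keeps ownership and deadlines visible.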

Tracking the Sentiment Baseline

Compare the SWOT analyses you run during Phase 2 to the baseline you established in Week 3:

- Are the same weaknesses present? (If yes, action items may not be addressing root causes)

- Are any new weaknesses emerging? (If yes, something has changed — investigate)

- Are strengths stable or growing? (Growing strengths confirm you are protecting them)

- Are opportunities being captured? (Cross-reference with your roadmap)

If you are using Sentimyne, run a fresh SWOT analysis at Day 45 and Day 60 to track movement. Even at the free tier, using your two monthly analyses at strategic intervals gives you trend data.
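
The first two comparison questions reduce to set operations on the weakness themes from each analysis. The sketch below assumes you have copied the weakness themes from the baseline and the latest analysis into two lists; the theme names are hypothetical.

```python
def compare_weaknesses(baseline, current):
    """Split weakness themes into persisting, new, and resolved."""
    baseline_set, current_set = set(baseline), set(current)
    return {
        "persisting": sorted(baseline_set & current_set),
        "new": sorted(current_set - baseline_set),
        "resolved": sorted(baseline_set - current_set),
    }


# Hypothetical weakness themes from two analyses.
baseline_weaknesses = ["slow checkout", "mobile app crashes", "confusing navigation"]
day60_weaknesses = ["mobile app crashes", "confusing sizing", "confusing navigation"]

comparison = compare_weaknesses(baseline_weaknesses, day60_weaknesses)
for label, themes in comparison.items():
    print(f"{label}: {', '.join(themes) or 'none'}")
```

Exact string matching only works if theme names stay consistent between analyses, so it helps to normalize the wording before comparing.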

Phase 3: Optimization (Days 61-90)

Phase 3 is where the VoC program transitions from "new initiative" to "how we operate." The focus shifts to measuring impact, refining processes, expanding coverage, and building the business case for continued investment.

Measuring the Impact of Changes

For every action taken based on VoC data, measure the before-and-after:

Measurement framework:

- Identify the VoC signal — "23% of negative reviews mentioned slow checkout"

- Document the action — "Redesigned checkout flow, reduced steps from 5 to 3"

- Set the measurement window — "Track checkout mentions in reviews for 60 days post-change"

- Compare — "Checkout complaints dropped from 23% to 8% of negative reviews"

- Estimate business impact — "Checkout-related 1-star reviews decreased by 65%. Estimated revenue recovery: $X based on reduced cart abandonment"

Not every action will produce measurable results within the 90-day window. Some improvements take months to show up in reviews. But documenting the expected measurement timeline for each action prevents premature conclusions.
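
The Compare step involves two different numbers that are easy to mix up: the change in percentage points and the relative change. A small sketch using the checkout figures from the framework above (the function names are illustrative):

```python
def point_change(before_pct, after_pct):
    """Change in percentage points (for example, 23% to 8% is -15 points)."""
    return after_pct - before_pct


def relative_change_pct(before_pct, after_pct):
    """Change relative to the starting value, as a percentage."""
    if before_pct == 0:
        raise ValueError("relative change is undefined for a zero baseline")
    return round(100 * (after_pct - before_pct) / before_pct, 1)


before, after = 23.0, 8.0  # share of negative reviews mentioning checkout
print(f"Point change: {point_change(before, after):+.1f} points")
print(f"Relative change: {relative_change_pct(before, after):+.1f}%")
```

A drop from 23% to 8% is 15 percentage points, or roughly 65% in relative terms, which lines up with the 65% decrease quoted in the example. State which of the two you are reporting so leadership does not misread the result.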

Refining the Process

By Day 60, you have enough experience to identify what is working and what is not in your VoC process:

Common refinements at this stage:

- Collection frequency adjustment. You may discover that weekly collection is too frequent for some sources and not frequent enough for others. Adjust to match the natural rhythm of each source.

- Theme taxonomy refinement. Your initial theme categories will be too broad or too granular. Refine them based on 60 days of categorization experience.

- Distribution format tuning. Some teams want more detail, others want less. Customize the format for each audience rather than sending one-size-fits-all reports.

- Action item process improvement. Are action items getting completed on time? If not, the problem is usually either unclear ownership or unrealistic deadlines. Adjust accordingly.

Expanding Sources

If your initial program focused on two to three sources, Phase 3 is the time to expand:

- Add competitor review monitoring. Run monthly Sentimyne SWOT analyses on your top two competitors. Track their weaknesses for sales enablement and their strengths for competitive response.

- Integrate support ticket data. If support tagging started in Phase 1, you now have 60 days of tagged data. Analyze theme trends across the full period.

- Connect sales feedback. Start capturing why deals are won and lost, and cross-reference with review themes. If reviews mention a weakness that also appears in lost deal reasons, that weakness has quantified revenue impact.
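
Connecting sales feedback to review themes can start as a simple cross-reference. The sketch below assumes lost deals are recorded with a single loss reason that uses the same wording as your review themes; the field names, themes, and deal values are all hypothetical.

```python
from collections import defaultdict


def lost_revenue_by_weakness(review_weaknesses, lost_deals):
    """Sum lost deal value for loss reasons that also appear as review weaknesses."""
    known = set(review_weaknesses)
    totals = defaultdict(float)
    for deal in lost_deals:
        if deal["loss_reason"] in known:
            totals[deal["loss_reason"]] += deal["value"]
    return dict(totals)


# Hypothetical data for illustration.
review_weaknesses = ["mobile app crashes", "confusing navigation"]
lost_deals = [
    {"account": "A", "loss_reason": "mobile app crashes", "value": 12000},
    {"account": "B", "loss_reason": "price", "value": 8000},
    {"account": "C", "loss_reason": "mobile app crashes", "value": 5000},
    {"account": "D", "loss_reason": "confusing navigation", "value": 3000},
]

overlap = lost_revenue_by_weakness(review_weaknesses, lost_deals)
for weakness, value in sorted(overlap.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{weakness}: ${value:,.0f} in lost deals")
```

In practice, loss reasons and review themes rarely share exact wording, so expect to maintain a small mapping between the two vocabularies.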

Presenting ROI to Leadership

The Day 90 presentation is the most important deliverable of the entire program. It determines whether VoC continues to receive resources and attention.

Structure the presentation around outcomes, not activities:

What we learned (2 slides):

- Top three insights that surprised us or contradicted internal assumptions

- Most significant trend change since the Day 0 baseline

- Competitive intelligence that informed strategic decisions

What we did (2 slides):

- Actions taken with before/after measurements

- Revenue or cost impact (estimated when exact figures are not available)

- Customer sentiment improvement quantified (rating changes, complaint reduction)

What we recommend (1 slide):

- Continue, expand, or adjust the VoC program

- Specific investments needed for the next stage of program maturity (tools, people, processes)

- Top three priorities for the next 90 days based on current VoC data

The financial case (1 slide):

- Total cost of the VoC program to date (time, tools, actions taken)

- Total estimated impact (revenue protected, revenue generated, cost avoided)

- ROI calculation
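
The ROI calculation on the financial slide can be kept very simple. The sketch below uses the common formula of net gain divided by cost; every number in it is a made-up placeholder, so replace them with your own estimates and label them as estimates in the presentation.

```python
def program_cost(owner_hours, hourly_rate, tool_cost, action_cost):
    """Total program cost: owner time plus tools plus the cost of actions taken."""
    return owner_hours * hourly_rate + tool_cost + action_cost


def roi_pct(estimated_impact, total_cost):
    """Return on investment as a percentage: (impact - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    return round(100 * (estimated_impact - total_cost) / total_cost, 1)


# Placeholder figures for illustration only.
cost = program_cost(owner_hours=40, hourly_rate=50.0, tool_cost=100.0, action_cost=900.0)
impact = sum([
    4000.0,  # revenue protected (estimated)
    2500.0,  # revenue generated (estimated)
    1000.0,  # cost avoided (estimated)
])

print(f"Total cost: ${cost:,.0f}")
print(f"Estimated impact: ${impact:,.0f}")
print(f"ROI: {roi_pct(impact, cost)}%")
```

Showing the three impact components separately makes it easier for leadership to challenge individual estimates without dismissing the whole calculation.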

"At Day 90, your VoC program should have enough concrete results to justify itself financially. If it does not, the problem is not the data — it is the gap between insight and action."

Who Owns VoC? The Organizational Question

Option A: CX/Support Ownership

Pros: Already closest to customer feedback, natural extension of their role, strong at operational improvements.

Cons: May lack influence over product and marketing decisions, risk of VoC being siloed as a "support thing."

Option B: Product Ownership

Pros: Direct line from insight to feature decisions, strong analytical capability, natural integration with roadmap planning.

Cons: May deprioritize non-product insights (brand perception, support quality), risk of VoC being filtered through product bias.

Option C: Cross-Functional Ownership

Pros: Broadest organizational buy-in, insights reach all relevant teams, reduces silo risk.

Cons: Diffuse accountability, slower decision-making, risk of becoming everyone's secondary priority and nobody's primary one.

Recommendation: Start with a single owner (CX or Product, depending on your organization) with a cross-functional distribution mandate. The owner runs the collection and analysis. Distribution is mandatory to product, marketing, CX, and sales. Action items are assigned to the team best positioned to execute, regardless of where ownership sits.

After 90 days, evaluate whether the ownership model is working. If insights are not reaching certain teams, adjust distribution. If certain teams are not acting on insights, escalate through the executive sponsor.

Tools at Each Phase

| Phase | Tool Need | Budget Option | Growth Option |
|---|---|---|---|
| Phase 1 (Foundation) | Review aggregation, baseline SWOT | Sentimyne Free (2 analyses/mo) + Google Sheets | Sentimyne Pro ($29/mo) + Notion |
| Phase 2 (Cadence) | Regular analysis, team distribution | Sentimyne Pro ($29/mo) + email summaries | Sentimyne Team ($49/mo) + Slack integration |
| Phase 3 (Optimization) | Competitor analysis, trend tracking, ROI measurement | Sentimyne Team ($49/mo) + Google Looker Studio | Sentimyne Team + BI dashboard |

The most important tool investment is not the software — it is the time commitment. A 30-minute weekly routine with free tools outperforms enterprise software that nobody checks.

Common Failure Points and How to Avoid Them

Failure Point 1: Insight Overload

Symptom: Weekly reports contain 20 insights. Teams feel overwhelmed and stop reading them.

Fix: Limit each report to the top three to five insights, ranked by impact. Save the full analysis for monthly deep dives. Teams act on focused priorities, not comprehensive lists.

Failure Point 2: Analysis Without Action

Symptom: Beautiful dashboards, interesting trends, zero operational changes.

Fix: Every VoC report must end with at least one specific action item with an owner and a deadline. If the report does not generate an action, question whether the analysis is measuring the right things.

Failure Point 3: Champion Dependency

Symptom: The program runs only when its champion is available. Vacations, role changes, or departures kill the program.

Fix: Document every process, template every report, and cross-train at least one backup person by Day 60. The program should survive the champion's absence.

Failure Point 4: Ignoring Positive Feedback

Symptom: VoC becomes a complaint management program. Teams only hear about problems.

Fix: Dedicate at least 30% of every VoC report to positive themes. Strengths matter as much as weaknesses: they tell you what to protect and what to amplify in marketing.

Failure Point 5: No Feedback Loop

Symptom: Changes are made based on VoC data but nobody checks whether they actually improved customer sentiment.

Fix: Every action item includes a measurement plan. Track whether complaint frequencies decrease after operational changes. Close the loop by verifying impact in subsequent review data.

Your Day 1 Action Plan

Do not wait for a strategy meeting or a budget approval. Start today:

- List your feedback sources — spend 30 minutes creating the source inventory table above

- Run a baseline SWOT — use Sentimyne's free tier to analyze your existing reviews across platforms

- Identify your VoC owner — even if it is you, name the person accountable

- Set your first weekly VoC meeting — put 30 minutes on the calendar for the same time every week

- Define your top three metrics — pick one program metric, one sentiment metric, and one business impact metric

Ninety days from now, you will have a functioning VoC program that systematically converts customer feedback into business improvement. Every week between now and then builds on the previous one. The customers are already talking. The only question is whether you are going to build the system to listen.

Frequently Asked Questions

What is the minimum team size needed to run a VoC program?

One person spending three to five hours per week can run an effective VoC program for a company with up to 200 monthly reviews. The key is having the right tools to reduce manual collection time and a clear weekly routine. Sentimyne's automated SWOT analysis eliminates hours of manual review reading. As review volume grows beyond 200 per month or the organization exceeds 50 employees, consider a dedicated part-time VoC role (approximately 15-20 hours per week).

How do I get buy-in from teams that are resistant to VoC insights?

Start with their existing pain points. If the product team is struggling with feature prioritization, show them how review data quantifies which features customers actually want. If the marketing team is debating messaging, show them the exact words customers use to describe the brand. Frame VoC as a tool that makes their job easier, not an additional reporting obligation. Early wins — even small ones — build credibility that overcomes resistance.

What if our reviews are mostly positive and there is not much to act on?

Positive reviews are as actionable as negative ones. They reveal which strengths to protect, which attributes to amplify in marketing, and which customer segments are most loyal. A predominantly positive review base also benefits from competitive analysis: run a Sentimyne SWOT analysis on competitors to find weaknesses you can exploit in your positioning. Also look beyond star ratings to the specific language in reviews. Customers often phrase suggestions for change in positive terms, and those comments point to improvement opportunities.

Should we share VoC data with customers?

Yes, selectively. When you make a change based on customer feedback, announce it: "You asked for X, and we built it." This closes the feedback loop publicly and encourages more customers to leave reviews because they see their input leads to action. However, never share raw VoC data externally — internal analysis, competitive comparisons, and sentiment scores are for internal use only.

How do I measure the ROI of a VoC program in dollar terms?

Track three revenue-related metrics: (1) revenue impact of product improvements driven by VoC data (reduced churn, increased conversion from fixing identified friction points), (2) cost savings from proactively identifying and resolving issues before they generate support tickets or returns, and (3) marketing efficiency gains from using customer language in campaigns (measurable through A/B testing conversion rates). Most companies find that a single product improvement driven by review data — like fixing the number one complaint — generates returns that far exceed the entire VoC program cost within the first quarter.
