AI vs Human Review Analysis: When to Use Each (And How to Combine Both)

Compare AI and human review analysis across 8 dimensions: speed, accuracy, cost, scalability, nuance, consistency, bias, and actionability. Learn when to use AI-only, human-only, or hybrid approaches, with accuracy benchmarks for modern LLM-based analysis systems.

The debate between AI and human review analysis is not really a debate at all. It is a question of trade-offs, task fit, and — increasingly — how to combine both approaches for the best possible outcome.

For decades, review analysis meant a person reading reviews. Analysts combed through feedback manually, categorized themes in spreadsheets, and produced quarterly reports that took weeks to compile. That approach works beautifully for small datasets and strategic interpretation. It fails completely at scale.

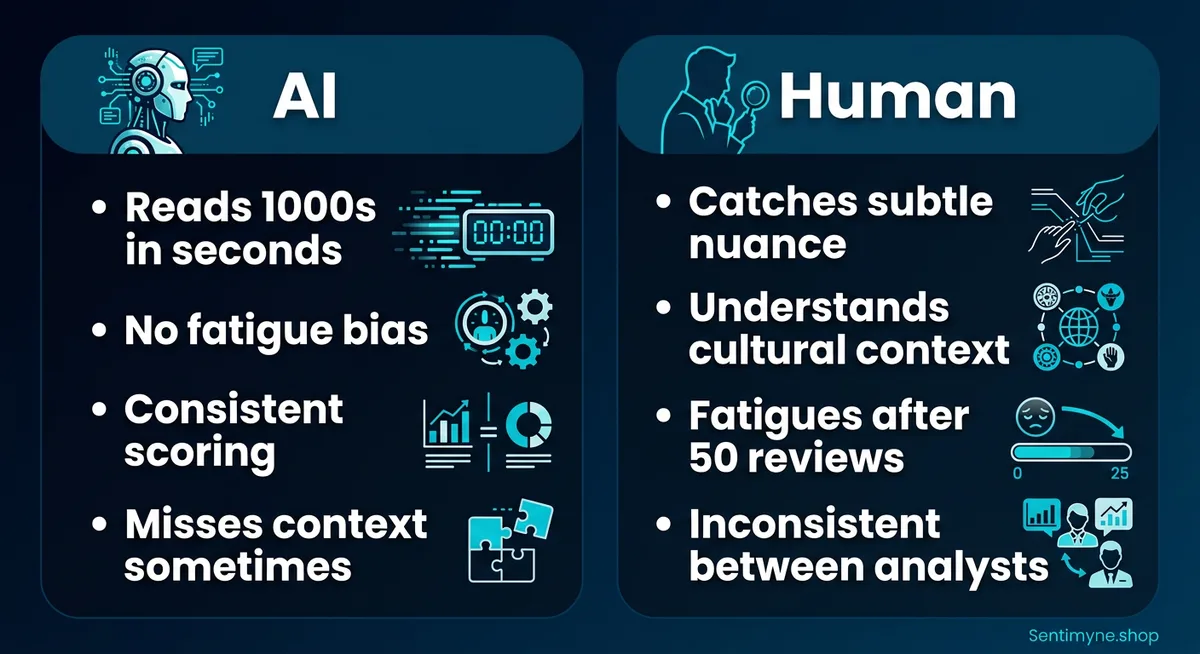

AI review analysis inverted the equation. Modern language models can process 50,000 reviews in the time it takes a human to read 50. They do not get tired, do not bring personal biases to Monday morning analysis sessions, and do not forget the categorization scheme by review 200. But they can miss sarcasm, misinterpret cultural references, and produce confidently wrong conclusions about nuanced sentiment.

The real question is not "which is better?" It is "which is better for what, when, and at what scale?" This guide compares both approaches across every dimension that matters and outlines a practical framework for combining them.

What AI Does Better

AI's advantages in review analysis are not subtle. They are overwhelming in specific dimensions.

Speed

A modern LLM-based review analysis system processes a single review in 200-500 milliseconds. Handled one at a time, that is 120-300 reviews per minute, or 7,200-18,000 per hour; reaching 2,000-5,000 reviews per minute (120,000-300,000 per hour) takes parallel requests. A skilled human analyst reads and categorizes approximately 30-40 reviews per hour.

The math is stark:

| Volume | AI Processing Time | Human Processing Time |
|---|---|---|
| 100 reviews | 30-50 seconds | 2.5-3.5 hours |
| 1,000 reviews | 5-8 minutes | 25-35 hours |
| 10,000 reviews | 50-80 minutes | 250-350 hours |
| 50,000 reviews | 4-7 hours | 1,250-1,750 hours |

For any analysis involving more than a few hundred reviews, AI is not just faster — it is the only viable approach. No business can justify 1,500 hours of analyst time for a single review analysis project.
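The arithmetic behind the table can be reproduced with a short sketch. It assumes the AI system handles reviews strictly one at a time at the quoted 200-500 ms latency, so its output only approximates the rounded figures in the table; the constant and function names are illustrative, not taken from any particular tool.

```python
# Assumptions: the AI system handles one review at a time (no parallel
# requests) at the 200-500 ms latency quoted above; the human rate is
# the 30-40 reviews per hour quoted above.
AI_SECONDS_PER_REVIEW = (0.2, 0.5)    # (fastest, slowest)
HUMAN_REVIEWS_PER_HOUR = (30, 40)     # (slowest, fastest)


def ai_time_seconds(num_reviews: int) -> tuple[float, float]:
    """Return (fastest, slowest) sequential AI processing time in seconds."""
    fastest, slowest = AI_SECONDS_PER_REVIEW
    return num_reviews * fastest, num_reviews * slowest


def human_time_hours(num_reviews: int) -> tuple[float, float]:
    """Return (fastest, slowest) human processing time in hours."""
    slowest_rate, fastest_rate = HUMAN_REVIEWS_PER_HOUR
    return num_reviews / fastest_rate, num_reviews / slowest_rate


if __name__ == "__main__":
    for volume in (100, 1_000, 10_000, 50_000):
        ai_fast, ai_slow = ai_time_seconds(volume)
        human_fast, human_slow = human_time_hours(volume)
        print(
            f"{volume:>6,} reviews: "
            f"AI {ai_fast / 60:.1f}-{ai_slow / 60:.1f} min, "
            f"human {human_fast:.1f}-{human_slow:.1f} h"
        )
```

Swapping in your own measured latency and analyst throughput gives a quick estimate for your review volume.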

Scale

Speed enables scale. AI can analyze review data across dozens of platforms, thousands of products, and millions of individual reviews. This makes previously impossible analyses routine. Comparing your review sentiment against five competitors across twelve platforms over three years? AI handles this in minutes. A human team would need months.

Scale also enables statistical reliability. Patterns identified across 50,000 reviews are far more trustworthy than patterns identified across 50. AI makes large-sample analysis the default rather than the exception.

Consistency

The 5,000th review an AI system analyzes receives exactly the same analytical rigor as the 1st. There is no fatigue, no drift in categorization criteria, no unconscious bias that shifts after lunch. AI applies the same rules, the same sensitivity thresholds, and the same categorization scheme from start to finish.

Human analysts experience consistency degradation over time. Studies show that human coders' inter-rater reliability drops by approximately 15% after four hours of continuous review analysis. By the end of a long coding session, analysts are less precise, more likely to use default categories, and more likely to miss subtle themes.

No Emotional Fatigue

Reading thousands of negative reviews is psychologically taxing. Human analysts who spend days processing complaint-heavy review data experience emotional fatigue that affects both their well-being and their analytical quality. They may begin dismissing complaints as "people just whining" or become desensitized to legitimate issues.

AI does not experience frustration, boredom, or emotional exhaustion. This is particularly valuable for industries with high volumes of negative or emotionally charged reviews — healthcare, financial services, and consumer products with safety concerns.

"AI does not need a mental health day after reading 10,000 one-star reviews about your product. Your human analysts might."

Pattern Detection at Scale

AI excels at identifying patterns that would be invisible to human analysts working with limited samples. If 0.3% of reviews mention a specific rare but critical issue — say, a safety concern — AI can flag this across 100,000 reviews, surfacing 300 relevant mentions. A human analyst sampling 200 reviews would likely encounter zero or one mention and miss the pattern entirely.
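A rough way to check this sampling argument is a simple binomial model. The sketch below assumes reviews are sampled independently and at random and that the issue rate is the same everywhere in the dataset; the function names are illustrative.

```python
def expected_mentions(issue_rate: float, num_reviews: int) -> float:
    """Expected number of reviews that mention the issue."""
    return issue_rate * num_reviews


def prob_no_mentions(issue_rate: float, sample_size: int) -> float:
    """Probability that a random sample contains no mention of the issue.

    Assumes each sampled review mentions the issue independently
    with probability `issue_rate`.
    """
    return (1.0 - issue_rate) ** sample_size


if __name__ == "__main__":
    rate = 0.003  # 0.3% of reviews mention the issue
    print(f"Full dataset (100,000): ~{expected_mentions(rate, 100_000):.0f} mentions")
    print(f"Sample of 200: ~{expected_mentions(rate, 200):.1f} mentions expected")
    print(f"Chance the sample has zero mentions: {prob_no_mentions(rate, 200):.0%}")
```

Under these assumptions, a 200-review sample has roughly a 55% chance of containing no mention of the issue at all.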

What Humans Do Better

Human advantages are less about speed and more about depth, judgment, and contextual understanding.

Nuance and Context

"This vacuum sucks" is positive in a product review context. "This vacuum does not suck" is negative. AI systems have improved dramatically at handling this kind of contextual interpretation, but humans still outperform AI on the most nuanced, context-dependent language.

Other nuance-heavy scenarios where humans excel:

- Cultural references: "This is the Nokia of smartwatches" — is that praising durability or criticizing innovation? It depends on the reviewer's cultural context and generation.

- Industry jargon: In some industries, terms have specialized meanings that general-purpose AI models may misinterpret.

- Implied sentiment: "Well, it certainly arrived on time" — the sarcastic undertone requires understanding that "arriving on time" is being used dismissively to imply nothing else was satisfactory.

Sarcasm Detection

Sarcasm remains AI's Achilles' heel in sentiment analysis. While LLMs have improved significantly, studies show that AI correctly identifies sarcasm in reviews approximately 70-75% of the time, compared to human accuracy of 85-90%. That 10-20 point gap matters when sarcasm is common in your review data.

Sarcasm-heavy review categories include:

- Consumer electronics (especially when premium products underperform)

- Restaurant reviews (particularly from experienced food critics)

- Service industry reviews (when expectations are dramatically unmet)

- App store reviews (where sarcasm is a cultural norm)

Strategic Interpretation

AI can tell you that 34% of negative reviews mention "customer support" and that sentiment toward customer support has declined 12% over the past quarter. AI cannot tell you that the decline coincides with the company's shift from phone support to chatbot-only, that the chatbot's limitations are driving the frustration, and that reversing this decision would cost $X but would likely recover $Y in prevented churn.

Strategic interpretation — connecting analytical findings to business context, competitive dynamics, resource constraints, and organizational priorities — is fundamentally a human capability. AI provides the what; humans provide the so what and the now what.

Identifying Emerging Issues Before They Have Data

Experienced human analysts develop intuition about emerging problems. They read a handful of reviews mentioning a new issue and recognize it as a potential trend before statistical evidence accumulates. This pattern recognition from small samples — sometimes called "weak signal detection" — is something humans do remarkably well and AI struggles with.

AI needs enough data points to identify a statistical pattern. Humans can read five reviews mentioning the same unusual complaint and think "this is going to be a problem." That intuitive early warning is valuable.

Handling Edge Cases and Exceptions

Reviews that do not fit neatly into any category, reviews that contain mixed signals, reviews that seem to contradict themselves — humans handle these better. They can use judgment to determine intent, ask clarifying mental questions, and make reasonable inferences from incomplete information.

AI systems, even sophisticated LLMs, can struggle with genuinely ambiguous input. They will produce a classification, but their confidence may be misplaced. A human analyst reading the same ambiguous review will flag it as "unclear" — a more honest and ultimately more useful assessment.

Head-to-Head Comparison Across 8 Dimensions

Here is how AI and human review analysis compare across the dimensions that matter most for practical deployment.

| Dimension | AI Analysis | Human Analysis | Winner |
|---|---|---|---|
| Speed | 2,000-5,000 reviews/minute | 30-40 reviews/hour | AI (by 3,000-10,000x) |
| Accuracy | 88-94% for clear sentiment | 85-92% inter-rater agreement | Comparable, slight AI edge for consistency |
| Cost | $0.001-0.05 per review | $0.50-2.00 per review (analyst time) | AI (by 10-2,000x) |
| Scalability | Virtually unlimited | Limited by team size and hours | AI |
| Nuance | Good; struggles with sarcasm, culture | Excellent; catches what AI misses | Human |
| Consistency | Perfect across all reviews | Degrades with fatigue and volume | AI |
| Bias | Systematic (trainable); no emotional bias | Variable; personal and emotional bias | Neither (different bias types) |
| Actionability | High for data delivery; low for strategy | High for strategic interpretation | Human for strategy; AI for data |

The Accuracy Question in Detail

Accuracy deserves a deeper look because it is the most common concern about AI review analysis.

Modern LLM-based sentiment analysis systems achieve:

- 88-94% accuracy for binary (positive/negative) classification

- 82-88% accuracy for three-class (positive/negative/neutral) classification

- 75-85% accuracy for aspect-based sentiment analysis (identifying what the sentiment is about)

- 70-75% accuracy for sarcasm detection

For comparison, human inter-rater reliability (how often two humans agree on the same classification):

- 85-92% agreement for binary sentiment classification

- 78-85% agreement for three-class classification

- 72-80% agreement for aspect-based analysis

- 85-90% agreement for sarcasm detection

The surprising finding is that AI and human accuracy are now comparable for most standard review analysis tasks. AI is slightly more consistent (no variance between reviewers), while humans are slightly better at the hardest cases (sarcasm, cultural context, ambiguity). For the vast majority of review analysis work — the 85% of reviews with clear, unambiguous sentiment — AI performs as well as or better than humans.
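Inter-rater agreement as described here (how often two analysts assign the same label) is easy to measure on your own data. The sketch below computes simple percent agreement for a pair of analysts and the average over all pairs; the sample labels and analyst names are made up for illustration.

```python
from itertools import combinations


def percent_agreement(labels_a: list[str], labels_b: list[str]) -> float:
    """Share of reviews on which two annotators chose the same label."""
    if len(labels_a) != len(labels_b):
        raise ValueError("Both annotators must label the same reviews")
    if not labels_a:
        raise ValueError("Need at least one labeled review")
    matches = sum(a == b for a, b in zip(labels_a, labels_b))
    return matches / len(labels_a)


def mean_pairwise_agreement(annotations: dict[str, list[str]]) -> float:
    """Average percent agreement over every pair of annotators."""
    pairs = list(combinations(annotations.values(), 2))
    if not pairs:
        raise ValueError("Need at least two annotators")
    return sum(percent_agreement(a, b) for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    # Hypothetical labels for four reviews from three analysts.
    annotations = {
        "analyst_1": ["pos", "neg", "pos", "neu"],
        "analyst_2": ["pos", "neg", "neg", "neu"],
        "analyst_3": ["pos", "neg", "pos", "neu"],
    }
    print(f"Mean pairwise agreement: {mean_pairwise_agreement(annotations):.0%}")
```

Passing a tool's labels and one analyst's labels to `percent_agreement` gives a comparable figure for AI-versus-human agreement.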

The Bias Question

Both AI and human analysis exhibit biases, but of fundamentally different types.

AI biases:

- Training data bias (if the model was trained on mostly English-language reviews, it may underperform on other languages)

- Systematic errors that are consistent and predictable (once you know the model always misclassifies sarcasm about delivery speed, you can account for it)

- No emotional bias: a negative review from a celebrity gets the same analysis as one from an anonymous user

Human biases:

- Recency bias (recent reviews weighted more heavily than earlier ones)

- Anchoring (first few reviews set expectations for subsequent analysis)

- Confirmation bias (finding themes you expected to find)

- Emotional bias (sympathizing with reviewers or becoming defensive on behalf of the business)

- Fatigue effects (declining accuracy over time)

Neither approach is unbiased. The advantage of AI bias is that it is systematic and correctable. The advantage of human bias is that experienced analysts recognize their biases and can consciously compensate.

The Hybrid Approach: AI for Analysis, Humans for Strategy

The most effective review analysis programs combine AI and human capabilities in a structured workflow.

How the Hybrid Model Works

- AI handles data collection and initial analysis. Pull reviews from all platforms, classify sentiment, extract themes, calculate metrics, identify trends, flag anomalies.

- AI produces structured outputs. SWOT analyses, sentiment dashboards, trend reports, competitive comparisons — all generated automatically.

- Humans review AI outputs for strategic interpretation. What do these trends mean for our business? Which insights require action? What is the priority order? What context does the AI not have?

- Humans handle edge cases flagged by AI. Reviews with low confidence scores, ambiguous sentiment, mixed signals — these get human review.

- Humans make strategic decisions. Product changes, marketing adjustments, operational improvements, competitive responses — all informed by AI analysis but directed by human judgment.
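The hand-off of low-confidence reviews to humans can be sketched as a simple threshold rule. This is a minimal illustration, not any specific tool's behavior: the `Classification` record, the `confidence` field, and the 0.80 threshold are all assumptions to adapt to whatever your classifier actually reports.

```python
from dataclasses import dataclass


@dataclass
class Classification:
    review_id: str
    sentiment: str     # e.g. "positive", "negative", "neutral"
    confidence: float  # 0.0-1.0, as reported by a hypothetical AI classifier


# Illustrative cutoff only; tune it against your own labeled data.
CONFIDENCE_THRESHOLD = 0.80


def route_by_confidence(
    results: list[Classification],
    threshold: float = CONFIDENCE_THRESHOLD,
) -> tuple[list[Classification], list[Classification]]:
    """Split results into (accepted automatically, queued for human review)."""
    auto_accepted, needs_human = [], []
    for result in results:
        if result.confidence >= threshold:
            auto_accepted.append(result)
        else:
            needs_human.append(result)
    return auto_accepted, needs_human


if __name__ == "__main__":
    sample = [
        Classification("r1", "positive", 0.97),
        Classification("r2", "negative", 0.55),
        Classification("r3", "neutral", 0.80),
    ]
    accepted, queued = route_by_confidence(sample)
    print("Auto-accepted:", [r.review_id for r in accepted])
    print("Human review:", [r.review_id for r in queued])
```

Raising the threshold sends more reviews to analysts; lowering it trades review effort for a higher risk of confidently wrong labels slipping through.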

"The hybrid approach gives you AI's speed and scale for the 85% of analysis that is straightforward, and human depth and judgment for the 15% that requires it. You get 100% coverage with resources optimized for each task."

When to Go AI-Only

AI-only analysis is appropriate when:

- Volume exceeds human capacity. If you need to analyze more than 1,000 reviews on a regular basis, human-only analysis is impractical.

- Speed is critical. Monitoring review sentiment in near-real-time during a product launch or PR crisis requires AI.

- Consistency matters most. When you need to apply identical criteria across a large dataset — for regulatory compliance, trend tracking, or benchmarking — AI's consistency is essential.

- Budget is limited. AI analysis costs 10-2,000x less than human analysis per review. For budget-constrained teams, AI analysis is the only option for comprehensive review coverage.

Sentimyne is designed for this use case — AI-powered analysis that delivers a complete SWOT from 12+ platforms in 60 seconds. The output is structured for human strategic interpretation: you get the analysis automatically, then apply your business context and judgment to the results.

When to Go Human-Only

Human-only analysis is appropriate when:

- Volume is very small. Analyzing 20-50 reviews for a one-time competitive analysis or product launch debrief is often faster to do manually than to set up tooling.

- The reviews require deep cultural or industry context. Highly specialized industries (medical devices, financial instruments, luxury goods) may have review language that general-purpose AI misinterprets.

- The goal is qualitative understanding, not quantitative measurement. If you want to deeply understand the customer experience rather than measure it, reading reviews yourself provides insights that no dashboard captures.

- Stakes are extremely high. When a single misclassified review could drive a wrong strategic decision (rare, but possible in crisis situations), human verification is worth the cost.

When to Use the Hybrid Approach

The hybrid approach is appropriate — and optimal — for most real-world scenarios:

- Regular competitive intelligence. AI analyzes thousands of competitor reviews; humans interpret the strategic implications.

- Product development feedback. AI identifies the top feature requests and pain points; product managers evaluate feasibility and priority.

- Brand health monitoring. AI tracks sentiment trends continuously; marketing leaders review monthly reports and adjust strategy.

- Crisis response. AI provides real-time sentiment monitoring; human judgment drives the response strategy.

Accuracy Benchmarks for Modern LLM-Based Analysis

For teams evaluating AI review analysis tools, here are the accuracy benchmarks you should expect from modern, LLM-based systems:

| Task | Minimum Acceptable | Good | Best-in-Class |
|---|---|---|---|
| Binary sentiment (pos/neg) | 85% | 90% | 94%+ |
| Three-class sentiment | 78% | 84% | 88%+ |
| Aspect extraction | 70% | 78% | 85%+ |
| Aspect sentiment | 68% | 76% | 82%+ |
| Theme clustering | 72% | 80% | 86%+ |
| Sarcasm detection | 60% | 70% | 78%+ |
| Fake review detection | 75% | 82% | 90%+ |

These benchmarks reflect the state of the art as of early 2026. Systems using frontier LLMs (GPT-4 class and above) typically hit "Good" or "Best-in-Class" across most dimensions. Older systems using traditional ML approaches often fall below minimum acceptable thresholds for nuanced tasks.
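If you measure a tool's accuracy yourself, the table can be encoded as a small lookup to see which tier a result falls into. This is only a convenience sketch: the task keys and the `rate_accuracy` function are hypothetical names, and the thresholds are copied from the table above.

```python
# Thresholds from the benchmark table: (minimum acceptable, good, best-in-class).
# The dictionary keys are illustrative names, not a standard taxonomy.
BENCHMARKS = {
    "binary_sentiment": (0.85, 0.90, 0.94),
    "three_class_sentiment": (0.78, 0.84, 0.88),
    "aspect_extraction": (0.70, 0.78, 0.85),
    "aspect_sentiment": (0.68, 0.76, 0.82),
    "theme_clustering": (0.72, 0.80, 0.86),
    "sarcasm_detection": (0.60, 0.70, 0.78),
    "fake_review_detection": (0.75, 0.82, 0.90),
}


def rate_accuracy(task: str, accuracy: float) -> str:
    """Map a measured accuracy (0.0-1.0) to the table's tier for that task."""
    minimum, good, best = BENCHMARKS[task]
    if accuracy >= best:
        return "Best-in-Class"
    if accuracy >= good:
        return "Good"
    if accuracy >= minimum:
        return "Minimum Acceptable"
    return "Below minimum"


if __name__ == "__main__":
    print(rate_accuracy("binary_sentiment", 0.91))
    print(rate_accuracy("sarcasm_detection", 0.55))
```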

How to Test Accuracy

If you are evaluating a review analysis tool:

- Create a gold standard dataset. Have multiple human analysts classify 200-500 reviews from your specific domain.

- Run the tool on the same dataset. Compare the tool's classifications against your human gold standard.

- Measure agreement. Calculate precision, recall, and F1 score for each classification category.

- Focus on your domain. A tool that performs well on restaurant reviews may underperform on B2B software reviews. Test with your data.

- Test edge cases. Include sarcastic reviews, mixed-sentiment reviews, very short reviews, and reviews with industry jargon.
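The "measure agreement" step can be done in a few lines once the gold labels and the tool's labels are lined up review by review. The sketch below computes precision, recall, and F1 per category in plain Python; the label values are made up, and a library such as scikit-learn would work just as well if you already use one.

```python
from collections import Counter


def per_class_metrics(gold: list[str], predicted: list[str]) -> dict[str, dict[str, float]]:
    """Compute precision, recall, and F1 for each label.

    `gold` holds the human gold-standard labels and `predicted` holds the
    tool's labels for the same reviews, in the same order.
    """
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must be the same length")

    true_pos, false_pos, false_neg = Counter(), Counter(), Counter()
    for gold_label, pred_label in zip(gold, predicted):
        if gold_label == pred_label:
            true_pos[gold_label] += 1
        else:
            false_pos[pred_label] += 1  # tool claimed this label wrongly
            false_neg[gold_label] += 1  # tool missed the true label

    metrics = {}
    for label in sorted(set(gold) | set(predicted)):
        tp, fp, fn = true_pos[label], false_pos[label], false_neg[label]
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        metrics[label] = {"precision": precision, "recall": recall, "f1": f1}
    return metrics


if __name__ == "__main__":
    # Hypothetical gold-standard and tool labels for four reviews.
    gold = ["pos", "pos", "neg", "neg"]
    tool = ["pos", "neg", "neg", "neg"]
    for label, scores in per_class_metrics(gold, tool).items():
        print(label, {name: round(value, 2) for name, value in scores.items()})
```

Running the same function separately on your edge-case subset (sarcastic, mixed-sentiment, very short reviews) shows where a tool's headline accuracy hides weak spots.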

The Future: AI Gets Better, Humans Stay Essential

The trend is clear: AI review analysis accuracy is improving every year. The gap between AI and human performance on straightforward tasks has already closed, and the gap on nuanced tasks is narrowing.

But this does not mean humans become irrelevant to review analysis. It means the human role shifts from doing analysis to directing analysis. The analyst of 2026 does not spend hours reading reviews and filling spreadsheets. They spend hours interpreting AI-generated insights, connecting them to business context, and making strategic decisions.

This shift is happening across every knowledge work domain, and review analysis is simply one of the clearest examples. The tools handle the data processing; the humans handle the thinking.

For teams getting started with this hybrid approach, Sentimyne provides the AI analysis layer: SWOT analysis from 12+ platforms in 60 seconds, with structured output designed for human strategic interpretation. The free tier (2 analyses per month) lets you experience the AI analysis firsthand and evaluate where human interpretation adds the most value for your specific context.

Frequently Asked Questions

Can AI completely replace human review analysts?

Not yet, and likely not in the near term. AI can replace humans for data processing tasks — reading, categorizing, scoring, and summarizing reviews at scale. But strategic interpretation, contextual understanding, and decision-making remain human strengths. The most effective approach combines AI processing with human judgment. Over time, the boundary will shift further toward AI, but the human role in strategic interpretation is durable.

How accurate is AI sentiment analysis compared to humans?

For straightforward positive/negative classification, modern LLM-based systems achieve 88-94% accuracy, which is comparable to or slightly better than human inter-rater agreement (85-92%). The accuracy gap widens for nuanced tasks: humans still outperform AI on sarcasm detection (85-90% vs 70-75%) and culturally contextual interpretation. For practical purposes, AI accuracy is sufficient for the vast majority of review analysis tasks.

What is the cost difference between AI and human review analysis?

AI review analysis costs approximately $0.001-0.05 per review, depending on the tool and analysis depth. Human analysis costs approximately $0.50-2.00 per review when accounting for analyst time, which translates to 10-2,000x cost savings with AI. For a dataset of 10,000 reviews, AI analysis might cost $10-500 while human analysis would cost $5,000-20,000.
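The cost comparison above is straightforward to rerun with your own numbers. The sketch below uses the per-review ranges quoted in this answer; the constant and function names are illustrative.

```python
# Per-review cost ranges quoted above, in US dollars: (low, high).
AI_COST_PER_REVIEW = (0.001, 0.05)
HUMAN_COST_PER_REVIEW = (0.50, 2.00)


def cost_range(num_reviews: int, per_review: tuple[float, float]) -> tuple[float, float]:
    """Return the (low, high) total cost for a dataset of `num_reviews`."""
    low, high = per_review
    return num_reviews * low, num_reviews * high


def savings_multiple() -> tuple[float, float]:
    """Return the (smallest, largest) ratio of human cost to AI cost."""
    ai_low, ai_high = AI_COST_PER_REVIEW
    human_low, human_high = HUMAN_COST_PER_REVIEW
    return human_low / ai_high, human_high / ai_low


if __name__ == "__main__":
    ai_low, ai_high = cost_range(10_000, AI_COST_PER_REVIEW)
    human_low, human_high = cost_range(10_000, HUMAN_COST_PER_REVIEW)
    smallest, largest = savings_multiple()
    print(f"AI:    ${ai_low:,.0f}-${ai_high:,.0f}")
    print(f"Human: ${human_low:,.0f}-${human_high:,.0f}")
    print(f"Savings: {smallest:,.0f}x-{largest:,.0f}x")
```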

How do I know if my business needs AI review analysis or if manual review is sufficient?

The volume threshold is roughly 200 reviews per analysis cycle. Below 200, manual analysis is feasible and may even be preferable for its depth. Above 200, AI analysis becomes increasingly necessary for comprehensive coverage. If you are analyzing reviews across multiple platforms, multiple products, or over extended time periods, AI analysis is almost certainly needed regardless of volume per individual source.

What should I look for when choosing an AI review analysis tool?

Prioritize accuracy on your specific data (test with a sample), multi-platform coverage (most businesses have reviews on 3-5+ platforms), output actionability (does it produce insights you can act on, not just data?), and ease of use (complex tools with steep learning curves reduce adoption). Look for tools that produce structured strategic output like SWOT analyses rather than just sentiment scores, as the strategic framing makes insights more actionable for decision-makers.
